The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Introduction to FreeSync and Adaptive Sync

The first time anyone talked about adaptive refresh rates for monitors – specifically applying the technique to gaming – was when NVIDIA demoed G-SYNC back in October 2013. The idea seemed so logical that I had to wonder why no one had tried to do it before. Certainly there are hurdles to overcome, e.g. what to do when the frame rate is too low, or too high; getting a panel that can handle adaptive refresh rates; supporting the feature in the graphics drivers. Still, it was an idea that made a lot of sense.

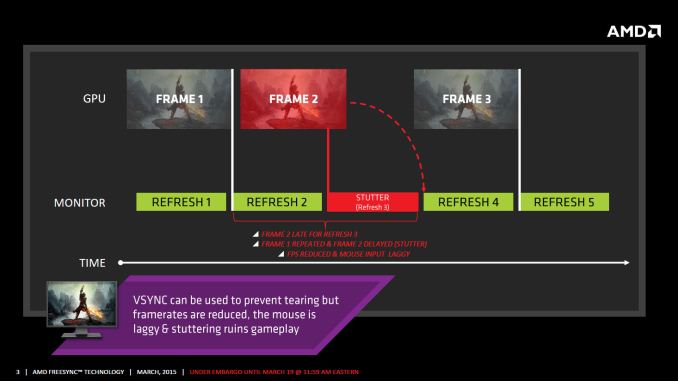

The impetus behind adaptive refresh is to overcome visual artifacts and stutter caused by the normal way of updating the screen. Briefly, the display is updated with new content from the graphics card at set intervals, typically 60 times per second. While that’s fine for normal applications, when it comes to games there are often cases where a new frame isn’t ready in time, causing a stall or stutter in rendering. Alternatively, the screen can be updated as soon as a new frame is ready, but that often results in tearing – where the top part of the screen shows the previous frame and the bottom part shows the next frame (or frames in some cases).
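To make the timing problem concrete, here is a minimal sketch in Python of when frames would reach the screen under a fixed 60Hz refresh versus a display that refreshes when a frame is ready. It is a simplified model of my own, not AMD's or NVIDIA's actual implementation: the function names, the 144Hz upper limit, and the assumption that the GPU starts the next frame only once the previous one is on screen are all illustrative, and tearing (V-SYNC off) is not modeled.

```python
import math

REFRESH_HZ = 60
REFRESH_INTERVAL_MS = 1000.0 / REFRESH_HZ  # roughly 16.67 ms between refreshes


def vsync_display_times(render_times_ms, interval_ms=REFRESH_INTERVAL_MS):
    """Fixed refresh: a finished frame waits for the next refresh tick.

    Hypothetical model: the GPU begins the next frame only after the
    previous frame has been shown.
    """
    shown = []
    t = 0.0
    for render in render_times_ms:
        ready = t + render
        # Round up to the next refresh tick; the small epsilon keeps a frame
        # that finishes exactly on a tick from slipping to the following one.
        tick = math.ceil(ready / interval_ms - 1e-9) * interval_ms
        shown.append(tick)
        t = tick
    return shown


def adaptive_display_times(render_times_ms, min_interval_ms):
    """Adaptive refresh: the display refreshes as soon as a frame is ready,
    but never faster than the panel's maximum refresh rate allows.

    The panel's minimum refresh rate is ignored in this sketch.
    """
    shown = []
    t = 0.0
    for render in render_times_ms:
        ready = t + render
        earliest = shown[-1] + min_interval_ms if shown else 0.0
        t = max(ready, earliest)
        shown.append(t)
    return shown


# Three frames: the second one takes longer than a 60Hz refresh interval.
render_times = [10, 20, 10]
fixed = vsync_display_times(render_times)
adaptive = adaptive_display_times(render_times, min_interval_ms=1000.0 / 144)

print("fixed 60Hz :", [round(x, 2) for x in fixed])
print("adaptive   :", [round(x, 2) for x in adaptive])
```

In this toy run, the 20ms frame misses a refresh under the fixed schedule, so the gap between the first and second frames doubles to two refresh intervals, while the adaptive schedule simply shows each frame when it finishes.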

Neither input lag/stutter nor image tearing is desirable, so NVIDIA set about creating a solution: G-SYNC. Perhaps the most difficult aspect for NVIDIA wasn’t creating the core technology but rather getting display partners to create and sell what would ultimately be a niche product – G-SYNC requires an NVIDIA GPU, so that rules out a large chunk of the market. Not surprisingly, the result was that G-SYNC took a bit of time to reach the market as a mature solution, with the first displays that supported the feature requiring modification by the end user.

Over the past year we’ve seen more G-SYNC displays ship that no longer require user modification, which is great, but pricing of the displays so far has been quite high. At present the least expensive G-SYNC displays are 1080p144 models that start at $450; similar displays without G-SYNC cost about $200 less. Higher spec displays like the 1440p144 ASUS ROG Swift cost $759 compared to other WQHD displays (albeit not 120/144Hz capable) that start at less than $400. And finally, 4Kp60 displays without G-SYNC cost $400-$500 whereas the 4Kp60 Acer XB280HK will set you back $750.

When AMD demonstrated their alternative adaptive refresh rate technology and cleverly called it FreeSync, it was a clear jab at the added cost of G-SYNC displays. As with G-SYNC, it has taken some time from the initial announcement to actual shipping hardware, but AMD has worked with the VESA group to implement FreeSync as an open standard that’s now part of DisplayPort 1.2a, and they aren’t getting any royalties from the technology. That’s the “Free” part of FreeSync, and while it doesn’t necessarily guarantee that FreeSync enabled displays will cost the same as non-FreeSync displays, the initial pricing looks quite promising.

There may be some additional costs associated with making a FreeSync display, though mostly these costs come in the way of using higher quality components. The major scaler companies – Realtek, Novatek, and MStar – have all built FreeSync (DisplayPort Adaptive Sync) into their latest products, and since most displays require a scaler anyway there’s no significant price increase. But if you compare a FreeSync 1440p144 display to a “normal” 1440p60 display of similar quality, the support for higher refresh rates inherently increases the price. So let’s look at what’s officially announced right now before we continue.

350 Comments

lordken - Thursday, March 19, 2015 - link

are you sure? they only have to come up with a different name (if they want). Just as both amd/nvidia call and use "DisplayPort" as DisplayPort, they didn't have to come up with their own implementations of it, as DP is standardized by VESA, so they used that. Or am I missing the point of what you wanted to say?

The question is whether it becomes a core/regular part of, let's say, DP1.4 onwards, as right now it is only optional (aka 1.2a) and not even in DP1.3 - if I understand that correctly.

iniudan - Thursday, March 19, 2015 - link

Well the implementation need to be in their driver, they not gonna give that to Nvidia. =p

chizow - Thursday, March 19, 2015 - link

So it is also closed/proprietary on an open spec? Gotcha, so I guess Nvidia should just keep supporting their own proprietary solution. Makes sense to me.

ddarko - Thursday, March 19, 2015 - link

You know repeating a falsehood 100 times doesn't make it true, right?

chizow - Tuesday, March 24, 2015 - link

You mean like repeating FreeSync can be made backward compatible with existing monitors with just a firmware flash, essentially for Free? I can't remember how many times that nonsense was tossed about in the 15 months it took before FreeSync monitors finally materialized.

Btw, it is looking more and more like FreeSync is a proprietary implementation based on an open-spec just as I stated. FreeSync has recently been trademarked by AMD so there's not even a guarantee AMD would allow Nvidia to enable their own version of Adaptive-Sync on FreeSync (TM) branded monitors.

ddarko - Thursday, March 19, 2015 - link

From the PC Perspective article you've been parroting around like gospel all day today:

"That leads us to AMD’s claims that FreeSync doesn’t require proprietary hardware, and clearly that is a fair and accurate benefit of FreeSync today. Displays that support FreeSync can use one of a number of certified scalars that support the DisplayPort AdaptiveSync standard. The VESA DP 1.2a+ AdaptiveSync feature is indeed an open standard, available to anyone that works with VESA while G-Sync is only offered to those monitor vendors that choose to work with NVIDIA and purchase the G-Sync modules mentioned above."

http://www.pcper.com/reviews/Displays/AMD-FreeSync...

That's the difference between an open and closed standard, as you well know but are trying to obscure with FUD.

chizow - Friday, March 20, 2015 - link

@ddarko, it says a lot that you quote the article but omit the actually relevant portion:

"Let’s address the licensing fee first. I have it from people that I definitely trust that NVIDIA is not charging a licensing fee to monitor vendors that integrate G-Sync technology into monitors. What they do charge is a price for the G-Sync module, a piece of hardware that replaces the scalar that would normally be present in a modern PC display. It might be a matter of semantics to some, but a licensing fee is not being charged, as instead the vendor is paying a fee in the range of $40-60 for the module that handles the G-Sync logic."

And more on that G-Sync module AMD claims isn't necessary (but we in turn have found out a lot of what AMD said about G-Sync turned out to be BS even in relation to their own FreeSync solution):

"But in a situation where the refresh rate can literally be ANY rate, as we get with VRR displays, the LCD will very often be in these non-tuned refresh rates. NVIDIA claims its G-Sync module is tuned for each display to prevent ghosting by change the amount of voltage going to pixels at different refresh rates, allowing pixels to untwist and retwist at different rates."

In summary, AMD's own proprietary spec just isn't as good as Nvidia's.

Crunchy005 - Friday, March 20, 2015 - link

AMD's spec is not proprietary so stop lying. Also I love how you just quoted in context what you quoted out of context in an earlier comment. The only argument you have against freeSync is ghosting and, as many people have pointed out, it is not an inherent issue with freeSync but with the monitors themselves. The example given in that shows three different displays that all are affected differently. The LG and benq both show ghosting differently but use the same freeSync standard so something else is different here and not freeSync. On top of that the LG is $100 less than the asus and the benQ $150 less for the same features and more inputs. I don't see how a better more well rounded monitor that can offer variable refresh rates with more features that is cheaper is a bad thing. From the consumer side of things that is great! A few ghosting issues that I'm sure are hardly noticeable to the average user is not a major issue. The videos shown there are taken at a high frame rate and slowed down, then put into a compressed format and thrown on youtube in what is a very jerky hard to see video, great example for your only argument. If the tech industry could actually move away from proprietary/patented technology, and maybe try to actually offer better products and not "good enough" products that force customers into choosing and being locked into one thing we could be a lot farther along.

chizow - Friday, March 20, 2015 - link

Huh? How do you know Nvidia can use FreeSync? I am pretty sure AMD has said Nvidia can't use FreeSync, if they decide to use something with DP 1.2a Adaptive Sync they have to call it something else and create their own implementation, so clearly it is not an Open Standard as some claim.

And how is it not an issue inherent with FreeSync? Simple test that any site like AT that actually wants answers can do:

1) Run these monitors with Vsync On.

2) Run these monitors with Vsync Off.

3) Run these monitors with FreeSync On.

Post results. If these panels only exhibit ghosting in 3), then obviously it is an issue caused by FreeSync. Especially when these same panel makers (the 27" 1440p BenQ is apparently the same AU Optronics panel as the ROG Swift) have panels on the market (both non-FreeSync and G-Sync) that have no such ghosting.

And again you mention the BenQ vs. the Asus, well guess what? Same panel, VERY different results. Maybe its that G-Sync module doing its magic, and that it actually justifies its price. Maybe that G-Sync module isn't bogus as AMD claimed and it is actually the Titan X of monitor scalers and is worth every penny it costs over AMD FreeSync if it is successful at preventing the kind of ghosting we see on AMD panels, while allowing VRR to go as low as 1FPS.

Just a thought!

Crunchy005 - Monday, March 23, 2015 - link

Same panel different scalers. AMD just uses the standard built into the display port, the scaler handles the rest there so it isn't necessarily freeSync but the variable refresh rate technology in scaler that would be causing the ghosting. So again not AMD but the manufacturer.

"If these panels only exhibit ghosting in 3), then obviously it is an issue caused by FreeSync"

Haven't seen this and you haven't shown us either.

"Maybe its that G-Sync module doing its magic"

This is the scaler so the scaler, not made by AMD, that supports the VRR standard that AMD uses is what is controlling that panel not freeSync itself. Hence an issue outside of AMDs control. Stop lying and saying it is an issue with AMD. Nvidia fanboys lying, gotta keep them on the straight and narrow.