The Best Server CPUs Compared, Part 1

by Johan De Gelas on December 22, 2008 10:00 PM EST. Posted in IT Computing

ERP and OLTP Benchmark 1: SAP S&D

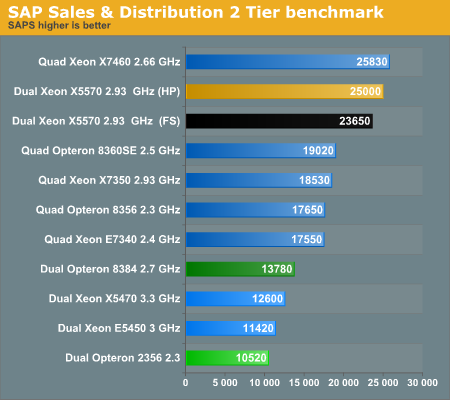

The SAP S&D (Sales and Distribution, two-tier internet configuration) benchmark is extremely interesting because it is a real-world client-server application. We decided to look at SAP's benchmark database. The results below are two-tier benchmarks, so the database and the underlying OS can make a big difference; unless we keep those parameters the same, we cannot compare the results. All results below were run on Windows 2003 Enterprise Edition with the MS SQL Server 2005 database (both 64-bit). Every "two tier Sales & Distribution" benchmark was performed on SAP's "ERP release 2005".

In our previous server oriented article, we summed up a rough profile of SAP S&D:

- Very parallel resulting in excellent scaling

- Low to medium IPC, mostly due to "branchy" code

- Not really limited by memory bandwidth

- Likes large caches

- Sensitive to sync ("cache coherency") latency

There are no quad socket results for the latest 45nm AMD parts, but we can still get a pretty good idea of where they would land. The "Barcelona" Opteron scales from 10520 SAPS (2 CPUs) to 17650 SAPS (4 CPUs), an improvement of about 68%. The quad Opteron 8384 will probably scale a bit better, so we speculate it will attain a score of about 23000 to 24000 SAPS. It won't beat the best Intel score (Dunnington), but it will come close enough and offer an excellent performance/watt ratio. If you are wondering about the phenomenal Xeon X5570 scores, we discussed them here.
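The scaling figure quoted above is simple arithmetic on the two Barcelona scores. A minimal Python sketch follows; the function names are illustrative only, and the projection helper merely assumes that a quad socket system scales from its dual socket score by whatever gain you pass in.

```python
def scaling_gain(dual_score: float, quad_score: float) -> float:
    """Fractional throughput gain when going from two to four sockets."""
    return quad_score / dual_score - 1.0


def project_quad(dual_score: float, gain: float) -> float:
    """Project a quad socket score from a dual socket score.

    Assumption: the supplied gain actually holds for the platform in question.
    """
    return dual_score * (1.0 + gain)


# Barcelona SAPS scores quoted in the article.
barcelona_dual = 10520
barcelona_quad = 17650

gain = scaling_gain(barcelona_dual, barcelona_quad)
print(f"Barcelona 2P -> 4P gain: {gain:.1%}")
```

Passing a dual socket "Shanghai" score to `project_quad` with a gain slightly above the Barcelona figure reproduces the kind of estimate made in the text. It remains speculation until a real quad socket result is published.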

Also interesting is that the dual 2.7GHz "Shanghai" is about 31% faster than the dual 2.3GHz "Barcelona", while the clock speed advantage is only 17%. It clearly shows that the larger L3 cache pays off here. Now let's look at some more exotic setups: octal socket or similar systems.
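To separate the clock speed contribution from everything else, the observed speedup can be divided by the clock ratio. The sketch below does that for the two figures quoted above; the function name is illustrative, and the leftover gain only shows that something besides frequency helps, not which feature is responsible.

```python
def per_clock_gain(speedup: float, clock_new_ghz: float, clock_old_ghz: float) -> float:
    """Fractional gain left over after dividing out the clock speed ratio."""
    clock_ratio = clock_new_ghz / clock_old_ghz
    return speedup / clock_ratio - 1.0


# 2.7GHz "Shanghai" versus 2.3GHz "Barcelona", both dual socket.
clock_advantage = 2.7 / 2.3 - 1.0
# 1.31 corresponds to "about 31% faster" in the text.
residual = per_clock_gain(1.31, 2.7, 2.3)

print(f"Clock advantage: {clock_advantage:.1%}")
print(f"Gain per clock:  {residual:.1%}")
```

With these inputs, roughly 17 points of the 31% come from frequency and the remainder, a bit under 12% per clock, comes from architectural changes such as the larger L3 cache.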

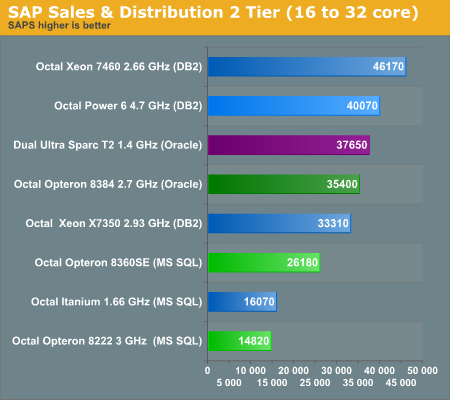

This overview is hardly relevant if you are deciding which x86 server to buy, but it is a feast for those following the complete server market -- or those of us who are interested in different CPU architectures. There is a battle raging between three different philosophies: the thread machine-gun SUN UltraSPARC T2, the massive speed demon IBM POWER6, and the cost effective x86 architectures. The UltraSPARC T2 machine only has four sockets, but each socket contains eight cores, and each core is a fine-grained multithreaded, in-order "Gatling gun" that cycles between eight threads. That means one quad socket machine keeps up to 256 threads alive. The POWER6 machine contains eight CPUs, but each CPU is only a dual-core CPU. However, each POWER6 CPU is a deeply pipelined, wide superscalar architecture running at 4.7GHz, backed up with massive caches (4MB L2, 32MB L3). The very wide superscalar architecture is used more efficiently thanks to Simultaneous Multi-Threading (SMT).
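The thread counts behind these two designs follow from multiplying sockets, cores per socket, and threads per core. A small Python sketch is shown below. The UltraSPARC T2 numbers come from the text; the two threads per core used for POWER6 is an assumption (2-way SMT), since the text only says that SMT is used.

```python
def hardware_threads(sockets: int, cores_per_socket: int, threads_per_core: int) -> int:
    """Total hardware threads a system can keep in flight."""
    return sockets * cores_per_socket * threads_per_core


# Sun UltraSPARC T2: 4 sockets, 8 cores each, 8 threads per core (from the text).
t2_threads = hardware_threads(sockets=4, cores_per_socket=8, threads_per_core=8)

# IBM POWER6: 8 dual-core CPUs (from the text); 2 threads per core is an assumption.
power6_threads = hardware_threads(sockets=8, cores_per_socket=2, threads_per_core=2)

print(f"UltraSPARC T2 system: {t2_threads} threads")
print(f"POWER6 system:        {power6_threads} threads")
```

Under that assumption the T2 machine exposes eight times as many hardware threads as the POWER6 machine, which is the contrast between the "thread machine-gun" and "speed demon" philosophies.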

Each T2 with eight "mini cores" needs 95W compared to 130W for two massive POWER6 cores, so the SUN Server needs 4 x 95W for the CPUs, while the IBM server needs 8 x 130W. These differences could be smaller percentagewise when you look at how much power each server system will need, but when it comes to performance/watt, it will be hard to beat the T2 here. The latest octal Opteron server should come close (8 x 75W) as it does not use FB-DIMMs while the UltraSPARC T2 does. However, we are speculating here; let's get back to our own benchmarking.
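The CPU power budgets mentioned above can be totalled directly. The sketch below uses only the socket counts and per-CPU wattages quoted in the text; the dictionary layout and names are illustrative. It covers CPU power only, not memory, chipset, or the rest of the system.

```python
# (sockets, watts per CPU) as quoted in the article.
cpu_power = {
    "Sun UltraSPARC T2": (4, 95),
    "IBM POWER6": (8, 130),
    "Octal Opteron": (8, 75),
}

# Total CPU power per server, in watts.
totals = {name: sockets * watts for name, (sockets, watts) in cpu_power.items()}

baseline = totals["Sun UltraSPARC T2"]
for name, watts in totals.items():
    print(f"{name:18s} {watts:5d} W  ({watts / baseline:.2f}x the T2 system)")
```

These totals say nothing about performance/watt on their own; they would need to be divided into a measured score for each system, and whole-system power (for example FB-DIMM versus registered DDR2) would shift the picture further.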

29 Comments


zpdixon42 - Wednesday, December 24, 2008 - link

DDR2-1067: oh, you are right. I was thinking of Deneb.

Yes, performance/dollar depends on the application you are running, so what I am suggesting more precisely is that you compute some perf/$ metric for every benchmark you run. And even if the CPU price is less negligible compared to the rest of the server components, it is always interesting to look both at absolute perf and perf/$ rather than just absolute perf.

denka - Wednesday, December 24, 2008 - link

32-bit? 1.5Gb SGA? This is really ridiculous. Your tests should be bottlenecked by IO

JohanAnandtech - Wednesday, December 24, 2008 - link

I forgot to mention that the database created is slightly larger than 1 GB. And we wouldn't be able to get >80% CPU load if we were bottlenecked by I/O

denka - Wednesday, December 24, 2008 - link

You are right, this is a smallish database. By the way, when you report CPU utilization, would you take IOWait separate from CPU used? If taken together (which was not clear) it is possible to get 100% CPU utilization out of which 90% will be IOWait :)

denka - Wednesday, December 24, 2008 - link

Not to be negative: excellent article, by the way

mkruer - Tuesday, December 23, 2008 - link

If/When AMD does release the Istanbul (k10.5 6-core), The Nehalem will again be relegated to second place for most HPC.

Exar3342 - Wednesday, December 24, 2008 - link

Yeah, by that time we will have 8-core Sandy Bridge 32nm chips from Intel...

Amiga500 - Tuesday, December 23, 2008 - link

I guess the key battleground will be Shanghai versus Nehalem in the virtualised server space...AMD need their optimisations to shine through.

It's entirely understandable that you could not conduct virtualisation tests on the Nehalem platform, but unfortunate from the point of view that it may decide whether Shanghai is a success or failure over its life as a whole. As always, time is the great enemy! :-)

JohanAnandtech - Tuesday, December 23, 2008 - link

"you could not conduct virtualisation tests on the Nehalem platform"

Yes. At the moment we have only 3 GB of DDR-3 1066. So that would make pretty poor Virtualization benches indeed.

"unfortunate from the point of view that it may decide whether Shanghai is a success or failure"

Personally, I think this might still be one of Shanghai's strong points. Virtualization is about memory bandwidth, cache size and TLBs. Shanghai can't beat Nehalem's BW, but when it comes to TLB size it can make up a bit.

VooDooAddict - Tuesday, December 23, 2008 - link

With the VMWare benchmark, it is really just a measure of the CPU / Memory. Unless you are running applications with very small datasets where everything fits into RAM, the primary bottleneck I've run into is the storage system. I find it much better to focus your hardware funds on the storage system and use the company standard hardware for server platform.

This isn't to say the bench isn't useful. Just wanted to let people know not to base your VMWare buildout solely on those numbers.