SUN’s UltraSparc T1 - the Next Generation Server CPUs

by Johan De Gelas on December 29, 2005 10:03 AM EST- Posted in

- CPUs

Introduction

SUN's Ultrasparc T1, formerly known as Niagara, is much more than just a new UltraSparc. It is the harbinger of a new generation of CPUs, which focus almost solely on Thread Level Parallelism. No less than 32 independent parts of a different program (threads) can be "in flight" on the chip. It is SUN's first implementation of their Throughput computing philosophy, and compared to what we are used to in the AMD/Intel world, it is a pretty extreme architecture that focuses on network and server performance.

Stubborn Server applications

The basic idea behind the UltraSparc T1 is that most modern superscalar Out-Of-Order CPUs may be excellent for games, digital content creation and scientific calculations, but they are not a good match for commercial server loads.

These complex CPUs can decode up to 3 (Opteron) to 8 (Power 5) instructions in parallel, put in a buffer and try to issue them across 9 or more units. In theory, these CPUs can decode, issue, execute and retire up to 3 (Opteron) to 5 (IBM Power) instructions per clock cycle. They have huge buffers (up to 200 instructions) to keep many instructions in flight.

Server workloads, however, cannot make good use of all this parallelism for several reasons. The main reason is that commercial server loads move a lot of data around and perform relatively little calculation on that data. Moving a lot of data around means that you may need a lot of accesses to the memory, which results in many cycles wasted while the CPU has to wait for the data to arrive. As many different users query different parts of the database, caching cannot be as efficient (low locality of reference). In the past years, memory latency has become worse as memory speed increased a lot slower than the speed of the CPU. Memory latency is even worse on MP (Multi-Processor) systems, and has risen from a few tens of CPU cycles to 200-400 clock cycles. The second reason is that many of the calculations performed on that data involve data dependent (read: hard to predict) branches, which makes it even harder to do a lot in parallel.

You might counter these two problems by eliminating the branches through predication and incorporate very large caches. That is what the Itanium family does, but even the mighty Itanium is not capable of running those server loads at high speeds despite predication and gigantic caches. Below, you can see Intel's own numbers for CPU utilization on the 3 different workloads.

The applications that can be found inside Spec Integer benchmark are still rather compute-intensive compared to server applications. Compression, FPGA Circuit Placement and Routing, Compiling and interpreting, and computer visualization are representatives of very CPU intensive integer loads. On average, the best desktop CPUs such as the Athlon 64 or Intel Dothan are capable of sustaining 0.8 to 1 instructions per clock cycle in this benchmark, while the Pentium 4 is around 0.5-0.7 IPC. Itanium is capable of a 1.3-1.5 IPC. That may sound like very low numbers, but let us compare SpecInt with typical server loads. In the table below, you find how the 4-way superscalar USIIIi does on the various benchmarks.

Rather than focus on the absolute numbers, it is more important to note that web applications have 3 times less IPC than CPU intensive integer apps. OLTP databases (TPC-C) do even worse: the CPU sustains on average 0.2 instructions per clock pulse, or 4.5 less than SpecInt. These numbers are no different for the Opteron or Xeon. So despite Out of Order execution, nifty branch prediction schemes and big caches, commercial server loads utilize a very meagre 10 to 15% of the potential of modern CPUs.

One possible solution is to focus on clock speed instead of trying to process as many instructions in parallel (ILP, instruction level parallelism). The long pipelines of such CPUs make the branch prediction problem worse, and the power consumption goes up exponentially as we discussed in a previous article about dynamic power and power leakage.

SUN's Ultrasparc T1, formerly known as Niagara, is much more than just a new UltraSparc. It is the harbinger of a new generation of CPUs, which focus almost solely on Thread Level Parallelism. No less than 32 independent parts of a different program (threads) can be "in flight" on the chip. It is SUN's first implementation of their Throughput computing philosophy, and compared to what we are used to in the AMD/Intel world, it is a pretty extreme architecture that focuses on network and server performance.

Fig 1. The 2U SUN T2000

Stubborn Server applications

The basic idea behind the UltraSparc T1 is that most modern superscalar Out-Of-Order CPUs may be excellent for games, digital content creation and scientific calculations, but they are not a good match for commercial server loads.

These complex CPUs can decode up to 3 (Opteron) to 8 (Power 5) instructions in parallel, put in a buffer and try to issue them across 9 or more units. In theory, these CPUs can decode, issue, execute and retire up to 3 (Opteron) to 5 (IBM Power) instructions per clock cycle. They have huge buffers (up to 200 instructions) to keep many instructions in flight.

Server workloads, however, cannot make good use of all this parallelism for several reasons. The main reason is that commercial server loads move a lot of data around and perform relatively little calculation on that data. Moving a lot of data around means that you may need a lot of accesses to the memory, which results in many cycles wasted while the CPU has to wait for the data to arrive. As many different users query different parts of the database, caching cannot be as efficient (low locality of reference). In the past years, memory latency has become worse as memory speed increased a lot slower than the speed of the CPU. Memory latency is even worse on MP (Multi-Processor) systems, and has risen from a few tens of CPU cycles to 200-400 clock cycles. The second reason is that many of the calculations performed on that data involve data dependent (read: hard to predict) branches, which makes it even harder to do a lot in parallel.

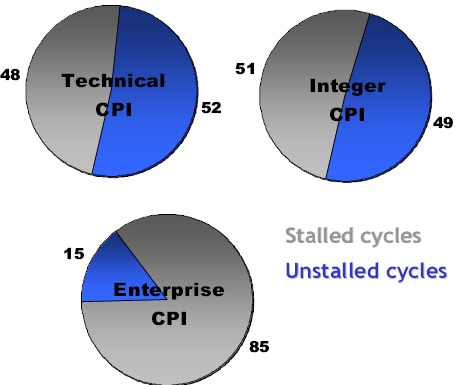

You might counter these two problems by eliminating the branches through predication and incorporate very large caches. That is what the Itanium family does, but even the mighty Itanium is not capable of running those server loads at high speeds despite predication and gigantic caches. Below, you can see Intel's own numbers for CPU utilization on the 3 different workloads.

Fig 2: Intel reporting the percentage of stalled cycles of different applications on the Itanium 2 family. Source:Intel.

The applications that can be found inside Spec Integer benchmark are still rather compute-intensive compared to server applications. Compression, FPGA Circuit Placement and Routing, Compiling and interpreting, and computer visualization are representatives of very CPU intensive integer loads. On average, the best desktop CPUs such as the Athlon 64 or Intel Dothan are capable of sustaining 0.8 to 1 instructions per clock cycle in this benchmark, while the Pentium 4 is around 0.5-0.7 IPC. Itanium is capable of a 1.3-1.5 IPC. That may sound like very low numbers, but let us compare SpecInt with typical server loads. In the table below, you find how the 4-way superscalar USIIIi does on the various benchmarks.

| Benchmark | IPC |

| SPECint | 0.9 |

| SPECjbb | 0.5 |

| SPECweb | 0.3 |

| TPC-C | 0.2 |

Rather than focus on the absolute numbers, it is more important to note that web applications have 3 times less IPC than CPU intensive integer apps. OLTP databases (TPC-C) do even worse: the CPU sustains on average 0.2 instructions per clock pulse, or 4.5 less than SpecInt. These numbers are no different for the Opteron or Xeon. So despite Out of Order execution, nifty branch prediction schemes and big caches, commercial server loads utilize a very meagre 10 to 15% of the potential of modern CPUs.

One possible solution is to focus on clock speed instead of trying to process as many instructions in parallel (ILP, instruction level parallelism). The long pipelines of such CPUs make the branch prediction problem worse, and the power consumption goes up exponentially as we discussed in a previous article about dynamic power and power leakage.

49 Comments

View All Comments

thesix - Friday, December 30, 2005 - link

If you're talking about POWER5's SMT, currently it provides two HW threads per core:http://publib.boulder.ibm.com/infocenter/pseries/i...">http://publib.boulder.ibm.com/infocente...x.doc/ai...

If you look closer at T1, the best one has 8 cores, each core supports four HW threads.

http://www.sun.com/processors/UltraSPARC-T1/">http://www.sun.com/processors/UltraSPARC-T1/

SMT and CMT appear to be the same type of technology (at least conceptual wise) with different names from two vendors.

> The very very poor FP performance of T1 is the truth.

> We have to remind ourselves that it is only a integer CPU. It's FP performance is too terrible.

OK. Since you have repeated so many times, I am sure everyone who's reading this will remember, and I do not disagree :-).

Thanks.

Betwon - Friday, December 30, 2005 - link

We think that it is diffirent between CMT and SMT.For exapmle:

P4 630 is a kind of SMT CPU, but not a CMT CPU.

AthlonX2 is a kind of CMT CPU, but not a SMT CPU.

From anandtech:

T1 has no branch prediction,and it has only one-instruction-issue/core, 8KB L1D/core(too few for 4 threads to use).

POWER5 has 32KB L1D/core, which is used by two threads.

We think that the SMT of T1 may be OK, unless 4 threads only use very few L1D cache(It is impossible for most cases)

Betwon - Friday, December 30, 2005 - link

edit:The only explain about how to improve the efficiency(very poor) is to use SMT to hide the stall's latency(by branch miss/cache miss ect.)

But a core has only 8KB L1(which will be used by 4 threads), the cache miss will increase. It is possible to become worst.

Betwon - Friday, December 30, 2005 - link

edit: T1 have no branch prediction and it has only one_inst_issue/core.Brian23 - Friday, December 30, 2005 - link

Obviously the apps that they used to benchmark in this article like running on the chip. Also, this chip doesn't run windows. It runs Sun's proprietary operating system. (I forgot what it's called.) Sun will give this new chip software support because they want it to do well.I think I read in the article that the chip is backwards compatable with the previous design Sun chips, meaning a lot of software is already available that will run on the chip.

Betwon - Friday, December 30, 2005 - link

NO!It is too narrow for the areas of 32-thread-parallel-well apps.

'have many threads' is not equal to '32-thread-parallel-well'!

Even there are 32 threads, but without parallel-well , This new CPU will waste more than 90% of it's potential.

The efficiency of Itanium( Itanium is capable of a 1.3-1.5 IPC) is much better than x86-CPU(0.7-0.9 IPC). Itanium never used OOO logic and long pipelines.

Betwon - Friday, December 30, 2005 - link

The efficiency of Itanium2 is still better than IBM's POWER5, and a Itanium2 core may retire 6 instrutions/cycle,and POWER5's can retire 5-instrutions/cycle.But a core of this new CPU is only one instrutions/cycle.

Brian23 - Friday, December 30, 2005 - link

I think you missed the part where x86 chips spend 400 cycles waiting on memory accesses when the Sun chip just keeps chugging with another thread while the load is happening.Calin - Tuesday, January 3, 2006 - link

Those 400 cycles are related to the higher clock speed (if your processor would be twice as slow, it would wait only 200 cycles). I assume the 400 cycles are based on the Xeon processor (that has high clock speed and slower FSB).Betwon - Friday, December 30, 2005 - link

NO!It is not true for all the x86 CPU.When Athlon64 spend many cycles waiting on memory accesses,

For P4 with HT,P4 just keeps chugging with another thread while the load is happening.

Do you understand what I want to say?