Imagination Announces PowerVR Series7 GPUs - Series7XT & Series7XE

by Ryan Smith on November 10, 2014 8:50 AM EST

Though the first PowerVR Series6XT-equipped products have only recently launched – including the unexpectedly powerful iPad Air 2 – the development cycle for SoCs and the realities of IP licensing mean that Imagination is already focusing on GPU designs for late 2015 and beyond. Just as one design reaches consumer hands, the next generation is completed and SoC integration work begins.

Taking place this week are Imagination Technologies’ Chinese idc14 and Imagination Summits developer events. While Imagination holds these events in multiple countries over the year, the Chinese event is in many respects the most important from a hardware standpoint. With the bulk of SoC GPU licensees headquartered in the Asia-Pacific region (firms such as Allwinner, Rockchip, and Samsung), Imagination’s Chinese event is perhaps their biggest customer event and consequently an important venue for product announcements. Against this backdrop, Imagination will be using this week’s events to announce the next generation of PowerVR GPUs, PowerVR Series7.

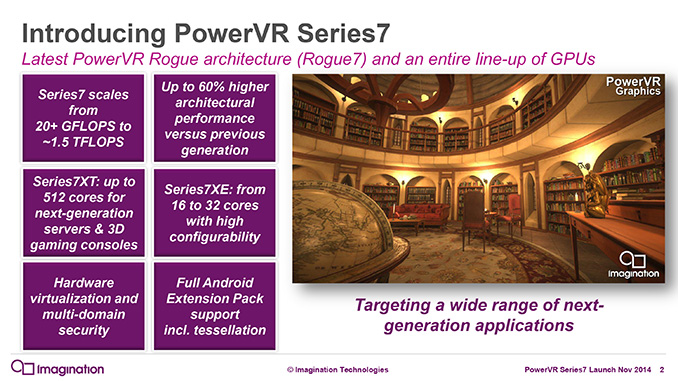

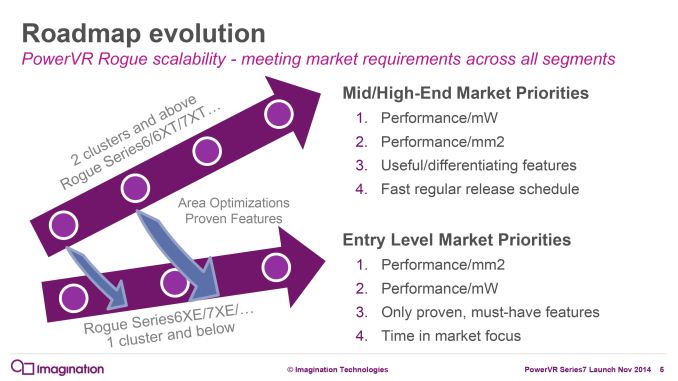

PowerVR Series7 is the successor to Imagination’s current PowerVR Series6XT lineup of GPUs. Like Series6XT, Series7 is composed of two variants, Series7XT for the high end and Series7XE for the low end, and each in turn contains a number of individual configurations. With configurations ranging from half a shader cluster (USC) to 16 clusters, Imagination is seeking to cover virtually the entire range of SoC-equipped devices, from high-end IoT/wearables to tablets, set top boxes, and even HPC servers.

From an architectural standpoint Series7 will be a further iteration on Imagination’s Rogue architecture, which was first used in 2012’s Series6. With each generation Imagination has further tweaked and expanded their designs to improve performance and efficiency and to cover new use cases, and for Series7 the story is much the same. This year Imagination sat down with us to give an overview of what’s new and changed in their architecture for Series7, so let’s dive right in.

PowerVR GPU Comparison

| | Series7XT | Series7XE | Series6XT | Series6XE |
|---|---|---|---|---|
| Clusters | 2 - 16 | 0.5 - 1 | 2 - 8 | 0.5 - 1 |
| FP32 FLOPs/Clock | 128 - 1024 | 32 - 64 | 128 - 512 | 32 - 64 |
| FP16 FLOPs/Clock | 256 - 2048 | 64 - 128 | 256 - 1024 | 64 - 128 |
| Pixels/Clock (ROPs) | 4 - 32? | 2 - 4? | 4 - 16 | 2 - 4 |
| Texels/Clock | 4 - 32 | 1 - 2 | 4 - 16 | 1 - 2 |
| OpenGL ES | 3.1 | 3.1 | 3.1 | 3.1 |
| Android Extension Pack / Tessellation | Yes | Optional | Optional | No |
| Direct3D | Base: FL 10_0; Optional: FL 11_1 | FL 9_3 | FL 10_0 | FL 9_3 |
| OpenCL | Base: 1.2 EB; Optional: 1.2 FP | 1.2 EB | 1.2 EB | 1.2 EB |
| Architecture | Rogue | Rogue | Rogue | Rogue |
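The FLOPs rows in the comparison table scale linearly with cluster count: 1024 FP32 FLOPs/clock across 16 clusters works out to 64 per cluster (128 for FP16), and those same per-cluster rates reproduce the 7XE and 6XT ranges. The sketch below simply encodes that arithmetic and converts it to peak GFLOPS at a given clock. The 600 MHz clock is a hypothetical example; clock speeds are set by each licensee and are not part of the table.

```python
# Per-cluster rates implied by the comparison table (e.g. 1024 FP32 FLOPs / 16 clusters).
FP32_FLOPS_PER_CLUSTER = 64
FP16_FLOPS_PER_CLUSTER = 128


def flops_per_clock(clusters: float, fp16: bool = False) -> float:
    """Peak FLOPs per clock for a configuration with the given USC (cluster) count."""
    per_cluster = FP16_FLOPS_PER_CLUSTER if fp16 else FP32_FLOPS_PER_CLUSTER
    return clusters * per_cluster


def peak_gflops(clusters: float, clock_mhz: float, fp16: bool = False) -> float:
    """Peak GFLOPS = FLOPs/clock x clock rate; the clock is chosen by the licensee."""
    return flops_per_clock(clusters, fp16) * clock_mhz / 1000.0


if __name__ == "__main__":
    # Reproduce the table's ranges for Series7XT (2-16 clusters) and Series7XE (0.5-1).
    for name, lo, hi in [("7XT", 2, 16), ("7XE", 0.5, 1)]:
        print(name, "FP32:", flops_per_clock(lo), "-", flops_per_clock(hi),
              "FP16:", flops_per_clock(lo, True), "-", flops_per_clock(hi, True))
    # Hypothetical 600 MHz clock on a 16-cluster 7XT: 1024 * 600 / 1000 = 614.4 GFLOPS FP32.
    print(peak_gflops(16, 600))
```

Keep in mind these are theoretical peaks; actual throughput depends on utilization, memory bandwidth, and thermal limits.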

PowerVR Series7 Architecture

From an architectural standpoint Imagination is already starting in a strong position for Series7 with the Rogue architecture. With the Rogue USCs implementing a modern shader pipeline, there’s no innate weakness in the design that requires correction. However, as is the case for all SoC GPUs, there is a constant need to deliver better power efficiency and space efficiency: these are the primary factors limiting overall performance, and fabrication improvements alone can’t deliver all of the necessary gains. For this reason Imagination has continued to iterate on the Rogue architecture for Series7 to further improve its efficiency and resulting performance.

Outside of the underlying architecture, however, there is also the need to deliver new features to keep up with modern APIs, developer demands, and of course the competitive landscape. In that respect Series6XT is a bit more dated: while it supports OpenGL ES 3.1, its base configuration (by far the most common) lacks the hardware features needed to support the more extensive Android Extension Pack, and it likewise lacks the features needed to support Direct3D feature level 11. For these reasons Series7 will also be responsible for delivering feature improvements that keep Imagination’s GPU lineup up to date with the latest standards.

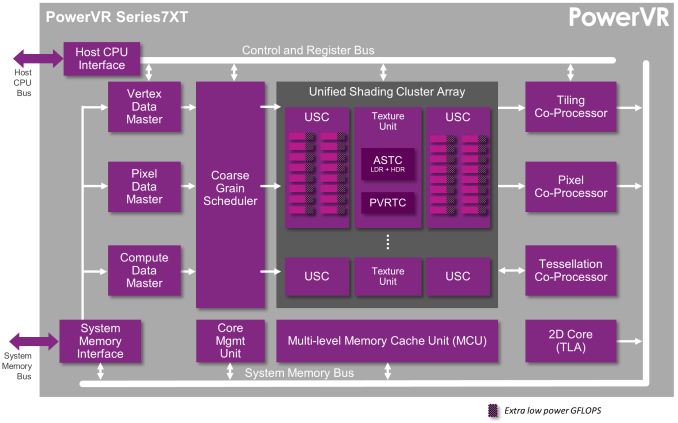

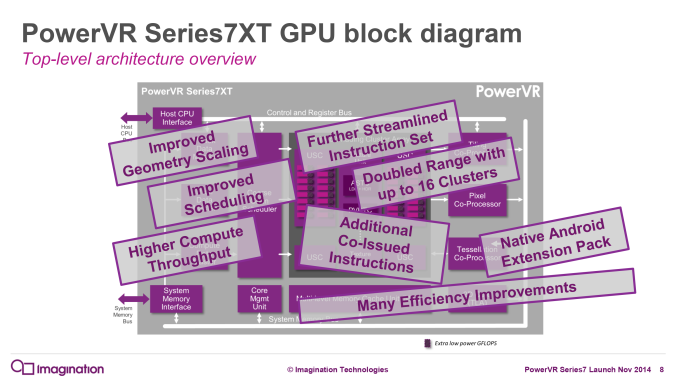

Looking at the overall architecture then (with an emphasis on 7XT), what we find is still very much Rogue in nature, and Imagination still calls it Rogue. While various blocks have been upgraded or overhauled in some manner, there is only a single new block: the Tessellation Co-Processor, standard on the base 7XT configuration and optional on 7XE. Exactly as the name describes, the Tessellation Co-Processor is the hardware block responsible for tessellation, working in conjunction with the Vertex Data Master to implement full tessellation support. The tessellator itself is fixed function for power efficiency reasons, with hull and domain shading handled by the shader hardware. The addition of tessellation hardware and the standard inclusion of ASTC support are the major functional changes that allow the base Series7XT to support the Android Extension Pack where the base Series6XT could not.
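The split described here (programmable hull and domain stages around a fixed-function tessellator) follows the Direct3D 11-style tessellation pipeline: the hull stage picks tessellation factors per patch, the fixed-function tessellator turns a factor into a set of domain coordinates, and the domain stage turns each coordinate into a vertex. The sketch below models that data flow for a quad patch with a single uniform integer factor. It is a generic illustration, not Imagination’s hardware: the distance-based factor heuristic and all function names are hypothetical, and real tessellators also support separate edge/inside factors and fractional partitioning.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def hull_stage(patch: List[Vec3], camera_distance: float) -> int:
    """Programmable stage (runs on shader hardware): choose a tessellation factor.
    Hypothetical heuristic: closer patches get more subdivision, clamped to 1..64."""
    return max(1, min(64, int(32 / max(camera_distance, 0.5))))


def fixed_function_tessellator(factor: int) -> List[Tuple[float, float]]:
    """Fixed-function stage: emit a (factor+1) x (factor+1) grid of (u, v) domain points."""
    step = 1.0 / factor
    return [(i * step, j * step) for j in range(factor + 1) for i in range(factor + 1)]


def domain_stage(patch: List[Vec3], uv: Tuple[float, float]) -> Vec3:
    """Programmable stage: bilinearly interpolate the 4 control points at (u, v)."""
    u, v = uv
    p00, p10, p01, p11 = patch
    return tuple(
        (1 - u) * (1 - v) * a + u * (1 - v) * b + (1 - u) * v * c + u * v * d
        for a, b, c, d in zip(p00, p10, p01, p11)
    )


if __name__ == "__main__":
    quad = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
    factor = hull_stage(quad, camera_distance=8.0)  # 32 / 8 -> 4
    points = fixed_function_tessellator(factor)
    vertices = [domain_stage(quad, uv) for uv in points]
    print(factor, len(vertices))  # 4 25
```

Keeping the grid-generation step fixed function is where the power saving comes from: it is the same simple, regular computation for every patch, while the parts that developers want to customize stay programmable.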

For the other blocks, Imagination has made improvements throughout the architecture. The geometry throughput of the Vertex Data Master (the geometry frontend) has been doubled to alleviate a bottleneck there. Meanwhile, the Compute Data Master has been upgraded to set up wavefronts more quickly (up to 300% faster), which is especially helpful when processing large numbers of small kernels, something Imagination tells us was more common than expected.
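Why faster wavefront setup matters most for small kernels can be seen with a simple cost model: each dispatch pays a fixed setup cost before any work runs, so the shorter the kernel, the larger the share of time spent on setup. The back-of-the-envelope sketch below uses made-up cycle counts, and it reads "up to 300% faster" as setup running at 4x the previous rate (one quarter of the setup time); both are assumptions for illustration only.

```python
def total_cycles(num_kernels: int, work_cycles: int, setup_cycles: float) -> float:
    """Toy model: each kernel dispatch costs a fixed setup plus its own work."""
    return num_kernels * (setup_cycles + work_cycles)


def speedup(num_kernels: int, work_cycles: int, old_setup: float, setup_rate_gain: float) -> float:
    """Overall speedup when setup runs setup_rate_gain times faster.
    num_kernels cancels out in the ratio; what matters is setup vs. work per kernel."""
    old = total_cycles(num_kernels, work_cycles, old_setup)
    new = total_cycles(num_kernels, work_cycles, old_setup / setup_rate_gain)
    return old / new


if __name__ == "__main__":
    OLD_SETUP = 400  # hypothetical setup cost per dispatch, in cycles
    GAIN = 4.0       # "300% faster" read as 4x the rate (assumption)
    for work in (100, 1_000, 100_000):  # small to large kernels (hypothetical)
        print(work, round(speedup(1000, work, OLD_SETUP, GAIN), 2))
```

Under these assumed numbers a 100-cycle kernel runs 2.5x faster overall, while a 100,000-cycle kernel barely changes, which is the pattern the text describes.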

Finally, the Coarse Grain Scheduler has also been upgraded in conjunction with the USCs. The focus is primarily on reducing inter-tile dependencies: Series7 can now more often issue work to idle USCs that on Series6/XT/XE would have waited for other USCs to finish their work before the whole block moved on. With fewer dependencies, idle USCs can be issued work from other sources or move on to their next tile in more circumstances.
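The benefit of fewer inter-tile dependencies can be illustrated with a toy scheduling model that compares two policies: a lockstep policy, where every USC waits for the slowest one before the group moves to the next batch of tiles, and a greedy policy, where an idle USC immediately takes the next tile. This illustrates the general idea only; it is not Imagination’s actual Coarse Grain Scheduler, and the per-tile costs are arbitrary.

```python
import heapq
from typing import List


def lockstep_makespan(tile_costs: List[int], num_uscs: int) -> int:
    """Each batch of num_uscs tiles finishes only when its slowest tile finishes."""
    total = 0
    for start in range(0, len(tile_costs), num_uscs):
        total += max(tile_costs[start:start + num_uscs])
    return total


def greedy_makespan(tile_costs: List[int], num_uscs: int) -> int:
    """An idle USC takes the next tile as soon as it finishes its current one."""
    free_at = [0] * num_uscs  # min-heap of times at which each USC becomes idle
    heapq.heapify(free_at)
    for cost in tile_costs:
        earliest = heapq.heappop(free_at)
        heapq.heappush(free_at, earliest + cost)
    return max(free_at)


if __name__ == "__main__":
    tiles = [8, 2, 2, 2, 3, 9, 1, 1]  # arbitrary per-tile work, in time units
    print(lockstep_makespan(tiles, 2), greedy_makespan(tiles, 2))  # 20 17
```

With two USCs the lockstep policy leaves one unit idle whenever its partner has a long tile, so the same 28 units of work take 20 time units instead of 17.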

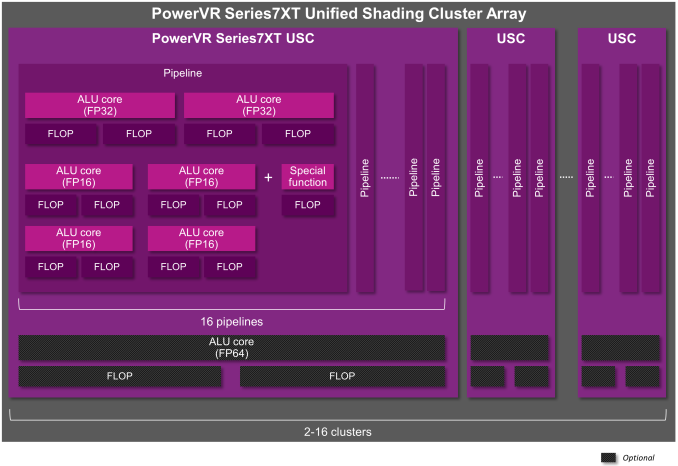

Diving into the Series7 USC, what we find is again largely similar to Series6XT. The number of FP16 and FP32 ALUs, and the resulting floating point throughput, is unchanged; however, the Special Function Unit (SFU) has received a pair of changes. First and foremost, the SFU can now natively handle FP16 operations along with FP32 operations, whereas the 6XT SFU promoted everything to FP32. By offering native FP16 execution, Imagination avoids wasting power on unnecessary higher-precision work for FP16 data sets. Keep in mind that SFU operations are already relatively expensive, so native FP16 special functions should have a tangible impact on power consumption. Meanwhile, though it’s drawn as a single SFU in Imagination’s logical diagrams, I suspect that part of this change is that Imagination has implemented separate FP16 and FP32 SFUs as part of the existing FP16 and FP32 ALU blocks, in which case there are actually 2 SFUs (though, just like the ALUs, only one can be used at a time).
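The power argument rests on how little precision an FP16 result can hold: half precision stores only a 10-bit mantissa, so when FP16 inputs are promoted to FP32 for a special function and the result is written back as FP16, the extra precision is discarded. The sketch below uses Python’s `struct` half-precision (`'e'`) and single-precision (`'f'`) formats to round reciprocal square root results, a typical SFU operation, computed at FP64. It shows only how much precision each destination format keeps; it says nothing about how accurate any hardware’s native FP16 SFU actually is.

```python
import math
import struct
from typing import Callable


def to_fp16(x: float) -> float:
    """Round a Python float (FP64) to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def rsqrt(x: float) -> float:
    """Reciprocal square root, a typical special-function (SFU) operation."""
    return 1.0 / math.sqrt(x)


def worst_relative_error(round_result: Callable[[float], float]) -> float:
    """Largest relative error from storing rsqrt results with the given rounding."""
    worst = 0.0
    for i in range(1, 2000):
        x = to_fp16(i / 64)   # FP16 input data
        exact = rsqrt(x)      # FP64 reference result
        worst = max(worst, abs(round_result(exact) - exact) / exact)
    return worst


if __name__ == "__main__":
    # FP32 rounding error stays below 2**-24, but an FP16 result can be off by up to
    # about 2**-11, so the extra FP32 precision does not survive into the FP16 output.
    print(f"FP32 result: {worst_relative_error(to_fp32):.2e}")
    print(f"FP16 result: {worst_relative_error(to_fp16):.2e}")
```

Because the FP16 rounding error is thousands of times larger than the FP32 one, computing an FP16-destined special function at FP32 spends energy on bits that are thrown away.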

Speaking of utilization, the second SFU enhancement concerns when the SFU can be used. Starting with Series7, SFU operations can be co-issued with ALU operations, allowing both blocks to be used at once rather than one or the other as on 6XT. To be clear, only SFU operations can be co-issued, and wavefronts can still use only the FP16 or the FP32 ALUs (not both at once), but there is now a degree of co-issue capability within a USC that was not available before. Imagination tells us that SFU operations were showing up in code more often than expected, so adding co-issue capability would improve performance.

To accomplish this, Imagination has expanded its instruction set, both to enable co-issue and to further improve performance. New bundled/fused instructions have been added, and these are what trigger SFU co-issue. The fused instructions also allow certain common sequences that previously took multiple instructions to be issued as a single fused instruction, which reduces code size slightly and potentially allows these operations to complete in fewer cycles.
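The effect of SFU co-issue on issue slots can be shown with a toy instruction-stream model: without co-issue, every ALU or SFU instruction occupies its own issue cycle, while with co-issue an SFU instruction can be bundled with the immediately preceding ALU instruction, provided it does not need that ALU result. The one-cycle-per-bundle cost and this pairing rule are simplifying assumptions; this is not a description of the actual PowerVR instruction set or its fused instructions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Instr:
    unit: str                    # "alu" or "sfu"
    dest: str                    # register written
    srcs: Tuple[str, ...] = ()   # registers read


def issue_cycles(stream: List[Instr], co_issue: bool) -> int:
    """Count issue cycles, assuming every issued instruction or bundle takes one cycle."""
    cycles = 0
    i = 0
    while i < len(stream):
        cur = stream[i]
        nxt = stream[i + 1] if i + 1 < len(stream) else None
        can_pair = (
            co_issue
            and nxt is not None
            and cur.unit == "alu"
            and nxt.unit == "sfu"
            and cur.dest not in nxt.srcs  # the SFU op must not need the ALU result
        )
        i += 2 if can_pair else 1
        cycles += 1
    return cycles


if __name__ == "__main__":
    shader = [
        Instr("alu", "r0", ("a", "b")),
        Instr("sfu", "r1", ("c",)),        # independent of r0: can pair
        Instr("alu", "r2", ("r0", "r1")),
        Instr("sfu", "r3", ("r2",)),       # needs r2: cannot pair
        Instr("alu", "r4", ("r3",)),
    ]
    print(issue_cycles(shader, co_issue=False), issue_cycles(shader, co_issue=True))  # 5 4
```

The dependency check is why the gain depends on the code: SFU operations that consume the result of the preceding ALU operation still have to wait.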

Meanwhile, exclusive to Series7XT is optional support for FP64 operations. If FP64 support is included in the specific 7XT core licensed, each pipeline gets a single FP64 ALU, which allows for up to 2 FP64 FLOPs/USC/clock.

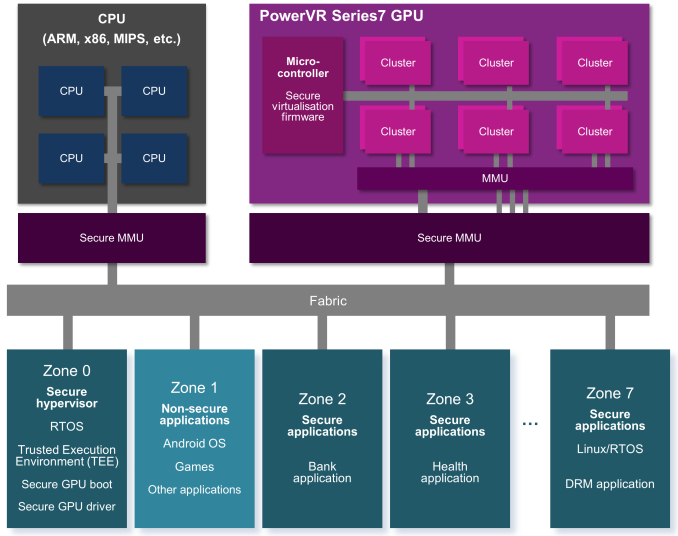

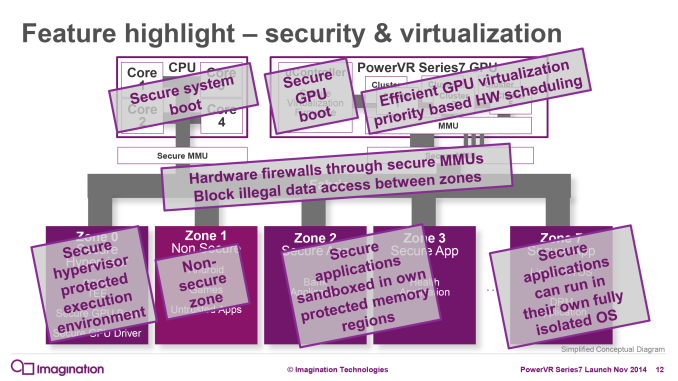

Finally, while not a graphics feature per se, Series7 will introduce one more feature to the family: GPU support for hardware security zones, standard on 7XT and optional on 7XE. It uses virtualization technology to create up to 8 zones that are fully isolated from each other.

Within the mobile space, application sandboxing is already common, and this functionality is already present on a number of CPUs. However, when security zones are supported only on the CPU, zone separation essentially has to be emulated on the GPU, requiring a full task flush and reload of the entire GPU in order to switch between tasks. Besides the performance cost, software-enforced security is functionally less robust than hardware-enforced security, which means the GPU could in theory be used to attack other zones.
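The cost difference can be put in a simple model: with CPU-only zones, every change of zone on the GPU means a full flush and reload, whereas with hardware zones the GPU can tag work with a zone ID and switch without flushing. In the sketch below, only the limit of 8 zones comes from the article; the flush/reload and tag-switch cycle costs are made-up numbers, and `check_access` is a hypothetical illustration of hardware-enforced isolation, not Imagination’s actual mechanism.

```python
from typing import List

MAX_ZONES = 8  # Series7 supports up to 8 isolated zones


def check_access(job_zone: int, buffer_zone: int) -> None:
    """Hypothetical hardware check: a job may only touch memory tagged with its own zone."""
    if job_zone != buffer_zone:
        raise PermissionError(f"zone {job_zone} may not access zone {buffer_zone}")


def switch_overhead(job_zones: List[int], hardware_zones: bool,
                    flush_reload_cycles: int = 50_000,
                    tag_switch_cycles: int = 100) -> int:
    """Total zone-switch overhead for a sequence of GPU jobs, each tagged with a zone ID.
    Default costs are made-up illustrative values."""
    for zone in job_zones:
        if not 0 <= zone < MAX_ZONES:
            raise ValueError(f"zone {zone} outside 0..{MAX_ZONES - 1}")
    switches = sum(1 for prev, cur in zip(job_zones, job_zones[1:]) if prev != cur)
    # Without GPU zone support, every switch means flushing and reloading the whole GPU.
    per_switch = tag_switch_cycles if hardware_zones else flush_reload_cycles
    return switches * per_switch


if __name__ == "__main__":
    jobs = [0, 0, 3, 0, 3, 3, 1]  # zone ID of each GPU job, in submission order
    print(switch_overhead(jobs, hardware_zones=False))  # 4 switches * 50000 = 200000
    print(switch_overhead(jobs, hardware_zones=True))   # 4 switches * 100 = 400
    check_access(3, 3)  # same zone: allowed
```

The more often work from different zones is interleaved, the more the flush-and-reload approach costs, which is the case hardware zones are meant to address.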

Consequently, for Series7 Imagination is adding security zone support to their hardware to complement the security zones already supported on CPUs. From a practical standpoint, this enables better application sandboxing, keeping applications from breaking out and touching other parts of the system. This is something of a mixed bag for users, since sandboxing can be used for both good and evil. Hardware zones can be used to secure certain high-profile applications (banking, health, Apple Pay, etc.), but those same zones also enable stronger DRM on video content and harden the system against jailbreaks in cases where the manufacturer does not allow direct root access.

49 Comments

Badelhas - Monday, November 10, 2014

Second!

Nenad - Monday, November 10, 2014

Seeing "these should be taken with a grain of salt" comment, I wonder if anyone ever did comparison of past announcements and how accurate they were? Was Imagination announcement for 6XT close to reality? How about similar pre-release figures given by NVidia, Intel etc... ? It would be interesting article on its own ;p

chizow - Monday, November 10, 2014

I would say given the recent benchmark performances of both the Nexus 9 and iPad 2, both Nvidia and Imagination/Apple delivered on their claimed performance increases from this time last year and CES in the form of A8X and Tegra K1 (Denver). There's really only 2 players right now in the tablet ARM-based SoC market if you are looking at pure performance, Apple with PowerVR and Nvidia, everyone else is ~1.5 generations behind.

kron123456789 - Monday, November 10, 2014

That's why i wanna see what beast Nvidia will announce at CES 2015.

LostAlone - Tuesday, November 11, 2014

It's kinda silly to say only Tegra and Apple exist on the SoC market at the moment - The fact that those two happen to have just had their latest designs put to market in high profile tablet devices. There hasn't been a high end ARM tablet put to market in recent months that wasn't Tegra or an iDevice. So sure, they are technically winning that extremely specific race, but in the wider mobile sector (which uses the exact same SoCs most of the time) no-one has a clear lead.

The most recent Snapdragon flagship won't be around for a while yet and the Exynos 5233 was only in some Note 4s and is yet to be in a mass market device, there's no suggestion that either of those two won't be competitive with the Apple and Nvidia going forward.

Obviously more recent chips tend to be better, so the ones we've seen most recently are very likely the most powerful of what there is at this exact second. But they certainly don't blow anything out of the water. Apple and Nvidia are doing good at this exact moment. Their chips compare well in benchmarks to what else is on the market. But those benchmarks are certainly not much ahead of what was on the market when they were released, and there's absolutely nothing to suggest that they are now somehow ahead of Qualcomms next generation, and the Exynos 5 series is certainly on par with them.

When the Tegra 4 came to market it was impressively ahead of the curve in graphics especially. But sadly no-one bought into all that much back then, and everyone else has continued pushing on with their own designs and closed that gap to the point where it's an even race. There is no generation gap what so ever.

And the thing is that none of this is even relevant anymore anyway. Mobile devices don't need better graphics. We don't make use of what we have already. Even if you do happen to be 15 and (somehow) have a flagship phone I severely doubt even someone with your buckets of free time and large periods waiting for buses and/or being driven around actually appreciate the (slight) difference between this years and last years flagships in terms of GPU power alone. Beyond that the amount of CPU horsepower in modern devices is laughably overblown now to the point where outside benchmarking you genuinely have to try very very hard to tap even a fraction of what the processor can do. At this point in the evolution of the mobile space the specs just don't make enough of a difference to wow people now. Last years flagships are still fine devices. The year befores are too. And the year befores. They might not all have the latest software, but they still do what they originally did just as well as ever they did it.

Just stop buying into the hype.

jospoortvliet - Tuesday, November 11, 2014

You're pretty much right, but neglect to mention two things: improvements in features (like always-on voice detection, higher resolutions, better camera's etc; and then there are improvements like battery life, which, as owner of the One (m7) can appreciate: I don't care for the features or performance of the One (m8) but I would gladly spend a few hundred bucks for its battery life. And the move from a Snapdragon 600 to 80x is big in that regard...

kron123456789 - Tuesday, November 11, 2014

When first Tegra 4 came out, there already was Snapdragon 800(devices with those SoCs came out in summer 2013). When first device with Tegra K1 came out(Xiaomi MiPad), there wasn't anything, that could compete with it. When second device came out(Shield Tablet), there still wasn't anything. And when third K1 device came out(Nexus 9, with dual-core Denver CPU) there was ONLY iPad Air 2.

K1 and A8x are the most powerful ARM SoCs right now, and that will be changed only in 2015. Exynos 7 Octa and Snapdragon 805 cant compete with K1 or A8X. But, there is one major problem with K1 and A8X - these are SoCs for tablets, not for smartphones.

So, yeah, in performace side Nvidia and Apple are 1.5 generations ahead.

chizow - Wednesday, November 12, 2014

Exactly, and Nvidia still has 2 major trump cards to play with Erista which will have both 20nm and Maxwell GPU arch, so they'll extend their lead again as early as Q2.

The problem Nvidia (and Apple) is their performance advantages right now are going to a blackhole, there's nothing that really takes advantage of this lead and therefore, they can't use this as an advantage to establish clear market dominance (at least for Nvidia on the Android side). Their challenge will be to grow the gaming ecosystem to take advantage of this benefit, but in the meantime, they will continue to iterate and dominate the competition.

chizow - Wednesday, November 12, 2014

Who's buying into the hype when there's no need to? The latest two products on the market show Nvidia and Apple are head and shoulders above the competition and there is nothing on Qualcomm's roadmap that will challenge this until maybe next year, by which time Nvidia for sure will have punted the ball out of their range again with Erista (Maxwell and 20nm). Apple won't have as many levels to pull to improve A9, unless they also go with Maxwell GPU IP.

http://anandtech.com/show/8670/google-nexus-9-prel...

lilmoe - Monday, November 10, 2014

In response to the last paragraph, I thought the only competitor for Imagination's GPU was Mali (since both are the only GPUs that can be licensed to integrators)... Kepler/Maxwell and Adreno aren't in the "business space".