ARM Announces the Cortex-R52 CPU: Deterministic & Safe, For ADAS & More

by Ryan Smith on September 19, 2016 7:30 PM EST

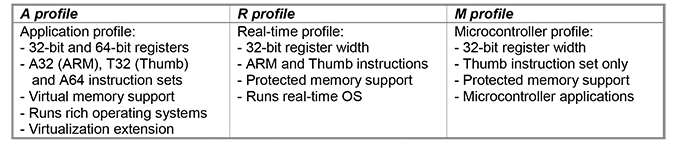

Though it didn’t attract a ton of attention at the time, back in 2013 ARM announced the ARMv8-R architecture. An update to ARM’s architecture for real-time CPUs, ARMv8-R was developed to further the real-time platform by adding support for newer features such as virtualization and memory protection. At the time the company didn’t announce any specific CPU designs for the architecture, only the architecture itself.

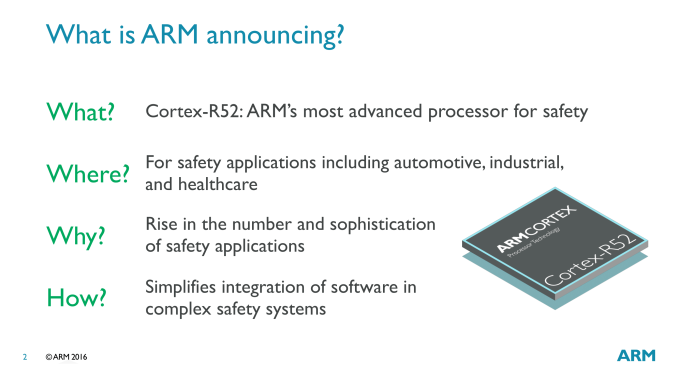

Now just under 3 years later, ARM is announcing their first ARMv8-R CPU design this evening with the Cortex-R52. An upgrade of sorts to ARM’s existing Cortex-R5, the R52 is the company’s first implementation of ARMv8-R. R52 makes specific use of many of the new features enabled by the architecture, while improving performance at the same time. ARM is pitching the new CPU core at markets that need a safety-critical CPU – a market that the Cortex-R series has been in for a while – where the deterministic nature of the CPU’s execution model is critical to ensuring quick and accurate execution.

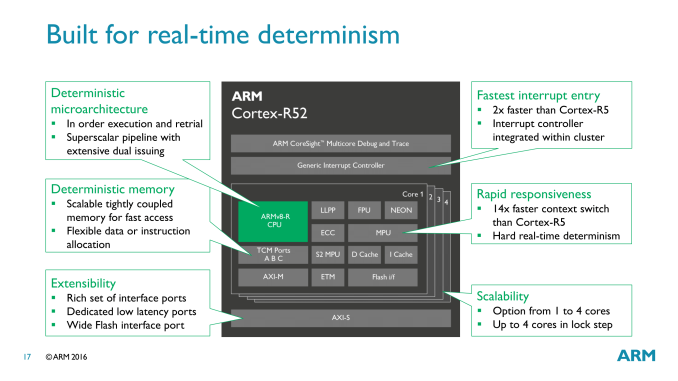

While the focus of today’s CPU design announcement is on functionality and utility rather than microarchitecture, ARM has revealed a bit about how the Cortex-R52 is organized under the hood. The microarchitecture is a direct evolution of the previous Cortex-R5. This means we’re looking at a dual-issue, in-order execution pipeline that is 8 stages long. Broadly speaking, this description is very similar to that of the better-known Cortex-A7/A53 cores, which implies that this is a real-time optimized version of the basic elements in that design.

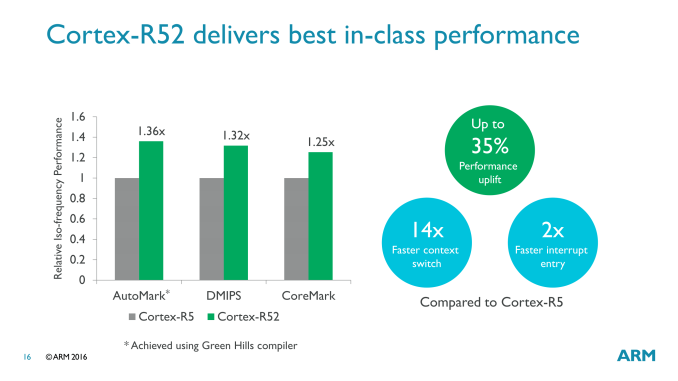

As the Cortex-R series is focused on determinism and real-time responsiveness over total performance, ARM doesn’t heavily promote these cores on the basis of performance. But at least within the Cortex-R family, they are talking about a performance increase of upwards of 35% in common CPU benchmarks. More important for this market than throughput, however, is responsiveness: for the R52, ARM has done some specific work to improve interrupt entry and context switching performance, doubling the former and achieving a staggering 14-fold improvement in the latter.

The big deal here, of course, is the deterministic nature of the CPU. The entire microarchitecture is optimized to avoid variable-time, non-deterministic operations, which is why it’s an in-order processor to begin with. This design philosophy extends to how memory is managed as well: rather than a virtual memory system with its associated TLB misses, ARM uses what it calls the Protected Memory System Architecture (PMSA), in which an MPU handles memory operations without any address translation.
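As a rough illustration of what "without any address translation" means, here is a minimal Python sketch of an MPU-style permission check, assuming a simplified model: each access is compared against a small, fixed table of non-overlapping regions, and the address itself is used as-is. The `Region` fields, the `check_access` helper, and the memory map are all hypothetical and do not reflect the real ARMv8-R PMSA register format; the point is only that the cost of the check is bounded by the number of regions, so there is nothing like a TLB miss or page-table walk to add variable latency.

```python
from dataclasses import dataclass


# Hypothetical MPU region descriptor: a contiguous physical address range
# with permissions. This is NOT the real ARMv8-R PMSA register layout.
@dataclass(frozen=True)
class Region:
    base: int       # first address in the region (inclusive)
    limit: int      # last address in the region (inclusive)
    readable: bool
    writable: bool
    executable: bool


def check_access(regions, addr, kind):
    """Return True if an access of `kind` ('r', 'w' or 'x') to `addr` is allowed.

    The address is never translated: it is only compared against a fixed,
    small list of regions (assumed non-overlapping in this sketch), so the
    worst-case cost is bounded by len(regions).
    """
    for region in regions:
        if region.base <= addr <= region.limit:
            return {"r": region.readable,
                    "w": region.writable,
                    "x": region.executable}[kind]
    return False  # no matching region: this sketch treats it as a fault


# Hypothetical memory map for one task.
REGIONS = [
    Region(0x0000_0000, 0x0003_FFFF, readable=True, writable=False, executable=True),  # code
    Region(0x2000_0000, 0x2000_FFFF, readable=True, writable=True, executable=False),  # data
]

print(check_access(REGIONS, 0x0000_1000, "x"))  # True: code is executable
print(check_access(REGIONS, 0x2000_0010, "w"))  # True: data is writable
print(check_access(REGIONS, 0x0000_1000, "w"))  # False: code is read-only
print(check_access(REGIONS, 0x3000_0000, "r"))  # False: unmapped address
```

Real MPUs add details this sketch ignores, such as background regions and how overlapping regions are handled, but the bounded, translation-free lookup is the property that matters for determinism.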

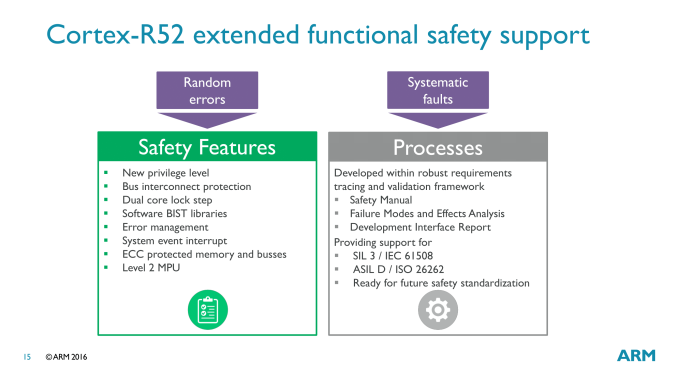

On the safety side of matters, the R52 has a few different error-resiliency features to ensure accuracy. Multi-core lockstep returns for this design, allowing two R52 cores to execute the same task in parallel for redundancy. Meanwhile, on the memory side, ECC is offered across both the memory buses and the memory itself, in order to protect against random bit flips.
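The core idea of lockstep can be sketched in a few lines, assuming a deliberately simplified model in which each "core" is just a function that computes a result from the current state and inputs. The names `lockstep_step`, `LockstepFault`, `controller`, and `faulty_controller` are hypothetical; in real silicon the comparison is done continuously in hardware on the cores' outputs, and this only models the compare-and-fault logic.

```python
class LockstepFault(Exception):
    """Raised when the two redundant computations disagree."""


def lockstep_step(core_a, core_b, state, inputs):
    """Run the same step on two redundant 'cores' and compare the results.

    Both cores get identical state and inputs; any divergence means one of
    them suffered a fault (e.g. a transient bit flip), so neither result
    is committed.
    """
    out_a = core_a(state, inputs)
    out_b = core_b(state, inputs)
    if out_a != out_b:
        raise LockstepFault(f"mismatch: {out_a!r} != {out_b!r}")
    return out_a


def controller(state, inputs):
    # Toy control law: accumulate the sensor reading into the state.
    return state + inputs


def faulty_controller(state, inputs):
    # Same law, but with a simulated single-bit flip in the result.
    return (state + inputs) ^ 0b100


print(lockstep_step(controller, controller, 10, 5))  # 15
try:
    lockstep_step(controller, faulty_controller, 10, 5)
except LockstepFault as err:
    print("fault detected:", err)
```

Note that lockstep detects a disagreement but cannot by itself tell which core is wrong; the system's response (reset, safe state, and so on) is left to the surrounding design.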

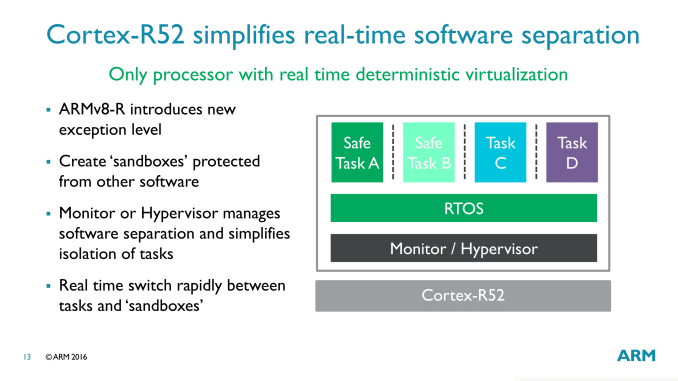

Meanwhile, in terms of new functionality for hardware developers, as part of ARMv8-R the Cortex-R52 implements support for hardware virtualization. Like virtually everything else in the R52, this is deterministic as well, with the hypervisor working with the MPU to give each guest OS its own section of the physical memory space. According to ARM this is a particularly important advancement, as previous means of separating tasks on real-time CPUs were non-deterministic, which is an obvious problem for the target market.
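Because each guest gets a fixed slice of physical memory, isolation can be checked before anything runs. Below is a minimal sketch of that idea, assuming a hypothetical static partition table; the guest names, address ranges, and the `find_conflicts`/`owner_of` helpers are illustrative only, and a real R52 hypervisor would express this through its own MPU programming rather than a Python dictionary.

```python
from itertools import combinations

# Hypothetical static partition table: guest name -> list of (base, limit)
# physical address ranges, both ends inclusive.
PARTITIONS = {
    "safety_rtos":  [(0x2000_0000, 0x2003_FFFF)],
    "comms_rtos":   [(0x2004_0000, 0x2005_FFFF)],
    "logging_rtos": [(0x2006_0000, 0x2006_FFFF)],
}


def ranges_overlap(a, b):
    """True if inclusive ranges a=(lo, hi) and b=(lo, hi) share any address."""
    return a[0] <= b[1] and b[0] <= a[1]


def find_conflicts(partitions):
    """Return (guest1, guest2, range1, range2) for every overlapping pair."""
    conflicts = []
    for (g1, r1s), (g2, r2s) in combinations(partitions.items(), 2):
        for r1 in r1s:
            for r2 in r2s:
                if ranges_overlap(r1, r2):
                    conflicts.append((g1, g2, r1, r2))
    return conflicts


def owner_of(partitions, addr):
    """Return the single guest allowed to touch `addr`, or None."""
    for guest, ranges in partitions.items():
        if any(lo <= addr <= hi for lo, hi in ranges):
            return guest
    return None


print(find_conflicts(PARTITIONS))         # [] -> guests are fully isolated
print(owner_of(PARTITIONS, 0x2004_1000))  # comms_rtos

# A misconfigured guest that straddles two existing partitions.
BAD = dict(PARTITIONS, rogue=[(0x2003_F000, 0x2004_0FFF)])
print(len(find_conflicts(BAD)))           # 2
```

Catching an overlap at configuration time is what lets a non-critical guest be added without touching the certified safety-critical one.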

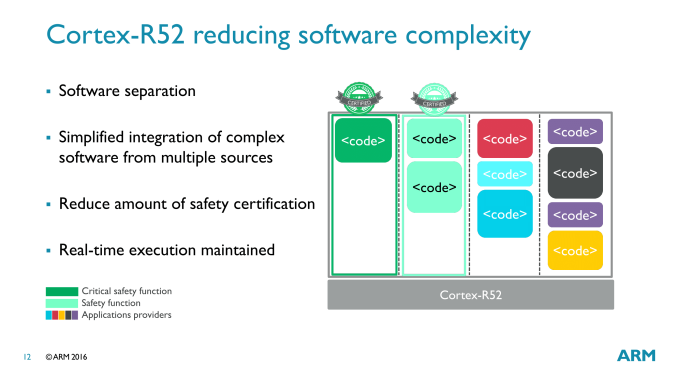

The significance of virtualization in a real-time processor is that it allows for multiple tasks to be executed on the R52 without interfering with each other. In large, complex devices (e.g. cars), this allows for fewer processors within the device, as these tasks can be consolidated onto a smaller number of processors. At the same time, the rigid separation between the tasks means that it’s possible to run both safety-critical and non-critical (but still real-time) tasks on an R52 together, knowing that the latter will not interrupt the safety-critical tasks. For cars and other devices where there is stringent safety certification, this is especially useful as it means that other tasks can be added (via their own guest OS) without invalidating the certifications of the safety-critical tasks.

This is also why the context switching and interrupt entry improvements mentioned earlier are so important. With a hypervisor now in play and multiple tasks executing on a single processor, the vastly improved ability to switch between tasks is critical for allowing multi-tasking without a major performance hit from context switching overhead.

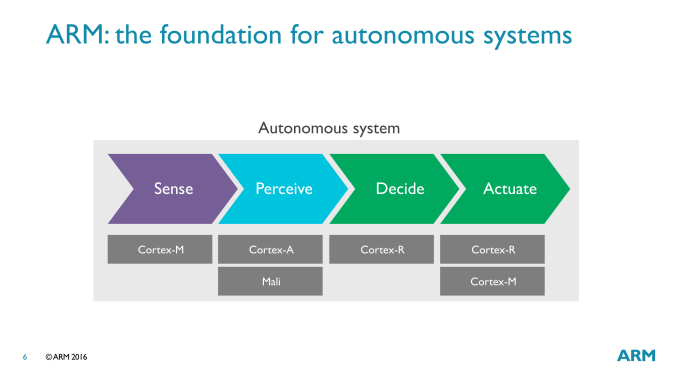

Finally, for the potential market for the Cortex-R52, ARM is pushing the big three traditional markets for real-time and safety-critical processors: automotive, industrial, and medical. All three of these make significant use of real-time functionality, and there’s a great deal of overlap on safety as well.

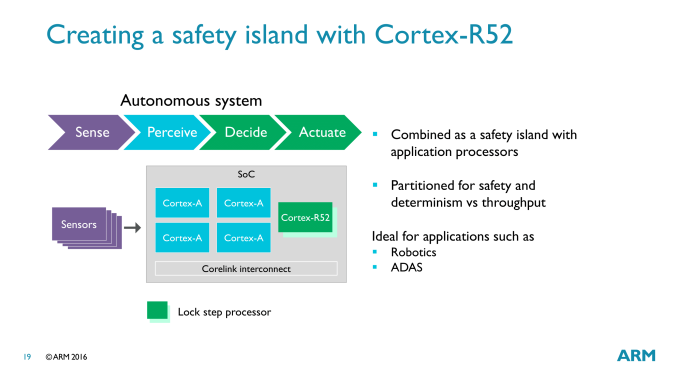

ARM is particularly interested in the Advanced Driver Assistance Systems (ADAS) market, where the Cortex-R is part of a full system of ARM IP. A full ADAS setup from start to end would utilize all three processor types – M, R, and A – with the Cortex-R handling the real-time decision making and executing on those decisions, while the Cortex-A would handle sensor perception/interpretation, and Cortex-M cores would sit in many of the individual sensors.

Wrapping things up, as with most other ARM IP announcements, the Cortex-R52 reveal is setting the stage for future products. ARM isn’t talking about specific customers at this time, but it already has a number of companies that have licensed ARMv8-R and will need a CPU design to go with it. To that end, we should see the Cortex-R52 start appearing under the hood of various devices in the coming years.

Source: ARM

20 Comments


Samus - Monday, September 19, 2016

I don't understand why you would want an R series chip over an A series chip when the cost of licensing an A series is already pennies on the dollar. This being targeted at mission critical "safety" applications indicates the target market isn't really the penny pinching crowd and will likely desire the more available, more standard, and more sophisticated Cortex A53/A57, which is ridiculously cheap to license for single core SoCs.

Ryan Smith - Monday, September 19, 2016

The short answer is that the A series CPUs are optimized for throughput and total performance. They lack the determinism and real-time guarantees that the R series provides; the time it takes for an instruction to complete is too variable. With the R5/52 series, you know exactly how long something is going to take, and the order it completes in. Which makes state validation a heck of a lot easier.

Samus - Tuesday, September 20, 2016

Interesting. I just figured for the simple applications R is targeted at, a high clocked A series core would be more than up to the task to guarantee availability. Can't the pipeline be optimized for real time availability with a linear kernel scheduler?

Qwertilot - Tuesday, September 20, 2016

There's a very big difference between something 'probably' being enough and being *sure* that it is :)

nightbringer57 - Tuesday, September 20, 2016

There are lots of things in a modern, fast processor that are good, but prevent you from ensuring a 100% probability that the tasks will be dealt with on time. This is about the maximum latency for one run of the task.

Say your application processor can run a task 1000 times/second. With caches, out-of-order execution, and all modern, sophisticated hardware mechanisms, you can achieve this. The issue is that you're going to have wildly varying latencies between the start and the end of the task's run. Even if you're going to run it at an average 1ms latency, it may very well end up having a latency between 0.1 ms and 10ms.

In a real-time processor, which is usually simpler, and deterministic, you may not be able to run the same task more than 400 times a second. But you can actually make sure that this run will never last for more than 3ms.

In such a system, you can actually mathematically prove that you will never get a task "overload": you know that the task will never last more than 3 ms, and by structuring your software in a rigorous way, you can make sure that all tasks will always be performed in a known, and sufficient, timespan. Which is pretty useful for safety-related stuff.
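The kind of proof described in this comment is usually done with schedulability analysis. As one illustration, here is the classic textbook response-time analysis for fixed-priority preemptive scheduling with each deadline equal to its period; it needs only each task's worst-case execution time (WCET) and period, which is exactly why a guaranteed upper bound like the 3 ms figure above matters. The task sets (in milliseconds) are hypothetical, and this is a standard general technique, not something ARM specifies for the R52.

```python
def response_times(tasks):
    """Worst-case response time of each task under fixed-priority preemption.

    `tasks` is a list of (wcet, period) pairs in priority order (highest
    first), with each deadline equal to its period. Returns a list of
    response times, or None for a task that can miss its deadline. Uses
    the standard recurrence
        R = C_i + sum over higher-priority j of ceil(R / T_j) * C_j
    iterated to a fixed point.
    """
    results = []
    for i, (c_i, t_i) in enumerate(tasks):
        r = c_i
        while True:
            # -(-r // t_j) is integer ceiling division for positive ints:
            # the number of times task j can be released within r.
            interference = sum(-(-r // t_j) * c_j for c_j, t_j in tasks[:i])
            r_next = c_i + interference
            if r_next > t_i:
                r = None  # response time exceeds deadline: not schedulable
                break
            if r_next == r:
                break  # fixed point reached: r is the worst case
            r = r_next
        results.append(r)
    return results


# Hypothetical task sets: (WCET, period) in ms, highest priority first.
print(response_times([(1, 5), (2, 10), (3, 20)]))  # [1, 3, 7]
print(response_times([(2, 4), (3, 6)]))            # [2, None]
```

The second set uses exactly 100% of the CPU, yet the analysis shows its lower-priority task can take 7 ms against a 6 ms deadline. That is caught on paper at design time, which only works if every WCET is a hard bound rather than an average.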

ravyne - Tuesday, September 20, 2016

The target applications for these types of processors are mostly safety critical (things crash or people die if it goes wrong) or timing critical (exact -- not just precise -- response time guaranteed or output is corrupted, often rapid but not always so). Things like controllers for industrial robotics, flight controls, your car's airbags, are good examples where even the smallest chance of non-determinism causing a system fault or too slow of a response is too much disaster to risk. On the timing-critical side, things like hard drive controllers are good examples of where the R architecture has a strong presence -- hard disk tracks are only getting tighter, and your data would be terribly corrupted if ever your drive's firmware made a timing mistake.

rgulde - Thursday, August 25, 2022

The question I have is related to the hypervisor and the CoreLink interconnect - I presume the SoC has a resource domain controller to favor R52 peripheral access.

--Does this also mean that, by configuration, the CoreLink interconnect (AXI or AMBA bus, AHB) cannot bandwidth-limit the R52 core?

--How about faults on the A side - is reload of code limited to A side? Do they load code independently?

--Does the R52 have a lower priority task to communicate data to be processed via some sort of shared memory? E.g., offloading AI neural nets to the A side core for analysis - thinking autonomous vehicle, or control feedback from the main A side integrator of many flight controls and inertial navigation (optical gyros) running at perhaps a slower rate.

michael2k - Monday, September 19, 2016

Redundancy is free with R.

ddriver - Monday, September 19, 2016

A is NOT realtime. Even with an RT kernel it doesn't come anywhere close to R for real time.

extide - Tuesday, September 20, 2016

Pretty much all hard drives and most SSD controllers are based on Cortex R series chips, BTW.