The AMD Radeon R9 Fury Review, Feat. Sapphire & ASUS

by Ryan Smith on July 10, 2015 9:00 AM EST

Dragon Age: Inquisition

Our RPG of choice for 2015 is Dragon Age: Inquisition, the latest game in the Dragon Age series of ARPGs. Beyond offering an expansive world that can easily challenge even the best of our video cards, Dragon Age also gives us an alternative take on EA/DICE’s Frostbite 3 engine, which powers both this game and Battlefield 4.

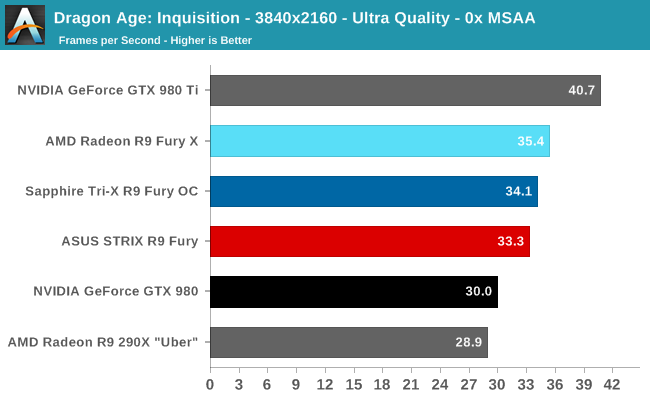

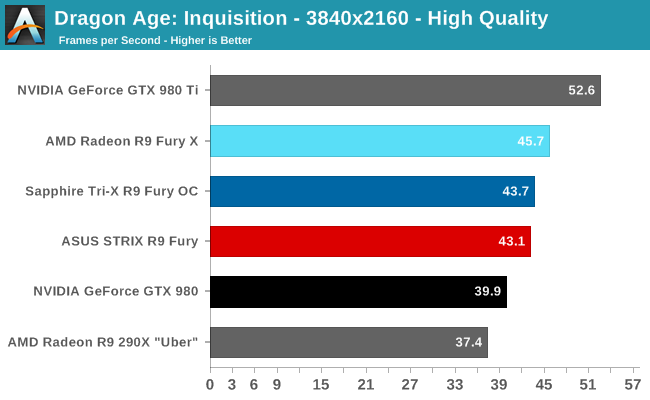

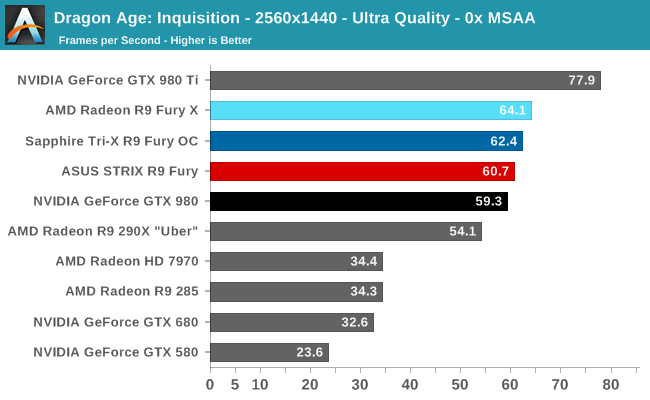

Dragon Age is another solid win for AMD at 4K, with the R9 Fury taking an 8-11% lead over the GTX 980. However, this is also a game that is better played at 1440p than at 4K on the R9 Fury, and at 1440p that lead shrinks to just 2%. At the very least, the R9 Fury can claim to be the lowest-end card able to crack 60fps at 1440p, a feat the GTX 980 falls just short of.

288 Comments

FlushedBubblyJock - Wednesday, July 15, 2015

Oh, gee, forgot, it's not amd's fault ... it was "developers and access" which is not amd's fault, either... of course...OMFG

redraider89 - Monday, July 20, 2015

What's your excuse for being such an idiotic, despicable and ugly intel/nvidia fanboy? I don't know, maybe your parents? Somewhere you went wrong.

OldSchoolKiller1977 - Sunday, July 26, 2015

I am sorry and NVIDIA fan boys resort to name calling.... what was it that you said and I quote "Hypocrite" :)

redraider89 - Monday, July 20, 2015

Your problem is deeper than just that you like intel/nvidia since you apparently hate people who don't like those, and ONLY because they like something different than you do.

ant6n - Saturday, July 11, 2015

A third way to look at it is that maybe AMD did it right. Let's say the chip is built from 80% stream processors (by area), the most redundant elements. If some of those functional elements fail during manufacture, they can disable them and sell it as the cheaper card. If something in the other 20% of the chip fails, the whole chip may be garbage. So basically you want a card such that if all the stream processors are functional, the other 20% become the bottleneck, whereas if some of the stream processors fail and they have to sell it as a simple Fury, then the stream processors become the bottleneck.

thomascheng - Saturday, July 11, 2015

That is probably AMD's smart play. Fury was always the intended card. Perfect cards will be the X and perhaps less perfect cards will be the Nano.

FlushedBubblyJock - Thursday, July 16, 2015

"fury was always the intended card"

ROFL

amd fanboy out much ?

I mean it is unbelievable, what you said, and that you said it.

theduckofdeath - Friday, July 24, 2015

Just shut up, Bubby.

akamateau - Tuesday, July 14, 2015

Anand was running DX12 benchmarks last spring. When they compared Radeon 290x to GTX 980 Ti nVidia ordered them to stop. That is why no more DX12 benchmarks have been run.

Intel and nVidia are at a huge disadvantage with DX12 and Mantle.

The reason:

AMD IP: Asynchronous Shader Pipelines and Asynchronous Compute Engines.

FlushedBubblyJock - Wednesday, July 15, 2015

We saw mantle benchmarks so your fantasy is a bad amd fanboy delusion.