The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Civilization: Beyond Earth

Shifting gears from action to strategy, we have Civilization: Beyond Earth, the latest in the Civilization series of strategy games. Civilization is not quite as GPU-demanding as some of our action games, but at Ultra quality it can still pose a challenge for even high-end video cards. Meanwhile, as the first Mantle-enabled strategy title, Civilization gives us an interesting look into low-level API performance on larger scale games, along with a look at developer Firaxis’s use of split frame rendering with Mantle to reduce latency rather than to improve framerates.

Unlike Battlefield 4 where we needed to switch back to DirectX for performance reasons on the R9 Fury X, AMD’s latest card still holds up rather well on Mantle here, probably due to the fact that Civilization is a newer game. Though not drawn in this chart, what we find is that AMD loses a frame or two per second for running Mantle, but in return they see far, far better minimums (more on that later).

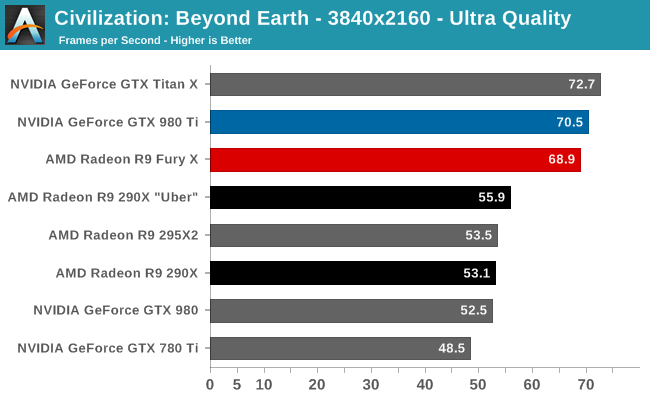

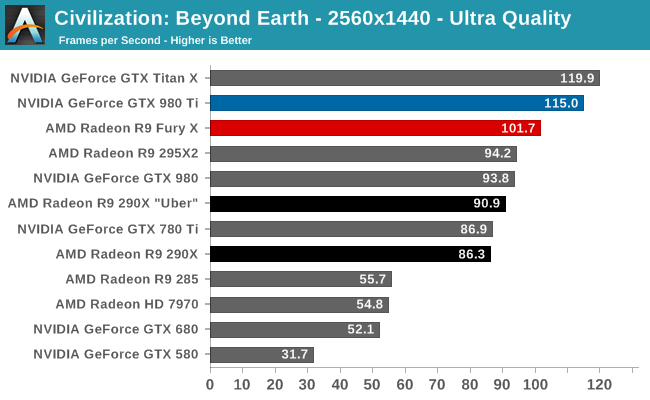

Overall then the R9 Fury X looks pretty good at 4K. Even at Ultra quality it can deliver a better than 60fps average and is within 2% of the GTX 980 Ti. On the other hand AMD struggles a bit more at 1440p, where the absolute framerate is still rather high, but relative to the GTX 980 Ti it’s now an 11% performance gap. This being a Mantle game, the fact that AMD does fall behind is a bit surprising, as at a high level they should be enjoying the CPU benefits of the low-level API. We’ll revisit 1440p performance a bit later on, but this is going to be a recurring quirk for AMD, and a detriment for 1440p 144Hz monitor owners.

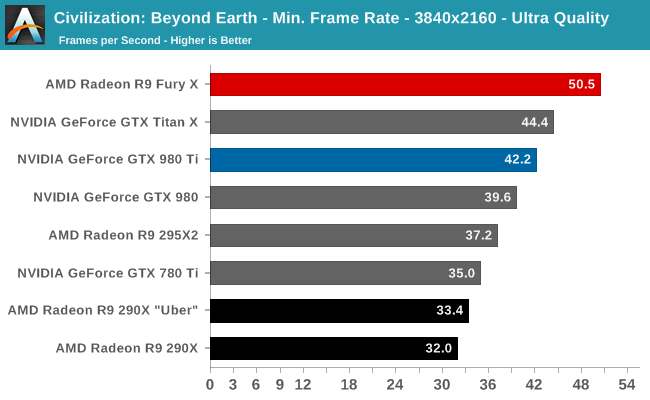

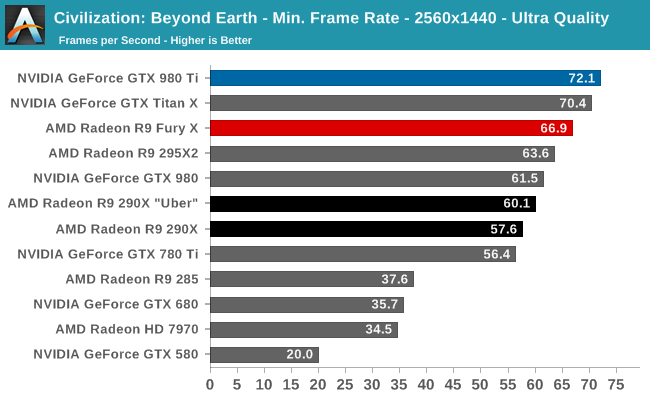

The bigger advantage of Mantle is really the minimum framerates, and here the R9 Fury X soars. At 4K the R9 Fury X delivers a minimum framerate of 50.5fps, some 20% better than the GTX 980 Ti. Both cards do well enough here, but it goes without saying that this is a very distinct difference, and one that is well in AMD’s favor. The only downside for AMD here is that they can’t keep this advantage at 1440p, where they go back to trailing the GTX 980 Ti in minimum framerates by 7%.
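As a quick sanity check on those 4K minimums, the quoted lead can be turned back into an implied GTX 980 Ti figure. The sketch below is only back-of-the-envelope arithmetic: the 50.5fps result and the roughly 20% lead come from the text above, while the function names are made up for illustration and the GTX 980 Ti value is derived from the rounded lead, not read off the chart.

```python
# Back-of-the-envelope check of the 4K minimum-framerate comparison.
# Only the 50.5 fps figure and the "some 20%" lead come from the text;
# the GTX 980 Ti value below is implied by those two numbers, not measured.

def relative_lead(a_fps: float, b_fps: float) -> float:
    """Return how much faster a is than b, as a fraction of b."""
    return a_fps / b_fps - 1.0

def implied_baseline(a_fps: float, lead: float) -> float:
    """Return the framerate b that a leads by the given fraction."""
    return a_fps / (1.0 + lead)

fury_x_min_4k = 50.5   # fps, from the text
quoted_lead = 0.20     # "some 20%", so treat this as approximate

gtx_980_ti_min_4k = implied_baseline(fury_x_min_4k, quoted_lead)
print(f"Implied GTX 980 Ti minimum: {gtx_980_ti_min_4k:.1f} fps")
print(f"Round-trip lead: {relative_lead(fury_x_min_4k, gtx_980_ti_min_4k):.0%}")
```

Because the 20% figure is rounded, the implied value is only good to within a frame or so; the chart remains the authoritative number.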

On that note I do have one concern here with AMD’s support plans for Mantle. Mainly I’m worried that as well as the R9 Fury X does here, there’s a risk Mantle may stop working in the future. The GCN 1.2 based R9 285 can’t use the Mantle path at all (it crashes), and the R9 Fury X is not all that different in architecture.

458 Comments


TallestJon96 - Sunday, July 5, 2015

This card and the 980 Ti meet two interesting milestones in my mind. First, this is the first time 1080p isn't even considered. Pretty cool to be at the point where 1080p is considered a bit of a low resolution for high end cards.

Second, it's the point where we have single cards that can play games at 4K, with higher graphical settings, and have better performance than a PS4. So at this point, if a PS4 is playable, then 4K gaming is playable.

It's great to see higher and higher resolutions.

XtAzY - Sunday, July 5, 2015

Geez these benchies are making my 580 look ancient.

MacGyver85 - Sunday, July 5, 2015

"Idle power does not start things off especially well for the R9 Fury X, though it’s not too poor either. The 82W at the wall is a distinct increase over NVIDIA’s latest cards, and even the R9 290X. On the other hand the R9 Fury X has to run a CLLC rather than simple fans. Further complicating factors is the fact that the card idles at 300MHz for the core, but the memory doesn’t idle at all. HBM is meant to have rather low power consumption under load versus GDDR5, but one wonders just how that compares at idle."

I'd like to see you guys post power consumption numbers with power to the pump cut at idle, to answer the questions you pose. I'm pretty sure the card is competitive without the pump running (but still with the fan, to have an equal comparison). If not, it will give us more insight into what improvements AMD can make to HBM in the future with regard to power consumption. But I'd be very surprised if they haven't dealt with that during the design phase. After all, power consumption is THE defining limit for graphics performance.

Oxford Guy - Sunday, July 5, 2015

Idle power consumption isn't the defining limit. The article already said that the cooler keeps the temperature low while also keeping noise levels in check. The result of keeping the temperature low is that AMD can more aggressively tune for performance per watt.

Oxford Guy - Sunday, July 5, 2015

This is a gaming card, not a card for casuals who spend most of their time with the GPU idling.

Oxford Guy - Sunday, July 5, 2015

The other point, which wasn't really made in the article, is that the idle noise is higher, but consider how many GPUs exhaust their heat into the case. That means higher case fan noise, which could cancel out the idle noise difference. This card's radiator can be set to exhaust directly out of the case.

mdriftmeyer - Sunday, July 5, 2015

It's an engineering card as much as it is for gaming. It's a great solid modeling card with OpenCL. The way AMD is building its driver foundation will pay off big in the next quarter.

Nagorak - Monday, July 6, 2015

I don't know that I agree about that. Even people who game a lot probably use their computer for other things and it sucks to be using more watts while idle. That being said, the increase is not a whole lot.

Oxford Guy - Thursday, July 9, 2015

Gaming is a luxury activity. People who are really concerned about power usage would, at the very least, stick with a low-wattage GPU like a 750 Ti or something and turn down the quality settings. Or, if you really want to be green, don't do 3D gaming at all.

MacGyver85 - Wednesday, July 15, 2015

That's not really true. I don't mind my gfx card pulling a lot of power while I'm gaming. But I want it to sip power when it's doing nothing. And since any card spends most of its time idling, idling is actually very important (if not most important) in overall (yearly) power consumption.

Btw, I never said that idle power consumption is the defining limit; I said power consumption is the defining limit. It's a given that any watt you save while idling is generally a watt of extra headroom when running at full power. The lower the baseline load, the more room for actual, functional (graphics) power consumption. And as it turns out, I was right in my assumption that the actual graphics card's idle power consumption, minus the cooler pump, is competitive with NVIDIA's.