The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Far Cry 4

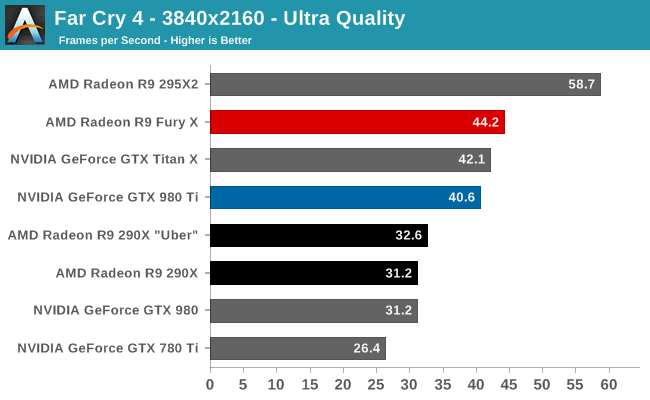

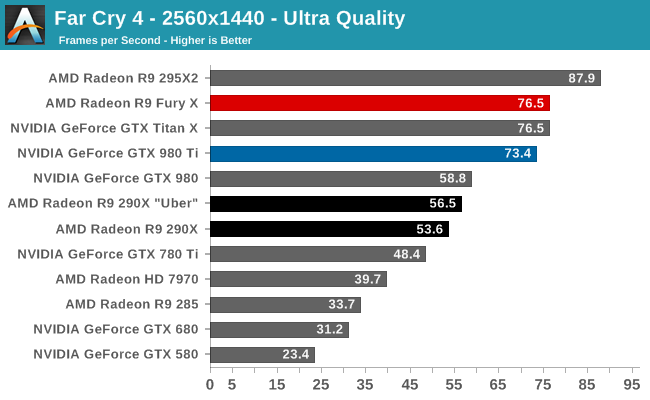

The next game in our 2015 GPU benchmark suite is Far Cry 4, Ubisoft’s Himalayan action game. Much like Crysis 3, Far Cry 4 can be quite tough on GPUs, especially at Ultra settings, thanks to the game’s expansive environments.

Like The Talos Principle, this is another game that treats AMD well. The R9 Fury X doesn’t just beat the GTX 980 Ti at 4K Ultra; it beats the GTX Titan X as well. Even 1440p Ultra isn’t too shabby, with a smaller gap but nonetheless the same outcome.

Overall, what we find is that the R9 Fury X has a 9% lead at 4K Ultra and a 4% lead at 1440p Ultra, making this one of the few games where AMD takes the lead at 1440p. However, something interesting happens if we run at 4K with lower quality settings: that lead evaporates very quickly, shifting to an NVIDIA lead of roughly the same size. At this time I don’t have a good explanation for this, other than to say that whatever is going on at Ultra is clearly very different from what happens at Medium quality, and it favors AMD.

Finally, the performance gains over the R9 290X are around average. At 4K and 1440p Ultra the R9 Fury X picks up 35%; at 4K Medium that shrinks to 30%.

458 Comments

looncraz - Friday, July 3, 2015 - link

We don't yet know how the Fury X will overclock with unlocked voltages.

SLI is almost just as unreliable as CF, ever peruse the forums? That, and quite often you can get profiles from the wild wired web well before the companies release their support - especially on AMD's side.

chizow - Friday, July 3, 2015 - link

@looncraz

We do know Fury X is an exceptionally poor overclocker at stock and already uses more power than the competition. Whose fault is it that we don't have proper overclocking capabilities when AMD was the one who publicly claimed this card was an "Overclocker's Dream?" Maybe they meant you could Overclock it, in your Dreams?

SLI is not as unreliable as CF, Nvidia actually offers timely updates on Day 1 and works with the developers to implement SLI support. In cases where there isn't a Day 1 profile, SLI has always provided more granular control over SLI profile bits vs. AMD's black box approach of a loadable binary, or wholesale game profile copies (which can break other things, like AA compatibility bits).

silverblue - Friday, July 3, 2015 - link

No, he did actually mention the 980Ti's excellent overclocking ability. Conversely, at no point did he mention Fury X's overclocking ability, presumably because there isn't any.

Refuge - Friday, July 3, 2015 - link

He does mention it, and does say that it isn't really possible until they get modified bios with unlocked voltages.

e36Jeff - Thursday, July 2, 2015 - link

first off, its 81W, not 120W (467-386). Second, unless you are running furmark as your screen saver, its pretty irrelevant. It merely serves to demonstrate the maximum amount of power the GPU is allowed to use (and given that the 980 Ti's is 1W less than in gaming, it indicates it is being artificially limited because it knows its running furmark).

The important power number is the in game power usage, where the gap is 20W.

Ryan Smith - Thursday, July 2, 2015 - link

There is no "artificial" limiting on the GTX 980 Ti in FurMark. The card has a 250W limit, and it tends to hit it in both games and FurMark. Unlike the R9 Fury X, NVIDIA did not build a bunch of thermal/electrical headroom into the reference design.

kn00tcn - Thursday, July 2, 2015 - link

because furmark is normal usage right!? hbm magically lowers the gpu core's power right!? wtf is wrong with you

nandnandnand - Thursday, July 2, 2015 - link

AMD's Fury X has failed. 980 Ti is simply better.

In 2016 NVIDIA will ship GPUs with HBM version 2.0, which will have greater bandwidth and capacity than these HBM cards. AMD will be truly dead.

looncraz - Friday, July 3, 2015 - link

You do realize HBM was designed by AMD with Hynix, right? That is why AMD got first dibs.

Want to see that kind of innovation again in the future? You best hope AMD sticks around, because they're the only ones innovating at all.

nVidia is like Apple, they're good at making pretty looking products and throwing the best of what others created into making it work well, then they throw their software into the mix and call it a premium product.

Intel hasn't innovated on the CPU front since the advent of the Pentium 4. Core * CPUs are derived from the Pentium M, which was derived from the Pentium Pro.

Kutark - Friday, July 3, 2015 - link

Man you are pegging the hipster meter BIG TIME. Get serious. "Intel hasn't innovated on the CPU front since the advent of the Pentium 4..." That has to be THE dumbest shit i've read in a long time.

Say what you will about nvidia, but maxwell is a pristinely engineered chip.

While i agree with you that AMD sticking around is good, you can't be pissed at nvidia if they become a monopoly because AMD just can't resist buying tickets on the fail train...