The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Power Efficiency: Putting A Lid On Fiji

Last, but certainly not least, before ending our tour of the Fiji GPU we need to talk about power.

Power is, without question, AMD’s biggest deficit going into the launch of R9 Fury X. With Maxwell 2, NVIDIA took what they learned from Tegra and stepped up their power efficiency in a major way, which allowed them not only to outperform AMD’s Hawaii GPUs but to do so while consuming significantly less power. In this 4th year of 28nm, the power efficiency gains that typically come from a smaller process are still another year off, so both AMD and NVIDIA have needed to invest in power efficiency at an architectural level.

The power situation on Fiji is in turn a bit of a mixed bag, but largely positive for AMD. The good news is that AMD has taken power efficiency very seriously for Fiji and has made a number of changes to bring it more in line with what NVIDIA has achieved. As a result, R9 Fury X is rated for the same 275W Typical Board Power (TBP) as the R9 390X, and just 25W more than R9 290X. The bad news, as we’ll see in our benchmarks, is that AMD won’t quite match NVIDIA’s power efficiency numbers; but they had a significant gap to close, and they have done a very admirable job in coming this far.

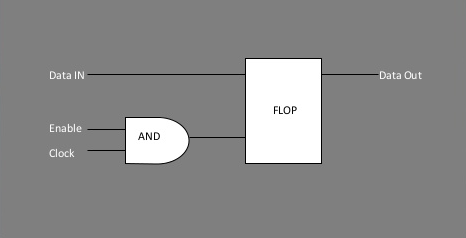

A basic implementation of clock gating. Image Source: Mahesh Dananjaya - Clock Gating

So what has AMD done to better control power consumption? Perhaps the biggest change is to AMD’s clock gating technology, which now implements multi-level clock gating throughout the chip in order to better cut off parts of the GPU that are not in use and thereby reduce their power consumption. With clock gating, the clock signal to a functional unit is turned off, leaving the unit powered on but not doing any work or switching transistors. This allows for significant power savings even without turning the unit off via power gating (and without the time-cost of bringing it back up). Even turning off a functional unit for a couple of dozen cycles, say while the geometry engines wait on the shaders to complete their work, brings down power consumption in load states as well as the more obvious idle states.
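To make the clock gating point concrete, here is a minimal Python sketch built on the textbook CMOS dynamic power relation, P = α·C·V²·f. It assumes that gating a unit's clock simply zeroes its switching activity for the gated fraction of cycles while leaving its leakage untouched, which is the distinction from power gating described above. The function names and every numeric input are hypothetical illustrations, not AMD figures.

```python
# Minimal clock gating model, assuming the textbook CMOS dynamic power equation
# P = alpha * C * V^2 * f. Function names and all inputs are hypothetical.


def dynamic_power(alpha: float, capacitance_f: float, voltage_v: float, freq_hz: float) -> float:
    """Switching power in watts for a unit with activity factor alpha."""
    return alpha * capacitance_f * voltage_v ** 2 * freq_hz


def average_unit_power(gated_fraction: float, alpha: float, capacitance_f: float,
                       voltage_v: float, freq_hz: float, leakage_w: float) -> float:
    """Average power of a unit whose clock is gated for gated_fraction of cycles.

    While gated, nothing switches, so dynamic power is zero; the unit stays
    powered, however, so leakage is paid the whole time (unlike power gating).
    """
    if not 0.0 <= gated_fraction <= 1.0:
        raise ValueError("gated_fraction must be between 0 and 1")
    switching_w = (1.0 - gated_fraction) * dynamic_power(alpha, capacitance_f, voltage_v, freq_hz)
    return switching_w + leakage_w


# Hypothetical unit: alpha=0.2, 1 nF switched capacitance, 1.0 V, 1050 MHz, 50 mW leakage.
ungated = average_unit_power(0.0, 0.2, 1e-9, 1.0, 1.05e9, 0.05)
gated_30 = average_unit_power(0.3, 0.2, 1e-9, 1.0, 1.05e9, 0.05)
print(f"ungated: {ungated:.3f} W, gated 30% of cycles: {gated_30:.3f} W")
```

With these placeholder inputs the unit drops from about 0.26W ungated to about 0.197W when its clock is gated 30% of the time. The 0.05W leakage term never goes away, which is the part only power gating can remove.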

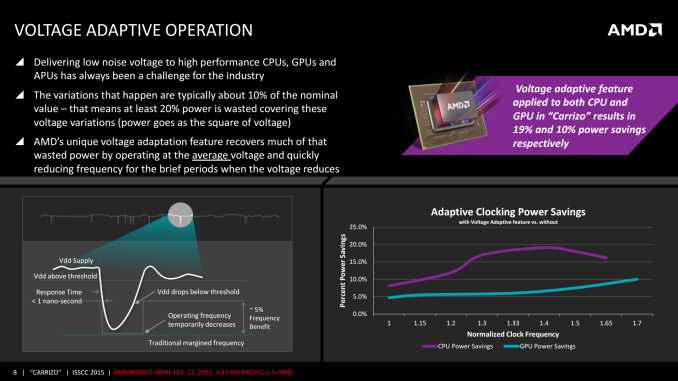

Meanwhile AMD has taken some lessons from their recently launched Carrizo APU (which is also based on GCN 1.2 and designed around improving power efficiency) in order to boost power efficiency for Fiji. What AMD has disclosed to us is that the power flow for Fiji is based on what they’ve learned from the APUs, which in turn has allowed AMD to better control and map several aspects of Fiji’s voltage needs. Voltage adaptive operation (VAO), for example, allows AMD to use a lower voltage that’s closer to Fiji’s real voltage needs, reducing the power wasted by operating Fiji at a higher voltage than necessary. VAO essentially uses thinner voltage safeguards to accomplish this, pulling back the clockspeed momentarily if the supply voltage drops below Fiji’s operational requirements.
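A rough Python sketch of the two ideas behind VAO as described here: trimming the voltage guardband saves dynamic power in proportion to V², and when the supply droops below what the chip needs, the clock is briefly pulled back instead of relying on a fat guardband. Only the 1050MHz clock comes from the article; the fallback clock, the voltages, and the function names are assumptions for illustration, and AMD has not disclosed how its actual control loop works.

```python
# Hypothetical sketch of voltage adaptive operation (VAO): run with a thin
# voltage guardband and momentarily pull the clock back whenever the supply
# droops below what the chip needs at full speed. Only the 1050 MHz clock is
# from the article; the fallback clock and all voltages are made-up examples.

FULL_CLOCK_MHZ = 1050     # R9 Fury X maximum clock
FALLBACK_CLOCK_MHZ = 950  # hypothetical clock used during a droop


def relative_dynamic_power(v_new: float, v_old: float) -> float:
    """Dynamic power at a fixed clock scales with the square of voltage."""
    return (v_new / v_old) ** 2


def choose_clock(supply_v: float, required_v_at_full_clock: float) -> int:
    """Drop to the fallback clock while the supply sits below the requirement."""
    if supply_v < required_v_at_full_clock:
        return FALLBACK_CLOCK_MHZ
    return FULL_CLOCK_MHZ


# Trimming a hypothetical 1.25 V setting to 1.20 V:
print(f"{relative_dynamic_power(1.20, 1.25):.4f} of the original dynamic power")
# A brief droop to 1.18 V against a 1.20 V requirement triggers a pullback:
print(choose_clock(1.18, 1.20), "MHz")
```

With these made-up voltages, shaving the supply from 1.25V to 1.20V leaves about 92% of the original dynamic power (0.96² = 0.9216), roughly an 8% saving before counting any reduction in leakage.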

Similarly, AMD has also put a greater focus on the binning process to better profile chips before they leave the factory. This includes a tighter voltage/frequency curve (enabled by VAO) to cut down on wasted voltage, but it also includes new processes to better identify and compensate for leakage on a per-chip basis. Leakage is the eternal scourge of chip designers, and with 28nm it has only gotten worse. Even on the now highly mature process, leakage can still consume (or rather allow to escape) quite a bit of power if not controlled for. This is also one of the reasons that FinFETs will be so important in TSMC’s next-generation 16nm manufacturing process, as FinFETs cut down on leakage.

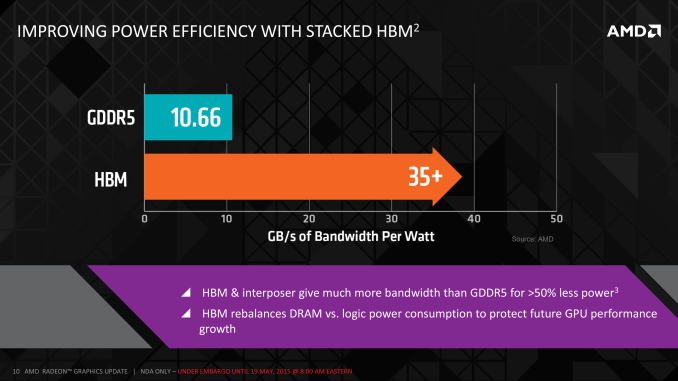

AMD’s third power optimization comes from the use of HBM, which along with its greater bandwidth also offers lower power consumption relative to even the 512-bit wide 5Gbps GDDR5 memory bus AMD used on R9 290X. On R9 290X AMD estimates that memory power consumption was 15-20% (37-50W) of their 250W TDP, largely due to the extensive PHYs required to handle the complicated bus signaling of GDDR5.

By AMD’s own metrics, HBM delivers better than 3x the bandwidth per watt of GDDR5, thanks to the simpler bus and lower operating voltage of 1.3V. Because AMD opted to spend some of their gains on increasing memory bandwidth as opposed to just power savings, the final power savings aren’t 3x; but by AMD’s estimates the amount of power they’re spending on HBM is around 15-20W, which has saved R9 Fury X around 20-30W of power relative to R9 290X. These are savings that AMD can simply keep, or, as in the case of R9 Fury X, partially spend on giving the card more power headroom for higher performance.
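AMD's memory power figures can be cross-checked with a few lines of Python. All inputs are the estimates quoted in the two paragraphs above; only the arithmetic is ours.

```python
# Back-of-the-envelope check of AMD's memory power estimates.
R9_290X_TDP_W = 250
GDDR5_SHARE = (0.15, 0.20)  # AMD: memory was 15-20% of R9 290X's TDP
HBM_POWER_W = (15, 20)      # AMD: HBM power on R9 Fury X

# GDDR5 memory power on R9 290X, roughly (37.5, 50.0) W
gddr5_w = tuple(R9_290X_TDP_W * share for share in GDDR5_SHARE)

# Smallest saving: least GDDR5 power minus most HBM power; largest: the reverse.
savings_w = (gddr5_w[0] - HBM_POWER_W[1], gddr5_w[1] - HBM_POWER_W[0])

print(f"GDDR5 on R9 290X: {gddr5_w[0]:.1f}-{gddr5_w[1]:.1f} W")
print(f"Implied HBM savings: {savings_w[0]:.1f}-{savings_w[1]:.1f} W")
```

The implied 17.5-35W range brackets AMD's stated 20-30W saving, so the quoted figures are at least internally consistent.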

The final element in AMD’s plan to improve energy efficiency on Fiji is a bit more brute-force but nonetheless important, and that’s temperature control. As our long-time readers may recall from the R9 290 (Hawaii) launch in 2013, with the reference R9 290X AMD favored a larger temperature gradient (and thus higher operating temperatures) in order to maximize the cooling efficiency of their reference cooler. The tradeoff was that they had to accept higher leakage as a result of the higher temperatures, though as AMD’s second-generation 28nm product they felt they had leakage under control.

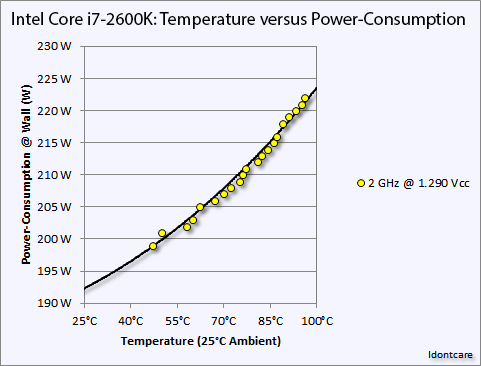

An example of the temperature versus power consumption principle on an Intel Core i7-2600K. Image Credit: AT Forums User "Idontcare"

But with R9 Fury X in particular and its large, overpowered closed-loop liquid cooler, AMD has gone in the opposite direction. AMD no longer needs to rely on temperature gradients to boost cooler performance, and as a result they’ve significantly dialed down the average operating temperature of the Fiji GPU in R9 Fury X in order to further mitigate leakage and reduce overall power consumption. Whereas R9 290X would go to 95C, R9 Fury X essentially tops out at 65C, as that’s the point after which it will start ramping up the fan speed rather than allow the GPU to get any warmer. This 30C reduction in GPU temperature undoubtedly saves AMD some power on leakage, and while the precise amount isn’t disclosed, leakage rises non-linearly with temperature, so the savings could be rather significant for Fiji.

To put this to the test, we did a bit of experimenting with Crysis 3 to look at power consumption over time. While the R9 Fury X doesn’t allow us to let it run any warmer, we are able to monitor power consumption at the start of the benchmark run when the card has just left idle at around 40C, and compare it to when the run is terminated at 65C.

| Crysis 3 Power Consumption | GPU Temperature | Power Consumption @ Wall |
| --- | --- | --- |
| Start Of Run | 40C | 388W |
| 15 Minutes, Equilibrium | 65C | 408W |

What we find is that Fury X’s power consumption increases by 20W at the wall between the start and the end of the run, despite the fact that the scene, the framerate, and the CPU usage are all unchanged. The roughly 18W difference after the PSU comes from the video card, whose power consumption increases with GPU temperature, plus a smaller bump from the approximately 100RPM increase in fan speed. Had AMD allowed Fury X to go to 83C (the same temperature as the GTX 980 Ti), it likely would have been closer to a 300W TBP card, and at 95C it would be higher yet, indicating just how important temperature controls are for AMD in getting the best energy efficiency possible out of Fiji.
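The Crysis 3 measurements can be worked through in Python as below. The 90% PSU efficiency is an assumption picked to reproduce the roughly 18W card-side figure, and the average watts-per-degree slope lumps the small fan speed increase in with leakage. Because leakage grows non-linearly with temperature, extending that slope beyond 65C gives a floor rather than an estimate.

```python
# Crysis 3 figures from the table; PSU efficiency is an assumption.
START_C, START_WALL_W = 40, 388
END_C, END_WALL_W = 65, 408
PSU_EFFICIENCY = 0.90  # assumed; chosen to match the ~18W card-side figure

wall_delta_w = END_WALL_W - START_WALL_W      # 20 W measured at the wall
card_delta_w = wall_delta_w * PSU_EFFICIENCY  # estimated change at the card
# Average slope over 40-65C; it includes the small fan-speed bump, not just leakage.
w_per_degree = card_delta_w / (END_C - START_C)


def linear_floor_extra_w(target_c: float) -> float:
    """Extra card power above the 65C level if the 40-65C slope simply continued.

    Leakage rises non-linearly with temperature, so treat this as a lower bound.
    """
    return (target_c - END_C) * w_per_degree


print(f"card-side delta: {card_delta_w:.1f} W ({w_per_degree:.2f} W/C)")
for target in (83, 95):
    print(f"{target}C: at least +{linear_floor_extra_w(target):.1f} W")
```

Even this linear floor adds about 13W at 83C and about 22W at 95C on top of the 65C figure; a non-linear leakage curve would push those numbers higher still.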

Last but not least on the subject of power consumption, we need to quickly discuss the driver situation. AMD tells us that for R9 Fury X they were somewhat conservative in how they adjusted clockspeeds, favoring performance over power savings. As a result, R9 Fury X doesn’t downclock as often as it could, practically running at its maximum 1050MHz clockspeed whenever a real load is put on it so that it offers the best performance possible should it be needed.

What AMD is telling us right now is that future drivers for Fiji products will be better tuned than what we’re seeing on Fury X, such that those parts won’t run at their full load clocks quite so aggressively. The nature of this claim invites a wait-and-see approach, but based on what we’re seeing with R9 Fury X so far, it’s not an unrealistic goal for AMD. More aggressive power control and throttling not only improves power consumption under light loads, but also stands to improve it under full load. GCN can switch voltages in as little as 10 microseconds, or hundreds of times in the span of time it takes for a GPU to render a single frame, so there are opportunities for the GPU to take short breaks whenever a bottleneck occurs in the rendering process and the card’s full 1050MHz isn’t required for a thousand cycles or so.
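For a sense of scale, the following Python snippet converts the 10 microsecond voltage-switch time and the 1050MHz clock into per-frame terms. Both figures come from the paragraph above; the 60 fps frame rate is an illustrative assumption.

```python
# Timing arithmetic for GCN voltage switching.
VOLTAGE_SWITCH_S = 10e-6  # 10 microseconds per voltage change (from the article)
CLOCK_HZ = 1050e6         # R9 Fury X maximum clock (from the article)


def switches_per_frame(fps: float) -> float:
    """How many 10 us voltage transitions fit into one frame at a given frame rate."""
    return (1.0 / fps) / VOLTAGE_SWITCH_S


def cycles_to_seconds(cycles: int, clock_hz: float = CLOCK_HZ) -> float:
    """Wall-clock duration of a number of GPU cycles."""
    return cycles / clock_hz


print(f"{switches_per_frame(60):.0f} voltage switches fit in one 60 fps frame")
print(f"1000 cycles at 1050 MHz last {cycles_to_seconds(1000) * 1e6:.2f} us")
```

At 60 fps roughly 1,667 switches fit into a single frame, so "hundreds of times" per frame is, if anything, conservative. For scale, a thousand cycles at 1050MHz lasts a little under one microsecond.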

On that note, AMD has also told us to keep our eyes peeled for what they deliver with the R9 Fury (vanilla). Without a closed-loop liquid cooler, the R9 Fury will not have the same overbuilt cooling apparatus available, and as a result it sounds like AMD will take a more aggressive approach, in line with the above, to better control power consumption.

458 Comments


testbug00 - Sunday, July 5, 2015

You don't need architecture improvements to use DX12/Vulkan/etc. The APIs merely allow you to implement them over DX11 if you choose to. You can write a DX12 game without optimizing for any GPUs (although not doing so for GCN, given the consoles are GCN, would be a tad silly).

If developers are aiming to put low-level stuff in whenever they can, then the issue becomes that, due to AMD's "GCN everywhere" approach, developers may just start coding for PS4, porting that code to Xbox DX12 and then porting that to PC with higher-quality textures/better shadows/effects. In that case Nvidia could suffer massive performance deficits relative to AMD due to not getting the same amount of extra performance from DX12.

Don't see that happening in the next 5 years. At least, not with most games that are console+PC and need huge performance. You may see it in a lot of Indie/small studio cross platform games however.

RG1975 - Thursday, July 2, 2015

AMD is getting there, but they still have a little way to go to bring us a new "9700 Pro". That card devastated all Nvidia cards back then. That's what I'm waiting for from AMD before I switch back.

Thatguy97 - Thursday, July 2, 2015

would you say amd is now the "geforce fx 5800"?

piroroadkill - Thursday, July 2, 2015

Everyone who bought a Geforce FX card should feel bad, because the AMD offerings were massively better. But now that AMD is close to NVIDIA, it's still time to rag on AMD, huh?

That said, of course if I had $650 to spend, you bet your ass I'd buy a 980 Ti.

Thatguy97 - Thursday, July 2, 2015

oh believe me, i remember they felt bad lol. but i'm not ragging on amd, nvidia just stole their thunder with the 980 ti

KateH - Thursday, July 2, 2015

C'mon, Fury isn't even close to the Geforce FX level of fail. It's really hard to overstate how bad the FX5800 was, compared to the Radeon 9700 and even the Geforce 4600Ti.

The Fury X wins some 4K benchmarks, the 980Ti wins some. The 980Ti uses a bit less power but the Fury X is cooler and quieter.

Geforce FX level of fail would be if the Fury X was released 3 months from now to go up against the 980Ti with 390X levels of performance and an air cooler.

Thatguy97 - Thursday, July 2, 2015

To be fair the 5950 ultra was actually decent

Morawka - Thursday, July 2, 2015

you're understating nvidia's scores.. they won 90% of all benchmarks, not just "some". a full 120W more power under furmark load and they are using HBM!!

looncraz - Thursday, July 2, 2015

Furmark power load means nothing; it is just a good way to stress test and see how much power the GPU is capable of pulling in a worst-case scenario and how it behaves in that scenario.

While gaming, the difference is minuscule and no one will care one bit.

Also, they didn't win 90% of the benchmarks at 4K, though they certainly did at 1440. However, the real world isn't that simple. A 10% performance difference in GPUs may as well be zero difference; there are pretty much no game features which only require a 10% higher performance GPU to use... or even 15%.

As for the value argument, I'd say they are about even. The Fury X will run cooler and quieter, take up less space, and will undoubtedly improve to parity or beyond the 980Ti in performance with driver updates. For a number of reasons, the Fury X should actually age better, as well. But that really only matters for people who keep their cards for three years or more (which most people usually do). The 980Ti has a RAM capacity advantage and an excellent - and known - overclocking capacity and currently performs unnoticeably better.

I'd also expect two Fury X cards to outperform two 980Ti cards with XFire currently having better scaling than SLI.

chizow - Thursday, July 2, 2015

The differences in minimums aren't minuscule at all, and you also seem to be discounting the fact that the 980Ti overclocks much better than Fury X. Sure, XDMA CF scales better when it works, but AMD has shown time and again that they're completely unreliable for timely CF fixes for popular games, to the point that CF is clearly a negative for them right now.