The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

The GTX Titan X represents a very interesting intersection for NVIDIA, crossing Maxwell’s unparalleled power efficiency with GTX Titan’s flagship-level performance goals and similarly high power allowance. This gives us a chance to see how well Maxwell holds up when pushed to the limit, in the form of a 601mm² GPU with a 250W TDP.

| GeForce GTX Titan X Voltages | | |
|---|---|---|
| GTX Titan X Boost Voltage | GTX 980 Boost Voltage | GTX Titan X Idle Voltage |
| 1.162V | 1.225V | 0.849V |

Starting off with voltages, based on our samples we find that NVIDIA has been rather conservative in their voltage allowance, presumably to keep power consumption down. With the highest stock boost bin hitting a voltage of just 1.162V, GTX Titan X operates notably lower on the voltage curve than the GTX 980 (1.225V). This goes hand-in-hand with GTX Titan X’s stock clockspeeds, which are around 100MHz lower than GTX 980’s.
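To put the voltage gap in perspective, the sketch below applies the textbook first-order CMOS approximation that dynamic power scales with C·V²·f. It assumes, purely for illustration, equal switched capacitance and equal clockspeed, and it ignores leakage entirely; `dynamic_power_ratio` is a hypothetical helper, not anything NVIDIA publishes.

```python
# Hypothetical helper: first-order CMOS dynamic power model, P_dyn ~ C * V^2 * f.
# Capacitance (C) and clockspeed (f) are assumed equal, so only voltage matters.
def dynamic_power_ratio(v_a: float, v_b: float) -> float:
    """Return P_dyn(v_a) / P_dyn(v_b) with capacitance and frequency held fixed."""
    return (v_a / v_b) ** 2


TITAN_X_BOOST_V = 1.162  # highest stock boost bin, GTX Titan X (from the table)
GTX_980_BOOST_V = 1.225  # highest stock boost bin, GTX 980 (from the table)

ratio = dynamic_power_ratio(TITAN_X_BOOST_V, GTX_980_BOOST_V)
voltage_gap = 1 - TITAN_X_BOOST_V / GTX_980_BOOST_V

print(f"voltage {voltage_gap:.1%} lower, dynamic power {1 - ratio:.1%} lower at equal clocks")
```

Under those assumptions, boost voltages about 5% apart work out to roughly 10% less dynamic power per clock, which is consistent with (though not proof of) the power-saving motive suggested above.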

| GeForce GTX Titan X Average Clockspeeds | | |
|---|---|---|
| Game | GTX Titan X | GTX 980 |
| Max Boost Clock | 1215MHz | 1252MHz |
| Battlefield 4 | 1088MHz | 1227MHz |
| Crysis 3 | 1113MHz | 1177MHz |
| Mordor | 1126MHz | 1164MHz |
| Civilization: BE | 1088MHz | 1215MHz |
| Dragon Age | 1189MHz | 1215MHz |
| Talos Principle | 1126MHz | 1215MHz |
| Far Cry 4 | 1101MHz | 1164MHz |
| Total War: Attila | 1088MHz | 1177MHz |
| GRID Autosport | 1151MHz | 1190MHz |

Speaking of clockspeeds, our average clockspeeds for GTX Titan X and GTX 980 show just why the 50% larger GM200 GPU only delivers an average performance advantage of 35% for the GTX Titan X. While the max boost bins of both cards are over 1.2GHz, the GTX Titan X has to back off far more often to stay within its power and thermal limits. The final clockspeed difference between the two cards depends on the game in question, but in most games we’re looking at a real-world clockspeed deficit of 50-100MHz for GTX Titan X, with the full range running from 26MHz in Dragon Age to 139MHz in Battlefield 4.
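The clockspeed table can be summarized in a few lines of Python. This is a minimal sketch: it computes the per-game deficit, then applies a deliberately naive model in which throughput scales with CUDA core count times average clockspeed. The core counts (3072 for GTX Titan X, 2048 for GTX 980) come from NVIDIA's published specifications rather than this page, and the helper names are hypothetical.

```python
# Average clockspeeds (MHz) from the table above: (GTX Titan X, GTX 980)
AVG_CLOCKS_MHZ = {
    "Battlefield 4": (1088, 1227),
    "Crysis 3": (1113, 1177),
    "Mordor": (1126, 1164),
    "Civilization: BE": (1088, 1215),
    "Dragon Age": (1189, 1215),
    "Talos Principle": (1126, 1215),
    "Far Cry 4": (1101, 1164),
    "Total War: Attila": (1088, 1177),
    "GRID Autosport": (1151, 1190),
}

# Assumed from NVIDIA specs: 3072 CUDA cores (GM200) vs 2048 (GM204), i.e. 1.5x.
CORE_RATIO = 3072 / 2048


def clock_deficits(clocks):
    """Per-game clockspeed deficit of GTX Titan X versus GTX 980, in MHz."""
    return {game: gtx980 - titan_x for game, (titan_x, gtx980) in clocks.items()}


def naive_speedup(clocks, core_ratio):
    """Naive model: throughput scales with (core count x average clockspeed).

    Ignores memory, ROPs, CPU limits and everything else; a rough first-order estimate.
    """
    titan_avg = sum(t for t, _ in clocks.values()) / len(clocks)
    gtx980_avg = sum(g for _, g in clocks.values()) / len(clocks)
    return core_ratio * titan_avg / gtx980_avg


deficits = clock_deficits(AVG_CLOCKS_MHZ)
mean_deficit = sum(deficits.values()) / len(deficits)

print(f"deficit range: {min(deficits.values())}-{max(deficits.values())} MHz, "
      f"mean {mean_deficit:.0f} MHz")
print(f"naive expected advantage: {naive_speedup(AVG_CLOCKS_MHZ, CORE_RATIO) - 1:.0%}")
```

With these inputs the deficit spans 26-139MHz with a mean of about 75MHz, and the naive model predicts roughly a 41% advantage, still above the 35% measured. Because the model ignores everything except shader count and clocks, the remaining gap is best read as a pointer to other limits rather than an explanation of them.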

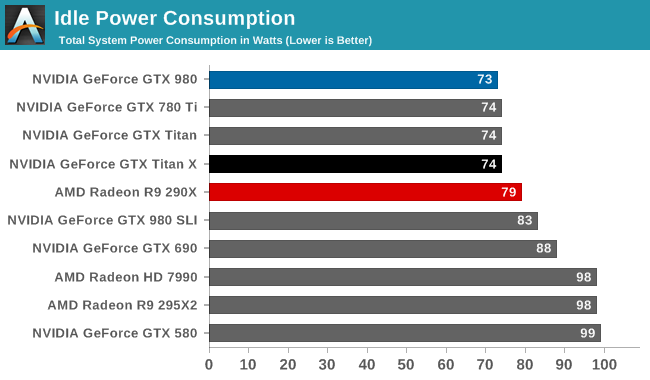

Starting off with idle power consumption, the GTX Titan X comes out strong as expected. Even at 8 billion transistors, NVIDIA is able to keep power consumption at idle very low, with all of our recent single-GPU NVIDIA cards coming in at 73-74W at the wall.

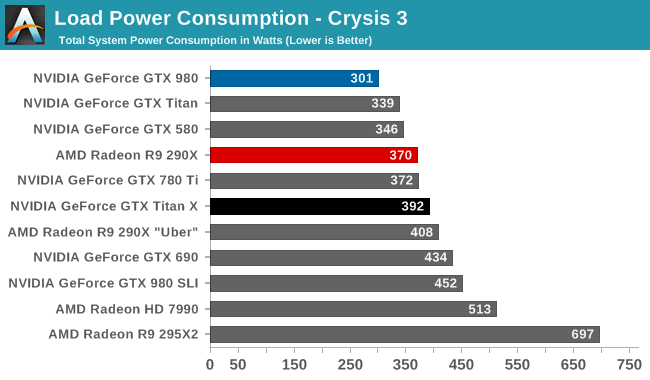

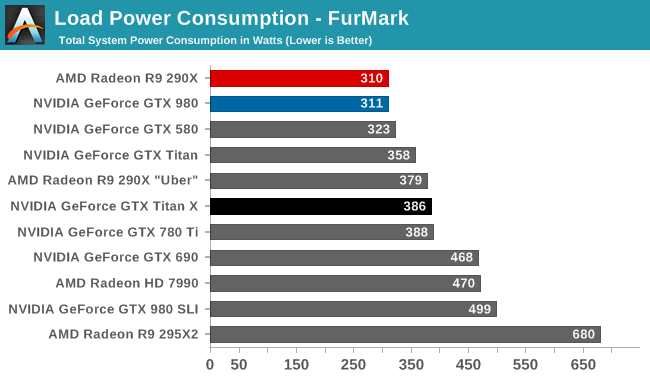

Meanwhile load power consumption for GTX Titan X is more or less exactly what we’d expect. With NVIDIA having nailed down their throttling mechanisms for Kepler and Maxwell, the GTX Titan X has a load power profile almost identical to the GTX 780 Ti, the closest equivalent GK110 card. Under Crysis 3 this manifests itself as a 20W increase in power consumption at the wall – generally attributable to the greater CPU load from GTX Titan X’s better GPU performance – while under FurMark the two cards are within 2W of each other.

Compared to the GTX 980 on the other hand, this is of course a sizable increase in power consumption. With a TDP difference on paper of 85W, the difference at the wall is an almost perfect match. GTX Titan X still offers Maxwell’s overall energy efficiency, delivering greatly superior performance for the power consumption, but this is a 250W card and it shows. Meanwhile the GTX Titan X’s power consumption also ends up being very close to the unrestricted R9 290X Uber, which in light of the Titan’s 44% 4K performance advantage further drives home the point about NVIDIA’s power efficiency lead at this time.

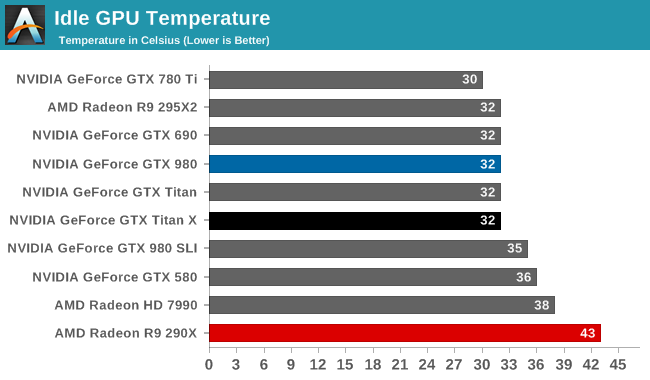

With the same Titan cooler and same idle power consumption, it should come as no surprise that the GTX Titan X offers the same idle temperatures as its GK110 predecessors: a relatively cool 32C.

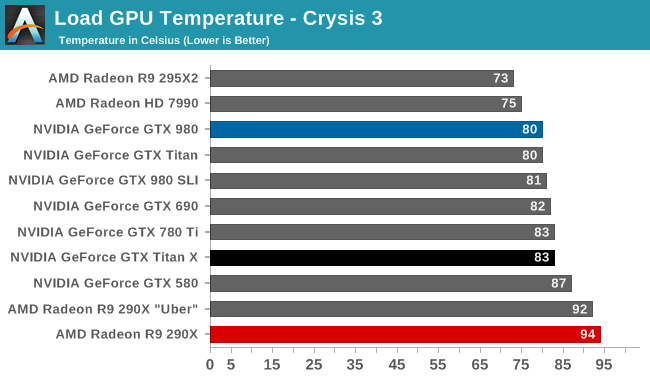

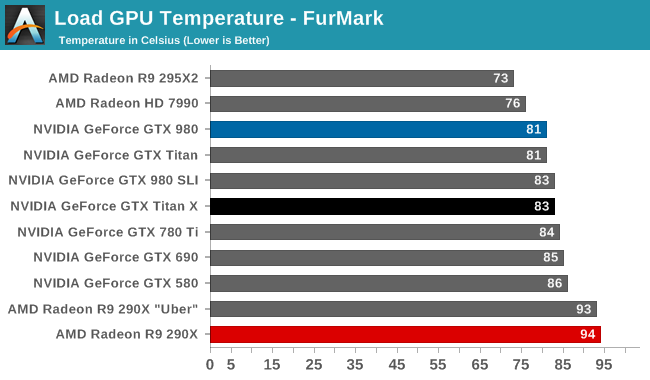

Moving on to load temperatures, the GTX Titan X has a stock temperature limit of 83C, just like the GTX 780 Ti. Consequently this is exactly where we see the card top out at under both FurMark and Crysis 3. 83C does lead to the card temperature throttling in most cases, though as we’ve seen in our look at average clockspeeds it’s generally not a big drop.

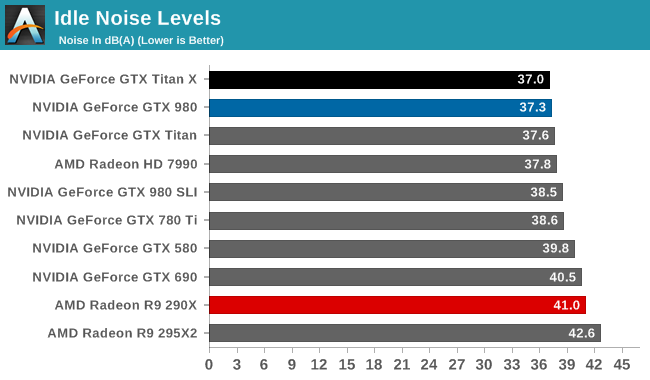

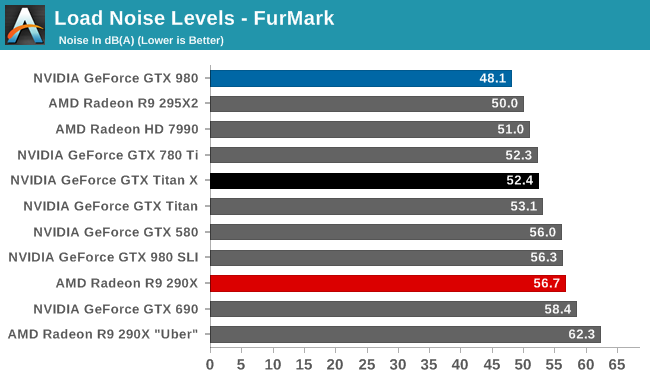

Last but not least we have our noise results. With the Titan cooler backing it, the GTX Titan X has no problem keeping quiet at idle. At 37.0dB(A) it's technically the quietest card among our entire collection of high-end cards, and from a practical perspective it is close to silent.

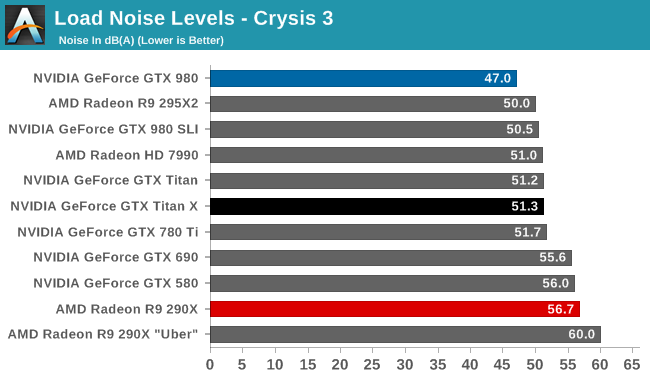

Much like GTX Titan X’s power profile, GTX Titan X’s noise profile almost perfectly mirrors the GTX 780 Ti. With the card hitting 51.3dB(A) under Crysis 3 and 52.4dB(A) under FurMark, it is respectively only 0.4dB and 0.1dB off from the GTX 780 Ti. From a practical perspective what this means is that the GTX Titan X isn’t quite the hushed card that was the GTX 980 – nor with a 250W TDP would we expect it to be – but for its chart-topping gaming performance it delivers some very impressive acoustics. The Titan cooler continues to serve NVIDIA well, allowing them to dissipate 250W in a blower without making a lot of noise in the process.
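Because decibels are logarithmic, the small gaps to the GTX 780 Ti can be converted into sound-power ratios with 10^(ΔdB/10). The helper below is a hypothetical illustration of that conversion only; it does not model perceived loudness or the A-weighting itself.

```python
def sound_power_ratio(delta_db: float) -> float:
    """Convert a difference in sound level (dB) into a ratio of sound power."""
    return 10 ** (delta_db / 10)


# Gaps to the GTX 780 Ti quoted in the text above.
for label, delta in [("Crysis 3", 0.4), ("FurMark", 0.1)]:
    print(f"{label}: {delta} dB ~ {sound_power_ratio(delta) - 1:.1%} more sound power")
```

A 0.4dB gap corresponds to roughly 9.6% more sound power and a 0.1dB gap to about 2.3%, both well under the roughly 1dB change often cited as a rule of thumb for a just-noticeable difference.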

Overall then, from a power/temp/noise perspective the GTX Titan X is every bit as impressive as the original GTX Titan and its GTX 780 Ti sibling. Thanks to the Maxwell architecture and Titan cooler, NVIDIA has been able to deliver a 50% increase in gaming performance over the GTX 780 Ti without an increase in power consumption or noise, leading to NVIDIA once again delivering a flagship video card that can top the performance charts without unnecessarily sacrificing power consumption or noise.

276 Comments


Dug - Thursday, March 19, 2015

Thank you for pointing this out.

chizow - Monday, March 23, 2015

Uh, they absolutely do push 4GB. It's not all for the framebuffer, but they use it as a texture cache that absolutely leads to a smoother gaming experience. I've seen SoM, FC4, AC:Unity all use the entire 4GB on my 980 at 1440p Ultra settings (textures most important ofc) even without MSAA. You can optimize as much as you like, but if you can keep textures buffered locally it is going to result in a better gaming experience.

And for 780Ti owners not being happy, believe what you like, but these are the folks jumping to upgrade even to 980 because that 3GB has crippled the card, especially at higher resolutions like 4K. 780Ti beats 290X in everything and every resolution, until 4K.

https://www.google.com/?gws_rd=ssl#q=780+ti+3gb+no...

FlushedBubblyJock - Thursday, April 2, 2015

Funny how 3.5GB was just recently a kick to the insufficient groin, a gigantic and terrible lie, and worth a lawsuit due to performance issues... as 4GB was sorely needed, now 4GB isn't used... Yes, 4GB isn't needed. It was just 970 seconds ago, but not now!

DominionSeraph - Tuesday, March 17, 2015

You always pay extra for the privilege of owning a halo product. Nvidia already rewrote the pricing structure in the consumer's favor when they released the GTX 970 -- a card with $650 performance -- at $329. You can't complain too much that they don't give you the GTX 980 for $400. If you want above the 970 you're going to pay for it. And Nvidia has hit it out of the ballpark with the Titan X. If Nvidia brought the high end of Maxwell down in price AMD would pretty much be out of business considering they'd have to sell housefire Hawaii at $150 instead of being able to find a trickle of pity buyers at $250.

MapRef41N93W - Tuesday, March 17, 2015

Maxwell architecture is not designed for FP64. Even the Quadro doesn't have it. It's one of the ways NVIDIA saved so much power on the same node.

shing3232 - Tuesday, March 17, 2015

I believe they could put FP64 into it if they wanted, but power efficiency is a good way to make ads.

MapRef41N93W - Tuesday, March 17, 2015

Would have required a 650mm^2 die, which would have been at the limits of what can be done on TSMC's 28nm node. Would have also meant a $1200 card.

MapRef41N93W - Tuesday, March 17, 2015

And the Quadro, a $4000 card, doesn't have it, so why would a $999 gaming card have it?

testbug00 - Tuesday, March 17, 2015

Would it have? No. They could have given it FP64. Could they have given it FP64 without pushing the power and heat up a lot? Nope. The 390X silicon will be capable of over 3 TFLOPS FP64 (the 390X probably locked to 1/8 performance, however) and will be a smaller chip than this. The price to pay will be heat and power. How much? Good question.

dragonsqrrl - Tuesday, March 17, 2015

Yes, it would've required a lot more transistors and die area with Maxwell's architecture, which relies on separate fp64 and fp32 cores. Comparing the costs associated with double precision performance directly to GCN is inaccurate.