The Joys of 802.11ac WiFi

by Jarred Walton on July 8, 2013 8:15 PM EST

A Quick Test of Real-World Wireless Performance

For testing, I grabbed a bunch of the hardware I had around and gathered one set of numbers: average throughput copying a single large file from my desktop, which has a wired Gigabit Ethernet connection, to a laptop. I’m using Western Digital’s My Net AC1300 as the wireless router. It's running firmware v1.03.09, and it's a product that has now been around for eight or so months. It supports up to 450Mbps (3x3:3 MIMO) on 802.11n connections and up to 1300Mbps on 5GHz 11ac connections. (Unfortunately, I never seemed to connect with more than two 11ac streams with my current hardware, including Western Digital's AC Bridge.) We’re still not at Gigabit Ethernet speeds in most cases, but with nearly triple the throughput of the fastest 11n wireless it’s definitely getting closer.
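For reference, the 450Mbps and 1300Mbps figures are peak PHY rates, and they can be reproduced from the standard OFDM parameters. The sketch below is only an illustration: it assumes the best-case modulation (64-QAM for 11n, 256-QAM for 11ac, both at coding rate 5/6), a short guard interval, and 108 or 234 data subcarriers for 40MHz and 80MHz channels. The function and variable names are my own, not from any tool.

```python
# Peak PHY rate for an OFDM WiFi link, in Mbps.
# Assumes a short guard interval and the top modulation/coding scheme
# for each standard; real links often negotiate something lower.

SYMBOL_TIME_US = 3.6  # 3.2 us OFDM symbol + 0.4 us short guard interval


def phy_rate_mbps(data_subcarriers, bits_per_subcarrier, coding_rate, streams):
    """Bits carried per OFDM symbol divided by symbol time (in us) gives Mbps."""
    bits_per_symbol = data_subcarriers * bits_per_subcarrier * coding_rate * streams
    return bits_per_symbol / SYMBOL_TIME_US


# 802.11n, 40MHz channel: 108 data subcarriers, 64-QAM (6 bits), rate 5/6
n_3x3 = phy_rate_mbps(108, 6, 5 / 6, streams=3)

# 802.11ac, 80MHz channel: 234 data subcarriers, 256-QAM (8 bits), rate 5/6
ac_2x2 = phy_rate_mbps(234, 8, 5 / 6, streams=2)
ac_3x3 = phy_rate_mbps(234, 8, 5 / 6, streams=3)

print(f"11n,  3 streams: {n_3x3:.0f} Mbps")
print(f"11ac, 2 streams: {ac_2x2:.0f} Mbps")
print(f"11ac, 3 streams: {ac_3x3:.0f} Mbps")
```

Under these assumptions, a link that settles on two 11ac streams tops out at roughly 867Mbps of PHY rate rather than 1300Mbps, and actual file-copy throughput will be lower still once protocol overhead is counted.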

For the adapters, I tested both single- and dual-band offerings. On the 802.11ac side, I used an 11ac bridge (the Western Digital My Net AC Bridge), a USB 11ac adapter (Linksys AE6000), and a Clevo P157SM notebook from Mythlogic (Pollux 1613) equipped with Intel’s latest Wireless-AC 7260 adapter. I should take a minute to note that one of the great things about going with a boutique laptop vendor like Mythlogic is the ability to customize not just the storage and CPU, but the WiFi adapter as well. Then I tossed in three other laptops: two that I’m currently reviewing and one that I reviewed previously. The ASUS UX51VZ uses Intel’s common Wireless-N 6235 adapter (dual-band 2x2:2 MIMO with Bluetooth 4.0). Acer’s Aspire R7-571-6468 “oversized hybrid” uses a Broadcom BCM43XNM chipset (based on the hardware ID, it appears to be the BCM943228HM4L, or at least something similar; it’s also dual-band 2x2:2 MIMO with Bluetooth 4.0 support). The MSI GE40 is the lone 2.4GHz single-band 150Mbps offering, sporting a Realtek RTL8723AE chipset. All of the adapters were tested in both 2.4GHz and 5GHz modes (where applicable).

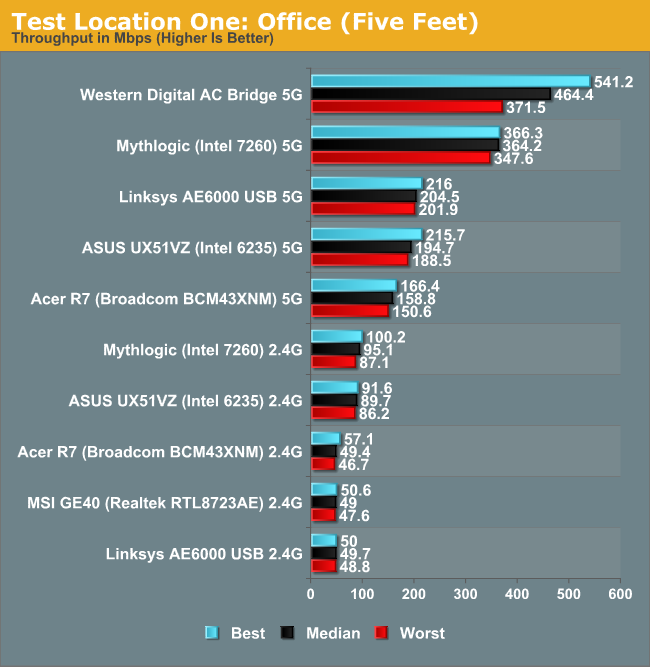

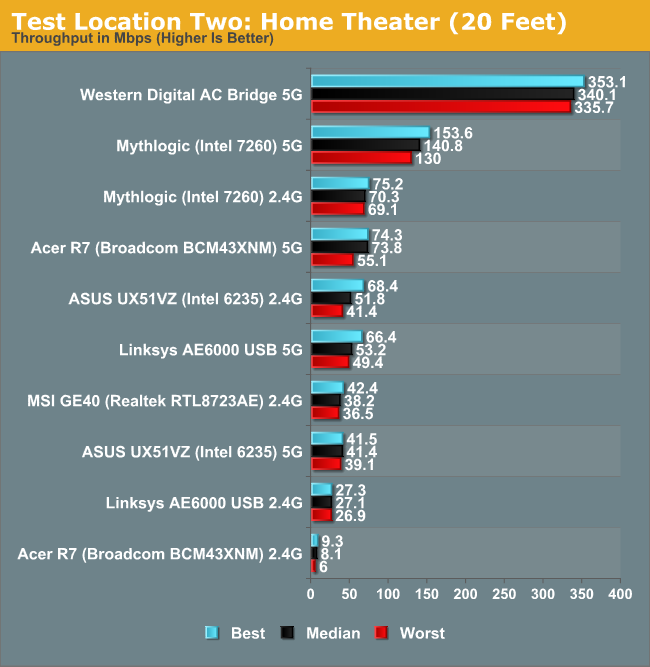

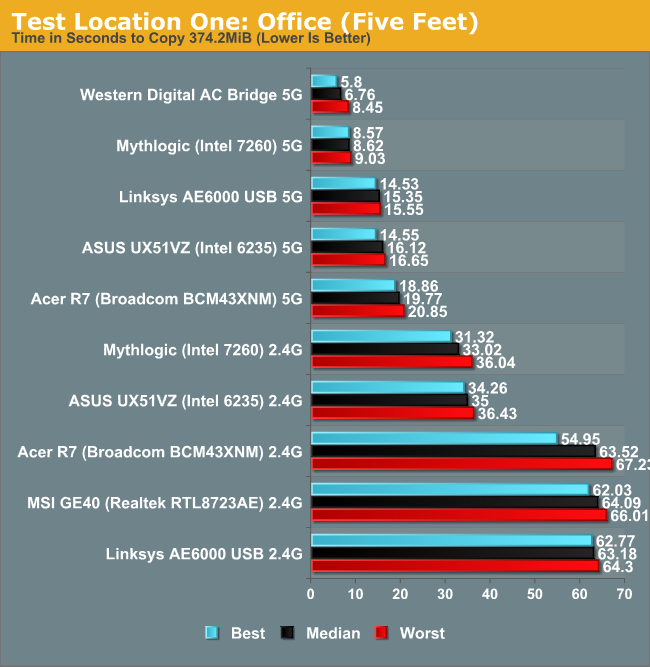

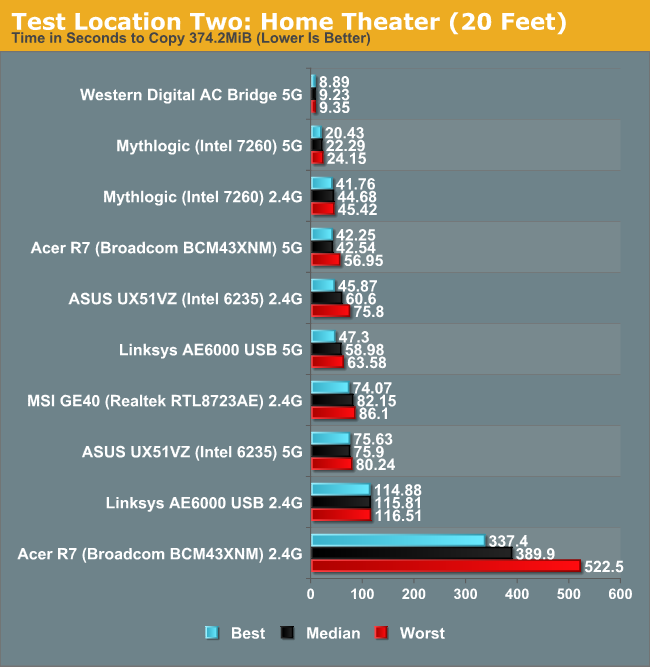

And with that said, here are the results from two test locations. Location one is with the target device in the same room as the router (my office), about five feet away. The second location is downstairs at the home theater area (my “second office”), about 20 feet away with one wall and one floor in between. The results show minimum, median, and maximum throughput of five test runs, with the caveat that I made sure there were no outliers—sometimes Windows or WiFi will have a really slow run or two.
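As a rough illustration of how timed copies turn into the minimum, median, and maximum figures described above, here is a minimal sketch. The file size and the five timings are invented placeholders, not measurements from this article, and the helper names are hypothetical.

```python
from statistics import median


def throughput_mbps(file_bytes, seconds):
    """Average throughput of one copy, in megabits per second."""
    return file_bytes * 8 / seconds / 1_000_000


def summarize(file_bytes, run_seconds):
    """Return (min, median, max) throughput in Mbps across all runs."""
    rates = [throughput_mbps(file_bytes, s) for s in run_seconds]
    return min(rates), median(rates), max(rates)


# Hypothetical example: a 2 GB file copied five times (timings are made up).
FILE_BYTES = 2_000_000_000
RUN_SECONDS = [40.0, 50.0, 64.0, 80.0, 100.0]

low, mid, high = summarize(FILE_BYTES, RUN_SECONDS)
print(f"min {low:.0f} / median {mid:.0f} / max {high:.0f} Mbps")
```

Reporting the median rather than the mean keeps a single slow run from dragging the headline number down.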

Not surprisingly, the AC Bridge posts the best performance of all the solutions. With three spatial streams, I was hoping to get a lot closer to 800-900Mbps of throughput, but most of the time it appears to have run at closer to two streams. Performance isn't all that different from the Mythlogic laptop with Intel’s 7260 AC adapter at the short range location, but it’s over twice the speed at the second test location. What’s even worse is how poorly many of the solutions end up performing at the second test location.

I’m not surprised to see the Mythlogic notebook post excellent throughput over 5GHz 802.11ac, but it ends up being the third fastest solution even when falling back to 2.4GHz 802.11n at the second location! I could live with throughput of 50+ Mbps throughout my home, but pulling over 300Mbps from the downstairs HTPC to my other networked PCs over wireless is simply awesome…as long as the wireless signal doesn’t get dropped.

I haven’t actually had that happen with the Western Digital AC1300 (yet), but over the past seven or so years of testing notebooks and laptops for AnandTech, dropped wireless connections are a far too common occurrence in my experience. I’ve been through about ten wireless routers during that time, and even the best were never quite as stable/dependable as wired Ethernet. Some might go a couple weeks or even a month without needing a reboot (e.g. the Amped Wireless), while others might have WiFi "crash" every couple of days.

Throw in the lackluster WiFi adapters you find in most laptops (represented here by the Realtek RTL8723AE) and you can hopefully understand why having Gigabit Ethernet in notebooks still matters to a lot of people. I've got a second set of graphs that shows the same data as above, only in seconds required rather than Mbps:

Our mobile test suite currently weighs in at a hefty 121GiB; over Gigabit Ethernet it takes about 20 minutes to copy everything over. Now imagine a laptop like the MSI GE40, except without the Ethernet port, and I’d be looking at nearly six hours just to copy the testing files over WiFi. (Thank goodness for USB 3.0 external SSD adapters!) But perhaps you never bother with copying large amounts of files over your local network, so slower WiFi speeds aren’t such an issue, right? My Comcast Internet now tops out with downstream speeds of up to 40Mbps and upstream rates of 12Mbps; when I’m not in my office, that means some of the adapters/laptops out there are likely going to bottleneck my Internet speed. It’s not the end of the world when you can only get 25Mbps, but there are certainly times when you’ll notice the difference, particularly if you have multiple PCs accessing the net concurrently.
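The copy times quoted for the test suite are simple arithmetic, and the same calculation works for any adapter. The sketch below uses the 121GiB suite size from the text; the two throughput values are assumptions chosen for illustration (roughly 900Mbps of effective Gigabit Ethernet throughput and roughly 50Mbps for a slow single-stream WiFi link), not measured results.

```python
GIB = 1024 ** 3  # bytes in one gibibyte


def copy_time_minutes(size_gib, throughput_mbps):
    """Minutes needed to move size_gib gibibytes at a sustained rate in Mbps."""
    bits = size_gib * GIB * 8
    seconds = bits / (throughput_mbps * 1_000_000)
    return seconds / 60


SUITE_GIB = 121  # size of the test suite, from the article

# Throughput figures below are illustrative assumptions, not measurements.
links = [
    ("Gigabit Ethernet (~900Mbps effective)", 900),
    ("Slow single-stream WiFi (~50Mbps)", 50),
]

for label, mbps in links:
    minutes = copy_time_minutes(SUITE_GIB, mbps)
    print(f"{label}: {minutes:.0f} minutes ({minutes / 60:.1f} hours)")
```

With those assumed rates the wired copy lands a little under 20 minutes and the slow wireless copy a little under six hours, which lines up with the figures above.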

One final item to discuss is that the adapters aren’t the whole story. You can see in the charts above that just because something supports two spatial streams on WiFi doesn’t inherently mean better performance than single stream adapters. The tiny USB WiFi adapter only manages average performance on a 5GHz connection from a couple rooms away from the router, and on a 2.4GHz connection it ends up being the slowest option outside of the Acer R7 2.4GHz result. Better antennas matter, and in the case of the Acer R7, the tuning of the antenna is likely another major factor.

The R7 actually fails to detect my 2.4GHz network from 50 feet away, yet it still manages to connect to the 5GHz network, and the R7 isn’t alone in such funky behavior. I’ve had laptops where the WiFi adapter powers off when the system has been running more than about 48 hours straight (even if the WiFi wasn’t doing anything), and the only way to get it back is to turn the adapter off and then turn it back on. And if laptops can sometimes have flaky WiFi implementations, many of the tablets out there are worse—range on two of my Android tablets is probably 50 feet at best before I have problems, so even my driveway is “too far” to get WiFi coverage.

139 Comments


thesavvymage - Tuesday, July 9, 2013 - link

Up until a little bit ago, Linksys was a brand of Cisco's, so including them both as brand examples isn't really correct. They were however just sold to Belkin, so Cisco doesn't even make consumer routers anymore.

DanNeely - Tuesday, July 9, 2013 - link

1. 160MHz channels are an optional feature.

2. Making hardware that can work on that wide a channel is significantly more difficult than narrower options. N only supported 40MHz channels, so they already had to push the tx/rx modules to double their bandwidth.

3. For mobile devices the wider bandwidth will result in higher power consumption for the wifi chip. I wouldn't be surprised if 160MHz channels never become common for anything except bridges/etc.

4. At 160MHz you're down to 2 channels in the US now (possibly 4 in the future), which is worse from a conflict standpoint than the 3 channels we've got at 2.4GHz now.

The last point is the biggest reason I don't expect to see 160MHz channels any time soon. It's in the spec, but it has major real world problems. IMO it was added just to let them wave around bigger (theoretical) bandwidth numbers for bragging rights vs commonly available wired networks (never mind that in real world situations 1Gb wired will be faster anyway).

DarkXale - Tuesday, July 9, 2013 - link

Actually number 3 is false.

A higher bandwidth permits using modulation that requires less energy per bit.

DanNeely - Tuesday, July 9, 2013 - link

Unless I'm misunderstanding something, the higher bandwidths are used to pack more bits in; so the wider streams still need the same amount of power/bit but just cram more total bits into the stream at any given time.

DarkXale - Tuesday, July 9, 2013 - link

A higher throughput of course will net a higher power drain (if you're using the bandwidth for that), but a wider bandwidth itself does not cause that.

Jaybus - Tuesday, July 9, 2013 - link

The wider channel width would take a bit more power, but that would more than be made up for by allowing more bits per token. Higher throughput will use more power, of course, but does not affect power per bit. Where the power is being increased is in the RF amplifier. It of course takes more power to transmit 3 signals than it does to transmit 2.

Also, it takes more power for a 5 GHz carrier than it does for a 2.4 GHz carrier. This is because the rise and fall times for the RF amplifier are the same. Amplifiers are less efficient during the rise and fall time, and the higher the frequency the larger the percentage of time they are in a rise/fall state. This is assuming a class D amplifier design, which it almost certainly is, as it is the most power efficient.

name99 - Tuesday, July 9, 2013 - link

"Making hardware that can work on that wide a channel is significantly more difficult than narrower options"

Sufficiently hard that that is not the way it is done.

160MHz support is done through channel bonding, ie running essentially two 80MHz channels in parallel. This means duplication of everything, plus logic to synchronize the two. If you want the two 80MHz channels to be discontiguous, it also means a more aggressive (likely duplicated) set of RF components to handle the two disparate frequencies.

For all these reasons, 160MHz, like MU-MIMO, has been left to the next gen of chips (and who knows if it will be implemented, even there; it's possible all the vendors will conclude that reducing power and area are more important priorities for the immediate future).

Modus24 - Tuesday, July 9, 2013 - link

Seems like Jarred is assuming it's only using 2 streams. It's more likely the lower rates are due to the bad antenna design he mentioned and the link had to drop to a lower order modulation (i.e. BPSK, QPSK, 16-QAM, etc.) in order to reduce the bit errors.

danstek - Tuesday, July 9, 2013 - link

To correct a statement in the third paragraph, MacBook Air is traditionally 2x2:2 and only the MacBook Pro has had 3x3:3 WiFi implementations.

JarredWalton - Tuesday, July 9, 2013 - link

Fixed, thanks.