AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Partially Resident Textures: Not Your Father’s Megatexture

John Carmack’s id Software may not be the engine licensing powerhouse it was back in the Quake 3 days, but that hasn’t changed the revolutionary nature of his engine designs. We bring this up because a great deal of GPU technology can be mapped directly to concepts Carmack implemented first. For id Tech 4 Carmack implemented shadow volume technology, which NVIDIA then first accelerated in hardware as UltraShadow, and which has since been implemented in a number of GPUs. For id Tech 5 the trend has continued, now with AMD doing a hardware implementation of a Carmack-inspired technology.

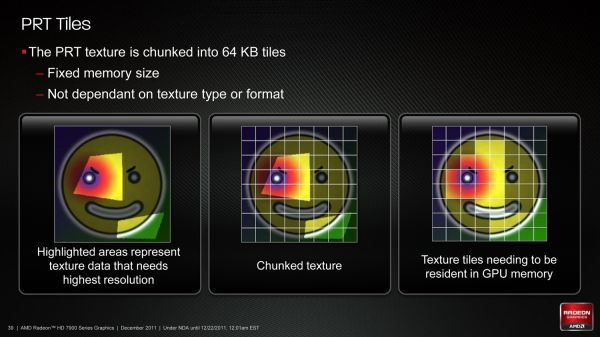

Among the features added to Graphics Core Next explicitly for gaming, the final one is Partially Resident Textures, which many of you are probably more familiar with in concept as Carmack’s MegaTexture technology. The concept behind PRT/MegaTexture is that because of their size, large textures should not be treated as singular entities; instead they should be broken down into smaller tiles, which can then be used as necessary. If a complete texture isn’t needed, then rather than loading the entire texture only the relevant tiles are loaded, while the irrelevant tiles are skipped or loaded at a lower quality. Ultimately this technology is designed to improve texture streaming by streaming tiles instead of whole textures, reducing the amount of unnecessary texture data that is streamed.
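To make the tiling idea concrete, here is a minimal sketch of how an engine might work out which tiles a sampled region of a texture touches. The function name `tiles_for_region` and the default tile side of 128 texels are our own illustrative assumptions, not anything taken from AMD's hardware or id's engine.

```python
def tiles_for_region(x0, y0, x1, y1, tile_side=128):
    """Return (col, row) coordinates of every tile overlapping the
    half-open texel rectangle [x0, x1) x [y0, y1).

    tile_side is a hypothetical tile edge length in texels.
    """
    if x1 <= x0 or y1 <= y0:
        return []  # empty region touches no tiles
    # Integer division maps a texel coordinate to the tile that holds it.
    cols = range(x0 // tile_side, (x1 - 1) // tile_side + 1)
    rows = range(y0 // tile_side, (y1 - 1) // tile_side + 1)
    return [(c, r) for r in rows for c in cols]


# A 200 x 128 texel strip starting at x=100 spans three tiles in one row.
needed = tiles_for_region(100, 0, 300, 128)
```

Only the tiles in `needed` would have to be streamed in; everything else in the texture can stay on disk or in system memory.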

Currently MegaTexture does this entirely in software using existing OpenGL 3.2 APIs, but AMD believes that more next-generation game engines will use this type of texturing technology. That makes it worth targeting: if AMD can implement it faster in hardware and get developers to use it, it will improve game performance on their cards. Again this is similar to shadow volumes, where hardware implementations sped up the process.

In order to implement this in hardware AMD has to handle two things: texture conversion and cache management. With texture conversion, textures need to be read and broken up into tiles; AMD is going with a texture format agnostic method here that can simply chunk textures as they stand, keeping the resulting tiles in the same format. For AMD’s technology each tile will be 64KB, which for an uncompressed 32-bit texture (4 bytes per texel) is enough room for a 128 x 128 chunk.
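The 128 x 128 figure falls straight out of the arithmetic: 64KB is 65,536 bytes, which at 4 bytes per texel is 16,384 texels, or a 128 x 128 square. A quick sketch of that calculation follows. The helper names are our own, and we assume square tiles purely for illustration; the tile shape the hardware actually uses for other formats may differ.

```python
import math

TILE_BYTES = 64 * 1024  # 64KB tile size, as stated in the article


def square_tile_side(bits_per_texel, tile_bytes=TILE_BYTES):
    """Side length in texels of the largest square tile fitting in tile_bytes.

    Assumes uncompressed texels and square tiles (an illustrative assumption).
    """
    texels = tile_bytes * 8 // bits_per_texel
    return math.isqrt(texels)


def tiles_needed(width, height, bits_per_texel):
    """Number of tiles required to cover a width x height texture."""
    side = square_tile_side(bits_per_texel)
    return math.ceil(width / side) * math.ceil(height / side)


side_32bit = square_tile_side(32)            # 128 texels per side
tile_count = tiles_needed(16384, 16384, 32)  # 128 * 128 tiles
```

Under these assumptions a 16,384 x 16,384 32-bit texture breaks down into 16,384 tiles, which is exactly why loading only the relevant ones matters.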

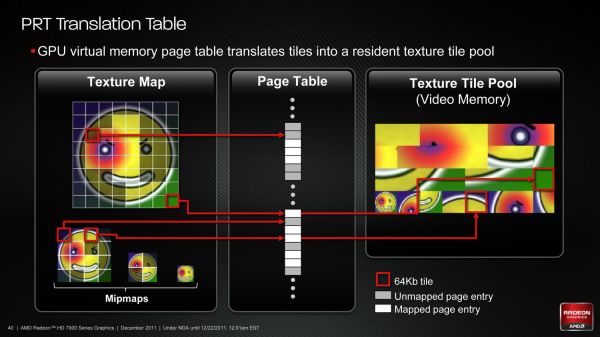

The second aspect of PRT is managing the tiles. In essence PRT reduces local video memory to a very large cache, where tiles are mapped/pinned as necessary and then evicted as per the cache rules, and elsewhere the hardware handles page/tile translation should a tile not already be in the cache. Large tomes have been written on caching methods, and this aspect is of particular interest to AMD because what they learn about caching here they can apply to graphical workloads (i.e. professional) and not just gaming.
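As a rough software analogy for treating video memory as a tile cache, the sketch below keeps a fixed number of tiles resident and evicts the least recently used one when it runs out of room. AMD has not told us which cache rules the hardware follows, so LRU here is strictly our assumption, and `TileCache` and `load_tile` are hypothetical names.

```python
from collections import OrderedDict


class TileCache:
    """Toy model of resident tiles with least-recently-used eviction.

    LRU is an assumed policy; the real eviction rules are not specified.
    """

    def __init__(self, capacity_tiles, load_tile):
        self.capacity = capacity_tiles
        self.load_tile = load_tile      # hypothetical callback that fetches a tile
        self.resident = OrderedDict()   # tile_id -> tile data, oldest first
        self.misses = 0

    def get(self, tile_id):
        if tile_id in self.resident:
            # Hit: mark the tile as most recently used.
            self.resident.move_to_end(tile_id)
            return self.resident[tile_id]
        # Miss: fetch the tile, then evict the oldest one if over capacity.
        self.misses += 1
        data = self.load_tile(tile_id)
        self.resident[tile_id] = data
        if len(self.resident) > self.capacity:
            self.resident.popitem(last=False)
        return data


cache = TileCache(2, lambda tile_id: f"tile{tile_id}")
for tile in [(0, 0), (0, 1), (0, 0), (1, 1)]:
    cache.get(tile)
```

After the four requests above, tile (0, 1) has been evicted because it was the least recently touched, while (0, 0) and (1, 1) remain resident.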

To that end AMD put together a technology demo for PRT based on Per-Face Texture Mapping (Ptex), a Disney-developed texture mapping technique that maps textures to polygons in a 1:1 ratio. Disney uses this technique for production rendering, as by constraining each texture to a single polygon they don’t have to deal with the complexities that arise from mapping a texture over multiple polygons. AMD’s demo benefits for the same reasons, and also because when combined with tessellation Ptex trivializes vector displacement, making art for tessellated games much easier to create. Finally, PRT fits into all of this by improving the efficiency of accessing and storing the Ptex texture chunks.

Wrapping things up, for the time being, while Southern Islands will bring hardware support for PRT, software support will remain limited. As D3D is not normally extensible, it’s really only possible to easily access the feature from other APIs (e.g. OpenGL), which when it comes to games is going to greatly limit adoption of the technology. AMD of course is working on the issue, but there are few ways around D3D’s tight restrictions on non-standard features.

292 Comments


Scali - Monday, December 26, 2011 - link

Lol, how's that, when I'm the one saying that AMD's cards are the best performers in Crysis 2?

I'm neutral, a concept that is obviously alien to you. Idiots...

Scali - Monday, December 26, 2011 - link

Heck, I'm also the guy who made Endless City run on non-nVidia cards. How does that make me an nVidia fanboy?

CeriseCogburn - Thursday, March 8, 2012 - link

That's sad when an nvidia fanboy has to help all the amd fannies with software coding so they can run a benchmark, then after all that work to help the underprivileged, nothing but attacks after the facts... finally silence them.

It's really sad when the truth is so far from the pop culture mind that actually speaking it is nearly forbidden.

Thank you for helping them with the benchmark. Continue to be kind in such ways to the sour whining and disgruntled, as it only helped prove how pathetic amd dx11 was...

james007 - Friday, December 30, 2011 - link

This sounded like such an awesome card and I was psyched to get it the moment it comes out -- until reading the part about dropping the 2nd DVI port. A DisplayPort-to-SLDVI doesn't do it, for me, because my desktop has to drive two 30" displays. In fact, I would love to be able to drive a third display so I can have a touch-screen also. My current (previous-generation) VDC does drive both displays just fine.

This does not seem like such an infrequent requirement, especially for high-end users. Why would they drop the ability to drive the 2nd display? !!!

Argh!

The_Countess666 - Saturday, December 31, 2011 - link

not trying to sell you anything but, HDMI to dual-link dvi does exist (see link, or google yourself for other shops).

http://sewelldirect.com/hdmi-to-dvi-dual-link-cabl...

and these cards do have 1 HDMI-out so that should work for you.

Penti - Wednesday, January 4, 2012 - link

It's the IHV that makes those decisions anyway, just because it's not on a reference card doesn't mean they won't show up or that you can't build a card with it. But the HDMI supports more than 1920x1200 finally on this card anyhow. I guess they could deliver a card with the old type of DVI>HDMI adapters. Obviously opting for HDMI and multi-display capable DisplayPort 1.2 makes more sense though. It's been around for years now.

Penti - Wednesday, January 4, 2012 - link

Just make sure you actually have the number of connections you need when buying the card, many 7970 boards only appear to support single-link DVI on the DVI-connector.

poordirtfarmer2 - Wednesday, January 4, 2012 - link

Enjoyed the article.

So this new 79XX architecture is about a GPU architecture that’s also good for “compute work”. The reference to NVIDIA’s professional video cards (Quadro; Tesla) implies to me that this might mean video cards viable for use both in gaming and in engineering / video work stations.

I’m not a pro, but do a lot of video editing, rendering and encoding. I’ve avoided dedicating a machine with an expensive special purpose QUADRO video card. Am I reading the wrong thing into this review, or might the new 79XX and the right driver give folks like me the best of both worlds?

radojko - Thursday, January 5, 2012 - link

UVD 3 in NextGen is disappointing. Nvidia is two generations in front with PureVideo HD 5.

psiboy - Monday, January 9, 2012 - link

Well Mr Ryan Smith I must ask why the omission of 1920 x 1080 in all benchmarks... given that almost every new monitor for quite some time has been natively 1920 x 1080... what is it with you guys and Tom's lately.. you both seem to have been ignoring the reality of what most of your readers are using!