AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Power, Temperature, & Noise

As always, we wrap up our look at a new video card with a look at the physical performance attributes: power consumption, temperatures, and noise. With new process nodes being the lifeblood of the GPU industry, each new process gives GPU manufacturers a chance to move their product along a continuum; do they take advantage of a power consumption reduction for the same performance level, a performance increase for the same power consumption, or something in between? In AMD’s case they’ve chosen to try to maximize performance within Cayman’s power budget, which means power, temperature, and noise should be similar to what we’ve seen with the 6970, cooler improvements notwithstanding.

Before we get into the charts, it’s worth noting right now that we don’t have a good idea of what 7970’s operational voltage is, as we don’t have any tools that can read 7970’s VRMs. We believe it’s close to Cayman’s, but this is something that will require confirmation in the future.

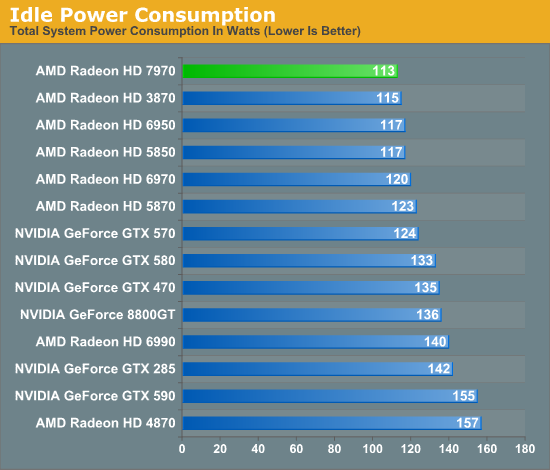

Starting as always with idle power, we can immediately see the benefits of the work AMD has put into idle power usage with Southern Islands. AMD has been working hard to reduce idle power consumption since the 4870 and at this point they’ve finally beaten even the 3870, which was a relatively small GPU using GDDR3 RAM. AMD’s official typical idle TDP here is 15W, and we have little doubt they’re going to continue to whittle that number down on future generations.

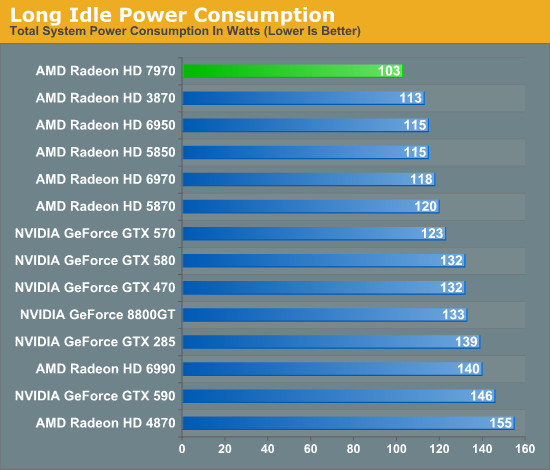

While we’re looking at idle power, we also wanted to take a look at AMD’s “long idle” scenario, where a blanked display or being a slave GPU in a multi-GPU setup gives a GPU the opportunity to go into a deeper sleep state as it’s not needed for any work. Through ZeroCore Power AMD is able to shut off virtually every aspect of Tahiti when in a long idle state, allowing AMD to reduce the power consumption of the 7970 to under 3W. Meanwhile for every other card there’s a very slight gain to be had in long idle because the GPU can power down all of its display transmitters, but it’s not nearly as effective as shutting down the entire GPU, which is why the 7970 has a 10W advantage at the wall versus the next closest GPU.

As we said earlier in our look at ZeroCore Power technology, while the numbers are impressive enough, for the desktop the real use will be in multi-GPU systems as slave GPUs can be put in a ZCP state even while the master GPU is awake and working, significantly reducing idle power and noise in multi-GPU systems.

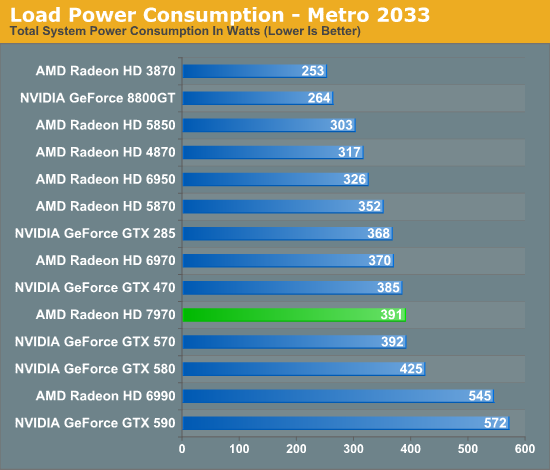

Moving on to our load power tests, based on our testing we have swapped out Crysis for Metro 2033 in this iteration of our benchmark suite as Metro 2033 was proving to be a bit more strenuous on our GPUs and is the only game to ever trigger PowerTune on the 6970.

Looking at Metro, it’s clear that the smaller fabrication process for the 7970 has allowed AMD to achieve a lower power consumption level relative to their gaming performance – the 7970 leads the GTX 580 by 20-30% in performance while consuming 34W less at the wall. At the same time this test calls into question AMD’s power targets. We’re clearly drawing more power than the 6970 – 21W more to be precise – and while this could be explained by CPU power consumption I’m not convinced that’s the case.

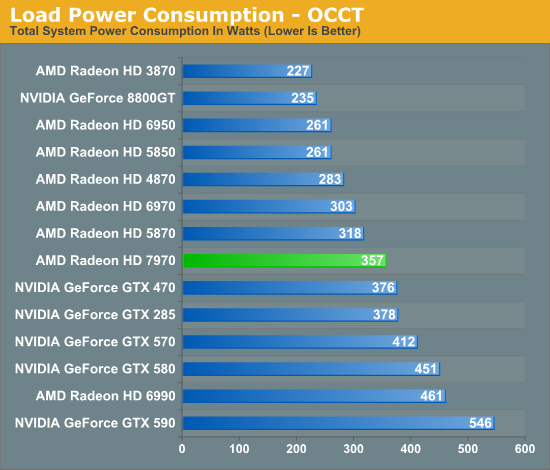

As for our pathological power consumption test, we’ve moved on to OCCT, which at this point in time escapes NVIDIA’s throttling mechanism and in spite of the presence of PowerTune still manages to be a harder workload on AMD’s GPUs than FurMark. OCCT backs up our earlier data from Metro that the 7970 draws more power than the 6970 even with similar power targets. We’re drawing 50W more at the wall, which on paper at least would put the 7970’s power consumption closer to 280W if AMD’s original 250W target for the 6970 is still correct. If all of this is correct, then it calls into doubt AMD’s published power targets, as it looks like AMD has made a power/performance tradeoff with the 7970 by trading slightly higher power consumption for what would be higher performance.

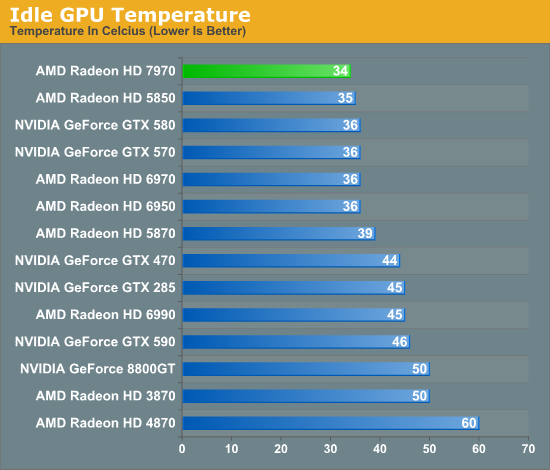

Up next is our look at idle temperatures. All of the last generation GPUs were highly competitive here and as a result it’s a tough crowd as there’s relatively little room to further reduce a GPU’s temperature. Nevertheless the 7970 manages to squeak by the competition, with a recorded idle temperature of 34C, 1C lower than the GTX 580 and 2C lower than the 6970.

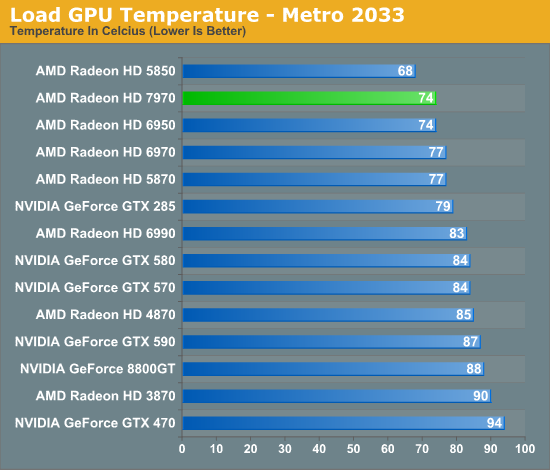

Moving on to temperatures under Metro, the results are quite surprising: the 7970 does really, really well here given its power consumption. At 74C the only cooler video cards are the 6850 and 5850, both of which are lower tier, lower power versions of higher end parts. Part of this can be traced back to AMD’s cooling optimizations such as moving back to a full slot exhaust vent, but I don’t believe that’s a full explanation. We’ll get back to this when we’re looking at noise.

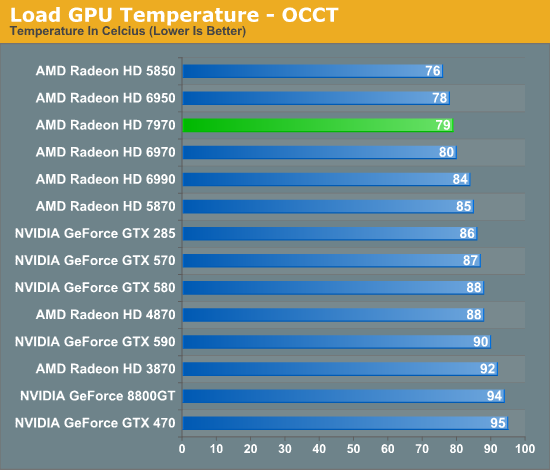

While the overall numbers are higher in OCCT, the relative rankings are unchanged from Metro. The only cooler cards in this lineup are the 6950 and 5850. Here the 7970 hits 79C, while the 6970 is slightly behind at 80C, and the GTX 500 series is in the upper 80s.

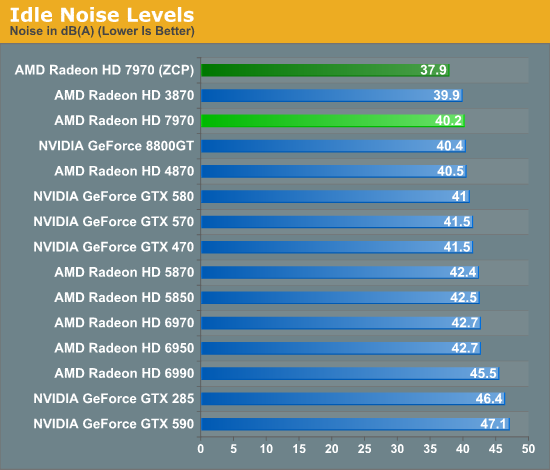

Last we have our look at noise, starting with idle noise. Much like our idle temperatures, most single-GPU video cards tend to cluster together within 1dB, but the 7970 has managed to silence most of the rest of the pack, with a recorded noise level of 40.2dB. More impressive perhaps is the 7970 when in its ZeroCore Power state. Because the fan turns off, the card is silent; 37.9dB(A) is the noise floor of our testbed.

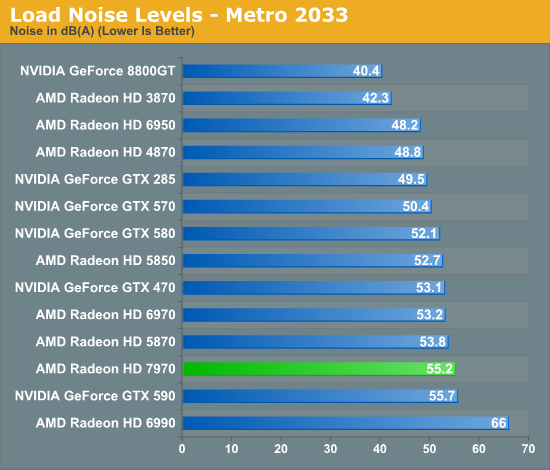

Moving on to our load noise measurements, the full picture of the 7970’s cooling performance finally starts coming together. We’ve seen that the 7970 is a cool card, but is it a quiet card? The numbers say no. The 7970 is 3dB louder than the GTX 580 and 2dB louder than the 6970 even with its physical cooling improvements. Based on this, combined with our temperature data, it’s clear that AMD has targeted aggressive cooling over silence, whereas NVIDIA traditionally targets silence over aggressive cooling. When it comes to our testbed AMD has probably overdone it – we could easily exchange 10C for less noise – but with a wide range of computers in the world it’s hard to tell from a single testbed whether the cooling here is genuinely too aggressive for everyone, or if it’s the opposite. What isn’t in question is that the 7970 is going to be moderately loud, which coming from AMD is a surprising development.
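For readers who want a feel for what a 2dB or 3dB gap means physically, here is a minimal Python sketch that converts a level difference in decibels into the corresponding ratio of sound power and sound pressure, using the standard logarithmic definitions. The function names are ours and purely illustrative, and the only inputs are the load noise differences quoted above; this is not part of our test methodology.

```python
def db_to_power_ratio(delta_db: float) -> float:
    """Ratio of sound power (intensity) for a level difference in dB."""
    return 10 ** (delta_db / 10)


def db_to_pressure_ratio(delta_db: float) -> float:
    """Ratio of sound pressure amplitude for a level difference in dB."""
    return 10 ** (delta_db / 20)


if __name__ == "__main__":
    # Level differences under Metro, taken from the text above.
    comparisons = [
        ("7970 vs. GTX 580", 3.0),
        ("7970 vs. 6970", 2.0),
    ]
    for label, delta in comparisons:
        power = db_to_power_ratio(delta)
        pressure = db_to_pressure_ratio(delta)
        print(f"{label}: +{delta:.0f}dB is about {power:.2f}x the sound power "
              f"and {pressure:.2f}x the sound pressure")
```

Keep in mind that perceived loudness does not track either ratio directly; a commonly cited rule of thumb is that it takes roughly 10dB to sound about twice as loud, so a 3dB gap is noticeable without being dramatic.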

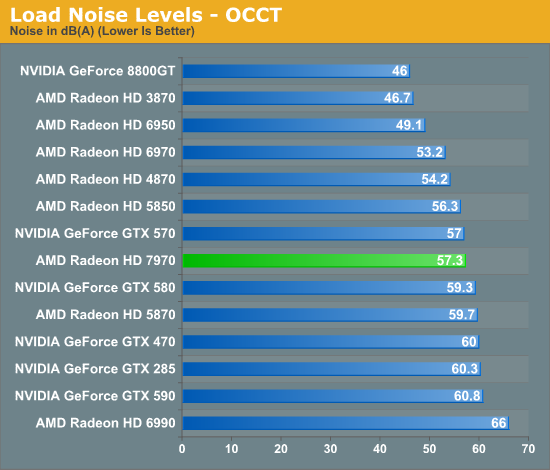

Once again with OCCT the numbers are higher, but the facts are largely the same. The 7970 is now quieter than the GTX 580 due to PowerTune, but it’s now 4dB louder than the 6970. Unlike Metro, 57.3dB of noise is in the middle of the pack, but it stands to reason that AMD could have been a bit less aggressive on their fan speeds and made a quieter card as a result.

292 Comments

B3an - Thursday, December 22, 2011

Anyone with half a brain should have worked out that being as this was going to be AMD's Fermi that it would not have had a massive increase for gaming, simply because many of those extra transistors are there for computing purposes. NOT for gaming. Just as with Fermi.

The performance of this card is pretty much exactly as i expected.

Peichen - Friday, December 23, 2011

AMD has been saying for ages that GPU computing is useless and CPU is the only way to go. I guess they just have a better PR department than Nvidia.

BTW, before suggesting I have suffered brain trauma, remember that Nvidia delivered on Fermi 2 and GK100 will be twice as powerful as GF110

CeriseCogburn - Thursday, March 8, 2012

Well it was nice to see the amd fans with half a heart admit amd has accomplished something huge by abandoning gaming, as they couldn't get enough of screaming it against nvidia... even as the 580 smoked up the top line stretch so many times...

It's so entertaining...

CeriseCogburn - Thursday, March 8, 2012

AMD is the dumb company. Their dumb gpu shaders. Their x86 copying of intel. Now after a few years they've done enough stealing and corporate espionage to "clone" Nvidia architecture and come out with this 7k compute.

If they're lucky Nvidia will continue doing all software groundbreaking and carry the massive load by a factor of ten or forty to one working with game developers, porting open gl and open cl to workable programs and as amd fans have demanded giving them PhysX ported out to open source "for free", at which point it will suddenly be something no gamer should live without.

"Years behind" is the real story that should be told about amd and it's graphics - and it's cpu's as well.

Instead we are fed worthless half truths and lies... a "tesselator" in the HD2900 (while pathetic dx11 perf is still the amd norm)... the ddr5 "groundbreaker" ( never mentioned was the sorry bit width that made cheap 128 and 256 the reason for ddr5 needs)...

Etc.

When you don't see the promised improvement, the radeonites see a red rocket shooting to the outer depths of the galaxy and beyond...

Just get ready to pay some more taxes for the amd bailout coming.

durinbug - Thursday, December 22, 2011

I was intrigued by the comment about driver command lists, somehow I missed all of that when it happened. I went searching and finally found this forum post from Ryan:

http://forums.anandtech.com/showpost.php?p=3152067...

It would be nice to link to that from the mention of DCL for those of us not familiar with it...

digitalzombie - Thursday, December 22, 2011

I know I'm a minority, but I use Linux to crunch data and GPU would help a lot...

I was wondering if you guys can try to use these cards on Debian/Ubuntu or Fedora? And maybe report if 3d acceleration actually works? My current amd card have bad driver for Linux, shearing and glitches, which sucks when I try to number crunch and map stuff out graphically in 3d. Hell I try compiling the driver's source code and it doesn't work.

Thank you!

WaltC - Thursday, December 22, 2011

Somebody pinch me and tell me I didn't just read a review of a brand-new, high-end ATi card that apparently *forgot* Eyefinity is a feature the stock nVidia 580--the card the author singles out for direct comparison with the 7970--doesn't offer in any form. Please tell me it's my eyesight that is failing, because I missed the benchmark bar charts detailing the performance of the Eyefinity 6-monitor support in the 7970 (but I do recall seeing esoteric bar-chart benchmarks for *PCIe 3.0* performance comparisons, however. I tend to think that multi-monitor support, or the lack of it, is far more an important distinction than PCIe 3.0 support benchmarks at present.)

Oh, wait--nVidia's stock 580 doesn't do nVidia's "NV Surround triple display" and so there was no point in mentioning that "trivial fact" anywhere in the article? Why compare two cards so closely but fail to mention a major feature one of them supports that the other doesn't? Eh? Is it the author's opinion that multi-monitor gaming is not worth having on either gpu platform? If so, it would be nice to know that by way of the author's admission. Personally, I think that knowing whether a product will support multi monitors and *playable* resolutions up to 5760x1200 ROOB is *somewhat* important in a product review. (sarcasm/massive understatement)

Aside from that glaring oversight, I thought this review was just fair, honestly--and if the author had been less interested in apologizing for nVidia--we might even have seen a better one. Reading his hastily written apologies was kind of funny and amusing, though. But leaving out Eyefinity performance comparisons by pretending the feature isn't relative to the 7970, or that it isn't a feature worth commenting on relative to nVidia's stock 580? Very odd. The author also states: "The purpose of MST hubs was so that users could use several monitors with a regular Radeon card, rather than needing an exotic all-DisplayPort “Eyefinity edition” card as they need now," as if this is an industry-standard component that only ATi customers are "asking for," when it sure seems like nVidia customers could benefit from MST even more at present.

I seem to recall reading the following statement more than once in this review but please pardon me if it was only stated once: "... but it’s NVIDIA that makes all the money." Sorry but even a dunce can see that nVidia doesn't now and never has "made all the money." Heh...;) If nVidia "made all the money," and AMD hadn't made any money at all (which would have to be the case if nVidia "made all the money") then we wouldn't see a 7970 at all, would we? It's possible, and likely, that the author meant "nVidia made more money," which is an independent declaration I'm not inclined to check, either way. But it's for certain that in saying "nVidia made all the money" the author was--obviously--wrong.

The 7970 is all the more impressive considering how much longer nVidia's had to shape up and polish its 580-ish driver sets. But I gather that simple observation was also too far fetched for the author to have seriously considered as pertinent. The 7970 is impressive, AFAIC, but this review is somewhat disappointing. Looks like it was thrown together in a big hurry.

Finally - Friday, December 23, 2011

On AT you have to compensate for their over-steering while reading.

Death666Angel - Thursday, December 22, 2011

"Intel implemented Quick Sync as a CPU company, but does that mean hardware H.264 encoders are a CPU feature?" << Why is that even a question. I cannot use the feature unless I am using the iGPU or use the dGPU with Lucid Virtu. As such, it is not a feature of the CPU in my book.

Roald - Thursday, December 22, 2011

I don't agree with the conclusion. I think it's much more of a perspective thing. Coming from the 6970 to the 7970 it's not a great win in the gaming department. However the same can be said from the change from 4870 to 5870 to 6970. The only real benefit the 5870 had over the 4870 was DX11 support, which didn't mean so much for the games at the time.

Now there is a new architecture that not only manages to increase FPS in current games, it also has growing potential and manages to excel in the compute field as well at the same time.

The conclusion made in the Crysis warhead part of this review should therefore also have been highlighted as final words.