AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Partially Resident Textures: Not Your Father’s Megatexture

John Carmack’s id Software may not be the engine licensing powerhouse it was back in the Quake 3 days, but that hasn’t changed the revolutionary nature of his engine designs. We bring this up because a great deal of GPU technology can be directly mapped to concepts Carmack implemented first. For id Tech 4 Carmack implemented shadow volumes, which were then first implemented in hardware by NVIDIA as their UltraShadow technology and have since been implemented in a number of GPUs. For id Tech 5 the trend has continued, now with AMD doing a hardware implementation of a Carmack-inspired technology.

Among the features added to Graphics Core Next that were explicitly for gaming, the final feature was Partially Resident Textures (PRT), which many of you are probably more familiar with in concept as Carmack’s MegaTexture technology. The concept behind PRT/MegaTexture is that because of their size, textures should not be treated as singular entities; instead they should be broken down into smaller tiles that can be used as necessary. If a complete texture isn’t needed, then rather than loading the entire texture, only the relevant tiles are loaded, while the irrelevant tiles can be skipped or loaded at a lower quality. Ultimately this technology is designed to improve texture streaming by streaming tiles instead of whole textures, reducing the amount of unnecessary texture data that is streamed.
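As a rough illustration of the tiling idea (and not a description of id's or AMD's actual implementation), the Python sketch below works out which tiles of a texture cover a requested rectangle of texels. The function name `tiles_for_region`, the 128-texel tile edge, and the 16384 x 16384 texture size are all assumptions made for the example.

```python
def tiles_for_region(x0, y0, x1, y1, tile_edge=128):
    """Return the (column, row) index of every tile touched by the
    half-open texel rectangle [x0, x1) x [y0, y1)."""
    if x1 <= x0 or y1 <= y0:
        return []  # empty region, nothing to load
    first_col, last_col = x0 // tile_edge, (x1 - 1) // tile_edge
    first_row, last_row = y0 // tile_edge, (y1 - 1) // tile_edge
    return [(col, row)
            for row in range(first_row, last_row + 1)
            for col in range(first_col, last_col + 1)]


# A 200 x 100 texel window starting at texel (100, 50).
needed = tiles_for_region(100, 50, 300, 150)

# Tile count of a hypothetical 16384 x 16384 texture, for comparison.
total = (16384 // 128) ** 2
print(f"{len(needed)} of {total} tiles needed")  # 6 of 16384 tiles needed
```

Only the handful of tiles under the window would need to be streamed in; everything else can stay out of video memory until it is actually sampled.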

Currently MegaTexture does this entirely in software using existing OpenGL 3.2 APIs, but AMD believes that more next-generation game engines will use this type of texturing technology. That makes it worth targeting: if AMD can implement it faster in hardware and get developers to use it, then it will improve game performance on their cards. Again this is similar to shadow volumes, where hardware implementations sped up the process.

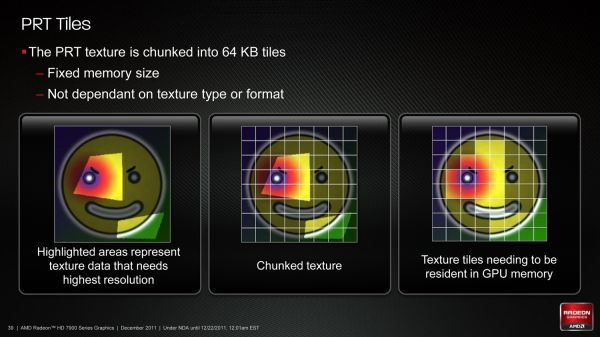

In order to implement this in hardware AMD has to handle two things: texture conversion and cache management. With texture conversion, textures need to be read and broken up into tiles; AMD is going with a texture format agnostic method here that can simply chunk textures as they stand, keeping the resulting tiles in the same format. For AMD’s technology each tile will be 64KB, which for an uncompressed 32-bit texture is enough room for a 128 x 128 texel chunk.
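The 128 x 128 figure follows directly from the tile size: 64KB is 65,536 bytes, and at 4 bytes per texel that is 16,384 texels, or 128 on a side. A minimal Python check of that arithmetic is below; the helper name `square_tile_edge` is made up for this example, and it only models square tiles.

```python
import math

TILE_BYTES = 64 * 1024  # 64KB tile size quoted for AMD's PRT


def square_tile_edge(bits_per_texel):
    """Edge length, in texels, of a square tile holding TILE_BYTES of data."""
    texels = TILE_BYTES * 8 // bits_per_texel
    edge = math.isqrt(texels)
    if edge * edge != texels:
        # Not modeled here: formats whose texel count is not a perfect square.
        raise ValueError("tile is not square at this texel size")
    return edge


print(square_tile_edge(32))  # 128
```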

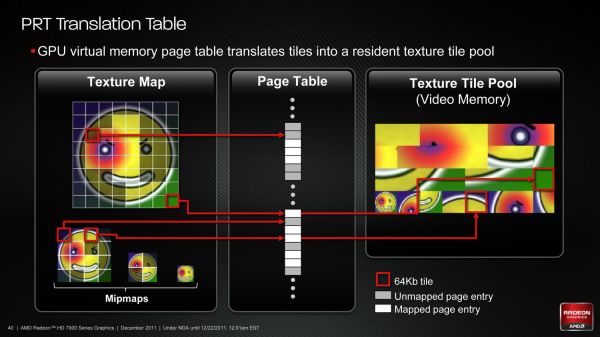

The second aspect of PRT is managing the tiles. In essence PRT turns local video memory into a very large cache, where tiles are mapped/pinned as necessary and then evicted according to the cache rules, while the hardware handles page/tile translation should a tile not already be in the cache. Large tomes have been written on caching methods, and this aspect is of particular interest to AMD because what they learn about caching here they can apply to other graphics workloads (i.e. professional) and not just gaming.
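To make the cache analogy concrete, here is a toy tile cache in Python. It is only a sketch under stated assumptions: the article does not say which eviction rules AMD uses, so least-recently-used (LRU) eviction is assumed here, and the `TileCache` class, its `pin`/`unpin` methods, and the `load_tile` callback are hypothetical names invented for the example.

```python
from collections import OrderedDict


class TileCache:
    """Toy LRU cache of texture tiles; pinned tiles are never evicted."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.tiles = OrderedDict()  # tile id -> data, least recent first
        self.pinned = set()

    def pin(self, tile_id):
        self.pinned.add(tile_id)

    def unpin(self, tile_id):
        self.pinned.discard(tile_id)

    def get(self, tile_id, load_tile):
        """Return tile data, calling load_tile(tile_id) on a miss."""
        if tile_id in self.tiles:
            self.tiles.move_to_end(tile_id)  # mark as most recently used
            return self.tiles[tile_id]
        data = load_tile(tile_id)  # miss: fetch from slower storage
        self.tiles[tile_id] = data
        self._evict()
        return data

    def _evict(self):
        # Drop least recently used, unpinned tiles until the cache fits.
        for victim in list(self.tiles):
            if len(self.tiles) <= self.capacity:
                break
            if victim not in self.pinned:
                del self.tiles[victim]


loads = []


def load_tile(tile_id):
    loads.append(tile_id)  # record every trip to slower storage
    return f"data for {tile_id}"


cache = TileCache(capacity=2)
cache.get((0, 0), load_tile)
cache.get((1, 0), load_tile)
cache.get((0, 0), load_tile)  # hit: (0, 0) becomes most recently used
cache.get((2, 0), load_tile)  # miss: evicts (1, 0)
print(sorted(cache.tiles))    # [(0, 0), (2, 0)]
```

In the hardware version described above, the lookup and translation step would be handled by the GPU rather than by application code; the sketch only shows the bookkeeping that a cache of this kind implies.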

To that end AMD put together a technology demo for PRT based on Per-Face Texture Mapping (Ptex), a Disney-developed texture mapping technique that maps textures to polygons in a 1:1 ratio. Disney uses this technique for production rendering, as by constraining each texture to a single polygon they don’t have to deal with any of the complexities that arise from mapping a texture over multiple polygons. AMD’s demo benefits from Ptex not only for the reasons that Disney uses it, but also because when combined with tessellation it trivializes vector displacement, making art for tessellated games much easier to create. Finally, PRT fits into all of this by improving the efficiency of accessing and storing the Ptex texture chunks.

Wrapping things up, for the time being software support will remain limited even though Southern Islands brings hardware support for PRT. As D3D is not normally extensible, the feature is only easily accessible from other APIs (e.g. OpenGL), which when it comes to games is going to greatly limit the adoption of the technology. AMD of course is working on the issue, but there are few ways around D3D’s tight restrictions on non-standard features.

292 Comments


Zingam - Thursday, December 22, 2011 - link

And at the time when it is available in D3D. AMD's implementation won't be compatible... :D That's sounds familiar. So will have to wait for another generation to get the things right.

Ryan Smith - Thursday, December 22, 2011 - link

As for your question about FP64, it's worth noting that of the FP64 rates AMD listed for GCN, "0" was not explicitly an option. It's quite possible that anything using GCN will have at a minimum 1/16th FP64.

Sind - Thursday, December 22, 2011 - link

Excellent review thanks Ryan. Looking forward to see what the 7950 performance and pricing will end up. Also to see what nv has up their sleeves. Although I can't shake the feeling amd is holding back.

chizow - Thursday, December 22, 2011 - link

Another great article, I really enjoyed all the state-of-the-industry commentary more than the actual benchmarks and performance numbers.

One thing I may have missed was any coverage at all of GCN. Usually you guys have all those block diagrams and arrows explaining the changes in architecture. I know you or Anand did a write-up on GCN awhile ago, but I may have missed the link to it in this article. Or maybe put a quick recap in there with a link to the full write-up.

But with GCN, I guess we can close the book on AMD's past Vec5/VLIW4 archs as compute failures? For years ATI/AMD and their supporters have insisted it was the better compute architecture, and now we're on the 3rd major arch change since unified shaders, while Nvidia has remained remarkably consistent with their simple SP approach. I think the most striking aspect of this consistency is that you can run any CUDA or GPU accelerated apps on GPUs as old as G80, while you even noted you can't even run some of the most popular compute apps on 7970 because of arch-specific customizations.

I also really enjoyed the ISV and driver/support commentary. It sounds like AMD is finally serious about "getting in the game" or whatever they're branding it nowadays, but I have seen them ramp up their efforts with their logo program. I think one important thing for them to focus on is to get into more *quality* games rather than just focusing on getting their logo program into more games. Still, as long as both Nvidia and AMD are working to further the compatibility of their cards without pushing too many vendor-specific features, I think that's a win overall for gamers.

A few other minor things:

1) I believe Nvidia will soon be countering MLAA with a driver-enabled version of their FXAA. While FXAA is available to both AMD and Nvidia if implemented in-game, providing it driver-side will be a pretty big win for Nvidia given how much better performance and quality it offers over AMD's MLAA.

2) When referring to active DP adapter, shouldn't it be DL-DVI? In your blurb it said SL-DVI. Its interesting they went this route with the outputs, but providing the active adapter was definitely a smart move. Also, is there any reason GPU mfgs don't just add additional TMDS transmitters to overcome the 4x limitation? Or is it just a cost issue?

3) The HDMI discussion is a bit fuzzy. HDMI 1.4b specs were just finalized, but haven't been released. Any idea whether or not SI or Kepler will support 1.4b? Biggest concern here is for 120Hz 1080p 3D support.

Again, thoroughly enjoyed reading the article, great job as usual!

Ryan Smith - Thursday, December 22, 2011 - link

Thanks for the kind words.

Quick answers:

2) No, it's an active SL-DVI adapter. DL-DVI adapters exist, but are much more expensive and more cumbersome to use because they require an additional power source (usually USB).

As for why you don't see video cards that support more than 2 TMDS-type displays, it's both an engineering and a cost issue. On the engineering side each TMDS source (and thus each supported TMDS display) requires its own clock generator, whereas DisplayPort only requires 1 common clock generator. On the cost side those clock generators cost money to implement, but using TMDS also requires paying royalties to Silicon Image. The royalty is on the order of cents, but AMD and NVIDIA would still rather not pay it.

3) SI will support 1080P 120Hz frame packed S3D.

ericore - Thursday, December 22, 2011 - link

Core Next: It appears AMD is playing catchup to Nvidia's Cuda, but to an extent that halves the potential performance metrics; I see no other reason why they could not have achieved at varying 25-50% improvement in FPS. That is going to cost them, not just for marginally better performance 5-25%, but they are price matching GTX 580 which means less sales though I suppose people who buy 500$ + GPUs buy them no matter what. Though in this case, they may wait to see what Nvidia has to offer.

Other New AMD GPUs: Will be releasing in February and April are based on the current architecture, but with two critical differences; smaller node + low power based silicon VS the norm performance based silicon. We will see very similar performance metrics, but the table completely flips around: we will see them, cheaper, much more power efficient and therefore very quiet GPUs; I am excited though I would hate to buy this and see Nvidia deliver where AMD failed.

Thanks Anand, always a pleasure reading your articles.

Angrybird - Thursday, December 22, 2011 - link

any hint on 7950? this card should go head to head with gtx580 when it release. good job for AMD, great review for Ryan!

ericore - Thursday, December 22, 2011 - link

I should add with over 4 billion transistors, they've added more than 35% more transistors but only squeeze 5-25% improvement; unacceptable. That is a complete fail in that context relative to advancement in gaming. Too much catchup with Nvidia.

Finally - Thursday, December 22, 2011 - link

...that saying? It goes like this:

If you don't show up for a race, you lose by default.

Your favourite company lost, so their fanboys may become green of envydia :)

Besides that - I'd never shell out more than 150€ for a petty GPU, so neither company's product would have appealed to me...

piroroadkill - Thursday, December 22, 2011 - link

Wait, catchup? In my eyes, they were already winning. 6950 with dual BIOS, unlock it to 6970.. unbelievable value.. profit??

Already has a larger framebuffer than the GTX580, so...