AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Crysis: Warhead

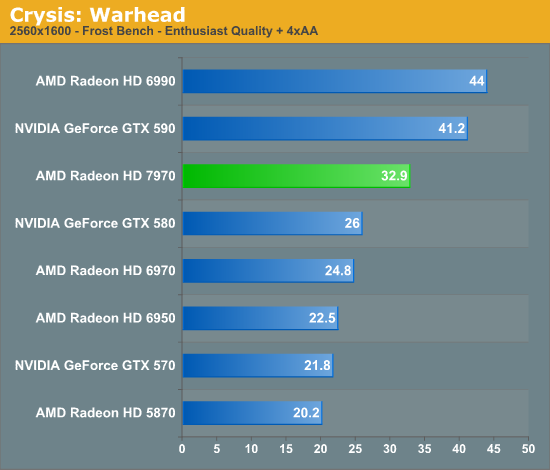

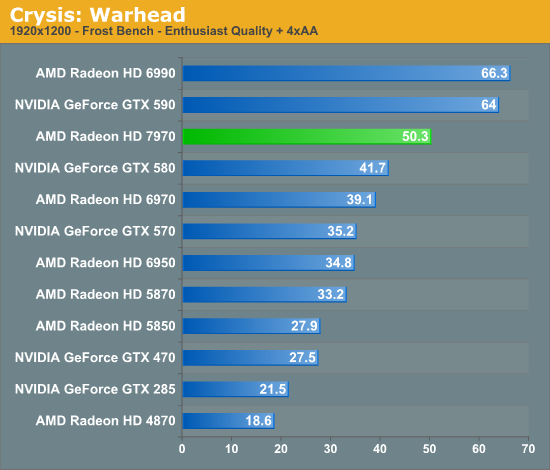

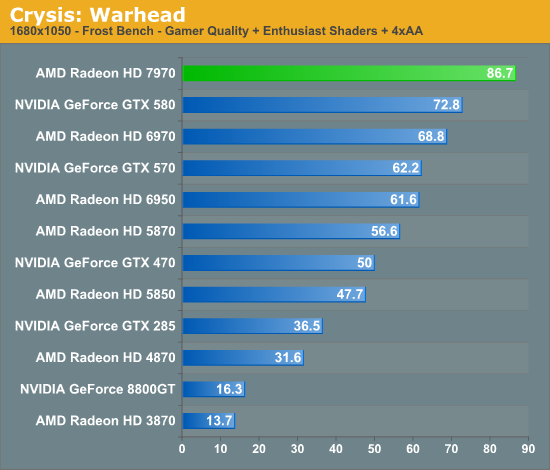

Kicking things off as always is Crysis: Warhead. It’s no longer the toughest game in our benchmark suite, but it’s still a technically complex game that has proven to be a very consistent benchmark. Thus even 4 years after the release of the original Crysis, “but can it run Crysis?” is still an important question, and the answer continues to be “no.” While we’re closer than ever, full Enthusiast settings at 60fps are still beyond the grasp of a single-GPU card.

This year we’ve finally cranked our settings up to full Enthusiast quality for 2560 and 1920, so we can see where the bar lies. As we’d expect, the 7970 comes closer than any single-GPU card before it, but it’s going to take one more jump (~20%) to break 60fps at 1920.

Looking at the 7970 relative to other cards, there are a few specific comparisons to make: the GTX 580 is of course its closest competitor, but we can also see how it does against AMD’s previous leader, the 6970, and how far we’ve come since DX10-generation cards.

One thing that’s clear from the start is that leads still tend to scale with the resolution tested. At 2560 the 7970 enjoys a 26% lead over the GTX 580, but at 1920 that’s only a 20% lead, and it shrinks a bit more to 19% at 1680. The same trend holds against the 6970, as a 32% lead is reduced to 28% and then 26%. If the 7970 needs high resolutions to really stretch its legs, that will be good news for Eyefinity users, but given that most gamers are still on a single monitor it may leave AMD closer to 40nm products in performance than they’d like.
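As a sketch of the arithmetic behind these figures, assuming the conventional definition lead = (fps_a / fps_b - 1) × 100, the Python below computes a percentage lead and checks whether a lead shrinks as resolution drops. The function names are hypothetical, and the inputs in the usage lines are the rounded leads quoted above, not raw benchmark data.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Percentage by which card A's framerate exceeds card B's."""
    if fps_b <= 0:
        raise ValueError("baseline framerate must be positive")
    return (fps_a / fps_b - 1.0) * 100.0


def lead_shrinks_with_resolution(leads: dict) -> bool:
    """Return True if the lead never grows as resolution drops.

    `leads` maps horizontal resolution (e.g. 2560) to a percentage lead.
    """
    # Order the leads from the highest resolution to the lowest.
    ordered = [leads[res] for res in sorted(leads, reverse=True)]
    # Each step down in resolution must not increase the lead.
    return all(higher >= lower for higher, lower in zip(ordered, ordered[1:]))


# Rounded leads quoted in the text, keyed by horizontal resolution.
vs_gtx_580 = {2560: 26, 1920: 20, 1680: 19}
vs_hd_6970 = {2560: 32, 1920: 28, 1680: 26}

print(lead_shrinks_with_resolution(vs_gtx_580))  # True
print(lead_shrinks_with_resolution(vs_hd_6970))  # True
```

Under this definition a lead of 130% means 2.3 times the baseline framerate, not 1.3 times.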

Speaking of 40nm products, both of our dual-GPU entries, the Radeon HD 6990 and GeForce GTX 590, enjoy lofty leads over the 7970 despite its smaller fabrication process. Catching up to those dual-GPU cards would require a 70%+ performance increase over the 6970, and even with a full node difference it’s clear that this is not going to happen. That’s not to say it’s completely out of reach for the 7970 once you start overclocking, but the reduction in power usage when moving from TSMC 40nm to 28nm isn’t nearly large enough to make that happen while staying within the 6970’s power envelope. Dual-GPU owners will continue to enjoy a comfortable lead over even the 7970 for the time being, but with the 7970 built on a 28nm process, the power and temperature tradeoff of those cards is even greater than it was against 40nm products.
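Because relative leads multiply rather than add, the remaining gap between the 7970 and the dual-GPU cards is not simply 70% minus 28%. A rough sketch, assuming the 70% figure applies at 1920 and can be combined with the 7970’s 28% lead over the 6970 at that resolution as exact ratios (both are rounded, and the 70% is a lower bound); `remaining_gap` is a hypothetical helper:

```python
def remaining_gap(target_lead: float, card_lead: float) -> float:
    """Extra speedup (in percent) a card needs to match a target card.

    Both leads are percentages over the same baseline card (here the 6970).
    Relative performance multiplies, so the ratios are divided, not subtracted.
    """
    target_ratio = 1.0 + target_lead / 100.0
    card_ratio = 1.0 + card_lead / 100.0
    return (target_ratio / card_ratio - 1.0) * 100.0


# Assumed inputs: dual-GPU cards ~70% ahead of the 6970 (a lower bound),
# 7970 ~28% ahead of the 6970 at 1920. Both figures are rounded.
gap = remaining_gap(70, 28)
print(round(gap, 1))  # 32.8
print(70 - 28)        # 42, the naive (incorrect) subtraction
```

Treat the result as an approximation: it only indicates that the 7970 would still need roughly another third more performance to draw level.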

Meanwhile, it’s interesting to note just how much progress we’ve made since the DX10 generation: at 1920 the 7970 is 130% faster than the GTX 285 and 170% faster than the Radeon HD 4870. Existing users who skip a generation are a huge market for AMD and NVIDIA, and with this kind of performance they’re in a good position to finally convince those users to make the jump to DX11.

Finally, it should be noted that Crysis is often a good predictor of overall performance trends, and as you will see it hasn’t let us down here. How well the 7970 performs relative to its competition will depend on the specific game, but a 20-25% lead isn’t far off from reality.

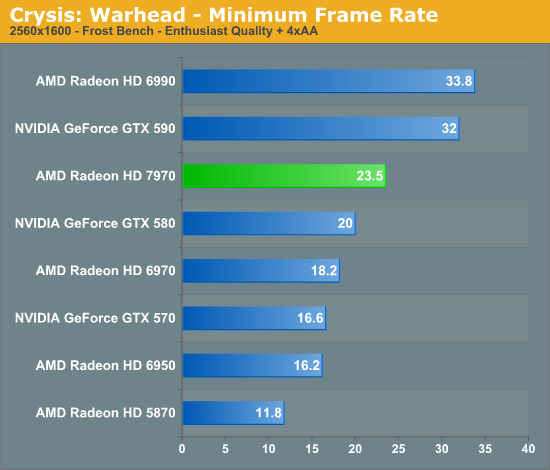

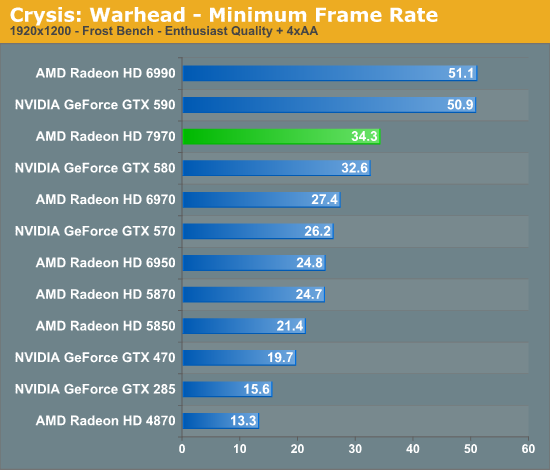

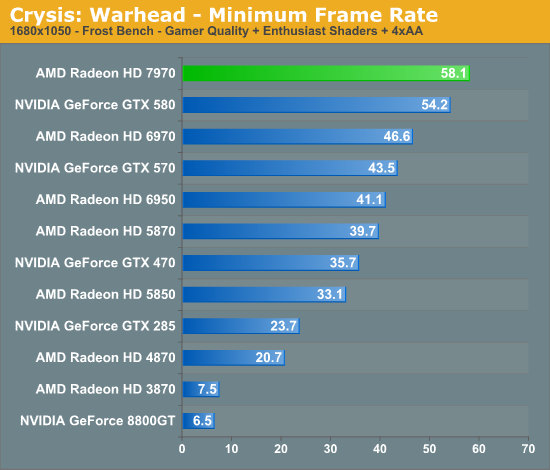

Looking at our minimum framerates, it’s a bit surprising to see that while the 7970 has a clear lead in average framerates, its minimums are only significantly better at 2560. At that resolution the 7970’s lowest framerate is 23.5fps versus 20fps for the GTX 580, but at 1920 that becomes a 2fps, 5% difference. It’s not that the 7970 was any less smooth in playing Crysis, but in those few critical moments it looks to be dipping a bit more than the GTX 580.
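The minimum framerate figures can be cross-checked with a little arithmetic. A minimal sketch, assuming the quoted percentage is relative to the GTX 580’s minimum: a 2fps gap that amounts to 5% implies a GTX 580 minimum of roughly 40fps at 1920. Both helper names are hypothetical, and since the 5% is rounded, the implied baseline is approximate.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Percentage by which framerate A exceeds framerate B."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_baseline(diff_fps: float, diff_percent: float) -> float:
    """Baseline framerate implied by a gap quoted in both fps and percent.

    Assumes the percentage is relative to the slower card's framerate.
    """
    return diff_fps * 100.0 / diff_percent


# 2560: 23.5fps (7970) vs 20fps (GTX 580) minimums.
print(round(percent_lead(23.5, 20.0), 1))  # 17.5
# 1920: a 2fps gap described as 5%; the 5% is rounded, so this is approximate.
print(implied_baseline(2.0, 5.0))  # 40.0
```

The roughly 17.5% minimum framerate lead at 2560 against about 5% at 1920 is what makes the 2560 result stand out.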

Compared to the 6970, on the other hand, the minimum framerate difference is much larger and much more consistent. At 2560 the 7970’s minimums are 29% better, and even at lower resolutions the lead holds at around 25%. Clearly, even with a new architecture, AMD’s designs still inherit some of the traits of their older designs.

292 Comments


nitro912gr - Thursday, December 22, 2011

I was planning a switch from AMD (4850) to an nVidia GPU for my next upgrade, because they perform well in both computing and gaming, and I need both covered here. But now I'm not sure about that; I will wait a bit to see how the software welcomes the new architecture first.

I hope they work equally well so I can just pick the cheapest GPU.

Chloiber - Thursday, December 22, 2011

The thing I still don't like about the new AMD cards is their massive problems with anisotropic filtering. AMD promised twice (with Cayman and Tahiti) that the "AF-Bug" is gone, but it's still inferior to NV's and worse than older cards (pre-R600). The bad thing about this is that it's easily detectable in games and not just a theoretical flaw. It's better than on Cayman, but still worse than NV's AF.

CeriseCogburn - Thursday, March 8, 2012

Yes, thank you for that. We are supposed to ignore all amd radeon issues and failures and less-thans, though, so we can extol the greatness.... Then when they "finally catch up to nvidia years later with some fix", the reviewers can tell us and admit openly that amd radeon has a years-long problem of inferiority to nvidia that they "finally solved", and then we can get a gigantic zipped-up download to show that what "was for years amd fail hidden and not spoken of" is gone!

Hurrah !

Wow it's so much fun seeing it happen, again.

KoVaR - Thursday, December 22, 2011

Awesome job on power consumption and noise levels. If only AMD did so well in the CPU realm...

alpha754293 - Thursday, December 22, 2011

Can you play a game while running a compute job? There's word that even the nVidia Tesla compute accelerators (based on Fermi) stutter when you try to play a game or video while they are actively computing something else.

Is that the case here too?

SlyNine - Thursday, December 22, 2011

I'm sure it does. Context switching still incurs a huge penalty.

MrSpadge - Thursday, December 22, 2011

GCN won't be able to help this on its own; the software needs to catch up. It's a major concern for true GP-GPU and heterogeneous computing, though! And it's not even just launching a game: trying to use your desktop is enough of a problem already.

MrS

shin0bi272 - Thursday, December 22, 2011

What I'd really like to see is this: when you guys bench an nVidia PhysX game, run the bench once with PhysX on (maxed out if there's an option) and once with it off. I know everyone is going to claim that PhysX is a gimmick, but a good portion of the reason is that when a game supports it NO ONE BENCHMARKS IT WITH IT ON! That's like buying a big screen TV and covering half of it with duct tape. And let's not forget AMD opted not to use the tech when nVidia offered it to them... so AMD's loss is nVidia's gain, and no one uses it in their reviews because it's not hardware neutral. That's partial favoritism IMHO.

Also, why weren't the GTX 590 and the 6990 tested @ 16x10 DX10 HQ 16xAF on Metro 2033? The 580 and the 6970 were tested, but not the dual-chip cards. What's up with that?

Finally - Thursday, December 22, 2011

Spoken like a true Nvidia viral marketing shill

shin0bi272 - Friday, December 23, 2011

So because I prefer the extra eye candy PhysX offers, I can't ask a question about testing methodology? Sounds like someone has PhysX envy.