AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Building Tahiti & The Southern Islands

Now that we’ve had a chance to go over the basis of the Graphics Core Next architecture, let’s talk about the finished products.

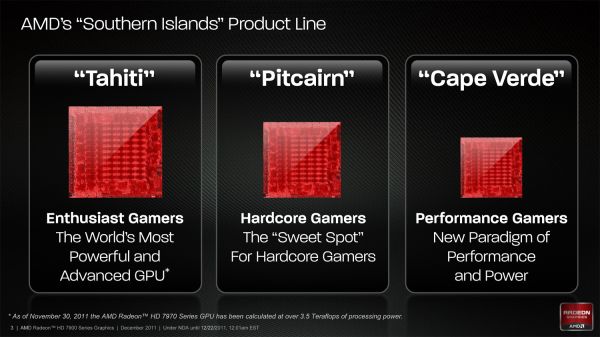

Today AMD will be launching Tahiti, the first GPU of the Southern Islands family. Southern Islands will initially be composed of 3 GPUs: Tahiti, Pitcairn, and Cape Verde. Tahiti is the largest and most powerful member of the Southern Islands family, while Pitcairn and Cape Verde get progressively smaller. AMD has not yet announced the branding or launch dates for Pitcairn and Cape Verde, but it typically takes AMD around 6 months to launch a complete family. As such it’s reasonable to expect that all 3 GPUs will have launched by the end of June, although there’s a good likelihood of it happening sooner than that.

All 3 GPUs are based on the GCN architecture, and as family members will have similar features while varying the number of functional units accordingly. Along with the architecture change Southern Islands brings with it a slew of additional features that we’ll get to in the following pages, including Partially Resident Texture (PRT) support, PCIe 3.0, FastHDMI, Direct3D 11.1, and AMD’s fixed-function H.264 encoder, the Video Codec Engine.

But today is all about Tahiti, so let’s get down to business.

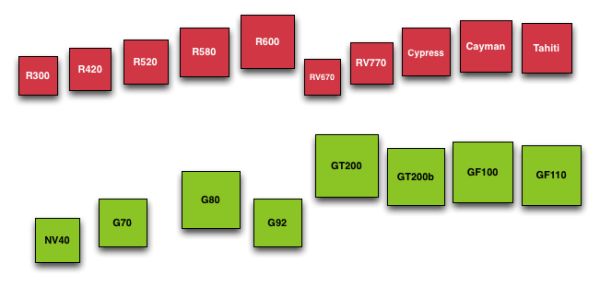

As we quickly covered in our introduction, Tahiti is a 4.31B transistor GPU based on the GCN architecture and built on TSMC’s new 28nm High-K process. Due to TSMC canceling their 32nm process last year AMD has had to wait over 2 years for the next full node rather than taking advantage of the half-node process as they typically do, and as a result the jump from Cayman at 40nm to Tahiti at 28nm is much bigger than with past product launches. Whereas Cayman had 2.64B transistors and a die size of 389mm², Tahiti has a whopping 63% more transistors than Cayman and yet it’s still smaller, coming in at a slightly more petite 365mm².
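To put the density jump in concrete terms, here is a quick back-of-the-envelope sketch using only the transistor counts and die sizes quoted above. The helper name and the "ideal" area-scaling comparison are our own illustrative additions (real processes never shrink perfectly with the node name), so treat the last figure as a rough yardstick rather than a measured value.

```python
# Figures quoted in the text above.
CAYMAN_TRANSISTORS = 2.64e9
CAYMAN_DIE_MM2 = 389
TAHITI_TRANSISTORS = 4.31e9
TAHITI_DIE_MM2 = 365


def density_mtr_per_mm2(transistors, die_mm2):
    """Average density in millions of transistors per square millimetre."""
    return transistors / die_mm2 / 1e6


# Relative increase in transistor count (the "63% more" figure).
transistor_increase = TAHITI_TRANSISTORS / CAYMAN_TRANSISTORS - 1

cayman_density = density_mtr_per_mm2(CAYMAN_TRANSISTORS, CAYMAN_DIE_MM2)
tahiti_density = density_mtr_per_mm2(TAHITI_TRANSISTORS, TAHITI_DIE_MM2)
density_gain = tahiti_density / cayman_density

# Assumption: an idealized shrink scales area with the square of the node
# name (40nm -> 28nm). This is a simplification, not a TSMC specification.
ideal_density_gain = (40 / 28) ** 2

print(f"Transistor increase: {transistor_increase:.0%}")
print(f"Cayman density: {cayman_density:.2f} MTr/mm^2")
print(f"Tahiti density: {tahiti_density:.2f} MTr/mm^2")
print(f"Density gain: {density_gain:.2f}x (idealized: {ideal_density_gain:.2f}x)")
```

By this rough measure Tahiti packs a bit under twice as many transistors into each square millimetre as Cayman did.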

At this point AMD hasn’t provided us with the typical board power values for 7970, but we do know that PowerTune is limited to 250W. In terms of design 7970 is clearly intended to work in similar environments as the 6970, in which case power consumption should be similar to the 6970.

Interestingly enough however we’re hearing that 7970 cards are proving to be very overclockable, which is a good sign for the state of TSMC’s 28nm process, and at the same time a bit distressing. Moore’s Law has continued to hold with respect to transistor density, but the power consumption benefits of using smaller nodes have continued to wane. Having a lot of overclocking headroom means that the 7970 has the potential to be much faster, but it also means that the 7970 (and 28nm GPUs in general) is going to be bottlenecked by power. Since we’re already approaching 300W with single-GPU video cards, the performance gains realized from future fabrication processes would then be limited to the ever-diminishing returns on power consumption improvements.

Diving deeper into Tahiti, as per the GCN architecture Tahiti’s 2048 SPs are organized into 32 Compute Units. Each of these CUs contains 4 texture units and 4 SIMD units, along with a scalar unit and the appropriate cache and registers. At the 7970’s core clock of 925MHz this puts Tahiti’s theoretical FP32 compute performance at 3.79TFLOPs, while its FP64 performance is ¼ that at 947GFLOPs. As GCN’s FP64 performance can be configured for 1/16, ¼, or ½ its FP32 performance it’s not clear at this time whether the 7970’s ¼ rate was a hardware design decision for Tahiti or a software cap that’s specific to the 7970. However as it’s obvious that Tahiti is destined to end up in a FireStream card we will no doubt find out soon enough.
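The throughput figures above fall straight out of the unit counts and the clock speed. The sketch below reproduces them; the one input not spelled out in this section is the assumption that each SP can retire one fused multiply-add (2 floating point operations) per clock, and the function name is ours.

```python
# Unit counts and clock quoted in the text above.
STREAM_PROCESSORS = 2048
COMPUTE_UNITS = 32
SIMDS_PER_CU = 4
CORE_CLOCK_MHZ = 925

# Assumption: one fused multiply-add per SP per clock, counted as 2 FLOPs.
FLOPS_PER_SP_PER_CLOCK = 2

sps_per_cu = STREAM_PROCESSORS // COMPUTE_UNITS   # SPs in each Compute Unit
lanes_per_simd = sps_per_cu // SIMDS_PER_CU       # SPs in each SIMD unit


def peak_gflops(sps, clock_mhz, rate=1.0):
    """Theoretical peak in GFLOPs; `rate` scales FP32 down to an FP64 ratio."""
    return sps * FLOPS_PER_SP_PER_CLOCK * clock_mhz / 1000 * rate


fp32_gflops = peak_gflops(STREAM_PROCESSORS, CORE_CLOCK_MHZ)

# The three FP64 ratios the text says GCN can be configured for.
fp64_gflops = {
    "1/16": peak_gflops(STREAM_PROCESSORS, CORE_CLOCK_MHZ, 1 / 16),
    "1/4": peak_gflops(STREAM_PROCESSORS, CORE_CLOCK_MHZ, 1 / 4),
    "1/2": peak_gflops(STREAM_PROCESSORS, CORE_CLOCK_MHZ, 1 / 2),
}

print(f"{sps_per_cu} SPs per CU, {lanes_per_simd} SPs per SIMD")
print(f"FP32 peak: {fp32_gflops / 1000:.2f} TFLOPs")
for ratio, gflops in fp64_gflops.items():
    print(f"FP64 at {ratio} rate: {gflops:.1f} GFLOPs")
```

The 7970's ¼ rate corresponds to the middle entry; a hypothetical ½ rate part at the same clock would double that figure.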

Meanwhile the frontend/command processor for Tahiti is composed of 2 Asynchronous Command Engines (ACEs) and 2 geometry engines. Just as with Cayman each geometry engine can dispatch 1 triangle per clock, giving Tahiti the same theoretical 2 triangle/clock rate as Cayman. As we’ll see however, in practice Tahiti will be much faster than Cayman here due to efficiency improvements.
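For reference, the theoretical geometry rate can be worked out the same way. This is only the paper number: it says nothing about the efficiency improvements mentioned above. Cayman's 880MHz core clock (the Radeon HD 6970) is not stated in this section and is included here as an outside figure for comparison.

```python
# From the text: 2 geometry engines, 1 triangle per engine per clock.
GEOMETRY_ENGINES = 2
TRIANGLES_PER_ENGINE_PER_CLOCK = 1
TAHITI_CLOCK_MHZ = 925

# Assumption: Radeon HD 6970 core clock, not quoted in this section.
CAYMAN_CLOCK_MHZ = 880


def peak_mtris_per_sec(engines, tris_per_clock, clock_mhz):
    """Theoretical peak setup rate in millions of triangles per second."""
    return engines * tris_per_clock * clock_mhz


tahiti_peak = peak_mtris_per_sec(
    GEOMETRY_ENGINES, TRIANGLES_PER_ENGINE_PER_CLOCK, TAHITI_CLOCK_MHZ
)
cayman_peak = peak_mtris_per_sec(
    GEOMETRY_ENGINES, TRIANGLES_PER_ENGINE_PER_CLOCK, CAYMAN_CLOCK_MHZ
)

print(f"Tahiti peak: {tahiti_peak} Mtris/s")
print(f"Cayman peak: {cayman_peak} Mtris/s")
```

On paper the two are separated only by clock speed, which is why any larger real-world gap has to come from efficiency.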

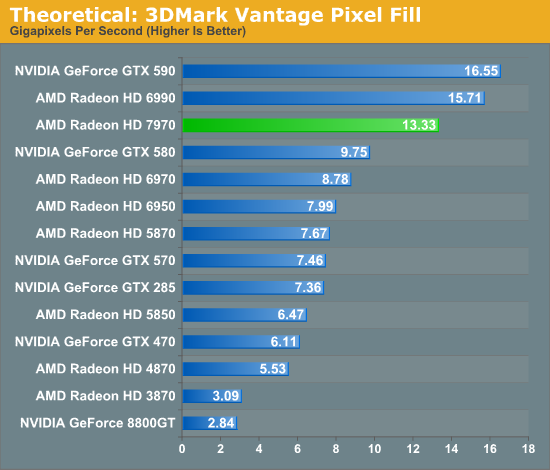

Looking beyond the frontend and shader cores, we’ve seen a very interesting reorganization of the rest of the GPU compared to Cayman. Keeping in mind that AMD’s diagrams are logical diagrams rather than physical diagrams, the fact that the ROPs on Tahiti are not located near the L2 cache and memory controllers in the diagram is not an error. The ROPs have in fact been partially decoupled from the L2 cache and memory controllers, which is also why there are 8 ROP partitions but only 6 memory controllers. Traditionally the ROPs, L2 cache, and memory controllers have all been tightly integrated as ROP operations are extremely bandwidth intensive, making this a very unusual design for AMD to use.

As it turns out, there’s a very good reason that AMD went this route. ROP operations are extremely bandwidth intensive, so much so that even when pairing up ROPs with memory controllers, the ROPs are often still starved of memory bandwidth. With Cayman AMD was not able to reach their peak theoretical ROP throughput even in synthetic tests, never mind in real-world usage. With Tahiti AMD would need to improve their ROP throughput one way or another to keep pace with future games, but because of the low efficiency of their existing ROPs they didn’t need to add any more ROP hardware; they merely needed to improve the efficiency of what they already had.

The solution to that was rather counter-intuitive: decouple the ROPs from the memory controllers. By servicing the ROPs through a crossbar AMD can hold the number of ROPs constant at 32 while increasing the width of the memory bus by 50%. The end result is that the same number of ROPs perform better by having access to the additional bandwidth they need.
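A simple way to see what the crossbar buys is to look at how much memory bus each ROP has behind it. The sketch below uses the ROP and partition counts from the text, plus Cayman's 256bit bus as implied by the 50% figure; the per-ROP split is purely an average for illustration, not a description of how the crossbar actually arbitrates traffic.

```python
# From the text: 32 ROPs in 8 partitions on Tahiti, 32 ROPs on Cayman too.
ROPS = 32
ROP_PARTITIONS = 8
CAYMAN_BUS_BITS = 256
TAHITI_BUS_BITS = 384

rops_per_partition = ROPS // ROP_PARTITIONS


def bus_bits_per_rop(bus_bits, rops):
    """Average memory bus width available per ROP (illustrative only)."""
    return bus_bits / rops


cayman_share = bus_bits_per_rop(CAYMAN_BUS_BITS, ROPS)
tahiti_share = bus_bits_per_rop(TAHITI_BUS_BITS, ROPS)
share_gain = tahiti_share / cayman_share

print(f"{rops_per_partition} ROPs per partition")
print(f"Cayman: {cayman_share:.0f} bits of bus per ROP")
print(f"Tahiti: {tahiti_share:.0f} bits of bus per ROP")
print(f"Gain at equal memory clocks: {share_gain:.2f}x")
```

With the ROP count fixed, every bit of extra bus width goes directly toward feeding the existing hardware.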

The big question right now, and one we don’t have an answer to, is what were the tradeoffs for decoupling the ROPs? Clearly the crossbar design has improved ROP performance through the amount of memory bandwidth they can access, but did it impact anything else? The most obvious tradeoff here would be potentially higher latency, but there may be other aspects that we haven’t realized yet.

On that note, let’s discuss the memory controllers quickly. Tahiti’s memory controllers aren’t significantly different from Cayman’s but there are more of them, 50% more in fact, forming a 384bit memory bus. AMD has long shied away from non-power of 2 memory busses, and indeed the last time they even had a memory bus bigger than 256bits was with the ill-fated 2900XT, but at this point in time AMD has already nearly reached the practical limits of GDDR5. AMD’s ROPs needed more memory bandwidth, but even more than that AMD needed more memory bandwidth to ensure Tahiti had competitive compute performance, and as such they had little choice but to widen their memory bus to 384bits by adding another 2 memory controllers.
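The relationship between controller count, bus width, and bandwidth is easy to sketch. The 64 bits per controller follows from the 384bit bus and 6 controllers described in the text. The GDDR5 data rate, on the other hand, is not given in this section, so the 5.5Gbps per pin used below is an assumed value for illustration; swap in whatever rate applies.

```python
# From the text: 6 controllers form a 384bit bus, so each is 64 bits wide.
TAHITI_CONTROLLERS = 6
CAYMAN_CONTROLLERS = 4
BITS_PER_CONTROLLER = 384 // TAHITI_CONTROLLERS

# Assumption: per-pin GDDR5 data rate, not stated in this section.
ASSUMED_DATA_RATE_GBPS = 5.5


def bus_width_bits(controllers):
    """Total memory bus width for a given number of controllers."""
    return controllers * BITS_PER_CONTROLLER


def bandwidth_gb_per_s(bus_bits, data_rate_gbps):
    """Peak bandwidth: bus width in bytes times the per-pin data rate."""
    return bus_bits / 8 * data_rate_gbps


tahiti_bus = bus_width_bits(TAHITI_CONTROLLERS)
cayman_bus = bus_width_bits(CAYMAN_CONTROLLERS)
tahiti_bw = bandwidth_gb_per_s(tahiti_bus, ASSUMED_DATA_RATE_GBPS)
cayman_bw = bandwidth_gb_per_s(cayman_bus, ASSUMED_DATA_RATE_GBPS)

print(f"Tahiti: {tahiti_bus}bit bus, {tahiti_bw:.0f} GB/s")
print(f"Cayman: {cayman_bus}bit bus, {cayman_bw:.0f} GB/s")
```

At any fixed data rate the extra 2 controllers translate into 50% more peak bandwidth, which is the whole point when GDDR5 clocks have little room left to grow.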

It’s worth noting though that the addition of 2 more memory controllers also improves AMD’s cache situation. With 128KB of L2 cache being tied to each memory controller, the additional controllers gave AMD 768KB of L2 cache, rather than the 512KB that a 256bit memory bus would be paired with.
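Because L2 is tied to the memory controllers, cache capacity scales with the bus in the same way. A minimal sketch using the 128KB per controller figure from the text (the function name is ours):

```python
# From the text: 128KB of L2 cache is tied to each memory controller.
L2_KB_PER_CONTROLLER = 128


def l2_total_kb(controllers):
    """Total L2 capacity for a given number of memory controllers."""
    return controllers * L2_KB_PER_CONTROLLER


tahiti_l2_kb = l2_total_kb(6)        # 384bit bus, 6 controllers
hypothetical_l2_kb = l2_total_kb(4)  # what a 256bit bus would have provided

print(f"6 controllers: {tahiti_l2_kb}KB of L2")
print(f"4 controllers: {hypothetical_l2_kb}KB of L2")
```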

292 Comments

RussianSensation - Thursday, December 22, 2011

That's not what the review says. The review clearly explains that it's the best single-GPU for gaming. There is nothing biased about not being mind-blown by having a card that's only 25% faster than GTX580 and 37% faster than HD6970 on average, considering this is a brand new 28nm node. Name a single generation where AMD's next generation card improved performance so little since Radeon 8500? There isn't any!

SlyNine - Friday, December 23, 2011

2900XT ? But I Don't remember if that was a new node and what the % of improvement was beyond the 1950XT. But still this is a 500$ card, and I don't think its what we have come to expect from a new node and generation of card. However some people seem more then happy with it, Guess they don't remember the 9700PRO days.

takeulo - Thursday, December 22, 2011

as ive read the review this is not a disappointment infact its only a single gpu card but it toughly competing or nearly chasing with the dual gpu's graphics card like 6990 and gtx 590 performance...imagine that 7970 is also a dual gpu?? it will tottally dominate the rest... sorry for my bad english..

eastyy - Thursday, December 22, 2011

the price vs performance is the most important thing for me at the moment i have a 460 that cost me about £160 at the time and that was a few years ago...seems like the cards now for the same price dont really give that much of a increase

Morg. - Thursday, December 22, 2011

What seems unclear to the writer here is that in fact 6-series AMD was better in single GPU than nVidia. Like miles better.

First, the stock 6970 was within 5% of the gtx580 at high resolutions (and excuse me, but if you like a 500 bucks graphics board with a 100 bucks screen ... not my problem -- ).

Second, if you put a 6970 OC'd at GTX580 TDP ... the GTX580 is easily 10% slower.

So overall . seriously ... wake the f* up ?

The only thing nVidia won at with fermi series 2 (gtx5xx) is making the most expensive highest TDP single GPU card. It wasn't faster, they just picked a price point AMD would never target .. and they got i .. wonderful.

However, AMD raped nVidia all the way in perf/watt/dollar as they did with Intel in the Server CPU space since Opteron Istanbul ...

If people like you stopped spouting random crap, companies like AMD would stand a chance of getting the market share their products deserve (sure their drivers are made of shit).

Leyawiin - Thursday, December 22, 2011

The HD 7970 is a fantastic card (and I can't wait to see the rest of the line), but the GTX 580 was indisputably better than the HD 6970. Stock or OC'd (for both).

Morg. - Friday, December 23, 2011

Considering TDP, price and all - no. The 6970 lost maximum 5% to the GTX580 above full HD, and the bigger the resolution, the smaller the GTX advantage.

Every benchmark is skewed, but you should try interpreting rather than just reading the conclusion --

Keep in mind the GTX580 die size is 530mm² whereas the 6970 is 380mm²

Factor that in, aim for the same TDP on both cards . and believe me .. the GTX580 was a complete total failure, and a total loss above full HD.

Yes it WAS the biggest single GPU of its time . but not the best.

RussianSensation - Thursday, December 22, 2011

Your post is ill-informed. When GTX580 and HD6970 are both overclocked, it's not even close. GTX580 destroyed it.

http://www.xbitlabs.com/articles/graphics/display/...

HD6950 was an amazing value card for AMD this generation, but HD6970 was nothing special vs. GTX570. GTX580 was overpriced for the performance over even $370 factory preoverclocked GTX570 cards (such as the almost eerily similar in performance EVGA 797mhz GTX570 card for $369).

All in all, GTX460 ~ HD6850, GTX560 ~ HD6870, GTX560 Ti ~ HD6950, GTX570 ~ HD6970. The only card that had really poor value was GTX580. Of course if you overclocked it, it was a good deal faster than the 6970 that scaled poorly with overclocking.

Morg. - Friday, December 23, 2011

I believe you don't get what I said: AT THE SAME TDP, THE HD6xxx TOTALLY DESTROYED THE GTX 5xx

THAT MEANS : the amd gpu was better even though AMD decided to sell it at a TDP / price point that made it cheaper and less performing than the GTX 5xx

The "destroyed it" statement is full HD resolution only . which is dumb . I wouldn't ever get a top graphics board to just stick with full HD and a cheap monitor.

Peichen - Friday, December 23, 2011

According to your argument, all we'd ever need is IGP because no stand-alone card can compete with IGP at the same TDP / price point.