The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Designing GP104: Running Up the Clocks

So if GP104’s per-unit throughput is identical to GM204, and the CUDA core count has only been increased from 2048 to 2560 (25%), then what makes GTX 1080 60-70% faster than GTX 980? The answer is that instead of vastly increasing the number of functional units for GP104 or increasing per-unit throughput, NVIDIA has opted to significantly raise the GPU clockspeed. And this in turn goes back to the earlier discussion on TSMC’s 16nm FinFET process.
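To put rough numbers on that, here is a minimal back-of-the-envelope sketch. It assumes theoretical shader throughput scales as core count times clockspeed, with per-core, per-clock throughput unchanged, and it borrows the 43% clockspeed gain quoted later on this page. The function name `theoretical_speedup` is made up for illustration.

```python
# Back-of-the-envelope scaling for GP104 vs. GM204, using figures from this article.
# Assumption: theoretical throughput scales as (core count) x (clockspeed),
# with per-core, per-clock throughput unchanged between the two GPUs.

GM204_CORES = 2048  # GTX 980
GP104_CORES = 2560  # GTX 1080
CLOCK_GAIN = 1.43   # the 43% clockspeed increase quoted later on this page


def theoretical_speedup(old_cores: int, new_cores: int, clock_gain: float) -> float:
    """Return the combined throughput ratio from adding cores and raising clocks."""
    return (new_cores / old_cores) * clock_gain


core_gain = GP104_CORES / GM204_CORES
combined = theoretical_speedup(GM204_CORES, GP104_CORES, CLOCK_GAIN)

print(f"Core count gain: {core_gain:.2f}x")
print(f"Clock gain:      {CLOCK_GAIN:.2f}x")
print(f"Combined:        {combined:.2f}x")
```

Under those assumptions the paper ceiling works out to roughly 1.79x. That sits a bit above the 60-70% real-world gain, which is what you would expect given that games rarely scale perfectly with raw shader throughput.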

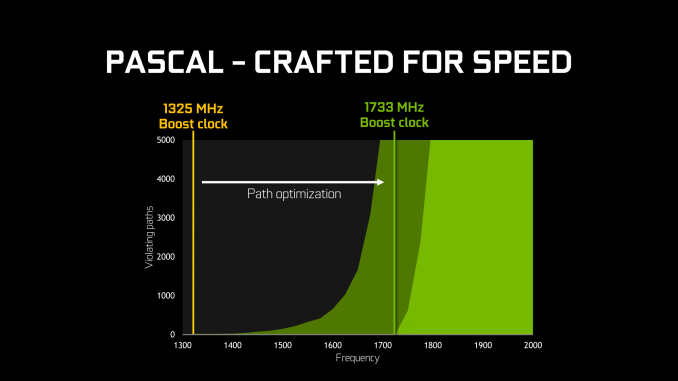

With every advancement in fab technology, chip designers have been able to increase their clockspeeds thanks to the basic physics at play. However because TSMC’s 16nm node adds FinFETs for the first time, it’s extra special. What’s happening here is a confluence of multiple factors, but at the most basic level the introduction of FinFETs means that the entire voltage/frequency curve gets shifted. The reduced leakage and overall “stronger” FinFET transistors can run at higher clockspeeds at lower voltages, allowing for higher overall clockspeeds at the same (or similar) power consumption. We see this effect to some degree with every node shift, but it’s especially potent when making the shift from planar to FinFET, as has been the case for the jump from 28nm to 16nm.
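As a rough illustration of why a lower operating voltage buys clockspeed headroom at the same power, the sketch below uses the textbook CMOS dynamic power approximation, P ≈ a·C·V²·f. This is a simplification and not NVIDIA's or TSMC's model: it ignores leakage entirely, and it simply assumes the transistors can switch at the higher frequency at the lower voltage, which is the part the shifted FinFET voltage/frequency curve provides. The function names and every number here are hypothetical.

```python
# Illustrative only: the classic CMOS dynamic-power approximation P ~ a * C * V^2 * f.
# All numeric values below are hypothetical and are not measurements of any GPU.


def dynamic_power(capacitance: float, voltage: float, frequency: float,
                  activity: float = 1.0) -> float:
    """Dynamic (switching) power under the a * C * V^2 * f approximation."""
    return activity * capacitance * voltage ** 2 * frequency


def iso_power_frequency(f_old: float, v_old: float, v_new: float) -> float:
    """Frequency that keeps dynamic power constant when the voltage moves from
    v_old to v_new, assuming capacitance and activity factor are unchanged."""
    return f_old * (v_old / v_new) ** 2


# Hypothetical example: normalized baseline, then a 10% voltage reduction.
f_old, v_old, v_new = 1.0, 1.0, 0.9
f_new = iso_power_frequency(f_old, v_old, v_new)

print(f"Frequency headroom at equal dynamic power: {f_new / f_old:.3f}x")
print(f"Baseline power: {dynamic_power(1.0, v_old, f_old):.3f}")
print(f"New power:      {dynamic_power(1.0, v_new, f_new):.3f}")
```

Because voltage enters as a square, even a modest voltage reduction frees up a disproportionate amount of frequency headroom in this model, which is the basic intuition behind running higher clocks at the same (or similar) power.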

Given the already significant one-off benefits of such a large jump in the voltage/frequency curve, for Pascal NVIDIA has decided to fully embrace the idea and run up the clocks as much as is reasonably possible. At an architectural level this meant going through the design to identify bottlenecks in the critical paths – logic sections that couldn’t run at as high a frequency as NVIDIA would have liked – and reworking them to operate at higher frequencies. As GPUs have typically been (and still are) relatively low clocked, there hasn’t been as much of a need to optimize critical paths in this manner, but NVIDIA’s loftier clockspeed goals for Pascal changed that.

From an implementation point of view this isn’t the first time that NVIDIA has pushed for high clockspeeds, as most recently the 40nm Fermi architecture incorporated a double-pumped shader clock. However this is the first time NVIDIA has attempted something similar since they reined in their power consumption with Kepler (and later Maxwell). Having learned their lesson the hard way with Fermi, I’m told a lot more care went into matters with Pascal in order to avoid the power penalties NVIDIA paid with Fermi, exemplified by things such as only adding flip-flops where truly necessary.

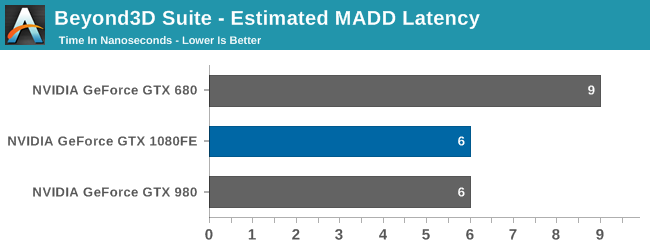

Meanwhile when it comes to the architectural impact of designing for high clockspeeds, the results seem minimal. While NVIDIA does not divulge full information on the pipeline of a CUDA core, all of the testing I’ve run indicates that the latency (in clock cycles) of the CUDA cores is identical to Maxwell, which goes hand in hand with earlier observations about throughput. So although optimizations were made to the architecture to improve clockspeeds, it doesn’t look like NVIDIA has made any more extreme optimizations (e.g. pipeline lengthening) that detectably reduce Pascal’s per-clock performance.

Finally, more broadly speaking, while this is essentially a one-time trick for NVIDIA, it’s an interesting route for them to go. By cranking up their clockspeeds in this fashion, they avoid any real scale-out issues, at least for the time being. Although graphics are the traditional embarrassingly parallel problem, even a graphical workload is subject to some degree of diminishing returns as GPUs scale farther out. A larger number of SMs is more difficult to fill, not every aspect of the rendering process is massively parallel (shadow maps being a good example), and ever-increasing pixel shader lengths compound the problem. Admittedly NVIDIA’s not seeing significant scale-out issues quite yet, but this is why GTX 980 isn’t quite twice as fast as GTX 960, for example.
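One simple way to picture the diminishing returns of scaling out is Amdahl's law, which the article doesn't invoke by name but which captures the same idea: any portion of the work that doesn't spread across more units caps the overall gain. The sketch below is only a toy model, and the 95% parallel fraction is a hypothetical value picked for illustration, not a measured property of any game or GPU.

```python
# Amdahl's law as a rough model of scale-out limits.
# Assumption: PARALLEL_FRACTION is a hypothetical value chosen for illustration.


def amdahl_speedup(parallel_fraction: float, scale: float) -> float:
    """Speedup when only `parallel_fraction` of the work benefits from
    having `scale` times as many execution units."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if scale <= 0:
        raise ValueError("scale must be positive")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / scale)


PARALLEL_FRACTION = 0.95  # hypothetical

for scale in (2, 4, 8):
    speedup = amdahl_speedup(PARALLEL_FRACTION, scale)
    print(f"{scale}x the units -> {speedup:.2f}x the performance")
```

With that hypothetical fraction, doubling the units yields about 1.9x rather than 2x, and the gap widens at every further doubling. A clockspeed increase avoids this particular penalty because the serial portions speed up along with everything else.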

Just increasing the clockspeed, comparatively speaking, means that the entire GPU gets proportionally faster without shifting the resource balance; the CUDA cores are 43% faster, the geometry frontends are 43% faster, the ROPs are 43% faster, etc. The only real limitation in this regard isn’t the GPU itself, but whether you can adequately feed it. And this is where GDDR5X comes into play.

200 Comments

Scali - Wednesday, July 27, 2016

There is hardware to quickly swap task contexts to/from VRAM. The driver can signal when a task needs to be pre-empted, which it can now do at any pixel/instruction.

If I understand Dynamic Load Balancing correctly, you can queue up tasks from the compute partition on the graphics partition, which will start running automatically once the graphics task has completed. It sounds like this is actually done without any interference from the driver.

tamalero - Friday, July 22, 2016

I swear the whole 1080 vs 480X reminds me of the old fight between the 8800 and the 2900XT, which somewhat improved in the 3870 and ended with a winner with the 4870.

I really hope AMD stops messing with the ATI division and lets them drop a winner.

AMD has been sinking ATI and making ATI carry the goddarn load of AMD's processor division failure.

doggface - Friday, July 22, 2016

Excellent article Ryan. I have been reading for several days whenever i can catch five minutes, and it has been quite the read! I look forward to the polaris review. I feel like u should bench these cards day 1, so that the whingers get it out of their system. Then label these reviews the "gp104" review, etc. It really was about the chip and board more than the specific cards....

PolarisOrbit - Saturday, July 23, 2016

After reading the page about Simultaneous Multi Projection, I had a question of whether this feature could be used for more efficiently rendering reflections, like on a mirror or the surface of water. Does anyone know?

KoolAidMan1 - Saturday, July 23, 2016

Great review guys, in-depth and unbiased as always. On that note, the anger from a few AMD fanboys is hilarious, almost as funny as how pissed off the Google fanboys get whenever Anandtech dares say anything positive about an Apple product.

Love my EVGA GTX 1080 SC, blistering performance, couldn't be happier with it

prisonerX - Sunday, July 24, 2016

Be careful, you might smug yourself to death.

KoolAidMan1 - Monday, July 25, 2016

Spotted the fanboy apologist

bill44 - Monday, July 25, 2016

Anyone here knows at least the supported audio sampling rates? If not, I think my best bet is going with AMD (which I'm sure supports 88.2 & 176.4 KHz).

Anato - Monday, July 25, 2016

Thanks for the review! Waited it long, read other's and then come this, this was the best!

Squuiid - Tuesday, July 26, 2016

Here's my Time Spy result in 3DMark for anyone interested in what an X5690 Mac Pro can do with a 1080 running in PCIe 1.1 in Windows 10.

http://www.3dmark.com/3dm/13607976?