NVIDIA Announces GameWorks VR Branding, Adds Multi-Res Shading

by Ryan Smith on May 31, 2015 6:00 PM EST

Alongside the GeForce GTX 980 Ti and G-Sync announcements going on today in conjunction with Computex, NVIDIA is also announcing an update for their suite of VR technologies.

First off, NVIDIA is announcing today that their VR technologies, initially introduced alongside the GeForce GTX 980 in September as VR Direct, are being brought in under the GameWorks umbrella of developer tools. The collection of technologies will now be called GameWorks VR, adding to the already significant collection of GameWorks tools and libraries.

Multi-Res Shading

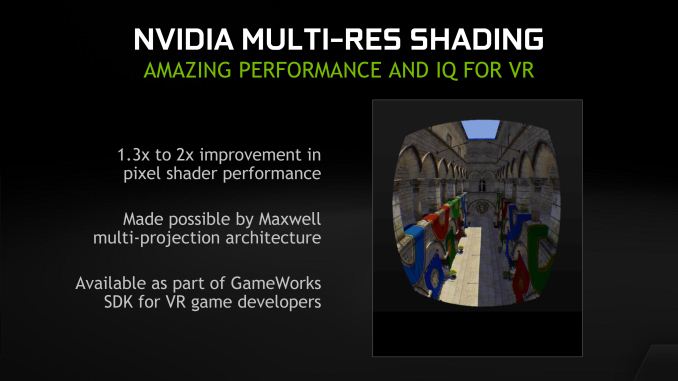

Meanwhile, alongside today’s rebranding, NVIDIA is also announcing a new feature for GameWorks VR: multi-resolution shading, or multi-res shading for short. With multi-res shading, NVIDIA is looking to leverage the Maxwell 2 architecture’s Multi-Projection Acceleration in order to increase rendering efficiency and ultimately the overall performance of their GPUs in VR situations.

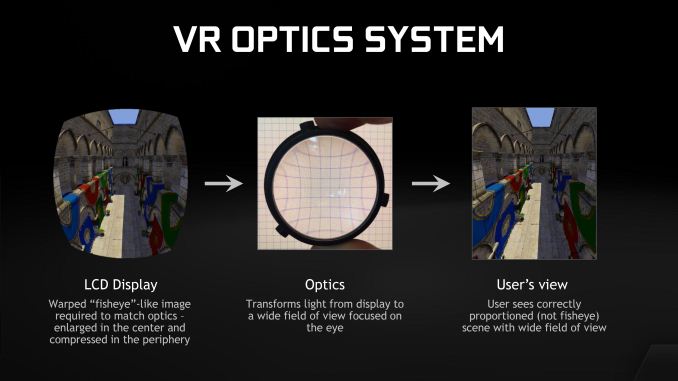

The fundamental issue at play here is how rendering works in conjunction with the optics that go into a VR headset. VR headsets use lenses to help users focus on a screen mere inches from their eyes, and to help compensate for the wide field of vision of the human eye. However, in doing this, these lenses also introduce distortion, which in turn must be compensated for at the rendering phase by similarly distorting the image such that it appears correct to the user after going through the lenses.

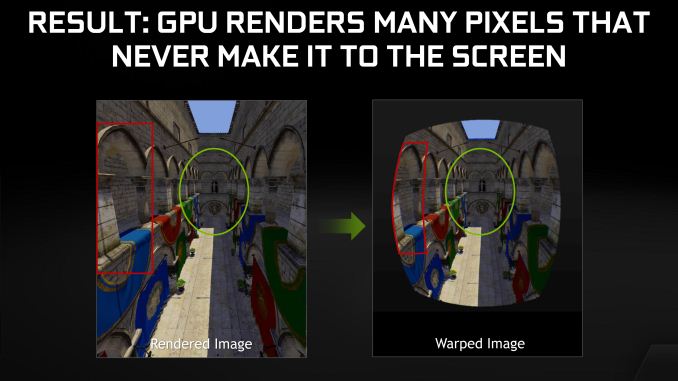

From a rendering perspective none of this is problematic, as distorting a rendered image is actually a pretty straightforward pixel shader operation. However one of the products of this distortion is that the distorted image has less information in it than the original image, due to the combination of the presence of optics in a headset and the fact that the human eye sees more detail at the center than at its edges. The end result is that GPUs are essentially rendering more detail than they need to at the edges of a frame, which in turn introduces some room for psychovisual rendering optimizations.
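
To make the distortion step concrete, here is a minimal Python sketch of the kind of per-pixel remapping a distortion pass performs. It assumes a simple radial polynomial lens model; the coefficients `K1` and `K2` and the function names are illustrative placeholders, not values or APIs from NVIDIA or any headset vendor. The second function estimates how many rendered texels collapse into one output pixel at a given radius, which is one way to see why detail at the edges of the frame is discarded.

```python
# Hypothetical radial distortion coefficients, chosen only for illustration.
K1 = 0.22
K2 = 0.24


def warp(u, v, k1=K1, k2=K2):
    """Map an output pixel to the rendered-image coordinate it samples.

    (u, v) are normalized coordinates centered on the lens axis, so the
    center of the view is (0, 0). The sample point is pushed outward as
    the radius grows, which compresses the image toward the center
    (barrel distortion) to counteract the lens.
    """
    r2 = u * u + v * v
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return u * scale, v * scale


def source_texels_per_output_pixel(r, k1=K1, k2=K2):
    """Radial derivative of the mapping r -> r * (1 + k1*r^2 + k2*r^4).

    A value of 1.0 means one rendered texel per output pixel; larger
    values mean several rendered texels are squeezed into one pixel.
    """
    return 1.0 + 3.0 * k1 * r**2 + 5.0 * k2 * r**4


if __name__ == "__main__":
    for r in (0.0, 0.5, 1.0):
        print(r, source_texels_per_output_pixel(r))
```

Under this assumed model the ratio is exactly 1 at the center and grows toward the edge, so the outer parts of the rendered frame carry more texels than the warped output can show.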

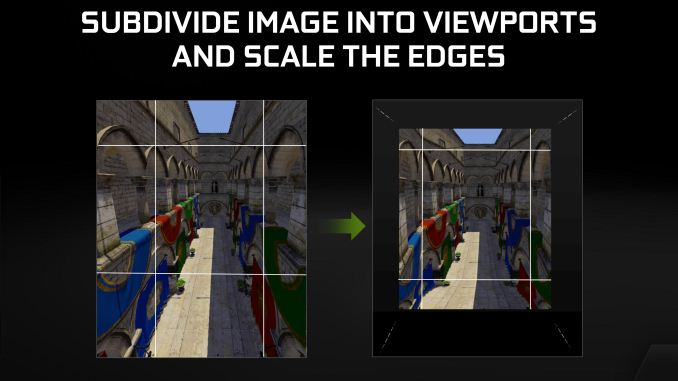

This brings us to multi-res shading and the theory behind this feature. The crux of the theory behind multi-resolution rendering is that if a GPU could render parts of a single frame at differing levels of detail – a full-detailed center and a lower detailed peripheral – then that GPU could reduce the overall amount of work required to render a frame, boosting performance and/or lowering the GPU requirements for VR. Rendering a frame in such a fashion would result in a loss of image quality – a subdivided image rendered at different detail levels would not be identical to a traditionally rendered full-detail image – but since image quality is compromised by the distortion itself and the compromised portions of the image are being directed at the less-sensitive outer edges of the eye, the end result is that one can bring down the detail level of the outer edge of an image without significantly compromising the projected image or the user experience. Think of it as lossy compression for VR.

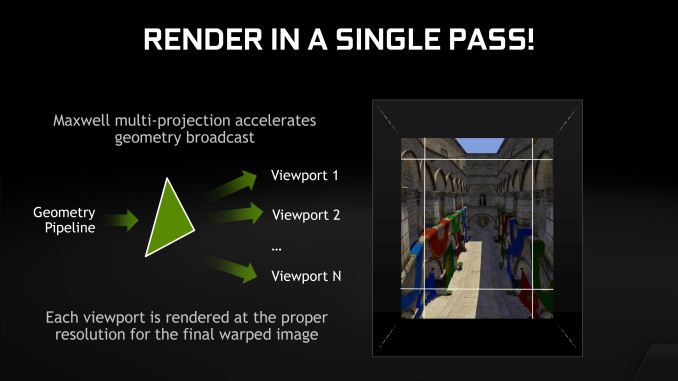

For multi-res shading NVIDIA does just this, leveraging their multi-projection acceleration technology to efficiently implement it. In this process NVIDIA subdivides the image into multiple viewports, scaling the outer viewports to lower resolutions while rendering the inner-most viewport at full resolution, resulting in an image that overall is composed of fewer pixels and hence faster to render. MPA in turn allows NVIDIA to build the scene geometry a single time and project it to the multiple viewports simultaneously, eliminating much of the overhead that would be incurred in rendering the same scene multiple times to multiple viewports.
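
The subdivision described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: a 3x3 grid of viewports, a center viewport covering a fraction `center_frac` of each axis, and outer viewports scaled by `outer_scale` along whichever axis they sit outside the center. The function names, the grid layout, and the default parameter values are hypothetical and are not taken from NVIDIA's implementation.

```python
def multires_viewports(width, height, center_frac=0.6, outer_scale=0.5):
    """Return the rendered size of each viewport in an assumed 3x3 layout.

    Each axis is split into [side, center, side]. The center span is
    rendered at full resolution; the side spans are scaled down.
    """
    side = (1.0 - center_frac) / 2.0
    # (fraction of the axis covered, resolution scale applied to it)
    spans = [(side, outer_scale), (center_frac, 1.0), (side, outer_scale)]
    viewports = []
    for row, (frac_y, scale_y) in enumerate(spans):
        for col, (frac_x, scale_x) in enumerate(spans):
            viewports.append({
                "row": row,
                "col": col,
                "width": round(width * frac_x * scale_x),
                "height": round(height * frac_y * scale_y),
            })
    return viewports


def shaded_fraction(width, height, center_frac=0.6, outer_scale=0.5):
    """Pixels shaded with multi-res, relative to a full-resolution frame."""
    viewports = multires_viewports(width, height, center_frac, outer_scale)
    shaded = sum(v["width"] * v["height"] for v in viewports)
    return shaded / (width * height)


if __name__ == "__main__":
    print(shaded_fraction(1000, 1000))
```

With these placeholder settings the corner viewports are reduced on both axes and the edge viewports on one, so the frame as a whole contains noticeably fewer pixels than a uniform full-resolution render of the same size.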

The end result of multi-res shading is that NVIDIA is claiming that they can offer a 1.3x to 2x increase in pixel shader performance without noticeably compromising the image quality. Like many of the other technologies in the GameWorks VR toolkit this is an implementation of a suggested VR practice; however, in NVIDIA’s case the company believes they have a significant technological advantage in implementing it thanks to multi-projection acceleration. With MPA to bring down the cost of rendering to multiple viewports, NVIDIA’s hardware can better take advantage of the performance advantages of this rendering approach, essentially making it an even more efficient method of VR rendering. Of course it’s ultimately up to developers whether they want to implement this, so whether this technology sees significant use will depend on whether they find the performance gains worth the image quality tradeoffs and the necessary development effort.
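
As a rough sanity check on the claimed range, the following Python sketch relates a pixel shader speedup to the share of pixels that still have to be shaded. It assumes pixel shading cost scales linearly with pixel count and ignores geometry work and fixed per-frame overhead, so it is an idealized bound rather than a performance model; the function names are hypothetical.

```python
def ideal_speedup(shaded_fraction):
    """Idealized pixel-shading speedup when only a fraction of pixels is shaded."""
    if not 0.0 < shaded_fraction <= 1.0:
        raise ValueError("shaded_fraction must be in (0, 1]")
    return 1.0 / shaded_fraction


def required_fraction(speedup):
    """Fraction of the original pixel count that yields the given speedup."""
    if speedup < 1.0:
        raise ValueError("speedup must be at least 1.0")
    return 1.0 / speedup


if __name__ == "__main__":
    for claimed in (1.3, 2.0):
        print(claimed, round(required_fraction(claimed), 2))
```

Under that assumption, a 1.3x gain corresponds to shading roughly 77% of the original pixels and a 2x gain to shading half of them.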

9 Comments

jjj - Sunday, May 31, 2015 - link

Wonder if VR could enable GPUs with less RAM. If you take the GTX 980 Ti, let's say they get 100 good dies per wafer and the 28nm wafer is 5k$, so one chip is 50$ (excluding testing and packaging and all that), while at the same time maybe they pay 60$ for 6GB of GDDR5 (disclaimer - this estimate might be way off). The board and all that is on it and the cooling add a bit more, but the bulk of the costs are the GPU and the RAM, with the RAM being a huge chunk nowadays.

Next year VR vs 4k should require less RAM since VR is lower res and higher FPS, unless some of these VR specific features are memory heavy.

Any thoughts on the viability of going maybe more on die cache and less RAM for VR?

TallestJon96 - Monday, June 1, 2015 - link

Seems feasible. I think we are in a VRAM spike right now with the new consoles and 4k becoming more reasonable, but as you say VR is focused on higher shader performance and frame rate, not massive textures and pixel count. If anything, faster memory like HBM will be needed, not increased capacity.

invinciblegod - Sunday, May 31, 2015 - link

This assumes that you are always looking straight ahead right? Does that mean you can turn your eyes or else blurry image?

phantomferrari - Sunday, May 31, 2015 - link

That's a good point. I see this technology being useful in VR goggles that track the user's eyes to see where they are looking.

Wardrop - Sunday, May 31, 2015 - link

I thought the same thing. Seems the idea though is that the pixels on the edges are squished, hence after the distortion, the edges of the screen are actually higher resolution than the centre. With this technique, the resolution at the edges of the screen could be slightly lowered, meaning after the distortion is applied which squeezes together the edges, the end result should be an optically consistent resolution across the entire screen. This is all in theory. It honestly seems more effort than it's worth.

twin - Sunday, May 31, 2015 - link

Pretty sure it's good practice to render at a slightly higher res than native to keep the center "blown up" area looking nice post warp. This leads to even more waste on the outer areas. Still, it's probably not worth the effort.

MrSpadge - Monday, June 1, 2015 - link

Exactly: they're not looking at making the edges blurry, they're removing information which couldn't be seen there anyway due to the lens warping.

"This is all in theory. It honestly seems more effort than it's worth."

In many cases people would go to far larger efforts for even a 10% improvement in shader performance. Unless a developer doesn't care about performance and optimization at all.

Dukealicious - Tuesday, June 9, 2015 - link

In a VR headset quite a lot of the screen is not actually visible. For reference the automap, health, shields, and ammo displays in the Borderlands games are outside your vision no matter where you point your eyes. Everything is being super-sampled for not just the distortion correction but also since aliasing is very obvious when a screen is magnified by the lenses. The lenses have a sweet spot of clarity and the image also blurs the further you get from it. A FOV of 120 must be set in games to display correctly as well. So the demands of consumer VR with the release of the Vive and Rift are 2160x1200, supersampled for distortion correction, with a 120 FOV, stereo rendered, and a solid framerate of 90 fps to match their 90 Hz display so the head tracking doesn't stutter and make you sick.

Any performance saving we can take by not rendering high resolutions in the out of sweet spot zones and not rendering at all in the areas invisible to us is much needed in VR.

This type of rendering will make higher FOV headsets in the future worthwhile from a performance standpoint.

I will illustrate the current position of performance without this on the Oculus DK2 which is currently available. The DK2 is only 1920 x 1080 and needs to maintain 75 fps for 75 Hz. With a GTX 970 I have to turn all the settings in Bioshock Infinite to medium/low for Geometry 3d in the DK2. Not typically a demanding game by any means.

We have all grown accustomed to a certain level of graphical fidelity. Having to drop your settings so drastically for VR is sure to disappoint and this Multi-Res Shading is going to mitigate that somewhat.

twin - Sunday, May 31, 2015 - link

Rendering multiple viewports in a single pass is only in dx12, right? Any word on benefits to stereo rendering?