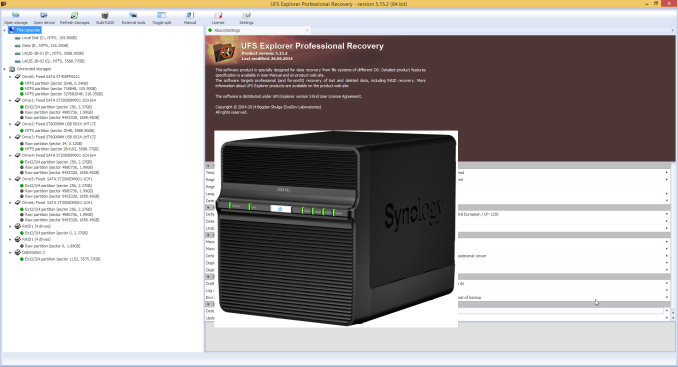

Recovering Data from a Failed Synology NAS

by Ganesh T S on August 22, 2014 6:00 AM EST

It was bound to happen. After 4+ years of running multiple NAS units 24x7, I finally ended up in a situation that brought my data availability to a complete halt. Even though I perform a RAID rebuild as part of every NAS evaluation, I have never needed to do one in the course of regular usage. On two occasions (once with a Seagate Barracuda 1 TB drive in a Netgear NV+ v2 and another time with a Samsung Spinpoint 1 TB drive in a QNAP TS-659 Pro II), the NAS UI complained about increasing reallocated sector counts on the drive and I promptly backed up the data and reinitialized the units with new drives.

Failure Symptoms

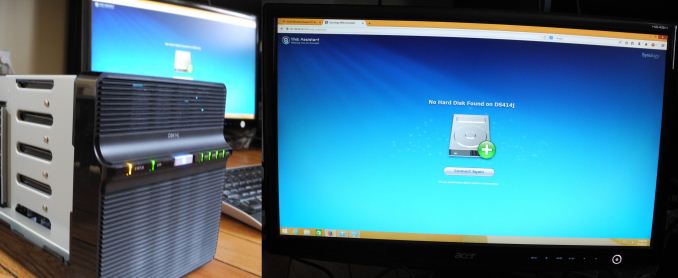

I woke up last Saturday morning to incessant beeping from the recently commissioned Synology DS414j. All four HDD lights were blinking furiously and the status light was glowing orange. The unit's web UI was inaccessible. Left with no other option, I powered down the unit with a long press of the front panel power button and restarted it. This time around, the web UI was accessible, but I was presented with the dreaded message that there were no hard drives in the unit.

The NAS, as per its intended usage scenario, had been only very lightly loaded in terms of network and disk traffic. However, in the interest of full disclosure, I have to note that the unit had been used against Synology's directions with reference to hot-swapping during the review process. The unit doesn't support hot-swap, but we tested it out and found that it worked. However, the drives that were used for long term testing were never hot-swapped.

Data Availability at Stake

In my original DS414j review, I had indicated its suitability as a backup NAS. After prolonged usage, it was re-purposed slightly. The Cloud Station and related packages were uninstalled as they simply refused to let the disks go to sleep. However, I created a shared folder for storing data and mapped it on a Windows 8.1 VM in the QNAP TS-451 NAS (that is currently under evaluation). By configuring that shared folder as the local path for QSync (QNAP's Dropbox-like package), I intended to get any data uploaded to the DS414j's shared folder backed up in real time to the QNAP TS-451's QSync folder (and vice-versa). The net result was that I was expecting data to be backed up irrespective of whether I uploaded it to the TS-451 or the DS414j. Almost all the data I was storing on the NAS units at that time was being generated by benchmark runs for various reviews in progress.

My first task after seeing the 'hard disk not present' message on the DS414j web page was to ensure that my data backup was up to date on the QNAP TS-451. I had copied over some results to the DS414j on Friday afternoon, but, to my consternation, I found that QSync had failed me. The updates made in the mapped Samba share had not been reflected in the QSync folder on the TS-451. The last version seemed to be from Thursday night, which leads me to suspect that QSync either wasn't doing real-time monitoring / updates or wasn't recognizing updates made to a monitored folder from another machine. In any case, I had apparently lost a day's work (machine time, mainly) worth of data.
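A check of this kind can be scripted rather than done by eye. The sketch below is only an illustration, not something QSync or DSM provides: it walks a source folder and reports files that are missing from the replica or whose SHA-256 hashes differ. The function names and any paths passed in are hypothetical.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def find_stale_files(source: Path, replica: Path) -> dict:
    """Compare two folder trees and list files the replica lacks or holds in a different version."""
    missing, different = [], []
    for src in sorted(source.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(source)
        dst = replica / rel
        if not dst.is_file():
            # The file never made it to the replica.
            missing.append(rel.as_posix())
        elif file_digest(src) != file_digest(dst):
            # The replica holds an older or otherwise different copy.
            different.append(rel.as_posix())
    return {"missing": missing, "different": different}
```

Pointed at the two mounted shares (for example, `find_stale_files(Path("/mnt/ds414j/share"), Path("/mnt/ts451/qsync"))`, with both mount points assumed), a non-empty result would indicate that the sync has fallen behind.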

55 Comments

omgyeti - Friday, August 22, 2014 - link

Awesome article. I've had a DiskStation for over 3 years without any hiccups, but it's always nice to see an article with multiple recovery solutions presented in the event my luck takes a turn for the worse someday. I also back the NAS up to a USB drive, but don't want that to be my only hope in the event something ever happens.

garrytaylo987 - Wednesday, June 6, 2018 - link

Issues related to hard disks are common, so don't worry. If you need an instant recovery of the data and partition, first try any free tool like mini tool that is secure, user-friendly and free. If the issue is not solved.

t-rexky - Friday, August 22, 2014 - link

A very interesting read - thank you.

I have a DS1512+ with four 1 TB drives set up using Synology Hybrid RAID, and the fifth 3 TB drive holds a backup of the data. Coincidentally, I have been concerned about the backup integrity and I just had some discussions with Synology on the subject. The Synology Backup application cannot create a complete backup of my data because it skips all the symbolic links present in my data on the RAID volume. The only workaround at this point is to set up a cron job to use rsync for backup purposes as opposed to the Synology GUI backup application. Synology have promised to look into correcting this in the future, but right now the backups created by the DSM GUI are not complete.

Dahak - Friday, August 22, 2014 - link

Interesting article and a nice look at some possible ways to try to recover data.

But I think that you got lucky as it was a hardware failure and not a drive failure, especially when you mentioned that the UFS Explorer automatically found the array.

I am curious, and I wonder if other people may also want to see how this would play out with a simulated drive failure, i.e. leave one of the drives off to simulate a failed / clicking drive.

DanNeely - Friday, August 22, 2014 - link

Ganesh already does do raid rebuild tests that simulate this.

Dahak - Friday, August 22, 2014 - link

I do know that he does the raid rebuilds, but I meant more of a worst-case scenario, where the raid rebuild does not work due to some hardware issue and you have to pull the drives and put them into another system.

Although I know that, ideally, that is where your backups come in.

Flunk - Friday, August 22, 2014 - link

Compared to my experiences with low-end NAS units from other vendors this actually seems quite reasonable. It's the sort of thing that most enthusiasts or IT people could do without having to send it out for data recovery.

icrf - Friday, August 22, 2014 - link

I set up a DS411j a few years ago with 2 TB Seagate drives in RAID 5 that's still working perfectly fine. I was explicit about RAID 5 because I had manually run arrays in mdadm for a while and noticed that's all it was when I logged into a shell on the NAS. I never had any doubt that if the NAS itself failed, but not the drives, that I could just plug them into another machine and have access to everything. It would never have crossed my mind to look for a Windows tool to access them. Having to stop an array that wasn't quite there before forcing it to show up probably would have taken me a while to figure out, too.

The worst that happened to me is when I had drives split between multiple SATA controller cards, and one of the controller cards flaked out and dropped half the drives in my RAID 5 array all at once. Since the array wasn't just degraded, it was down, there were no changes made. I just had to convince mdadm they weren't half spares. Calling mdadm --assume-clean was the ticket. You just have to get the order of the drives right when you pass in the devices, else the file system is corrupt. You can stop and restart the array with another --assume-clean and another guess at order until the file system is valid without problem.

I love mdadm. Unfortunately, I also had a drive dying slowly with lots of bad sectors silently corrupting data for months. That led me to ZFS and data checksums, which are completely awesome. I'm not nearly as familiar with ZFS as I am with mdadm, so it makes me a little nervous. It also doesn't allow online capacity expansion, like mdadm. I think my next array is going to be a little more bleeding edge and use btrfs. Should be close to the best of both worlds.
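The drive-order guessing described in the comment above can be enumerated mechanically. The sketch below is a hedged illustration only: it prints one candidate `mdadm --create ... --assume-clean` command line per possible device order and runs nothing. The device names, RAID level, and function name are assumptions for illustration, and other array parameters (chunk size, metadata version, layout) would also need to match the original array, which this sketch does not cover.

```python
from itertools import permutations
import shlex


def candidate_create_commands(md_device, level, devices):
    """Yield one mdadm re-create command line per possible device order.

    Nothing is executed here; the commands are only built as strings so
    that each guess can be reviewed before being tried by hand.
    """
    for order in permutations(devices):
        argv = [
            "mdadm", "--create", md_device,
            f"--level={level}",
            f"--raid-devices={len(order)}",
            "--assume-clean",  # skip the initial resync so member data is left untouched
            *order,
        ]
        yield shlex.join(argv)


if __name__ == "__main__":
    # Hypothetical member partitions of a three-drive RAID 5 array.
    members = ["/dev/sda1", "/dev/sdb1", "/dev/sdc1"]
    for command in candidate_create_commands("/dev/md0", 5, members):
        print(command)
```

Each guess would be followed by a read-only file system check, then `mdadm --stop` on the array before trying the next order. Because re-creating an array rewrites its metadata, trying this on copies of the drives rather than the originals is the safer route.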

Gigaplex - Saturday, August 23, 2014 - link

I agree with your sentiments about considering btrfs, however I'd advise against it for RAID5 equivalence for quite some time. Not only is it still considered experimental, it flat out isn't finished. Last I checked, it wasn't capable of automatically dropping a drive as it goes bad in parity mode.

isa - Friday, August 22, 2014 - link

Great article. I think the two biggest issues were that the QSync app didn't do what you told it or expected it to do, and when it failed, the QSync app didn't tell you that it failed. Hardware has come a long way in the past 15 years or so, but the robustness of backup/sync apps has not - we had apps that didn't do what we wanted many years ago.

Given the vital importance of backup and sync apps doing what we expect them to do, app developers should spend much more effort with scripts or the like to set up backups more robustly, conduct self-tests of configs and settings to ensure settings will do what you expect, and provide better alert reporting if things occur that you don't expect. Put another way, you found out that your backup failed only when you needed it, which was exactly the SOTA 20 years ago. Disappointing (but not surprising) that it still may be for many users.