6 TB NAS Drives: WD Red, Seagate Enterprise Capacity and HGST Ultrastar He6 Face-Off

by Ganesh T S on July 21, 2014 11:00 AM EST

6 TB Face-Off: The Contenders

Before getting into the performance evaluation, we will look at what sets each of the three drives apart and compare their specifications.

Western Digital Red 6 TB

The 6 TB Red's claim to fame is undoubtedly its areal density. While Seagate went in for a six-platter design for its 6 TB drives, Western Digital has managed to cram in 1.2 TB/platter and deliver a 6 TB drive with the traditional five platter design. The costs are also kept reasonable because of the use of traditional PMR (perpendicular magnetic recording) in these drives.

The 6 TB drive has a suggested retail price of $299, making it the cheapest of the three drives that we are considering today.

Seagate Enterprise Capacity 3.5 HDD v4 6 TB

Seagate was the first to utilize PMR to deliver a 6 TB enterprise drive earlier this year. They achieved this through the use of six platters (compared to the five that most hard drives top out at). A downside of using six platters was that the center screw locations on either side got shifted, rendering some drive caddies unable to hold them properly. However, we had no such issues when trying to use the QNAP rackmount's drive caddy with the Seagate drive.

Seagate claims best-in-class performance, and we will be verifying those claims in the course of this review. Pricing ranges from around $450 on Amazon (third party seller) to $560 on Newegg.

HGST Ultrastar He6 6 TB

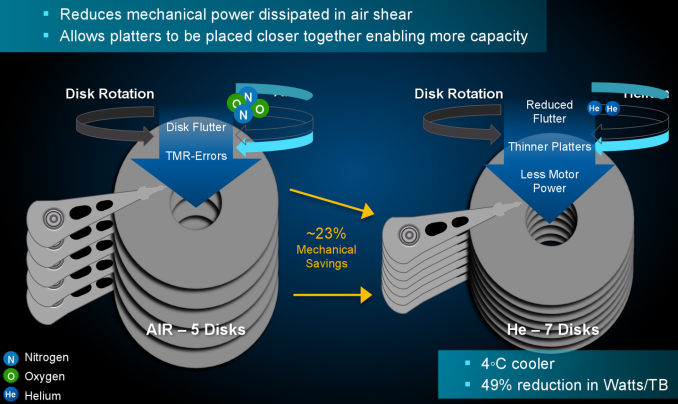

The HGST Ultrastar He6 is undoubtedly the most technologically advanced drive that we are evaluating today. There are two main patented innovations behind the Ultrastar He6, HelioSeal and 7Stac. The former refers to placement of the platters in a hermetically sealed enclosure filled with helium instead of air. The latter refers to packaging of seven platters in the same 1" high form factor of traditional 3.5" drives.

With traditional designs, we have seen a maximum of six platters in a standard 3.5" drive. The additional platter is made possible in helium-filled drives because the absence of air shear reduces flutter and allows for thinner platters. The motor power needed to achieve the same rotation speeds is also reduced, thereby lowering total power dissipation. The hermetically sealed nature of the drives also allows for immersion cooling solutions (placement of the drives in a non-conducting liquid). This is not possible with traditional hard drives due to the presence of a breather port.
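The platter counts quoted so far translate directly into an average capacity per platter. The short sketch below only does that division; it assumes the marketed 6 TB figure is split evenly across platters, and the dictionary and function names are illustrative rather than anything from the vendors.

```python
# Average capacity per platter, assuming the marketed 6 TB is spread
# evenly across all platters (an illustrative simplification).
CAPACITY_TB = 6.0

# Platter counts as stated in the text.
PLATTERS = {
    "WD Red": 5,
    "Seagate Enterprise Capacity v4": 6,
    "HGST Ultrastar He6": 7,
}


def tb_per_platter(capacity_tb: float, platters: int) -> float:
    """Return the average capacity per platter in TB."""
    return capacity_tb / platters


for name, count in PLATTERS.items():
    print(f"{name}: {tb_per_platter(CAPACITY_TB, count):.2f} TB/platter")
```

The five-platter figure reproduces the 1.2 TB/platter quoted for the WD Red; the other two values are simply what the same division gives for six and seven platters.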

The TCO (total cost of ownership) is bound to be much lower for the Ultrastar He6 compared to other 6 TB drives when large scale datacenter applications are considered (due to lower power consumption, cooling costs etc.). The main issue, from the perspective of the SOHOs / home consumers, is the absence of a tier-one e-tailer carrying these drives. We do see third party sellers on Amazon supplying these drives for around $470.
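For a rough sense of acquisition cost alone, the quoted prices can be normalized to dollars per terabyte. This is only a sketch: it mixes a suggested retail price (WD) with third-party and e-tailer street prices (Seagate, HGST) as quoted in the text, and it ignores power, cooling and warranty, which is where the TCO argument above actually lives. The names used are illustrative.

```python
# Purchase price per TB, using the prices quoted in the text.
# These mix MSRP and street prices, so treat the result as a rough guide.
CAPACITY_TB = 6

# (low, high) price in USD; a single quoted price is repeated.
PRICES_USD = {
    "WD Red": (299, 299),
    "Seagate Enterprise Capacity v4": (450, 560),
    "HGST Ultrastar He6": (470, 470),
}


def usd_per_tb(price_usd: float, capacity_tb: float = CAPACITY_TB) -> float:
    """Return the purchase price per terabyte."""
    return price_usd / capacity_tb


for name, (low, high) in PRICES_USD.items():
    if low == high:
        print(f"{name}: ${usd_per_tb(low):.2f}/TB")
    else:
        print(f"{name}: ${usd_per_tb(low):.2f} to ${usd_per_tb(high):.2f}/TB")
```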

Specifications

The various characteristics / paper specifications of the drives under consideration are available in the table below.

| 6 TB NAS Hard Drive Face-Off Contenders | WD Red | Seagate Enterprise Capacity 3.5" HDD v4 | HGST Ultrastar He6 |
| --- | --- | --- | --- |
| Model Number | WD60EFRX | ST6000NM0024 | HUS726060ALA640 |
| Interface | SATA 6 Gbps | SATA 6 Gbps | SATA 6 Gbps |
| Advanced Format (AF) | Yes | Yes | No (512n) |
| Rotational Speed | IntelliPower (5400 rpm) | 7200 rpm | 7200 rpm |
| Cache | 64 MB | 128 MB | 64 MB |
| Rated Load / Unload Cycles | 300K | 600K | 600K |
| Non-Recoverable Read Errors / Bits Read | 1 per 10^14 | 1 per 10^15 | 1 per 10^15 |
| MTBF (hours) | 1M | 1.4M | 2M |
| Rated Workload | ~120 - 150 TB/yr | < 550 TB/yr | < 550 TB/yr |
| Operating Temperature Range | 0 - 70 C | 5 - 60 C | 5 - 60 C |
| Physical Dimensions | 101.85 mm x 147 mm x 26.1 mm / 680 grams | 101.85 mm x 147 mm x 26.1 mm / 780 grams | 101.6 mm x 147 mm x 26.1 mm / 640 grams |
| Warranty | 3 years | 5 years | 5 years |

The interesting aspects are highlighted above. Most of these are related to the non-enterprise nature of the WD Red. However, two aspects that stand out are the multi-segmented 128 MB cache in the Seagate drive and the HGST He6 drive's lower weight despite having more platters than the other two drives.
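One way to put the non-recoverable read error row in perspective is to estimate the chance of hitting at least one such error while reading an entire 6 TB drive end to end, as happens during a RAID rebuild. The sketch below reads the table's ratings as one error per 10^14 bits (WD Red) and one per 10^15 bits (Seagate and HGST), and assumes errors are independent and occur exactly at the rated rate. Both are simplifying assumptions: the ratings are worst-case bounds, and real drives often do better. The function name is illustrative.

```python
import math

# A 6 TB drive in decimal units, as marketed.
DRIVE_BYTES = 6 * 10**12


def p_at_least_one_ure(bytes_read: int, bits_per_error: float) -> float:
    """Probability of one or more unrecoverable read errors.

    Assumes independent bit errors at exactly the rated rate, i.e.
    P = 1 - (1 - p)^n with p = 1 / bits_per_error and n = bits read.
    """
    bits = bytes_read * 8
    p_bit = 1.0 / bits_per_error
    # log1p/expm1 keep precision when p_bit is tiny.
    return -math.expm1(bits * math.log1p(-p_bit))


for label, rating in (("1 per 10^14 bits", 1e14), ("1 per 10^15 bits", 1e15)):
    prob = p_at_least_one_ure(DRIVE_BYTES, rating)
    print(f"{label}: {prob:.1%} chance of an error over one full-drive read")
```

Under this pessimistic model the difference between the two ratings is roughly 38% versus 5% per full-drive read, which is why the rating matters more as capacities grow.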

83 Comments

sleewok - Monday, July 21, 2014 - link

Based on my experience with the WD Red drives I'm not surprised you had one fail that quickly. I have a 5 disk (2TB Red) RAID6 setup with my Synology Diskstation. I had 2 drives fail within a week and another 1 within a month. WD replaced them all under warranty. I had a 4th drive seemingly fail, but seemed to fix itself (I may have run a disk check). I simply can't recommend WD Red if you want a reliable setup.

Zan Lynx - Monday, July 21, 2014 - link

If we're sharing anecdotal evidence, I have two 2TB Reds in a small home server and they've been great. I run a full btrfs scrub every week and never find any errors.

Child mortality is a common issue with electronics. In the past I had two Seagate 15K SCSI drives that both failed in the first week. Does that mean Seagate sucks?

icrf - Monday, July 21, 2014 - link

I had a lot of trouble with 3 TB Green drives, had 2 or 3 early failures in an array of 5 or 6, and one that didn't fail, but silently corrupted data (ZFS was good for letting me know about that). Once all the failures were replaced under warranty, they all did fine.

So I guess test a lot, keep on top of it for the first few months or year, and make use of their pretty painless RMA process. WD isn't flawless, but I'd still use them.

Anonymous Blowhard - Monday, July 21, 2014 - link

>early failure
>Green drives

Completely unsurprised here, I've had nothing but bad luck with any of those "intelligent power saving" drives that like to park their heads if you aren't constantly hammering them with I/O.

Big ZFS fan here as well, make sure you're on ECC RAM though as I've seen way too many people without it.

icrf - Monday, July 21, 2014 - link

I'm building a new array and will use Red drives, but I'm thinking of going btrfs instead of zfs. I'll still use ECC RAM. Did on the old file server.

spazoid - Monday, July 21, 2014 - link

Please stop this "ZFS needs ECC RAM" nonsense. ZFS does not have any particular need of ECC RAM that every other filer doesn't.

Anonymous Blowhard - Monday, July 21, 2014 - link

I have no intention of arguing with yet another person who's totally wrong about this.

extide - Monday, July 21, 2014 - link

You both are partially right, but the fact is that non-ECC RAM on ANY file server can cause corruption. ZFS does a little bit more "processing" on the data (checksums, optional compression, etc.) which MIGHT expose you to more issues due to bit flips in memory, but still, if you are getting frequent memory errors, you should be replacing the bad stick; good memory does not really have frequent bit errors (unless you live in a nuclear power station or something!)

FWIW, I have a ZFS machine with a 7TB array, and run scrubs at least once a month, preferably twice. I have had it up and running in its current state for over 2 years and have NEVER seen even a SINGLE checksum error according to zpool status. I am NOT using ECC RAM.

In a home environment, I would suggest ECC RAM, but in a lot of cases people are re-using old equipment, and many times it is a desktop class CPU which won't support ECC, which means moving to ECC RAM might require replacing a lot of other stuff as well, and thus cost quite a bit of money. Now, if you are buying new stuff, you might as well go with an ECC capable setup as the costs aren't really much more, but that only applies if you are buying all new hardware. Now for a business/enterprise setup yes, I would say you should always run ECC, and not only on your ZFS servers, but all of them. However, most of the people on here are not going to be talking about using ZFS in an enterprise environment, at least the people who aren't using ECC!

tl/dr -- Non ECC is FINE for home use. You should always have a backup anyways, though. ZFS by itself is not a backup, unless you have your data duplicated across two volumes.

alpha754293 - Monday, July 21, 2014 - link

The biggest problem I had with ZFS is its total lack of data recovery tools. If your array bites the dust (two non-rotating drives) on a striped zpool, you're pretty much hosed. (The array won't start up). So you can't just do a bit read in order to recover/salvage whatever data's still on the magnetic disks/platters of the remaining drives and there's nothing that has told me that you can clone the drive (including its UUID) in its entirety in order to "fool" the system into thinking that it's EXACTLY the same drive (when it's actually been replaced) so that you can spin up the array/zpool again in order to begin the data extraction process.

For that reason, ZFS was dumped back in favor of NTFS (because if an NTFS array goes down, I can still bit-read the drives, and salvage the data that's left on the platters). And I HAD a Premium Support Subscription from Sun (back when it was still Sun), and even they TOLD me that they don't have ANY data recovery tools like that. And they couldn't tell me the procedure for cloning the dead drives either (including their UUIDs).

Btrfs was also ruled out for the same technical reasons. (Zero data recovery tools available should things go REALLY farrr south.)

name99 - Tuesday, July 22, 2014 - link

"because if an NTFS array goes down, I can still bit-read the drives, and salvage the data that's left on the platters"

Are you serious? Extracting info from a bag of random sectors was a reasonable thing to do from a 1.44MB floppy disk, it is insane to imagine you can do this from 6TB or 18 TB or whatever of data.

That's like me giving you a house full of extremely finely shredded then mixed up paper, and you imagining you can construct a million useful documents from it.