NVIDIA Kepler Cards Get HDMI 4K@60Hz Support (Kind Of)

by Ryan Smith on June 20, 2014 10:00 AM EST

An interesting feature has turned up in NVIDIA’s latest drivers: the ability to drive certain displays over HDMI at 4K@60Hz. This is a feat that would typically require HDMI 2.0 – a feature not available in any GPU shipping thus far – so to say it’s unexpected is a bit of an understatement. However, as it turns out, the situation is not quite as cut and dried as it first appears, and there is a notable catch.

As first discovered by users, including AT Forums user saeedkunna, Kepler-based video cards using NVIDIA’s R340 drivers gain the ability to output at 4K@60Hz over HDMI 1.4 when paired with very recent 4K TVs. These setups were previously limited to 4K@30Hz due to HDMI bandwidth availability, and while those limitations haven’t gone anywhere, TV manufacturers and now NVIDIA have implemented an interesting workaround that teeters between clever and awful.

Lacking the available bandwidth to fully support 4K@60Hz until the arrival of HDMI 2.0, the latest crop of 4K TVs such as the Sony XBR 55X900A and Samsung UE40HU6900 have implemented what amounts to a lower image quality mode that allows for a 4K@60Hz signal to fit within HDMI 1.4’s 8.16Gbps bandwidth limit. To accomplish this, manufacturers are making use of chroma subsampling to reduce the amount of chroma (color) data that needs to be transmitted, thereby freeing up enough bandwidth to double the refresh rate from 30Hz to 60Hz.
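To put rough numbers on the bandwidth problem, here is a back-of-the-envelope sketch. It assumes the standard CTA-861 4K timing (4400 x 2250 total pixels per frame including blanking) and 8 bits per sample; neither figure comes from the article, and the function names are purely illustrative.

```python
# Rough link-budget check for 4K over HDMI 1.4 (illustrative sketch only).
# Assumptions: CTA-861 timing totals for 3840x2160 (4400 x 2250 including
# blanking) and 8 bits per sample.

HDMI_1_4_DATA_RATE_GBPS = 8.16  # limit quoted in the article

H_TOTAL, V_TOTAL = 4400, 2250   # assumed total pixels per line / lines per frame

# Average bits per pixel at 8 bits per sample:
#   4:4:4 -> Y + Cb + Cr for every pixel           = 24
#   4:2:0 -> Y for every pixel, Cb/Cr for 1 in 4   = 8 + 2 * (8 / 4) = 12
BITS_PER_PIXEL = {"4:4:4": 24, "4:2:0": 12}


def required_gbps(refresh_hz, sampling):
    """Approximate video data rate in Gbps for a 4K signal."""
    pixels_per_second = H_TOTAL * V_TOTAL * refresh_hz
    return pixels_per_second * BITS_PER_PIXEL[sampling] / 1e9


def fits_hdmi_1_4(refresh_hz, sampling):
    """True if the signal fits within the 8.16Gbps limit."""
    return required_gbps(refresh_hz, sampling) <= HDMI_1_4_DATA_RATE_GBPS


if __name__ == "__main__":
    for hz, sampling in [(30, "4:4:4"), (60, "4:4:4"), (60, "4:2:0")]:
        rate = required_gbps(hz, sampling)
        verdict = "fits" if fits_hdmi_1_4(hz, sampling) else "does not fit"
        print(f"4K@{hz}Hz {sampling}: {rate:.3f} Gbps ({verdict})")
```

Under these assumptions, 4K@60Hz with 4:2:0 sampling needs the same data rate as 4K@30Hz with 4:4:4 sampling, which is why the former squeezes into a link that could previously only manage the latter.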

An example of a current generation 4K TV: Sony's XBR 55X900A

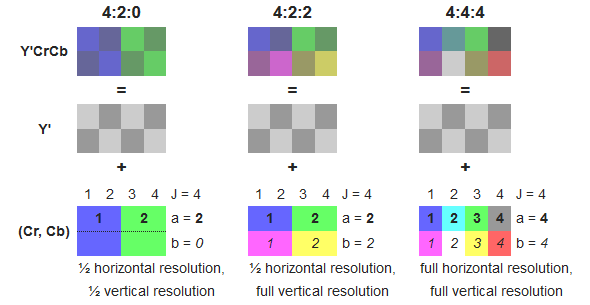

Specifically, manufacturers are making use of Y'CbCr 4:2:0 subsampling, a lower quality sampling mode that requires ¼ the color information of regular Y'CbCr 4:4:4 sampling or RGB sampling. By using this sampling mode manufacturers are able to transmit an image that utilizes full resolution luma (brightness) but a fraction of the chroma resolution, allowing manufacturers to achieve the necessary bandwidth savings.
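The ¼ figure can be checked by counting samples. The sketch below is illustrative only; the helper name is made up, and it assumes the usual 4:2:0 layout in which each 2x2 block of pixels shares one Cb and one Cr sample.

```python
# Count luma and chroma samples per frame (illustrative sketch).
# Assumption: in 4:2:0, each 2x2 block of pixels shares one Cb and one Cr sample.

def samples_per_frame(width, height, sampling):
    """Return (luma_samples, chroma_samples) for one frame."""
    luma = width * height
    if sampling == "4:4:4":
        chroma_per_plane = luma                         # full-resolution Cb and Cr
    elif sampling == "4:2:0":
        chroma_per_plane = (width // 2) * (height // 2) # halved in both directions
    else:
        raise ValueError(f"unsupported sampling mode: {sampling}")
    return luma, 2 * chroma_per_plane                   # two chroma planes: Cb, Cr


if __name__ == "__main__":
    luma_444, chroma_444 = samples_per_frame(3840, 2160, "4:4:4")
    luma_420, chroma_420 = samples_per_frame(3840, 2160, "4:2:0")
    print("chroma ratio:", chroma_420 / chroma_444)
    print("total ratio: ", (luma_420 + chroma_420) / (luma_444 + chroma_444))
```

Luma is untouched, chroma drops to a quarter, and the frame as a whole drops to half the samples of a 4:4:4 frame.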

Wikipedia: diagram on chroma subsampling

The use of chroma subsampling is as old as color television itself; however, the use of it in this fashion is uncommon. Most HDMI PC-to-TV setups to date use RGB or 4:4:4 sampling, both of which are full resolution and functionally lossless. 4:2:0 sampling on the other hand is not normally used for the last stage of transmission between source and sink devices – in fact HDMI didn’t even officially support it until recently – and is instead used in the storage of source material itself, be it Blu-ray discs, TV broadcasts, or streaming videos.

Perceptually 4:2:0 is an efficient way to throw out unnecessary data, making it a good way to pack video, but at the end of the day it’s still ¼ the color information of a full resolution image. Since video sources are already 4:2:0 this ends up being a clever way to transmit video to a TV, as at the most basic level a higher quality mode would be redundant (post-processing aside). But while this works well for video it also only works well for video; for desktop workloads it significantly degrades the image as the color information needed to drive subpixel-accurate text and GUIs is lost.
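To see why fine desktop detail suffers, consider a toy example. The sketch below assumes the sender averages each 2x2 block of chroma samples and the display rebuilds the plane by simple replication; real devices may use different filters, and the function names are invented for illustration.

```python
# Toy demonstration of single-pixel color detail being lost in 4:2:0.
# Assumptions: 2x2 box-filter averaging on the way down and nearest-neighbor
# replication on the way up; real hardware may filter differently.

def subsample_420(plane):
    """Average each 2x2 block of a chroma plane into one sample."""
    height, width = len(plane), len(plane[0])
    return [
        [
            (plane[y][x] + plane[y][x + 1]
             + plane[y + 1][x] + plane[y + 1][x + 1]) // 4
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


def upsample_nearest(plane):
    """Rebuild a full-size plane by repeating each sample over a 2x2 block."""
    out = []
    for row in plane:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


if __name__ == "__main__":
    # A 4x4 Cb plane with one-pixel-wide alternating color columns, similar to
    # the color fringes that subpixel-rendered text relies on.
    cb = [[16, 240, 16, 240] for _ in range(4)]
    restored = upsample_nearest(subsample_420(cb))
    for row in restored:
        print(row)
```

In this toy case the alternating columns collapse into a single flat value, while the luma plane (not shown) would pass through untouched. That asymmetry is why video holds up and colored text does not.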

In any case, with 4:2:0 4K TVs already on the market, NVIDIA has confirmed that they are enabling 4:2:0 4K output on Kepler cards with their R340 drivers. What this means is that Kepler cards can drive 4:2:0 4K TVs at 60Hz today, but they are doing so in a manner that’s only useful for video. For HTPCs this ends up being a good compromise and as far as we can gather this is a clever move on NVIDIA’s part. But for anyone who is seeing the news of NVIDIA supporting 4K@60Hz over HDMI and hoping to use a TV as a desktop monitor, this will still come up short. Until the next generation of video cards and TVs hit the market with full HDMI 2.0 support (4:4:4 and/or RGB), DisplayPort 1.2 will remain the only way to transmit a full resolution 4K image.

54 Comments


nathanddrews - Friday, June 20, 2014 - link

4:2:0 color is good enough for Blu-ray most of the time, but banding becomes an issue more often than not. I could see this as a nice option for every 6-bit, TN-based 4K TV out there that already suffers from banding. Not ideal, but nice. Of course it sounds like the manufacturer would have to put out a firmware update to make it work if it doesn't inherently support the option.

I'm not upgrading my GPU until DP1.3 hits in 2015.

http://www.brightsideofnews.com/2013/12/03/display...

crimsonson - Friday, June 20, 2014 - link

The primary cause of banding is color bit depth, or lack of it. Specifically, the human eye can see banding at 8-bit color depth, while at 10-bit it is almost gone.

Chroma subsampling tends to create edge bleeds and blocks because of the lack of color accuracy.

nathanddrews - Friday, June 20, 2014 - link

Yes, bit depth is the primary cause.

MrSpadge - Monday, June 23, 2014 - link

Yeah, with all those TN panels 4:2:0 won't matter.

Dave G - Tuesday, August 12, 2014 - link

As an example, professional HD/SDI connections use 10-bits with 4:2:2 chroma sub-sampling.

kwrzesien - Friday, June 20, 2014 - link

Just knowing this difference is enlightening, thanks!

MikeMurphy - Friday, June 20, 2014 - link

Great article. Too bad DisplayPort isn't the chosen successor to HDMI for all 4K devices. HDMI bitstream tech is far inferior yet the industry seems to be stuck on it.

UltraWide - Friday, June 20, 2014 - link

All consumer electronics devices are entrenched in HDMI, so it's hard to make the switch to DP at this point. They just need to keep improving HDMI.

brightblack - Friday, June 20, 2014 - link

Or they could include DP ports along with HDMI.

surt - Friday, June 20, 2014 - link

OMG are you kidding, that could add 50 or even 60 cents to the price of a device!