The Bulldozer Review: AMD FX-8150 Tested

by Anand Lal Shimpi on October 12, 2011 1:27 AM EST

Gaming Performance

AMD clearly states in its reviewer's guide that CPU bound gaming performance isn't going to be a strong point of the FX architecture, likely due to its poor single threaded performance. However, it is useful to look at both CPU and GPU bound scenarios to paint an accurate picture of how well a CPU handles game workloads, as well as what sort of performance you can expect in present day titles.

Civilization V

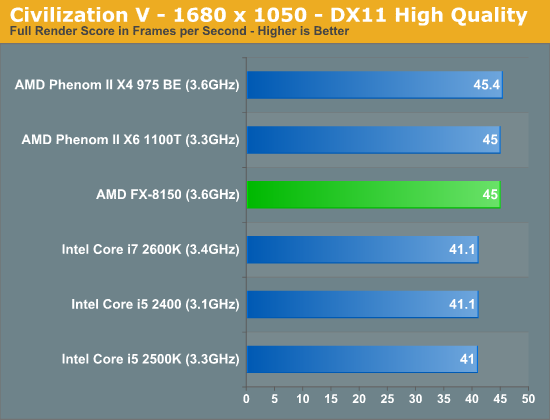

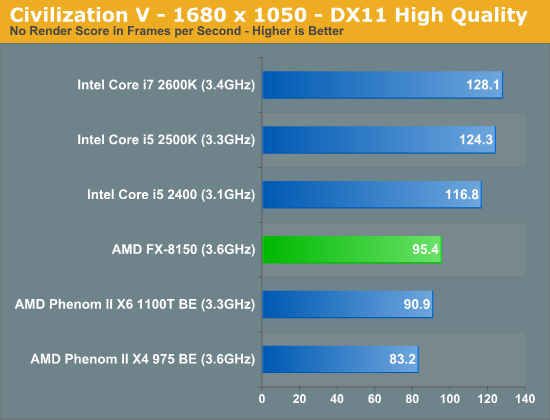

Civ V's lateGameView benchmark presents us with two separate scores: average frame rate for the entire test as well as a no-render score that only looks at CPU performance.

While we're GPU bound in the full render score, AMD's platform appears to have a bit of an advantage here. We've seen this in the past, where one platform will hold an advantage over another in a GPU bound scenario, and it's always tough to explain. Within each family, however, there is no advantage to a faster CPU; everything is just GPU bound.

Looking at the no render score, the CPU standings are pretty much as we'd expect. The FX-8150 is thankfully a bit faster than its predecessors, but it still falls behind Sandy Bridge.

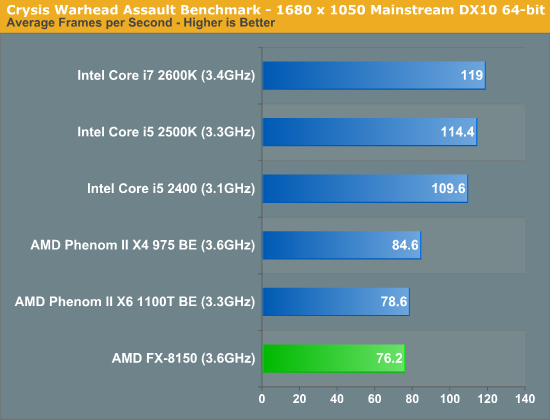

Crysis: Warhead

In CPU bound environments in Crysis Warhead, the FX-8150 is actually slower than the old Phenom II. Sandy Bridge continues to be far ahead.

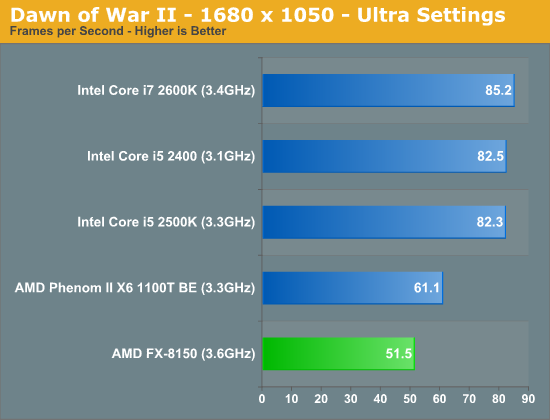

Dawn of War II

We see similar results under Dawn of War II. Lightly threaded performance is simply not a strength of AMD's FX series, and as a result even the old Phenom II X6 pulls ahead.

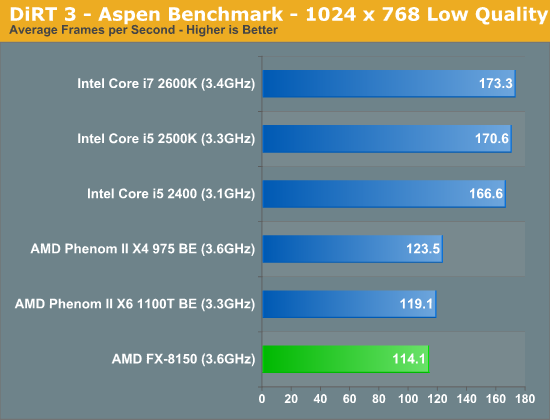

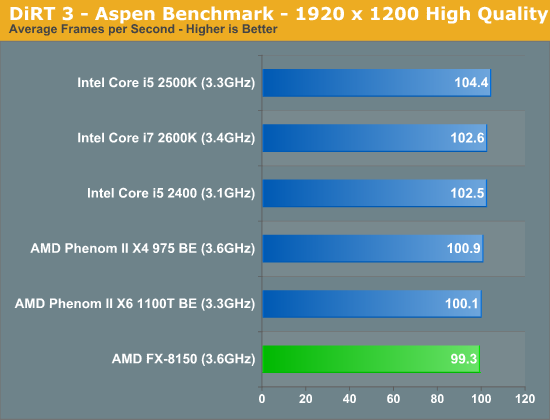

DiRT 3

We ran two DiRT 3 benchmarks to get an idea for CPU bound and GPU bound performance. First the CPU bound settings:

The FX-8150 doesn't do so well here, again falling behind the Phenom IIs. Under more real-world GPU bound settings, however, Bulldozer looks just fine:

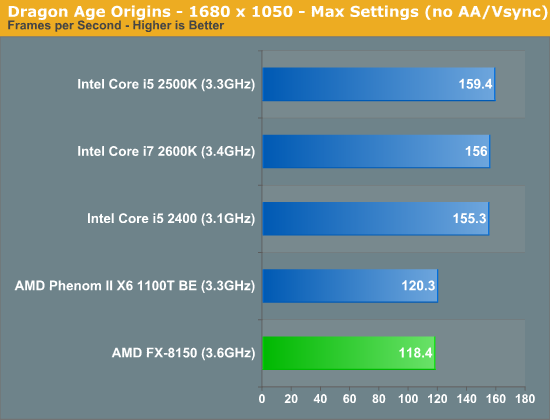

Dragon Age

Dragon Age is another CPU bound title; here the FX-8150 falls behind once again.

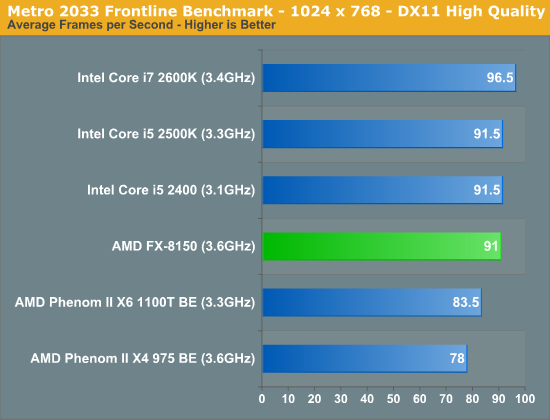

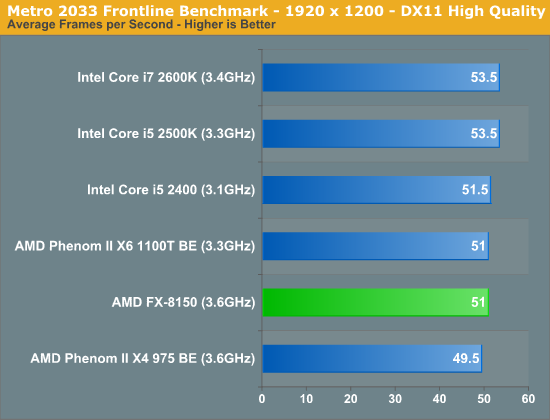

Metro 2033

Metro 2033 is pretty rough even at lower resolutions, but with more of a GPU bottleneck the FX-8150 equals the performance of the 2500K:

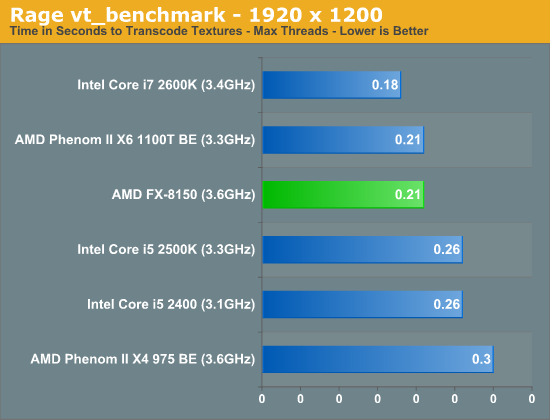

Rage vt_benchmark

While id's long-awaited Rage title doesn't exactly have the best benchmarking abilities, there is one unique aspect of the game that we can test: Megatexture. Megatexture works by dynamically taking texture data from disk and constructing texture tiles for the engine to use, a major component for allowing id's developers to uniquely texture the game world. However, because of the heavy use of unique textures (id says the original game assets are over 1TB), id needed to get creative in compressing the game's textures to make them fit within the roughly 20GB the game was allotted.

The result is that Rage doesn't store textures in a GPU-usable format such as DXTC/S3TC, instead storing them in an even more compressed format (JPEG XR), as S3TC maxes out at a 6:1 compression ratio. As a consequence, whenever you load a texture, Rage needs to transcode the texture from its storage codec to S3TC on the fly. This is a constant process throughout the entire game, and this transcoding is a significant burden on the CPU.
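To put rough numbers on why S3TC alone wasn't enough, here is a back-of-the-envelope sketch in Python. It takes the figures quoted above at face value (1TB of source assets, a 20GB budget, a 6:1 ceiling for S3TC) and assumes decimal units; the variable names are purely illustrative, and the real asset pipeline is certainly more involved than a single division.

```python
# Rough arithmetic only; all inputs are the round figures quoted in the text.
TB = 1000 ** 4  # decimal terabyte (assumption: decimal units)
GB = 1000 ** 3  # decimal gigabyte

source_bytes = 1 * TB    # "over 1TB" of original assets, treated as exactly 1TB
budget_bytes = 20 * GB   # "roughly 20GB" allotted to the game
S3TC_MAX_RATIO = 6.0     # best-case S3TC compression ratio cited above

# Compression ratio needed to squeeze the source assets into the budget.
required_ratio = source_bytes / budget_bytes

# Size the assets would occupy if stored at S3TC's best-case ratio.
s3tc_size_gb = source_bytes / S3TC_MAX_RATIO / GB

print(f"Required ratio: about {required_ratio:.0f}:1")
print(f"Size at S3TC's 6:1: about {s3tc_size_gb:.0f}GB")
```

Under these assumptions the game needs something on the order of 50:1, while storing everything as S3TC would take well over 100GB, which is why a denser storage codec plus on-the-fly transcoding becomes necessary.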

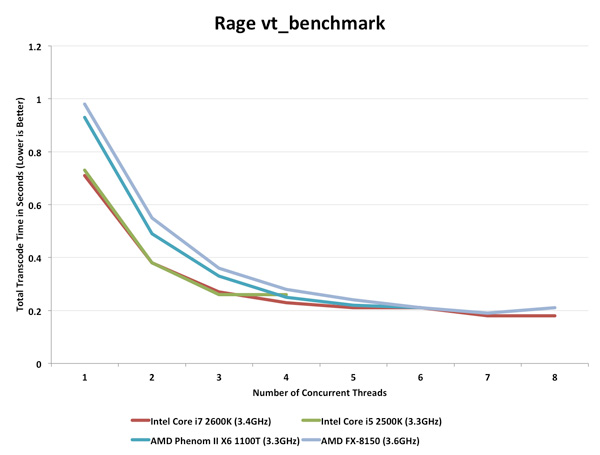

The Benchmark: vt_benchmark flushes the transcoded texture cache and then times how long it takes to transcode all the textures needed for the current scene, from 1 thread to X threads. Thus when you run vt_benchmark 8, for example, it will benchmark from 1 to 8 threads (the default appears to depend on the CPU you have). Since transcoding is done by the CPU, this is a pure CPU benchmark. I present the best case transcode time at the maximum number of concurrent threads each CPU can handle:

The FX-8150 does very well here, but so does the Phenom II X6 1100T. Both are faster than Intel's 2500K, but not quite as good as the 2600K. If you want to see how performance scales with thread count, check out the chart below:

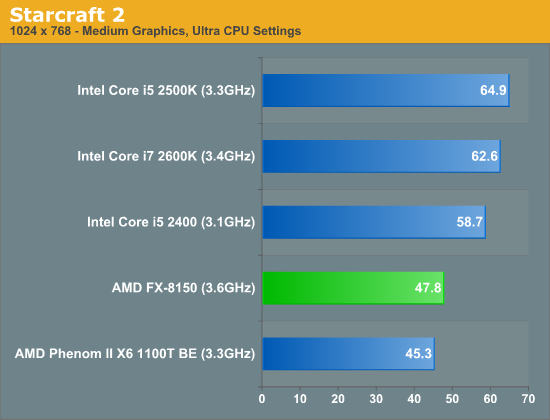
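For readers who want a feel for what a 1-to-X thread sweep like vt_benchmark's looks like, here is a minimal Python sketch. It is not id's code: the `transcode` function is a hypothetical stand-in that round-trips data through zlib instead of converting JPEG XR to S3TC, and `sweep` and `time_at_max_threads` are names invented for this illustration. Only the overall shape (time the same batch of work at each thread count, then report the result at the highest count) follows the description above.

```python
import time
import zlib
from concurrent.futures import ThreadPoolExecutor


def transcode(tile: bytes) -> bytes:
    """Hypothetical stand-in for a texture transcode: decompress, then recompress."""
    return zlib.compress(zlib.decompress(tile), 1)


def sweep(tiles, max_threads):
    """Time the full batch of tiles at every thread count from 1 to max_threads."""
    timings = {}
    for threads in range(1, max_threads + 1):
        start = time.perf_counter()
        with ThreadPoolExecutor(max_workers=threads) as pool:
            # Consume the iterator so every tile is processed before timing stops.
            list(pool.map(transcode, tiles))
        timings[threads] = time.perf_counter() - start
    return timings


def time_at_max_threads(timings):
    """Return the elapsed time recorded at the highest thread count tested."""
    return timings[max(timings)]


# Small synthetic workload: 32 compressed "tiles" of 64KB each (illustrative sizes).
demo_tiles = [zlib.compress(bytes(64 * 1024)) for _ in range(32)]
timings = sweep(demo_tiles, max_threads=4)

for threads, seconds in timings.items():
    print(f"{threads} thread(s): {seconds * 1000:.2f} ms")
print(f"Time at max threads: {time_at_max_threads(timings) * 1000:.2f} ms")
```

The absolute numbers from a toy like this mean nothing; the point is only the measurement pattern. A real transcoder does far more work per tile, so its scaling with thread count will look quite different.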

Starcraft 2

Starcraft 2 has traditionally done very well on Intel architectures and Bulldozer is no exception to that rule.

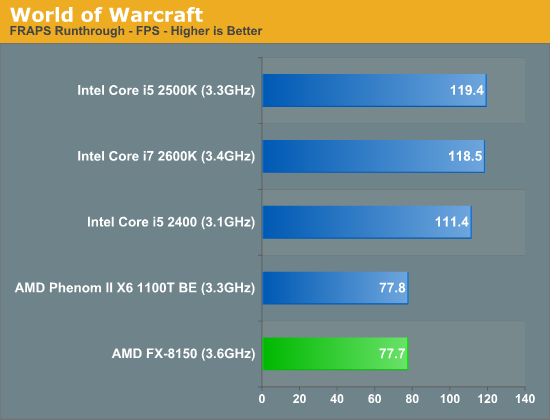

World of Warcraft

430 Comments

Kristian Vättö - Wednesday, October 12, 2011 - link

I'm happy that I went with i5-2500K. Performance, especially in gaming, seems to be pretty horrible.

ckryan - Wednesday, October 12, 2011 - link

I was just going to say the same thing. I was all about AMD last year, but early this year I picked up an i5 2500K and was blown away by efficiency and performance even in a hobbled H67. Once I bought a proper P67, it was on. It's not that Bulldozer is terrible (because it isn't); Sandy Bridge is just a "phenom". If SB had just been a little faster than Lynnfield, it would still be fast. But it's a big leap to SB, and it's certainly the best value. AMD has Bulldozer, an inconsistent performer that is better in some areas and worse in others, but has a hard time competing with its own forebear. It's still an unusual product that some people will really benefit from and some won't. The demise of the Phenom II can't come soon enough for AMD as some people will look at the benchmarks and conclude that a super cheap X4 955BE is a much better value than BD. I hope it isn't seen that way, but it's not a difficult conclusion to reach. Perhaps BD is more forward looking, and the other octocore will be cheaper than the 8150 so it's a better value. I'd really like to see the performance of the 4- and 6- before making judgement.

It's still technically a win, but it's a Pyrrhic victory.

ogreslayer - Wednesday, October 12, 2011 - link

I tell friends that exact thing all the time. Phenoms are great CPUs but switch to Nehalem or Sandy Bridge and the speed is noticeably different. At equal clocks Core 2 Quads are as fast or faster.

Bulldozer ends up with a lot of issues fanboys refused to see even though Anandtech and other sites did bring it up in previews. I guess it was just hope and an understandable disbelief that AMD would be behind for a decade till the next architecture. We can start at clockspeed but only being dual-channel is not helping memory bandwidth. I don't think there is enough L3 and they most definitely should have a short pipeline to crush through processes. They need a 1.4 to 1.6 in CBmarks or what is the point of the modules.

The module philosophy is probably close to the future of x86 but I imagine seeing Intel keeping HT enabled on the high-end SKUs. Also I think both of them want to switch FP calculation over to GPUs.

slickr - Wednesday, October 12, 2011 - link

Yeah I agree. To me Bulldozer comes like 1 year late.

It's just not competitive enough and the fact that you have to make a sacrifice to single threaded performance for multithreaded when even the multithreaded isn't that good and loses to 2600K is just sad.

They needed to win big with Bulldozer and they failed hard!

retrospooty - Wednesday, October 12, 2011 - link

Ya, it seems to be a pattern lately with the last few AMD architectures.

1. Hype up the CPU as the next big thing

2. Release is delayed

3. Once released, benchmarks are severely underwhelming

JasperJanssen - Wednesday, October 12, 2011 - link

4. Immediately start hyping up the next release as the salvation of all.

GatorLord - Thursday, October 20, 2011 - link

It looks to me like BD is the CPU beta bug sponge for Trinity and beyond. Everybody these days releases a beta before the money launch.

Hence the B3 stepping...and probably a few more now that a capable fab is onboard with TSMC. BD is not a CPU like we're used to...it's an APU/HPC engine designed to drive code and a Cayman class GPU at 28nm and lots of GHz...I get it now.

Also, the whole massive cache and 2B transistors, 800M dedicated to I/O, thing (SB uses 995M total) finally makes sense when you realize that this chip was designed to pump many smaller GPGPU caches full of raw data to process and combine all the outputs quickly.

Apparently GPUs compute very fast, but have slow fetch latencies and the best way to overcome that is by having their caches continuously and rapidly filled...like from the CPU with the big cache and I/O machine on the same chip...how smart..and convenient...and fast.

Can you say 'OpenCL'?

jleach1 - Friday, October 21, 2011 - link

I don't see how this can be considered an APU. This product isn't being marketed as a HPC proc., and i don't see the benefit of this architecture design in GPGPU environments at all.

It's sad...i've always given major kudos to AMD. Back in the days of the Athlon's prime, it was awesome to see David stomping Goliath.

But AMD has dropped the ball continuously since then. Thuban was nice, but it might as well be considered a fluke, seeing as AMD took a worthy architecture (Thuban) and ditched it for what's widely considered as a joke.

And the phrase "AMD dropped the ball" is an understatement.

They've ultimately failed. They haven't competed with Intel in years. They...have...failed. After thuban came out i was starting to think that the fact that they competed for years on price and clock speed alone was a fluke, and just a blip on the radar. Now i see it the opposite way...it seems that AMD merely puts out good processors every once in a while...and only on accident.

medi01 - Wednesday, October 12, 2011 - link

Well, if anand didn't badmouth AMD's GPU's on top of CPU's, we would see less "fanboys" complaining about anand's bias.

vol7ron - Wednesday, October 12, 2011 - link

By badmouth do you mean objectively tell the truth? Do you blame PCMark or FutureMark for any of that? Perhaps if all the tests just said that AMD was clearly better, it wouldn't be badmouthing anymore.