Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Comparing Percentile Numbers Between the GTX 980 Ti and Fury X

As the two top-end cards from both graphics silicon manufacturers were released this year, there was a big buzz about which is best for what. Ryan’s extensive review of the Fury X put the two cards head to head in a variety of contests. For DirectX 12, the situation is a little less clear cut for a number of reasons: games are yet to mature, drivers are still in development, and both sides are having to rethink their strategies when it comes to game engine integration and the benefits that might provide. Up until this point, DX12 contests have either been synthetic or have had some controversial issues. So for Fable Legends, we did some extra percentile-based analysis for NVIDIA vs. AMD at the top end.

For this set of benchmarks we ran our 1080p Ultra test with any adaptive frame rate technology enabled and recorded the result:

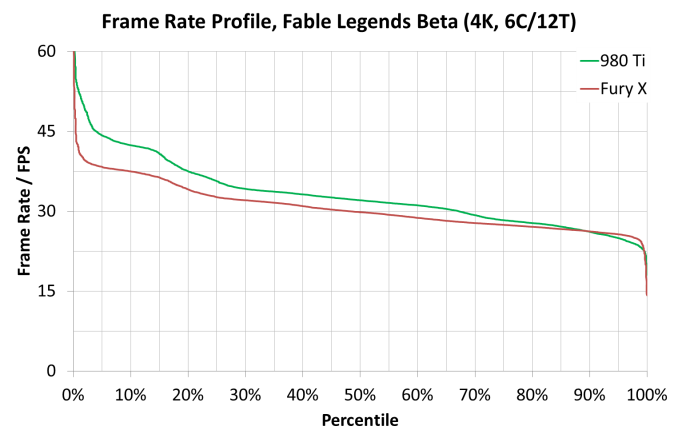

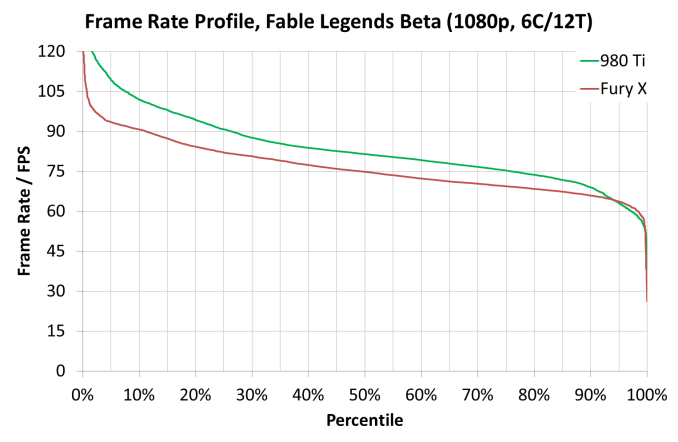

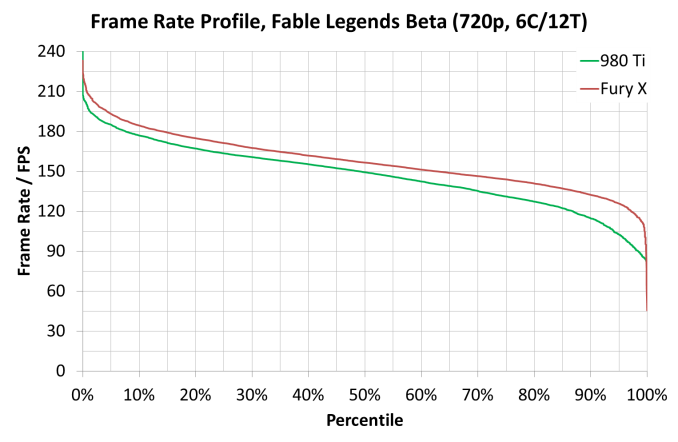

For these tests, the usual rules apply – GTX 980 Ti and Fury X, in our Core i7/i5/i3 configurations at all three resolution/setting combinations (3840x2160 Ultra, 1920x1080 Ultra and 1280x720 Low). Data is given in the form of frame rate profile graphs, similar to those on the last page.
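For readers who want to build a similar frame rate profile from their own captures, here is a minimal sketch. It is not the tooling used for the graphs in this article, which is not described here. It assumes a list of per-frame render times in milliseconds and uses the nearest-rank percentile method. The function name `frame_rate_profile` and the sample data are hypothetical.

```python
import math


def frame_rate_profile(frame_times_ms, percentiles=(1, 5, 25, 50, 75, 95, 99)):
    """Map each percentile to a frame rate (fps) using the nearest-rank method.

    Assumption: frame_times_ms holds one render time per frame, in milliseconds.
    A low percentile corresponds to the slowest (hardest) frames.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    if any(t <= 0 for t in frame_times_ms):
        raise ValueError("frame times must be positive")

    # Convert each frame time to an instantaneous frame rate, slowest first.
    fps_sorted = sorted(1000.0 / t for t in frame_times_ms)
    n = len(fps_sorted)

    profile = {}
    for p in percentiles:
        # Nearest rank: the smallest rank covering at least p percent of frames.
        rank = max(1, math.ceil(p * n / 100))
        profile[p] = fps_sorted[rank - 1]
    return profile


# Hypothetical capture of five frames (milliseconds per frame).
sample = [10, 20, 25, 40, 50]
print(frame_rate_profile(sample, percentiles=(20, 50, 100)))
```

Plotting the resulting values against their percentiles for two cards on the same axes gives a curve comparison of the kind discussed on this page. Other percentile definitions (for example, linear interpolation) would shift the numbers slightly.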

As always, Fable Legends is still in early access preview mode and these results may not be indicative of the final version, but at this point they still provide an interesting comparison.

At 3840x2160, the frame rate profiles from each card look the same no matter the processor used (one could argue that the Fury X is mildly ahead on the i3 at low frame rates), but the 980 Ti has a consistent gap across most of the profile range.

At 1920x1080, the Core i7 model gives a healthy boost to the GTX 980 Ti in high frame rate scenarios, though this seems to be accompanied by an extended drop-off region in high frame rate areas. It is also interesting that with the Core i3, the Fury X results jump up and match the GTX 980 Ti across almost the entire range. This again points to some of the data we saw on the previous page: at 1080p, having fewer cores somehow gave the results a boost due to lighting scenarios.

At 1280x720, as we saw in the initial GPU comparison page on average frame rates, the Fury X has the upper hand in all system configurations. Two other points are noticeable here. First, moving from the Core i5 to the Core i7, especially on the GTX 980 Ti, makes the easy frames go quicker and the harder frames take longer. Second, when we move to the Core i3, performance across the board drops like a stone, indicating a CPU-limited environment. This is despite the fact that with these cards, 1280x720 at low settings is unlikely to be used anyway.

141 Comments

Alexvrb - Friday, September 25, 2015

Ship them both to the East Coast and set up a Review Office / Beach Resort, complete with community events!

zimanodenea - Thursday, September 24, 2015

My Asus m5a97 has an option to do this.

mdriftmeyer - Thursday, September 24, 2015

Time to develop in a test harness of equal merits and scope across the globe for the reviewers. To do less is unprofessional. The whole point of a test harness is not to ductape simulations but to cover all bases.

Spunjji - Friday, September 25, 2015

Well said. This isn't some tinpot organisation, is it? ;)

Drumsticks - Thursday, September 24, 2015

That's a shame. I'd really like to see that comparison. With the improvements Zen should, in theory, bring, it could really give AMD its best chance in years to get some wind under its sails.

beck2050 - Thursday, September 24, 2015

A little too early to worry about. Hopefully both companies will improve when 12 becomes standard issue.

DrKlahn - Thursday, September 24, 2015

Epic has always worked closely with Nvidia and used their hardware, so the only thing that surprises me is that the gap doesn't favor Nvidia more. It's very early to make any predictions, but there are some interesting conversations on other forums about how both architectures behave in different situations. Nvidia's architecture does appear to have issues in some asynchronous workloads. What little evidence we have says this may be an issue in some games.

My own opinion is that with Nvidia's market dominance we will see most developers try to avoid situations where problems occur. As an AMD owner my main hope is that we see DX12 squeeze out proprietary codes and level the playing field more. I'm also happy that the latest Unreal engine appears to run well on both vendors' hardware.

jiao lu - Thursday, September 24, 2015

Not only a close working relationship. The Unreal 3/4 use Nvidia Physics sdk outright. Epic engine is terribly optimized for console right now. Basically it is a PC engine, churning out a pc demo now and then. Now far fewer AAA studios use unreal 4 like they did with unreal 3 in the ps 3/xbox 360 era. I am very much suspicious unreal 5 is not multi-threaded rendering enough, use dx 12 like do dx 11 before.

Midwayman - Thursday, September 24, 2015

Well, the xbox one is using AMD hardware and dx12. That's probably a bigger reason to keep it neutral than more nvidia share on the PC.

Spunjji - Friday, September 25, 2015

The PS4 is also using the same AMD GCN 1.0 architectures for CPU and GPU