Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Final Words

Non-final benchmarks are a tough element to define. On one hand, they do not show the full range of either performance or graphical enhancements, and could be subject to critical rendering paths that cause performance issues. On the other hand, they are near-final representations of the game developers’ aspirations, with the game engine almost at the point of being comfortable. To say that a preview benchmark is somewhere from 50% to 90% representative of the final product is not much of a bold statement to make in these circumstances, but between those two numbers can be a world of difference.

Fable Legends, developed by Lionhead Studios and published by Microsoft, uses EPIC’s Unreal Engine 4. All the elements of that previous sentence have gravitas in the gaming industry: Fable is a well-known franchise, Lionhead is a successful game developer, Microsoft is Microsoft, and EPIC’s Unreal engines have powered triple-A gaming titles for the best part of two decades. With the right ingredients, therein lies the potential for that melt-in-the-mouth cake as long as the oven is set just right.

Convoluted cake metaphors aside, this article set out to test the new Fable Legends benchmark in DirectX 12. As it stands, the software build we received indicated that the benchmark and game are still in 'early access preview' mode, so improvements may happen down the line. Users are interested in how DX12 games will both perform and scale on different hardware and different settings, and we aimed to fill in some of those blanks today. We used several AMD and NVIDIA GPUs, mainly focusing on NVIDIA’s GTX 980 Ti and AMD’s Fury X, with Core i7-X (six cores with HyperThreading), Core i5 (quad core, no HT) and Core i3 (two cores, HT) system configurations. These two GPUs were also tested at 3840x2160 (4K) with Ultra settings, 1920x1080 with Ultra settings and 1280x720 with low settings.

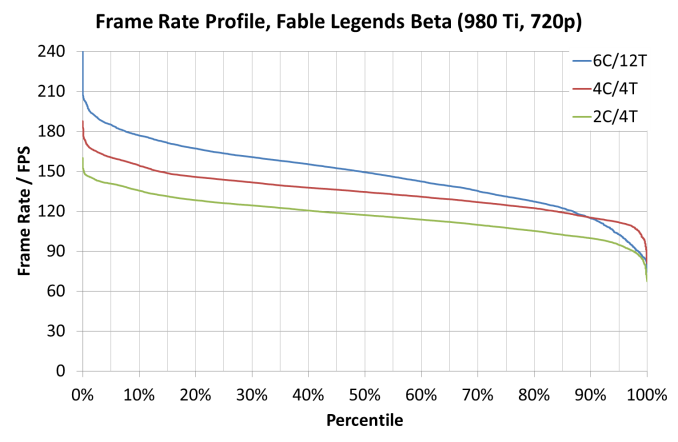

On pure average frame rate numbers, we saw NVIDIA’s GTX 980 Ti ahead by just under 10% in all configurations except for the 1280x720 settings, which gave the Fury X a substantial (10%+ on i5 and i3) lead. Looking at CPU scaling, gains only ever really occurred at the 1280x720 settings anyway, with both AMD and NVIDIA showing a 20-25% gain moving from a Core i3 to a Core i7. Some of the older cards showed a smaller 7% improvement over the same test.
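For readers who want to run the same kind of comparison on their own results, the percentages quoted here are simple relative differences in average frame rate. A minimal Python sketch follows; the function name and the sample frame rates are hypothetical illustrations, not figures from our testing.

```python
def percent_gain(baseline_fps, new_fps):
    """Relative change from baseline_fps to new_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (new_fps - baseline_fps) / baseline_fps * 100.0


# Hypothetical example: a card averaging 80 fps on a slower CPU
# and 100 fps on a faster one shows a 25% scaling gain.
gain = percent_gain(80.0, 100.0)
print(f"CPU scaling gain: {gain:.1f}%")
```

Note that the result depends on which figure is treated as the baseline: going from 80 to 100 fps is a 25% gain, while going from 100 to 80 fps is a 20% loss.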

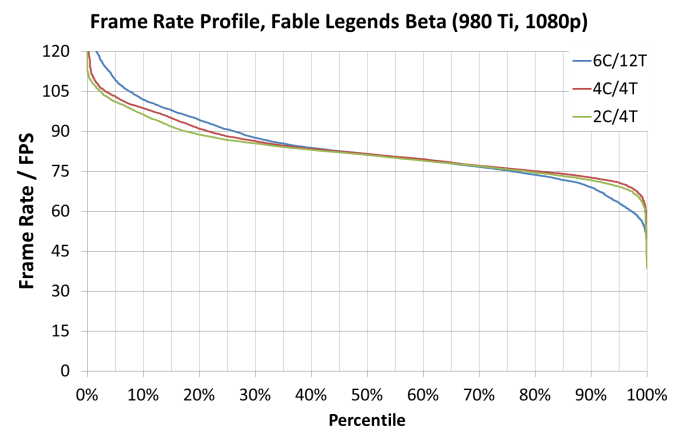

Looking through the frame rate profile data, specifically the minimum benchmark percentile numbers, we saw an interesting correlation: on the Core i7 (six core, HT) platform, frame rates on complex frames were beaten by the Core i5 and even the Core i3 setups, even though the Core i7 performed better on the frames that were easier to compute. In our graphs, this gave a tilted axis akin to a seesaw.

When comparing the separate compute profile time data provided by the benchmark, it showed that the Core i7 was taking longer for a few of the lighting techniques, perhaps relating to cache or scheduling issues at either the CPU end or the GPU end, which were alleviated with fewer cores in the mix. This may come down to a memory controller not being bombarded with higher priority requests causing a shuffle in the data request queue.
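As a rough illustration of how percentile frame rates like those discussed above can be derived from a per-frame log, here is a minimal Python sketch. It assumes a plain list of frame times in milliseconds and uses the nearest-rank percentile method. The benchmark's own log format and percentile method are not described in this article, so both are assumptions, and the sample data is made up.

```python
import math


def average_fps(frame_times_ms):
    """Average frame rate: total frames divided by total time in seconds."""
    if not frame_times_ms:
        raise ValueError("no frame times supplied")
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_fps(frame_times_ms, pct):
    """Frame rate met or exceeded by pct percent of frames (nearest rank)."""
    if not frame_times_ms:
        raise ValueError("no frame times supplied")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(frame_times_ms)  # ascending: quickest frames first
    # Nearest rank: the smallest frame time that covers pct% of all frames.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return 1000.0 / ordered[rank - 1]


# Hypothetical run: 99 quick frames at 10 ms and one complex frame at 50 ms.
frames = [10.0] * 99 + [50.0]
print(f"average:          {average_fps(frames):.1f} fps")
print(f"99th percentile:  {percentile_fps(frames, 99):.1f} fps")
print(f"slowest frame:    {percentile_fps(frames, 100):.1f} fps")
```

The average barely registers the single slow frame, which is why we look at the percentile figures separately when hunting for behavior on complex frames.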

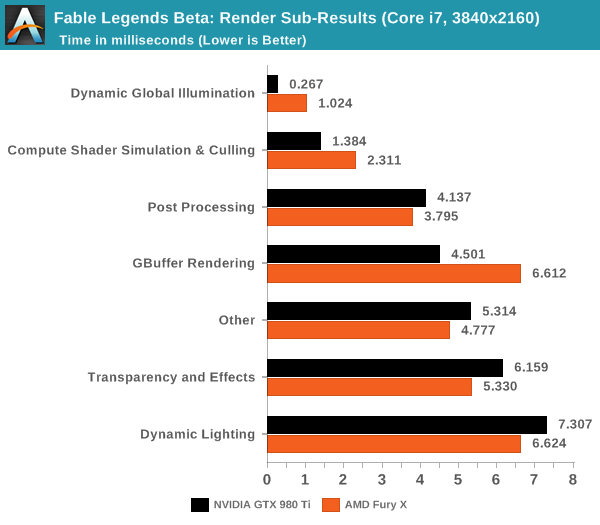

When we do a direct comparison of AMD’s Fury X and NVIDIA’s GTX 980 Ti in the render sub-category results for 4K using a Core i7, both AMD and NVIDIA have their strong points in this benchmark. NVIDIA favors illumination, compute shader work and GBuffer rendering, whereas AMD favors post processing, transparency and dynamic lighting.

DirectX 12 is coming in with new effects to make games look better and new features to allow developers to extract performance out of our hardware. Fable Legends uses EPIC’s Unreal Engine 4 with added effects and represents a multi-year effort to develop the engine around DX12's feature set and ultimately improve performance over DX11. With this benchmark we have begun to peek a little into what actual graphics performance in games might be like, and whether DX12 benefits users on low-powered CPUs or high-end GPUs more. That being said, there is a good chance that the performance we’ve seen today will change by release due to driver updates and/or optimizing the game code. Nevertheless, at this point it does appear that a reasonably strong card such as the 290X or GTX 970 is needed to get a smooth 1080p experience (at Ultra settings) with this demo.

141 Comments

tackle70 - Thursday, September 24, 2015 - link

Nice article. Maybe tech forums can now stop with the "AMD will be vastly superior to Nvidia in DX12" nonsense.

cmdrdredd - Thursday, September 24, 2015 - link

Leads me to believe more and more that Stardock is up to shenanigans just a bit or that not every game will use certain features that DX12 can perform and Nvidia is not held back in those games.

Jtaylor1986 - Thursday, September 24, 2015 - link

I'd say Ashes is a far more representative benchmark. What is the point of doing a landscape simulator benchmark. This demo isn't even trying to replicate real world performance

cmdrdredd - Thursday, September 24, 2015 - link

Are you nuts or what? This is a benchmark of the game engine used for Fable Legends. It's as good a benchmark as any when trying to determine performance in a specific game engine.

Jtaylor1986 - Thursday, September 24, 2015 - link

Except its completely unrepresentative of actual gameplay unless this grass growing simulator.

Jtaylor1986 - Thursday, September 24, 2015 - link

"The benchmark provided is more of a graphics showpiece than a representation of the gameplay, in order to show off the capabilities of the engine and the DX12 implementation. Unfortunately we didn't get to see any gameplay in this benchmark as a result, which would seem to focus more on combat."

LukaP - Thursday, September 24, 2015 - link

You dont need gameplay in a benchmark. you need the benchmark to display common geometry, lighting, effects and physics of an engine/backend that drives certain games. And this benchmark does that. If you want to see gameplay, there are many terrific youtubers who focus on that, namely Markiplier, NerdCubed, TotalBiscuit and others

Mr Perfect - Thursday, September 24, 2015 - link

Actual gameplay is still important in benchmarking, mainly because that's when framerates usually tank. An empty level can get fantastic FPS, but drop a dozen players having an intense fight into that level and performance goes to hell pretty fast. That's the situation where we hope to see DX12 outshine DX11.

Stuka87 - Thursday, September 24, 2015 - link

Wrong, a benchmark without gameplay is worthless. Look at Battlefield 4 as an example. Its built in benchmarks are worthless. Once you join a 64 player server, everything changes. This benchmark shows how a raw engine runs, but is not indicative of how the game will run at all.

Plus its super early in development with drivers that still need work, which the article states that AMD's driver arrived too late.

inighthawki - Thursday, September 24, 2015 - link

Yes, but when the goal is to show improvements in rendering performance, throwing someone into a 64 player match completely skews the results. The CPU overhead of handling a 64 player multiplayer match will far outweigh the small changes in CPU overhead from a new rendering API.