The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

The Fiji GPU: Go Big or Go Home

Now that we’ve had a chance to take a look at the architecture backing Fiji, let’s talk about the Fiji GPU itself.

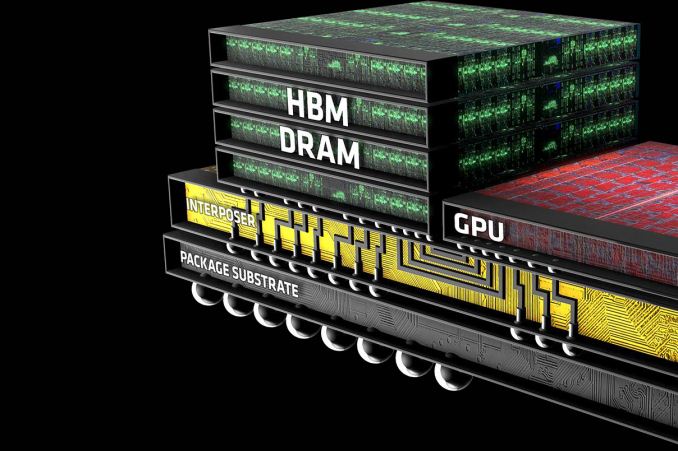

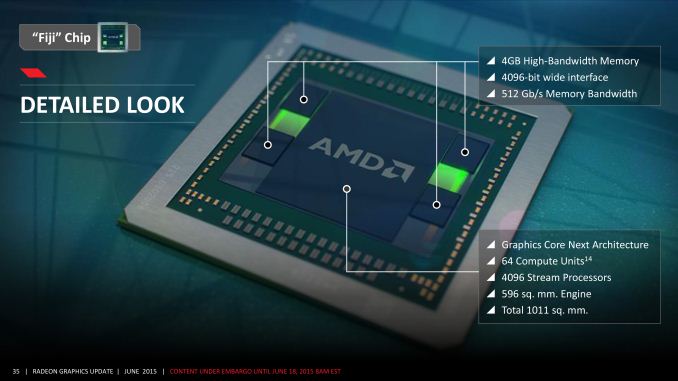

Fiji’s inclusion of High Bandwidth Memory (HBM) technology complicates the picture somewhat when talking about GPUs. Whereas past GPUs were defined by the GPU die itself and then the organic substrate package it sits on, the inclusion of HBM requires a third layer, the silicon interposer. The job of the interposer is to sit between the package and the GPU, serving as the layer that connects the on-package HBM memory stacks with the GPU. Essentially a very large chip without any expensive logic on it, the silicon interposer allows for finer, denser signal routing than organic packaging is capable of, making the ultra-wide 4096-bit HBM bus viable for the first time.
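To put the width of that bus in perspective, here is a rough sketch of the peak-bandwidth arithmetic. The 4096-bit width comes from the text above; the 1 Gbps per-pin rate for HBM and the 512-bit, 5 Gbps GDDR5 configuration used for comparison are assumptions brought in for illustration, not figures stated in this section.

```python
# Peak bandwidth of a memory bus: width (bits) x per-pin data rate (Gbps) / 8 bits per byte.

def peak_bandwidth_gbs(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Return theoretical peak bandwidth in GB/s."""
    return bus_width_bits * gbps_per_pin / 8


# 4096-bit width is from the article; 1 Gbps per pin is an assumed HBM data rate.
hbm = peak_bandwidth_gbs(4096, 1.0)

# Assumed comparison point: a 512-bit GDDR5 bus running at 5 Gbps per pin.
gddr5 = peak_bandwidth_gbs(512, 5.0)

print(f"HBM:   {hbm:.0f} GB/s")
print(f"GDDR5: {gddr5:.0f} GB/s")
```

Under those assumptions, a very wide but slow bus comes out ahead of a narrower, much faster one, which is the trade the interposer makes possible.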

We’ll get to HBM in detail in a bit, but it’s important to call out the impact of HBM and the interposer early, since they have a distinct impact on how Fiji was designed and what its capabilities are.

As for Fiji itself, it is unlike any GPU AMD has built before, and not only due to the use of HBM. More than anything else, it’s simply huge: 596mm² to be precise. As we mentioned in our introduction, AMD has traditionally shied away from big chips, even after the “small die” era ended, and for good reason. Big chips are expensive to develop, expensive to produce, take longer to bring to market, and yield worse than small chips (this being especially the case early on for 40nm). Altogether they’re riskier than smaller chips, and while there are times when they are necessary, AMD had never reached that point until now.

The end result is that for the first time since the unified shader era began, AMD has gone toe-to-toe with NVIDIA on die size. Fiji’s 596mm² die size is just 5mm² (<1%) smaller than NVIDIA’s GM200, and more notably still hits TSMC’s 28nm reticle limit. TSMC can’t build chips any bigger than this; Fiji is as big a chip as AMD can order.

| AMD Big GPUs | Die Size | Native FP64 Rate |
|---|---|---|
| Fiji (GCN 1.2) | 596mm² | 1/16 |
| Hawaii (GCN 1.1) | 438mm² | 1/2 |
| Tahiti (GCN 1.0) | 352mm² | 1/4 |
| Cayman (VLIW4) | 389mm² | 1/4 |
| Cypress (VLIW5) | 334mm² | 1/5 |
| RV790 (VLIW5) | 282mm² | N/A |

Looking at Fiji relative to AMD’s other big GPUs, it becomes very clear very quickly just how significant this change is for AMD. When Hawaii was released in 2013 at 438mm², it was already AMD’s biggest GPU ever for its time. And yet Fiji dwarfs it, coming in 158mm² (36%) larger. The fact that Fiji comes in the latter half of the 28nm process’s lifetime means that such a large GPU is not nearly as risky now as it would have been in 2011/2012 (NVIDIA surely took some licks internally on GK110), but still, nothing else we can show you today can really sell the significance of Fiji to AMD as much as the die size can.
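For readers who want to check the die size comparison, the arithmetic can be sketched as follows. The areas are the ones listed in the table above; the helper name `growth` is ours and purely illustrative.

```python
# Die sizes in mm^2, taken from the table of AMD's big GPUs.
die_mm2 = {
    "Fiji": 596,
    "Hawaii": 438,
    "Tahiti": 352,
    "Cayman": 389,
    "Cypress": 334,
    "RV790": 282,
}


def growth(new_area: float, old_area: float) -> tuple[float, float]:
    """Return (absolute increase in mm^2, relative increase in percent)."""
    delta = new_area - old_area
    return delta, 100 * delta / old_area


fiji = die_mm2["Fiji"]
for name, area in die_mm2.items():
    if name == "Fiji":
        continue
    delta, pct = growth(fiji, area)
    print(f"Fiji vs. {name}: +{delta:.0f} mm^2 ({pct:.0f}% larger)")
```

The Hawaii line reproduces the 158mm² and 36% figures quoted above; the remaining lines simply extend the same comparison to the older chips.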

And the fun doesn’t stop there. Along with producing the biggest die they could, AMD has also more or less gone the direction of NVIDIA and Maxwell in the case of Fiji, building what is unambiguously the most gaming/FP32-centric GPU the company could build. With GCN supporting power-of-two FP64 rates between 1/2 and 1/16, AMD has gone for the bare minimum in FP64 performance that their architecture allows, leading to a 1/16 FP64 rate on Fiji. This is a significant departure from Hawaii, which implemented native support for a 1/2 rate, and on consumer parts offered a handicapped 1/8 rate. Fiji will not be an FP64 powerhouse – its 4GB of VRAM is already perhaps too large a handicap for the HPC market – so instead we get AMD’s best FP32 GPU going against NVIDIA’s best FP32 GPU.
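To see what these rate fractions mean in practice, here is a minimal sketch of the usual peak-throughput estimate. The FP64 rates are the ones given above; the shader counts and clock speeds (4096 shaders at 1050MHz for Fiji, 2816 shaders at 1000MHz for Hawaii) are assumptions taken from the retail cards rather than from this section, and real-world throughput will be lower than these theoretical peaks.

```python
def peak_gflops(shaders: int, clock_mhz: float, rate: float = 1.0) -> float:
    """Theoretical peak GFLOPS: 2 FLOPs (one fused multiply-add) per shader per clock,
    scaled by the FP64 rate (1.0 means full-rate FP32)."""
    return shaders * 2 * clock_mhz / 1000 * rate


# Assumed configurations (not stated in this section).
FIJI = {"shaders": 4096, "clock_mhz": 1050}
HAWAII = {"shaders": 2816, "clock_mhz": 1000}

fiji_fp32 = peak_gflops(**FIJI)
fiji_fp64 = peak_gflops(**FIJI, rate=1 / 16)         # Fiji's native 1/16 rate
hawaii_fp64_native = peak_gflops(**HAWAII, rate=1 / 2)    # Hawaii's native 1/2 rate
hawaii_fp64_consumer = peak_gflops(**HAWAII, rate=1 / 8)  # consumer 1/8 rate

print(f"Fiji FP32:              {fiji_fp32:.1f} GFLOPS")
print(f"Fiji FP64 (1/16):       {fiji_fp64:.1f} GFLOPS")
print(f"Hawaii FP64 (1/2):      {hawaii_fp64_native:.1f} GFLOPS")
print(f"Hawaii FP64 (1/8):      {hawaii_fp64_consumer:.1f} GFLOPS")
```

Under these assumptions, Fiji’s FP64 peak lands below even the handicapped consumer Hawaii figure, despite Fiji having far more FP32 throughput.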

AMD’s final ace up their sleeve on die size is HBM. Along with its bandwidth and power benefits, HBM is also much simpler to implement, requiring less GPU space for PHYs than GDDR5 does. This is in part because HBM stacks have their own logic layer, which moves some of the logic onto each stack, and in part because the signaling logic that remains doesn’t have to be nearly as complex since the frequencies are so much lower. 4096 bits of HBM PHYs still take up a fair bit of space – though AMD won’t tell us how much – but it’s notably less than the amount of space AMD was losing to Hawaii’s GDDR5 memory controllers.

The end result is that not only has AMD built their biggest GPU ever, but they have done virtually everything they can to maximize the amount of die space they get to allocate to FP32 and rendering resources. Simply put, AMD has never reached so high and aimed for parity with NVIDIA in this manner.

Ultimately this puts Fiji’s transistor count at 8.9 billion transistors, even more than the 8 billion transistors found in NVIDIA’s GM200, and, as expected, significantly more than Hawaii’s 6.2 billion. Interestingly enough, on a relative basis this is almost exactly the same increase we saw with Hawaii; Fiji packs in 43.5% more transistors than Hawaii, and Hawaii packed in 43.9% more transistors than Tahiti. So going by transistors alone, Fiji is very much to Hawaii what Hawaii was to Tahiti.
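The generational comparison above can be reproduced with a few lines of arithmetic. The Fiji and Hawaii counts are the ones quoted in the text; the 4.31 billion figure for Tahiti is not given in this section and is included here as an assumption.

```python
# Transistor counts in billions.
transistors_bn = {
    "Tahiti": 4.31,  # assumed; not stated in this section
    "Hawaii": 6.2,
    "Fiji": 8.9,
}


def pct_increase(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100


fiji_over_hawaii = pct_increase(transistors_bn["Fiji"], transistors_bn["Hawaii"])
hawaii_over_tahiti = pct_increase(transistors_bn["Hawaii"], transistors_bn["Tahiti"])

print(f"Fiji over Hawaii:   {fiji_over_hawaii:.1f}%")
print(f"Hawaii over Tahiti: {hawaii_over_tahiti:.1f}%")
```

With those inputs the two increases round to 43.5% and 43.9%, matching the figures quoted above.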

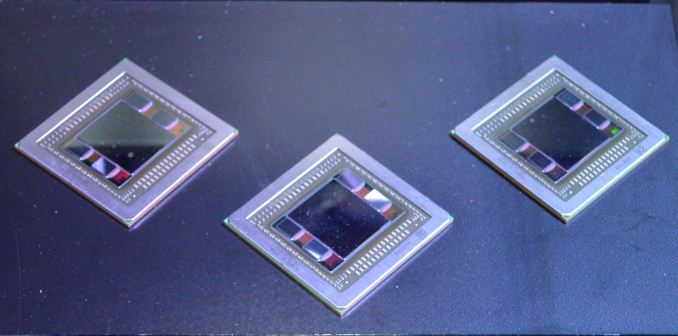

Finally, as large as the Fiji GPU is, the silicon interposer it sits on is even larger. The interposer measures 1011mm², roughly 1.7 times the size of Fiji. Since Fiji and its HBM stacks need to fit on top of it, the interposer must be very large to do its job, and in the process it pushes its own limits. The actual interposer die is believed to exceed the reticle limit of the 65nm process AMD is using to have it built, and as a result the interposer is carefully constructed so that only the areas that need connectivity receive metal layers. This allows AMD to put down such a large interposer without actually needing a fab capable of reaching such a large reticle limit.

What’s interesting from a design perspective is that the interposer and everything on it is essentially the heart and soul of the GPU. There is plenty of power regulation circuitry on the organic package and even more on the board itself, but within the 1011mm² floorplan of the interposer, all of Fiji’s logic and memory is located. By mobile standards it’s very nearly an SoC in and of itself; it needs little more than external power and I/O to operate.

458 Comments

Scali - Tuesday, July 7, 2015 - link

Even better, there are various vendors that sell a short version of the GTX970 (including Asus and Gigabyte for example), so it can take on the Nano card directly, as a good choice for a mini-ITX based HTPC. And unlike the Nano, the 970 DOES have HDMI 2.0, so you can get 4k 60 Hz on your TV.

Oxford Guy - Thursday, July 9, 2015 - link

28 GB/s + XOR contention is fast performance indeed, at half the speed of a midrange card from 2007.

Gothmoth - Monday, July 6, 2015 - link

so in short another BULLDOZER.... :-(

after all the hype not enough and too late.

i agree the card is not bad.. but after all the HYPE it IS a disappointment.

OC results are terrible... and AMD said it will be an overclockers dream.

add to that that i read many complains about the noisy watercooler (yes for retail versions not early preview versions).

iamserious - Monday, July 6, 2015 - link

It looks ugly. Lol

iamserious - Monday, July 6, 2015 - link

Also. I understand it's a little early but I thought this card was supposed to blow the GTX 980Ti out of the water with its new memory. The performance to price ratio is decent but I was expecting a bit larger jump in performance increase. Perhaps with the driver updates things will change.

Scali - Tuesday, July 7, 2015 - link

Hum, unless I missed it, I didn't see any mention of the fact that this card only supports DX12 level 12_0, where nVidia's 9xx-series support 12_1. That, combined with the lack of HDMI 2.0 and the 4 GB limit, makes the Fury X into a poor choice for the longer term. It is a dated architecture, pumped up to higher performance levels.

FMinus - Tuesday, July 7, 2015 - link

Whilst it's beyond me why they skimped on HDMI 2.0 - there's adapters if you really want to run this card on a TV. It's not such a huge drama tho, the cards will drive DP monitors in the vast majority, so, I'm much more sad at the missing DVI out.

Scali - Wednesday, July 8, 2015 - link

I think the reason why there's no HDMI 2.0 is simple: they re-used their dated architecture, and did not spend time on developing new features, such as HDMI 2.0 or 12_1 support. With nVidia already having this technology on the market for more than half a year, AMD is starting to drop behind. They were losing sales to nVidia, and their new offerings don't seem compelling enough to regain their lost marketshare, hence their profits will be limited, hence their investment in R&D for the next generation will be limited. Which is a problem, since they need to invest more just to get where nVidia already is.

It looks like they may be going down the same downward spiral as their CPU division.

sa365 - Tuesday, July 7, 2015 - link

Well at least AMD aren't cheating by allowing the driver to remove AF despite what settings are selected in game. Just so they can win benchmarks. How about some fair, like for like benchmarking and see where these cards really stand.

FourEyedGeek - Tuesday, July 7, 2015 - link

As for the consoles having 8 GB of RAM, not only is that shared, but the OS uses 3 GB to 3.5 GB, meaning there is only a max of 5 GB for the games on those consoles. A typical PC being used with this card will have 8 to 16 GB plus the 4 GB on the card. Giving a total of 12 GB to 20 GB. In all honesty at 4K resolutions, how important is Anti-Aliasing on the eye? I can't imagine it being necessary at all, let alone 4xMSAA.