The NVIDIA GeForce GTX 980 Ti Review

by Ryan Smith on May 31, 2015 6:00 PM EST

Compute

Shifting gears, we have our look at compute performance. Since GTX Titan X has no compute feature advantage - no fast double precision support like what's found in the Kepler generation Titans - the performance difference between the GTX Titan X and GTX 980 Ti should be very straightforward.

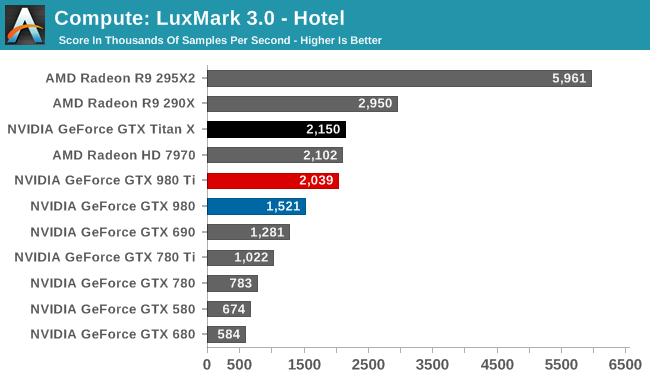

Starting us off for our look at compute is LuxMark 3.0, the latest version of the official benchmark of LuxRender 2.0. LuxRender’s GPU-accelerated rendering mode is an OpenCL based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

With the pace set for GM200 by GTX Titan X, there’s little to say here that hasn’t already been said. Maxwell does not fare well in LuxMark, and while GTX 980 Ti continues to stick very close to GTX Titan X, it nonetheless ends up right behind the Radeon HD 7970 in this benchmark.

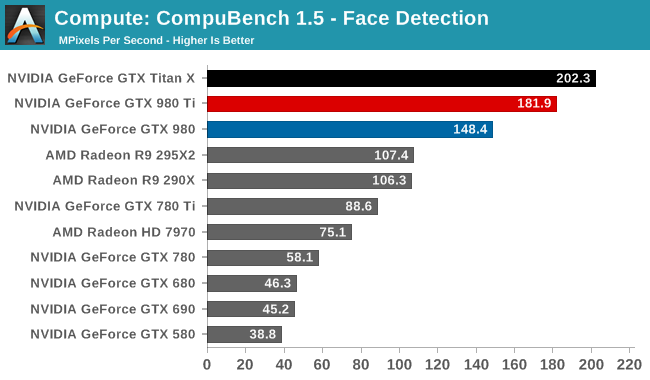

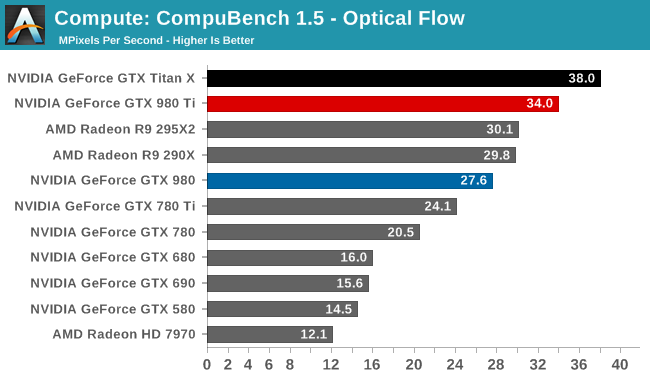

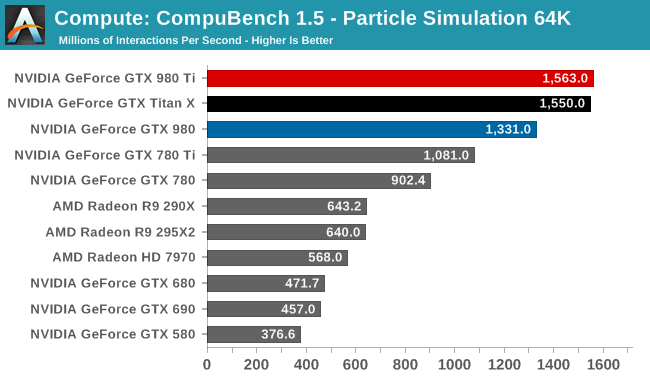

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Although GTX 980 Ti struggled at LuxMark, the same cannot be said for CompuBench. It takes the second spot in all 3 sub-tests, right behind GTX Titan X, but the gap between the two GM200 cards is a bit wider than normal, causing GTX 980 Ti to trail a little more significantly than in other tests. Given the short nature of these tests, GTX 980 Ti doesn't get to enjoy its usual clockspeed advantage, making this one of the only benchmarks where the theoretical 9% performance difference between the cards becomes a reality.
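As a rough check on that 9% figure, the sketch below compares the two cards' shader counts. The core counts (3072 for GTX Titan X, 2816 for GTX 980 Ti) come from NVIDIA's published specifications rather than from this page, and the calculation assumes both cards run at identical clockspeeds, which is only approximately true in practice. The function name is purely illustrative.

```python
# CUDA core counts from NVIDIA's published specs (an assumption here;
# they are not stated in this section of the review).
TITAN_X_CORES = 3072     # 24 SMMs x 128 cores each
GTX_980_TI_CORES = 2816  # 22 SMMs x 128 cores each


def theoretical_advantage(cores_a: int, cores_b: int) -> float:
    """Fractional throughput advantage of card A over card B,
    assuming identical clockspeeds and perfect scaling with core count."""
    return cores_a / cores_b - 1.0


advantage = theoretical_advantage(TITAN_X_CORES, GTX_980_TI_CORES)
print(f"GTX Titan X theoretical advantage: {advantage:.1%}")
```

Under those assumptions the gap works out to roughly 9%, which is the ceiling that short tests such as CompuBench's can approach when GTX 980 Ti has no clockspeed edge to offset its smaller shader count.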

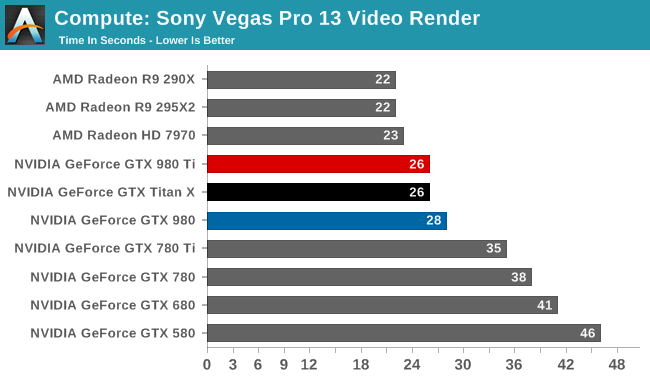

Our 3rd compute benchmark is Sony Vegas Pro 13, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and in the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

Traditionally this is a benchmark that favors AMD. GTX 980 Ti fares as well as GTX Titan X, closing the gap some, but it's still not enough to surpass Radeon HD 7970, let alone Radeon R9 290X.

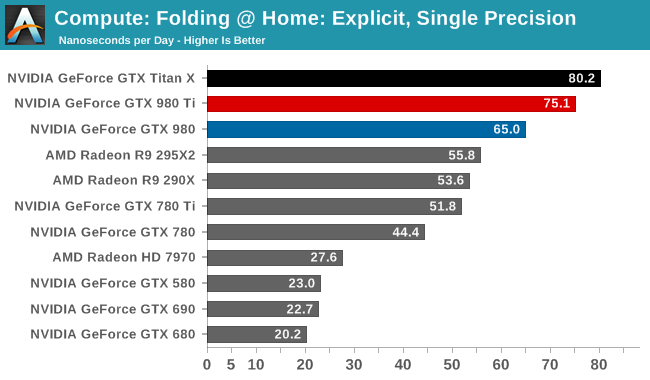

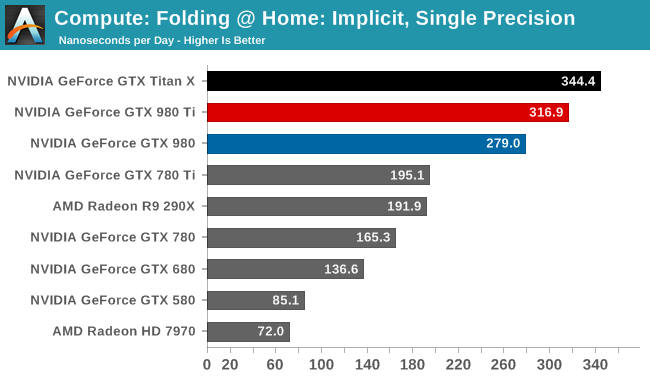

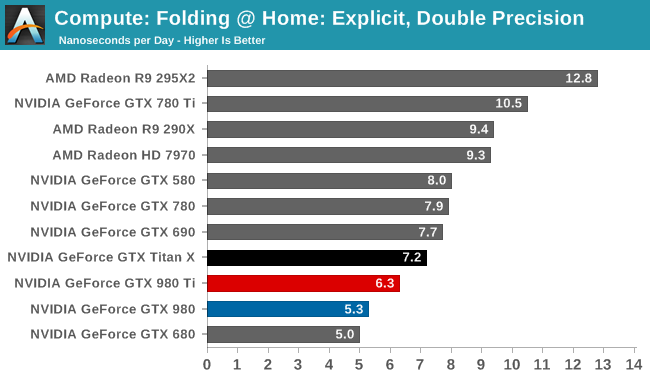

Moving on, our 4th compute benchmark is FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 17.

Folding @ Home’s single precision tests reiterate GM200's FP32 compute credentials. Second only to GTX Titan X, GTX 980 Ti fares very well here.

Meanwhile Folding @ Home’s double precision test reiterates GM200's poor FP64 compute performance. At 6.3 ns/day, GTX 980 Ti, like GTX Titan X, occupies the lower portion of our benchmark charts, below AMD's cards and NVIDIA's high-performance FP64 cards.

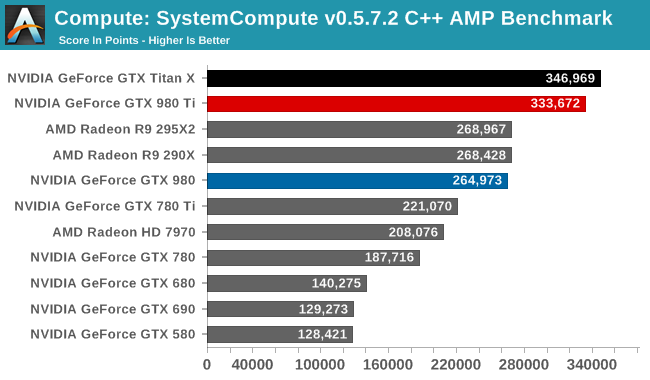

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

We end our benchmarks where we started: with the GTX 980 Ti slightly trailing the GTX Titan X, and with the two GM200 cards taking the top two spots overall. So as with GTX Titan X, GTX 980 Ti is a force to be reckoned with for FP32 compute, which for a pure consumer card should be a good match for consumer compute workloads.

290 Comments

RaistlinZ - Sunday, May 31, 2015

What more would a review of the 960 tell you that you don't already know, honestly? I'd rather read reviews about interesting products like the 980 Ti. People need to let the 960 review go already, geez.

Michael Bay - Sunday, May 31, 2015

I only trust AT numbers and am in no hurry to upgrade.

God I wish they would compare Baytrail/Cherrytrail to i3s.

Brett Howse - Sunday, May 31, 2015

I did compare Cherry Trail to the i3 SP3 in the Surface 3 review. Was there more you were looking for?

Michael Bay - Monday, June 1, 2015

I'm trying to get a cheap small notebook for my father. He is currently on an i3-380UM and the choice is between the N3558 and the i3-4030U. Workload is strictly internet browsing/MS Office.

Not much point in changing anything if performance is going to be worse than it was...

sandy105 - Monday, June 1, 2015

Exactly, it would be interesting to see how much faster than Baytrail they are.

DanNeely - Sunday, May 31, 2015

DVI may be an obsolescent standard at this point; but 4/5k gaming is still expensive enough that a lot of the people buying into it now are ones who're upgrading from older 2560x1600 displays that don't do DP/HDMI 2. A lot of those people will probably keep using their old monitor as a secondary display after getting a new higher resolution one (I know I plan to); and good DL-DVI to DisplayPort adapters are still relatively expensive at ~$70. (There're cheaper ones; but they've all got lots of bad reviews from people who found they weren't operating reliably and were generating display artifacts: messed up scan lines.) Unless it dies first, I'd like to be able to keep using my existing NEC 3090 for a few more years without having to spend money on an expensive dongle.

YazX_ - Sunday, May 31, 2015

Dude, the majority are still playing at 1920x1080 and just a few are now making the leap to 2560x1440. I have been gaming at 1440p for two years and am not planning to go 4K anytime soon, since hardware is still not mature enough to play at 4K comfortably with a single video card.

Thus, DVI is not going anywhere, since dual-link DVI supports 1440p and most 1080p gamers are probably using DVI, unless they have G-Sync or want to use Adaptive V-Sync, in which case they have to use DP. And don't forget that there are many people who bought 27" Korean 1440p monitors that have nothing but DVI ports.

DanNeely - Sunday, May 31, 2015

If you're playing at 1920/60Hz this card's massive overkill, and in any event it's a non-issue for you because your monitor is only using a single link in the DVI and you can use a dirt cheap passive DVI-HDMI/DP adapter now; and worst case would only need a cheap single link adapter in the future.

My comment was directed toward Ryan's comment on page 2 (near the bottom, above the last picture) suggesting that the DVI port wasn't really needed since any monitor it could drive wouldn't need this much horsepower to run games.

FlushedBubblyJock - Wednesday, June 10, 2015

Totally disagree - I game at 1920x1200, the only rez the 980 Ti is capable of without knocking down the eye candy.

Kutark - Monday, June 1, 2015

Exactly. I literally just now upgraded to a 1440p monitor, and I can't even express in words how little of a sh*t I give about 4K gaming. I've been a hardware nerd for a long time, but when I got into home theater I learned just how much resolution actually matters. 4K is overkill for a 120" projected image at a 15' seating distance. 4K at normal desk viewing distances is way beyond overkill. They've done tests on fighter pilots who have ridiculous vision, like 20/7.5 and such, and even they can't see a difference at those seating distances. 4K is almost as much of a marketing BS gimmick as 3D was for TVs.

Anyways, I'm clearly getting angry. But the point still stands: every single gamer I know is still on 1080p, and I was the first to splurge on a 1440p monitor. And now it's put me into a position where my SLI'd 760s aren't really doing the deed, especially being 2GB cards. So, the 980 Ti fits the bill for my G-Sync 144Hz 1440p monitor just about perfectly.