The Intel Xeon E7-8800 v3 Review: The POWER8 Killer?

by Johan De Gelas on May 8, 2015 8:00 AM EST - Posted in

- CPUs

- IT Computing

- Intel

- Xeon

- Haswell

- Enterprise

- server

- Enterprise CPUs

- POWER

- POWER8

POWER8 Servers: The Reality Check

As we've just seen, the specs of the POWER8 as announced at launch are very impressive. But what about in the real world? The top models (10-12 cores at 4 GHz+, 2TB per socket) are still limited to the extremely expensive E870/E880, which typically cost around three times as much as (or more than) a comparable Xeon E7 system. But there is light at the end of the tunnel: "PowerLinux" quad socket systems are more expensive than comparable x86 systems, but only by 10 to 30%.

The real competition for x86 will probably have to come from the third parties of the OpenPOWER Foundation. IBM sells them POWER8 chips at much more reasonable prices ($2k - $3k), so it is possible to build a reasonably priced POWER8 system. The POWER8 chips sold to third parties are somewhat "lighter" versions, but that is more of an advantage than you would think. For example, the slightly lower clockspeed keeps power consumption down (190W TDP). These chips also have only 4 (instead of 8) memory buffer chips, which "limits" them to 1 TB of memory, but again this saves quite a bit of power, between 50W and 80W. In other words, the POWER8 chips available to third parties are much more reasonable and even more competitive than the power-gobbling, ultra-expensive behemoths that got all the attention at launch.

Tyan already has a single-socket server, and several Taiwanese (Wistron) and Chinese vendors are developing 2-socket systems. Quad socket models are not yet on the horizon as far as we know, but that is probably going to change soon.

POWER8 vs. Xeon E5 v3: SPECing It Out

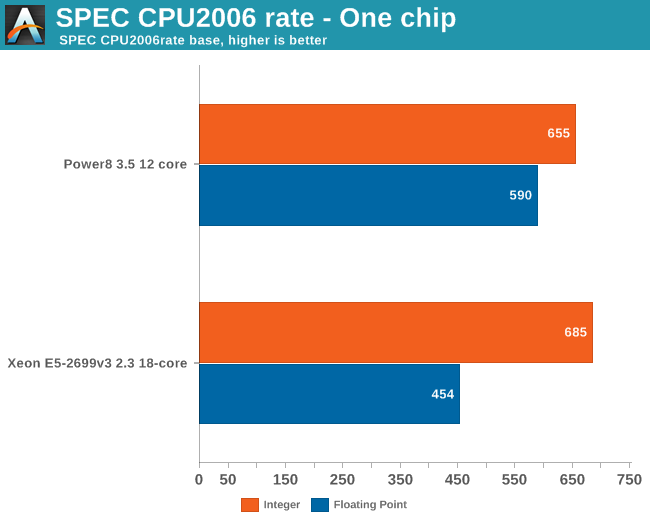

Unfortunately, we did not have access to a full-blown POWER8 system at this time. But as our loyal readers know, we do not limit our server testing to the x86 world (see here and here). So until a POWER8 system arrives, we'll have to check out the available industry-standard benchmarks. To that end, we looked up the SPEC CPU2006 numbers for a single-socket CPU.

The 12-core POWER8 (the single-socket chip found in the more reasonably priced servers) performs very well. Its integer performance is only a few percent lower than the Intel chip's, and its floating point performance is well ahead of the Xeon.

Overall, the POWER8 is quite capable of keeping up with the Xeon E5-2699 v3. And don't let the "2.3 GHz" official clockspeed fool you into thinking that the Xeons are clocked unnecessarily low, either: in SPECint, the Xeon is running at 2.8 GHz most of the time.

Ultimately, the POWER8 is able to offer slightly higher raw performance than the Intel CPUs; however, it won't be able to do so at the same performance per watt. Meanwhile, the reasonable pricing of the POWER8 chips should result in third-party servers that are strongly competitive with the Xeon on a performance-per-dollar basis. Reasonably priced, well-performing dual and quad socket Linux on Power servers should be possible very soon.

146 Comments

DanNeely - Saturday, May 9, 2015

The workloads that you'd be buying racks of servers for are better handled with individually less expensive systems. These 4/8-way leviathans are for the one or two core business functions that only scale up, not out, so the typical customer would only be buying a handful of these at most.

The other half is that even a thousand or two thousand dollars per year in increased operating costs for the server is dwarfed not only by the price of the server but also by the price of the software, which makes the server look cheap. The best server for those applications isn't the server that costs the least to run. It's not the server with the cheapest hardware price either. It's the one that lets you get away with the cheapest licensing fee for the application you're running.

One extreme example from the better part of a decade ago was that, prior to being acquired by Oracle, Sun was making extremely wide processors that were very competitive on a per-socket basis but used a huge number of really slow cores/threads to get their throughput. At that time Oracle licensed its DB on a per-core (per-thread?) basis, not per socket. As a result, an $80-100k HP/IBM server was a cheaper way to run a massive Oracle database than a $30k Sun box, even if your workload was such that the cheap Sun hardware performed equally well, because Oracle's licensing ate several times the difference in hardware prices.

KateH - Saturday, May 9, 2015

I think the Intel transition was almost entirely dictated by the lack of mobile options for PowerPC. 125W each for 970MPs sounds like a lot, but keep in mind that the Mac Pro has been using a pair of 100-130W Xeons since the beginning in 2008. Workstations and HPC are much, much less constrained by TDP. The direction that POWER and SPARC have been taking for the past decade, cramming loads of SMT-enabled, high-clocked cores into a single chip, somewhat negates the power concerns: if a POWER8 is pulling a couple hundred watts for a 12C/96T chip, that's probably going to be worth it for the users who need that much grunt. Even Intel's E7-8890 v3 is a 165W chip!

melgross - Saturday, May 9, 2015

Actually, the G5 was moving faster than NetBurst was. In a bit over a year, it would have caught up, then moved past. Intel's unexpected move to the older "M" series for the Yonah generation surprised everyone (particularly AMD) and allowed Apple to make that move. It never would have happened with NetBurst.

Apple switched for two reasons. One was that IBM failed to deliver a mobile G5 chip right at the time when laptop sales were increasing faster than desktop sales, and Apple was forced into using two G4s instead, which wasn't a good alternative. IBM delivered the chip after Apple switched over, but it was too late.

The second reason was that Apple wanted better Windows compatibility, which could only occur using x86 chips.

Kevin G - Saturday, May 9, 2015

IBM did fail to make a G5 chip for laptops, which significantly hurt Apple. Though Apple did have a plan B: PowerPC chips from P.A. Semi. Also, Apple never shipped a laptop with two G4 chips.

And Apple didn't care about Windows software compatibility. Apple did care about hardware support, as many chips couldn't be used in big-endian mode, or big-endian operation made writing firmware for them complicated.

And the real second reason Apple ditched PowerPC was the chipsets. The PCIe-based G5s actually had a chipset that was more expensive than the CPUs they used. It was composed of a DDR2/HyperTransport north bridge, two memory buffers, a HyperTransport-to-PCIe bridge chip from Broadcom/ServerWorks, a south bridge chip to handle SATA/USB I/O, a FireWire 800 chip, and a pair of Broadcom Ethernet chips. The dual-core 2.5 GHz PowerPC 970MPs at the time were going for between $200 and $250 apiece. Not only was the hardware complex for the motherboards, but so was the software side. PowerPC 970s cannot boot themselves, as they need a service processor to initialize the FSB. The PowerPC 970 chipsets Apple used have an embedded PowerPC 400 series chip in them that initializes and calibrates the PowerPC's high-speed FSB before handing off the rest of the boot process.

SnowCat00 - Friday, May 8, 2015

I would question how accurate that chart is... Mainframe sales are up: http://www.businessinsider.com/mainframe-saves-ibm...

Also, as someone who works with mainframes: if one wanted to, one could consolidate an entire data center onto one big z13.

ats - Friday, May 8, 2015

Um, I'm not sure you quite comprehend the scale of some of the datacenters out there. While the z13 is very nice, it's hardly a replacement for 10 racks of 8-socket Xeons.

usernametaken76 - Friday, May 8, 2015

That depends entirely on what those 10 racks' worth of systems are doing, what type of applications they are running, and at what utilization.

Mainframes are built to run at up to 100% utilization. Real-world x86 systems at or above 80% are either rendering video, doing HPC, or they have process control issues.

Real-world enterprise applications running in a virtualized environment are a more appropriate comparison. Everywhere I look, it's VMware at the moment.

Compare a PowerVM DLPAR to a VMware VM running Linux x64 for a fairer, real-world comparison.

melgross - Saturday, May 9, 2015

It isn't the same thing. Mainframes excel in I/O, which often trumps pure processing power. It's a very different environment.

ats - Saturday, May 9, 2015

Um, the days of mainframes having any real advantage in I/O are long gone, FYI.

Kevin G - Saturday, May 9, 2015

Sort of. Mainframes still farm off most I/O commands to dedicated coprocessors so that they don't eat away CPU cycles needed for running actual applications.

Mainframes also have dedicated hardware for encryption and compression. This is becoming more common in the x86 world on a per-drive basis, but the mainframe implements it at the system level so that any drive's data can be encrypted and compressed.

It is also because of these coprocessors that IBM's mainframe virtualization is so robust: even the hypervisor itself can be virtualized on top of another hypervisor without any slowdown in I/O or reduction in functionality.