Intel SSD 750 PCIe SSD Review: NVMe for the Client

by Kristian Vättö on April 2, 2015 12:00 PM EST

Sequential Read Performance

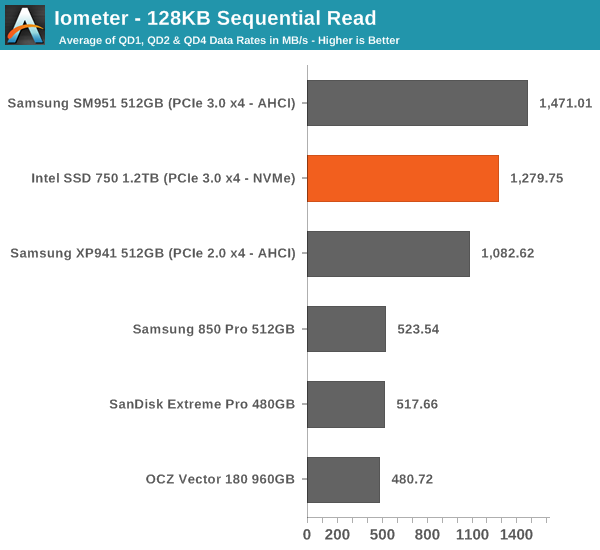

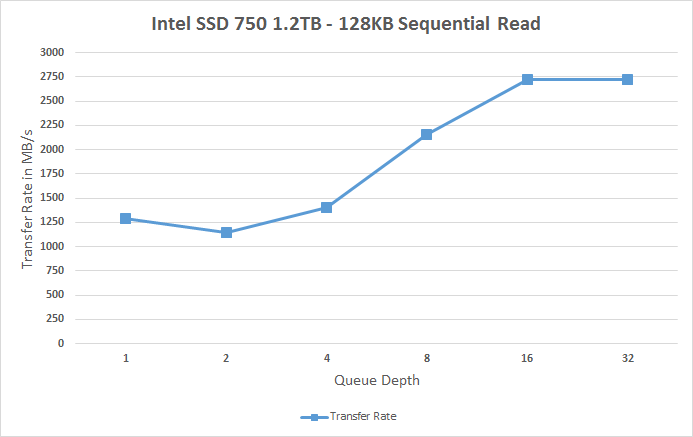

Our sequential tests are conducted in the same manner as our random IO tests. Each queue depth is tested for three minutes with no idle time between the tests, and the IOs are 4K aligned, similar to what you would experience in a typical desktop OS.
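To make the procedure concrete, here is a minimal Python sketch of how such a sweep could be laid out. It is not the Iometer configuration used for this review: the list of queue depths, the 128 KiB transfer size, and all function names are assumptions made for illustration. Only the three-minute step length, the lack of idle time between steps, and the 4K alignment come from the description above.

```python
from dataclasses import dataclass

ALIGNMENT_BYTES = 4096   # 4K alignment, as described in the text
STEP_SECONDS = 3 * 60    # three minutes per queue depth, as described in the text


@dataclass(frozen=True)
class Step:
    queue_depth: int
    start_s: int  # offset from the start of the run, in seconds
    end_s: int


def build_sweep(queue_depths):
    """Lay the steps out back to back, with no idle time between them."""
    steps = []
    t = 0
    for qd in queue_depths:
        steps.append(Step(qd, t, t + STEP_SECONDS))
        t += STEP_SECONDS
    return steps


def sequential_offsets(start, transfer_bytes, count):
    """Return the byte offsets of `count` back-to-back transfers."""
    if start % ALIGNMENT_BYTES or transfer_bytes % ALIGNMENT_BYTES:
        raise ValueError("start and transfer size must be 4K aligned")
    return [start + i * transfer_bytes for i in range(count)]


# Hypothetical values: neither the queue depths nor the transfer size
# are stated in the text above.
ASSUMED_QUEUE_DEPTHS = [1, 2, 4, 8, 16, 32]
ASSUMED_TRANSFER_BYTES = 128 * 1024

plan = build_sweep(ASSUMED_QUEUE_DEPTHS)
offsets = sequential_offsets(0, ASSUMED_TRANSFER_BYTES, 4)
```

Each step starts exactly where the previous one ends, which is what "no idle time" means in practice, and every offset is a multiple of 4096 bytes as long as the start and the transfer size are.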

In sequential read performance the SM951 keeps its lead. While the SSD 750 reaches up to 2.75GB/s at high queue depths, the scaling at small queue depths is very poor. I think this is an area where Intel should have put in a little more effort because it would translate to better performance in more typical workloads.


Sequential Write Performance

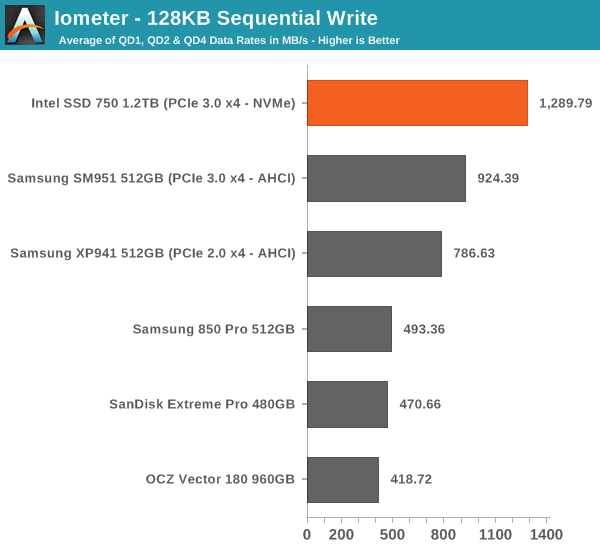

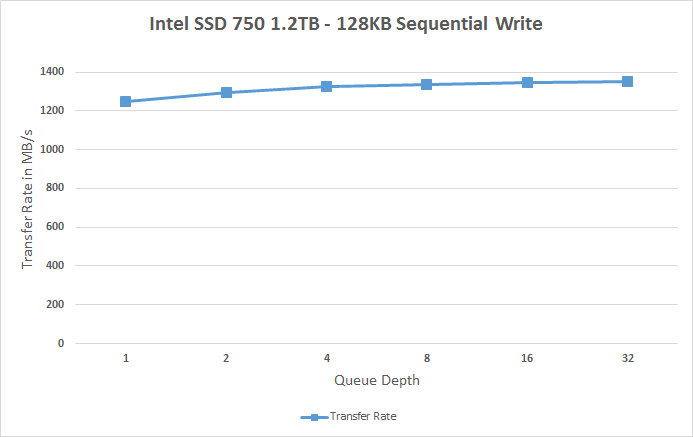

Sequential write testing differs from random testing in the sense that the LBA span is not limited. That's because sequential IOs don't fragment the drive, so the performance will be at its peak regardless.

The SSD 750 is faster in sequential writes than the SM951, but the difference isn't all that big when you take into account that the SSD 750 has more than twice the amount of NAND. The SM951 did have some throttling issues, as you can see in the graph below, and I bet that with a heatsink and proper cooling the two would be nearly identical because at a queue depth of 1 the SSD 750 is only marginally faster. It's again a bit disappointing that the SSD 750 isn't that well optimized for sequential IO because there's practically no scaling at all and the drive maxes out at ~1350MB/s.


132 Comments


knweiss - Thursday, April 2, 2015 - link

According to Semiaccurate the 400 GB drive has "only" 512 MB DRAM. (Unfortunately, ARK hasn't been updated yet so I can't verify.)

eddieobscurant - Thursday, April 2, 2015 - link

You're right it's probably 512mb for the 400gb model and 1gb for the 1.2tb model

Azunia - Thursday, April 2, 2015 - link

In PCPer's review of this drive, they actually talk about the problems of benchmarking this drive. (https://www.youtube.com/watch?v=ubxgTBqgXV8) Seems like some benchmarks like Iometer cannot actually feed the drive, due to being programmed with a single thread. Have you had similar experiences during benchmarking, or is their logic faulty?

Kristian Vättö - Friday, April 3, 2015 - link

I didn't notice anything that would suggest a problem with Iometer's capability of saturating the drive. In fact, Intel provided us Iometer benchmarking guidelines for the review, although they didn't really differ from what I've been doing for a while now.

Azunia - Friday, April 3, 2015 - link

Reread their article and it seems like the only problem is the Iometer's Fileserver IOPS Test, which peaks at around 200.000 IOPS. Since you don't use that one, that's probably the reason why you saw no problem.

Gigaplex - Thursday, April 2, 2015 - link

"so if you were to put two SSD 750s in RAID 0 the only option would be to use software RAID. That in turn will render the volume unbootable"

It's incredibly easy to use software RAID in Linux on the boot drives. Not all software RAID implementations are as limiting as Windows.

PubFiction - Friday, April 3, 2015 - link

"For better readability, I now provide bar graphs with the first one being an average IOPS of the last 400 seconds and the second graph displaying the standard deviation during the same period"

lol why not just portray standard deviation as error bars like they are supposed to be shown. Kudos for being one of the few sites to recognize this but what a convoluted senseless way of showing them.

Chloiber - Friday, April 3, 2015 - link

I think the practical tests of many other reviews show that the normal consumer has absolutely no benefit (except being able to copy files faster) from such an SSD. We have reached the peak a long time ago. SSDs are not the limiting factor anymore.

Still, it's great to see that we finally again see major improvements. It was always sad that all SSDs got limited by the interface. This was the case with SATA 2, it's the case with SATA 3.

akdj - Friday, April 3, 2015 - link

Thanks for sharing Kristian

Query about the throughput using these on external Thunderbolt docks and PCIe 'decks' (several new third party drive and GPU enclosures experimenting with the latter ...and adding powerful desktop cards {GPU} etc) ... Would there still be the 'bottleneck' (not that SLI nor Crossfire with the exception of the MacPro and how the two AMDs work together--would be a concern in OS X but Windows motherboards...) if you were to utilize the TBolt headers to the PCIe lane-->CPU? These seem like a better idea than external video cards for what I'm doing on the rMBPs. The GPUs are quick enough, especially in tandem with the IrisPro and its ability to 'calculate' ;) -- but a 2.4GB twin card RAID external box with a 'one cord' plug hot or cold would be SWEEET,

wyewye - Friday, April 3, 2015 - link

Kristian: test with QD128, moron, its NVM.

Anandtech becomes more and more idiotic: poor articles and crappy hosting, you have to real pages multiple times to access anything.

Go look at TSSDreview for a competent review.