The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Battlefield 4

Kicking off our 2015 benchmark suite is Battlefield 4, DICE’s 2013 multiplayer military shooter. After a rocky start, Battlefield 4 has since become a challenging game in its own right and a showcase title for low-level graphics APIs. Because these benchmarks are taken in single player mode, our rule of thumb, based on our experience, is that multiplayer framerates will dip to half our single player framerates. That means a card needs to average at least 60fps in our tests if it’s to hold up in multiplayer.

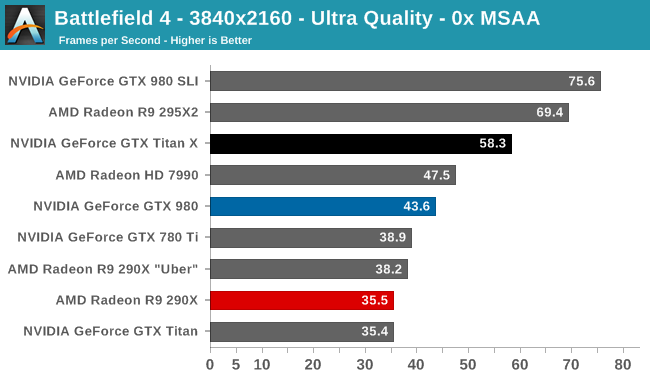

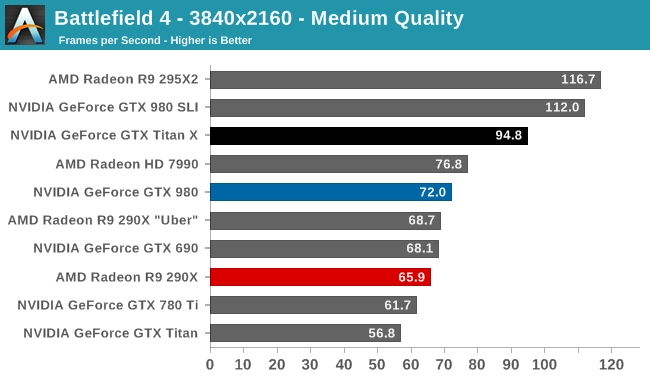

After stripping away the Frostbite engine’s expensive (and not wholly effective) MSAA, what we’re left with for BF4 at 4K with Ultra quality puts the GTX Titan X in a pretty good light. At 58.3fps it’s not quite up to the 60fps mark, but it comes very close, close enough that the GTX Titan X should be able to stay above 30fps virtually the entire time, and never drop too far below 30fps in even the worst case scenario. Alternatively, dropping to Medium quality should give the GTX Titan X plenty of headroom, with an average framerate of 94.8fps meaning even the lowest framerate never drops below 45fps.
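As a rough illustration of that rule of thumb, the sketch below halves the two single player averages quoted above. The function name and the 0.5 factor are our own shorthand for the rule, not measured multiplayer data, so treat the results as estimates only.

```python
def estimated_multiplayer_fps(single_player_fps, dip_factor=0.5):
    """Estimate a multiplayer framerate from a single player average.

    dip_factor=0.5 encodes the rule of thumb that multiplayer framerates
    dip to about half the single player figure. It is an assumption, not
    a measurement.
    """
    return single_player_fps * dip_factor


# Single player averages for the GTX Titan X at 4K, as quoted in the text.
results = {"Ultra quality": 58.3, "Medium quality": 94.8}

for setting, fps in results.items():
    estimate = estimated_multiplayer_fps(fps)
    print(f"{setting}: {fps:.1f}fps single player, ~{estimate:.2f}fps multiplayer estimate")
```

By this estimate Ultra quality lands just under 30fps in multiplayer while Medium quality stays above 45fps, which matches the reading of the charts given above.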

From a benchmarking perspective Battlefield 4 at this point is a well-optimized title that’s a pretty good microcosm of overall GPU performance. In this case we find that the GTX Titan X performs around 33% better than the GTX 980, which is almost exactly in line with our earlier performance predictions. Keep in mind that while GTX Titan X has 50% more execution units than GTX 980, it’s also clocked at around 88% of the GTX 980’s clockspeed, so 33% is right where we should be in a GPU-bound scenario.
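The arithmetic behind that expectation can be sketched as follows. This assumes throughput scales linearly with both execution unit count and clockspeed, which is a simplification that only roughly holds in a GPU-bound scenario; the function name is ours, and the two ratios are the ones quoted above.

```python
def expected_gain(unit_ratio, clock_ratio):
    """Expected performance gain over a baseline card.

    Assumes performance scales linearly with execution units and with
    clockspeed (a simplification, not a measured model).
    """
    return unit_ratio * clock_ratio - 1.0


# GTX Titan X relative to GTX 980: 50% more execution units,
# clocked at around 88% of the GTX 980's clockspeed.
gain = expected_gain(unit_ratio=1.5, clock_ratio=0.88)
print(f"Expected gain over GTX 980: about {gain:.0%}")
```

The product works out to 1.5 × 0.88 = 1.32, or a gain of about 32%, which is within a point of the roughly 33% lead we measured.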

Otherwise compared to the GTX 780 Ti and the original GTX Titan, the performance advantage at 4K is around 50% and 66% respectively. GTX Titan X is not going to double the original Titan’s performance – there’s only so much you can do without a die shrink – but it continues to be amazing just how much extra performance NVIDIA has been able to wring out without increasing power consumption and with only a minimal increase in die size.

On the broader competitive landscape, this is far from the Radeon R9 290X/290XU’s best title, with GTX Titan X leading by 50-60%. However this is also a showcase title for when AFR goes right, as the R9 295X2 and GTX 980 SLI both shoot well past the GTX Titan X, demonstrating the performance/consistency tradeoff inherent in multi-GPU setups.

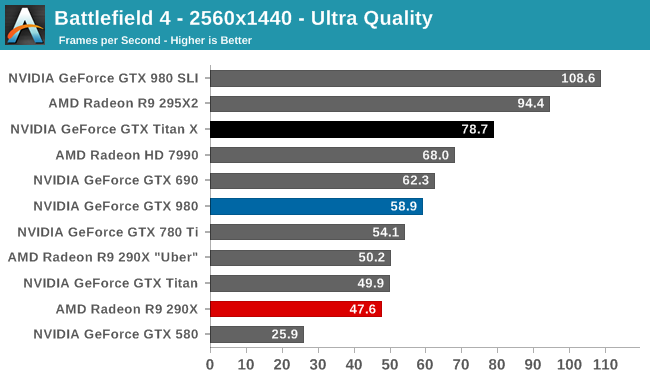

Finally, shifting gears for a moment, gamers looking for the ultimate 1440p card will not be disappointed. GTX Titan X will not get to 120fps here (it won’t even come close), but at 78.7fps it’s well suited for driving 1440p144 displays. In fact it’s the only single-GPU card to do better than 60fps at this resolution.

276 Comments

Kevin G - Tuesday, March 17, 2015

Last I checked, rectal limits are a bit north of 700 mm^2. However, nVidia is already in the crazy realm in terms of economics when it comes to supply/demand/yields/cost. Getting fully functional chips with die sizes over 600 mm^2 isn't easy. Then again, it isn't easy putting down $999 USD for a graphics card.

However, harvested parts should be quite plentiful and the retail price of such a card should be appropriately lower.

Michael Bay - Wednesday, March 18, 2015

>rectal limits are a bit north of 700 mm^2

Oh wow.

Kevin G - Wednesday, March 18, 2015

@Michael Bay

Intel's limit is supposed to be between 750 and 800 mm^2. They released a 699 mm^2 product commercially (Tukwila Itanium 2) a few years ago, so it can be done.

Michael Bay - Wednesday, March 18, 2015

>rectal limits

D. Lister - Wednesday, March 18, 2015

lol

chizow - Tuesday, March 17, 2015

Yes, it's clear Nvidia had to make sacrifices somewhere to maintain advancements on 28nm, and it looks like FP64/DP got the cut. I'm fine with it though; at least on GeForce products I don't want to pay a penny more for non-gaming features. If someone wants dedicated compute, go Tesla/Quadro.

Yojimbo - Tuesday, March 17, 2015

Kepler also has dedicated FP64 cores and from what I see in Anandtech articles, those cores are not used for FP32 calculations. How does NVIDIA save power with Maxwell by leaving FP64 cores off the die? The Maxwell GPUs seem to still be FP64 capable with respect to the number of FP64 cores placed on the die. It seems what they save by having fewer FP64 cores is die space and, as a result, the ability to have more FP32 cores. In other words, I haven't seen any information about Maxwell that leads me to believe they couldn't have added more FP64 cores when designing GM200 to make a GPU with superior double precision performance and inferior single precision performance compared with the configuration they actually chose for GM200. Maybe they just judged single precision performance to be more important to focus on than double precision, with a performance boost for double precision users having to wait until Pascal is released. Perhaps it was a choice between making a modest performance boost for both single and double precision calculations or making a significant performance boost for single precision calculations by forgoing double precision. Maybe they thought the efficiency gain of Maxwell could not carry sales on its own.

testbug00 - Tuesday, March 17, 2015

If this is a 250W card using about the same power as the 290x under gaming load, what does that make the 290x?

Creig - Tuesday, March 17, 2015

A very good value for the money.

shing3232 - Tuesday, March 17, 2015

Agree.