The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Overclocking

Finally, no review of a GTX Titan card would be complete without a look at overclocking performance.

From a design standpoint, GTX Titan X already ships close to its power limits. NVIDIA’s 250W TDP can only be raised another 10% – to 275W – meaning that in TDP limited scenarios there’s not much headroom to play with. On the other hand, with the stock voltage being so low, in clockspeed limited scenarios there’s a lot of room for pushing the performance envelope through overvolting. And neither of these options addresses the most potent aspect of overclocking, which is pushing the entire clockspeed curve higher at the same voltages by increasing the clockspeed offsets.

GTX 980 ended up being a very capable overclocker, and as we’ll see it’s much the same story for the GTX Titan X.

GeForce GTX Titan X Overclocking

| | Stock | Overclocked |
|---|---|---|
| Core Clock | 1002MHz | 1202MHz |
| Boost Clock | 1076MHz | 1276MHz |
| Max Boost Clock | 1215MHz | 1452MHz |
| Memory Clock | 7GHz | 7.8GHz |
| Max Voltage | 1.162V | 1.218V |

Even with 8B transistors packed into a 601mm² die, the GM200 GPU backing the GTX Titan X continues to offer the same kind of excellent overclocking headroom that we’ve come to see from the other Maxwell GPUs. Overall we have been able to increase our GPU clockspeed by 200MHz (20%) and the memory clockspeed by 800MHz (11%). At its peak this leads to the GTX Titan X pushing a maximum boost clock of 1.45GHz, and while TDP restrictions mean it can’t sustain this under most workloads, it’s still an impressive outcome for overclocking such a large GPU.
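For readers who want to check the percentages, the arithmetic can be reproduced with a short Python sketch. The clock and TDP figures are taken from this page; the dictionary layout and the `percent_gain` helper are illustrative names, not part of any tool used in the review.

```python
# Stock and overclocked figures from the overclocking table, in MHz.
clocks_mhz = {
    "Core Clock": (1002, 1202),
    "Boost Clock": (1076, 1276),
    "Max Boost Clock": (1215, 1452),
    "Memory Clock": (7000, 7800),
}

def percent_gain(stock, overclocked):
    """Return the relative increase over stock, in percent."""
    return (overclocked - stock) / stock * 100.0

gains = {name: percent_gain(s, o) for name, (s, o) in clocks_mhz.items()}

for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")

# TDP headroom: 250W stock, with the power target raised by at most 10%.
stock_tdp_w = 250
max_tdp_w = stock_tdp_w * 1.10
print(f"Max TDP: {max_tdp_w:.0f}W")
```

The core and memory results round to the 20% and 11% quoted above, and the power figure matches the 275W ceiling.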

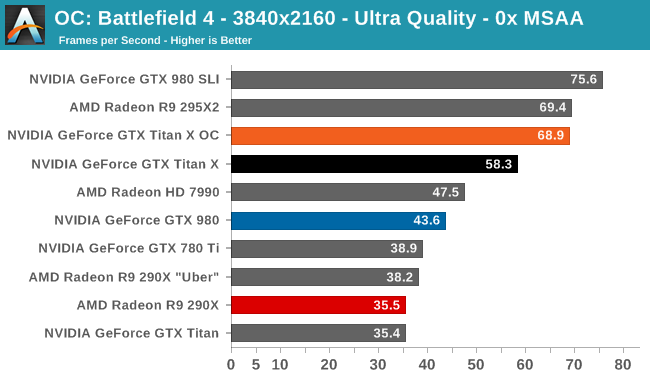

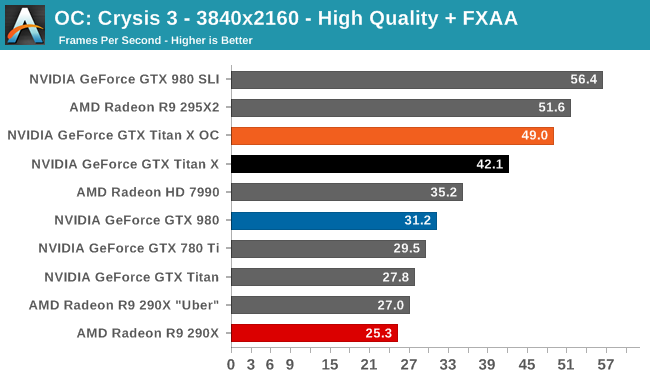

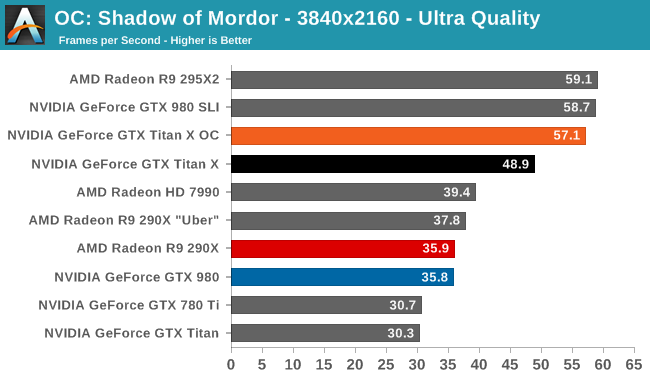

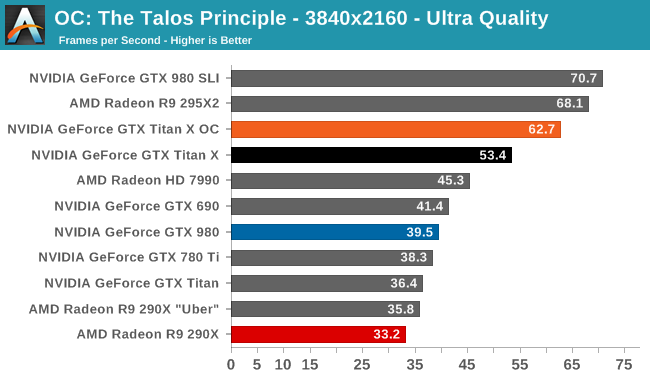

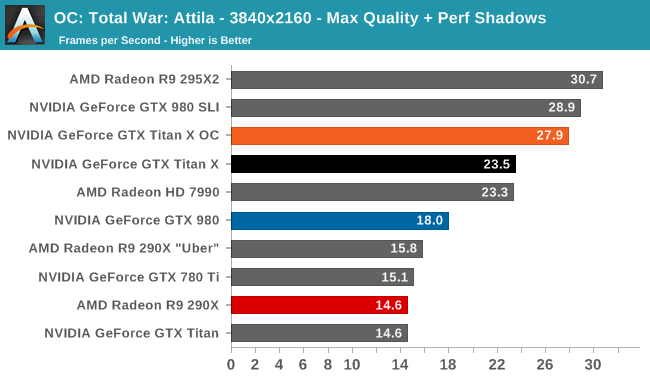

The performance gains from this overclock are a very consistent 16-19% across all 5 of our sample games at 4K, indicating that we're almost entirely GPU-bound as opposed to memory-bound. Though not quite enough to push the GTX Titan X above 60fps in Shadow of Mordor or Crysis 3, this puts it even closer than the GTX Titan X was at stock. Meanwhile we do crack 60fps on Battlefield 4 and The Talos Principle.
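One rough way to see why these gains point to a GPU-bound workload is to compare them against the size of each overclock. The sketch below is only a back-of-the-envelope heuristic under that assumption, not the method used for this review; `closer_to` is a hypothetical helper, and the 16% and 19% values are simply the ends of the range quoted above.

```python
# Clock figures from the overclocking table; observed range from the text.
core_gain_pct = (1202 - 1002) / 1002 * 100     # roughly 20%
memory_gain_pct = (7800 - 7000) / 7000 * 100   # roughly 11%
observed_gains_pct = [16.0, 19.0]              # low and high end at 4K

def closer_to(observed, core, memory):
    """Name the overclock (core or memory) whose size the observed gain is nearer to."""
    return "core" if abs(observed - core) < abs(observed - memory) else "memory"

nearest = [closer_to(g, core_gain_pct, memory_gain_pct) for g in observed_gains_pct]
# Fraction of the core clock increase that shows up as extra performance.
scaling = [g / core_gain_pct for g in observed_gains_pct]

for g, n, s in zip(observed_gains_pct, nearest, scaling):
    print(f"+{g:.0f}% observed: nearer the {n} overclock, {s:.0%} of the core clock gain")
```

Both ends of the range sit nearer the core overclock than the memory overclock, which is consistent with the games scaling mostly with GPU clockspeed.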

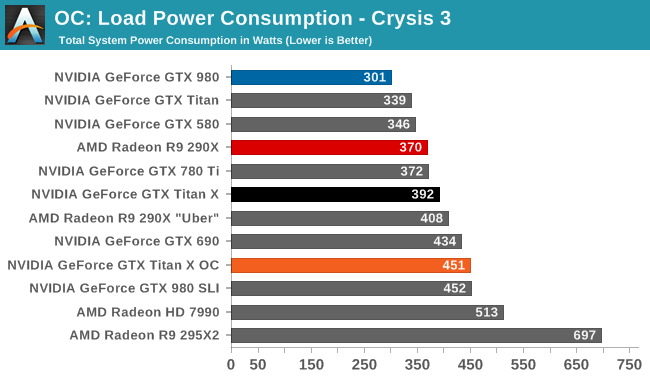

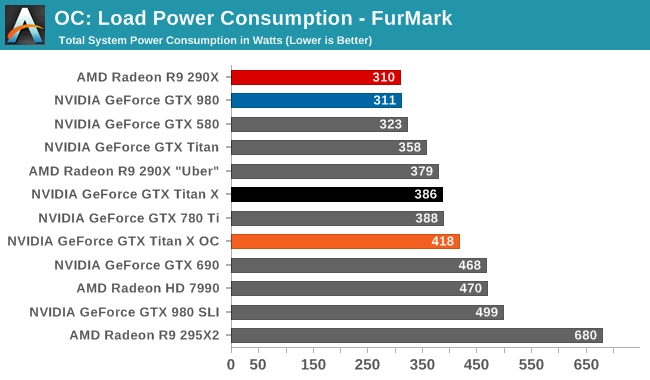

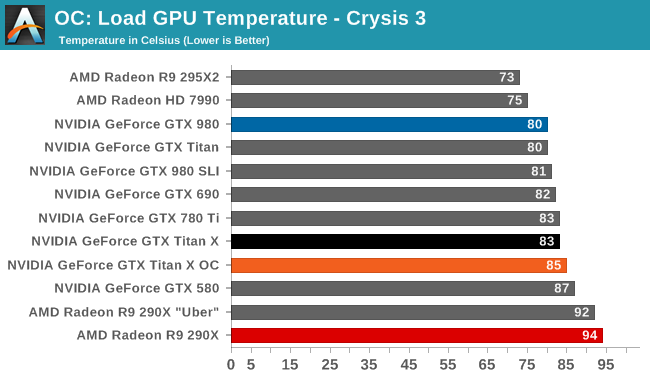

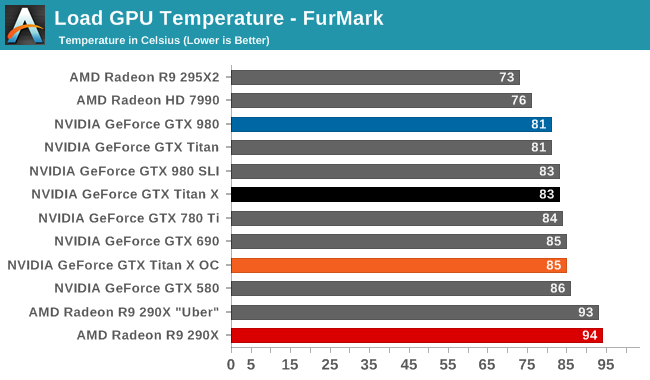

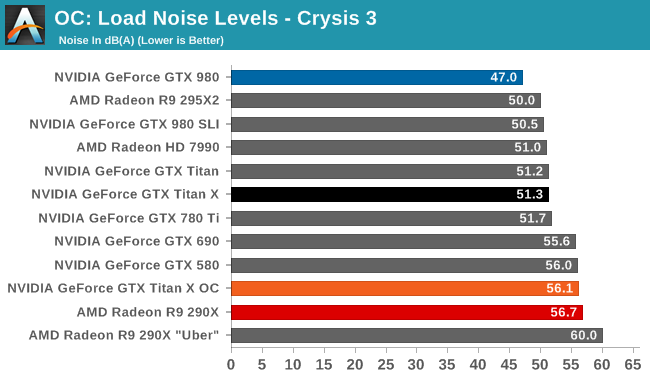

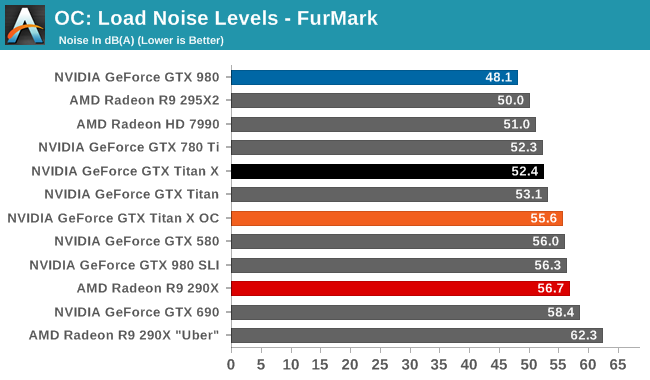

The tradeoff for this overclock is of course power and noise, both of which see significant increases. In fact the jump in power consumption with Crysis is a bit unexpected – further research shows that the GTX Titan X shifts from being temperature limited to TDP limited as a result of our overclocking efforts – while FurMark is in line with the 25W increase in TDP. The 55dB noise levels that result, though not extreme, also mean that GTX Titan X is drifting farther away from being a quiet card. Ultimately it’s a pretty straightforward tradeoff for a further 16%+ increase in performance, but a tradeoff nonetheless.

276 Comments

Denithor - Wednesday, March 18, 2015 - link

Correct, but then they should have priced it around $800, not $1k. The reason they could demand $1k for the original Titan was due to the FP64 compute functionality on board.

This is exactly what they did when they made the GTX 560 Ti, chopped out the compute features to maximize gaming power at a low cost. The reason that one was such a great card was due to price positioning, not just performance.

chizow - Monday, March 23, 2015 - link

@Denithor, I disagree, the reason they could charge $1K for the original Titan was because there was still considerable doubt there would ever be a traditionally priced GeForce GTX card based on GK110, the compute aspect was just add-on BS to fluff up the price.

Since then of course, they released not 1, but 2 traditional GTX cards (780 and Ti) that were much better received by the gaming market in terms of both price and in the case of the Ti, performance. Most notably was the fact the original Titan price on FS/FT and Ebay markets quickly dropped below that of the 780Ti. If the allure of the Titan was indeed for DP compute, it would have held its price, but the fact Titan owners were dumping their cards for less than what it cost to buy a 780Ti clearly showed the demand and price justification for a Titan for compute alone simply wasn't there. Also, important to note Titan's drivers were still GeForce, so even if it did have better DP performance, there were still a lot of driver limitations related to CUDA preventing it from reaching Quadro/Tesla levels of performance.

Simply put, Nvidia couldn't pull that trick again under the guise of compute this time around, and people like me who weren't willing to pay a penny for compute over gaming weren't willing to justify that price tag for features we had no use for. Titan X on the other hand, its 100% dedicated to gamers, not a single transistor budgeted for something I don't care about, and no false pretenses to go with it.

Samus - Thursday, March 19, 2015 - link

The identity crisis this card has with itself is that for all the effort, it's still slower than two 980's in SLI, and when overclocked to try to catch up to them, ends up using MORE POWER than two 980's in SLI.

So for the price (being identical) wouldn't you just pick up two 980's which offer more performance, less power consumption and FP64 (even if you don't need it, it'll help the resell value in the future)?

LukaP - Thursday, March 19, 2015 - link

The 980 have the same 1/32 DP performance as the Titan X. And Titan never was a sensible card. Noone sensible buys it over a x80 of that generation (which i assume will be 1080 or whatever they call it, based on GM200 with less ram, and maybe some disabled ROPs).

The Titan is a true flagship. making no sense economically, but increasing your penis size by miles

chizow - Monday, March 23, 2015 - link

I considered going this route but ultimately decided against it despite having used many SLI setups in the past. There's a number of things to like about the 980 but ultimately I felt I didn't want to be hamstrung by the 4GB in the future. There are already a number of games that push right up to that 4GB VRAM usage at 1440p and in the end I was more interested in bringing up min FPS than absolutely maxing out top-end FPS with 980 SLI.

Power I would say is about the same, 980 is super efficient but once overclocked, with 2 of them I am sure the 980 set-up would use as much if not more than the single Titan X.

naxeem - Saturday, March 21, 2015 - link

You're forgetting three things:

1. NO game uses even close to 8GB, let alone 12

2. $1000/1300€ puts it to exactly double the price of exactly the same performance level you get with any other solution: 970 SLI kicks it with $750, 295x2 does the same, 2x290X also...

In Europe, the card is even 30% more expensive than in US and than other cards so even less people will buy it there.

3. In summer, when AMD releases 390X for $700 and gives even better performance, Nvidia will either have to drop TitanX to the same price or suffer being smashed around at the market.

Keep in mind HBM is seriously a performance kicker for high resolutions, end-game gaming that TitanX is intended for. No amount of RAM can counter RAM bandwidth, especially when you don't really need over 6-7GB for even the most demanding games out there.

ArmedandDangerous - Saturday, March 21, 2015 - link

Or they could just say fuck it and keep the Titan at it's exact price and release a x80 GM200 at a lower price with some features cut that will still compete with whatever AMD has to offer. This is the 3rd Titan, how can you not know this by now.

naxeem - Tuesday, March 24, 2015 - link

Well, yes. But without any compute performance of previous Titans, who would any why buy a 1000 Titan X while having exact same performance in some 980Ti or alike?

Those who need 12GB for rendering may as well buy Quadros with more VRAM... When you need 12, you need more anyway... For gaming, 12GB means jack sht.

Thetrav55 - Friday, March 20, 2015 - link

Well its only the fastest card in the WORLD look at it that way the fattest card in the world ONLY 1000$ I know I know 1000 does not justify the performance but its the fastest card in the WORLD!!!

agentbb007 - Wednesday, June 24, 2015 - link

LOL had to laugh @ farealstarfareal's comment that the 390X would likely blow the doors off the Titan X, the 390X is nowhere near the Titan X, it's closer to a 980. The all mighty R9 FuryX reviews posted this morning and it's not even beating the 980ti.