The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

CPU Scaling

Diving into our look at DirectX 12, let’s start with what is going to be the most critical component for a benchmark like Star Swarm, the CPU scaling.

Because Star Swarm is designed to exploit the threading inefficiencies of DirectX 11, the biggest gains from switching to DirectX 12 on Star Swarm come from removing the CPU bottleneck. Under DirectX 11 the bulk of Star Swarm’s batch submission work happens under a single thread, and as a result the benchmark is effectively bottlenecked by single-threaded performance, unable to scale out with multiple CPU cores. This is one of the issues DirectX 12 sets out to resolve, with the low-level API allowing Oxide to more directly control how work is submitted, and as such better balance it over multiple CPU cores.
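To make the contrast concrete, here is a minimal Python sketch of the two submission patterns described above. It is not Oxide's code and it does not use the DirectX API: `record_draw`, `submit_single_threaded`, and `submit_multi_threaded` are hypothetical names, and a "command" is just a tuple. Python threads will not show a real speedup for this kind of work, so treat it purely as an illustration of how the work is partitioned.

```python
from concurrent.futures import ThreadPoolExecutor

def record_draw(object_id):
    """Hypothetical stand-in for the CPU work of recording one draw call."""
    return ("draw", object_id)

def submit_single_threaded(object_ids):
    """Single-thread pattern: one thread records every draw in sequence."""
    return [record_draw(i) for i in object_ids]

def submit_multi_threaded(object_ids, workers):
    """Multi-core pattern: each worker records its own command list,
    and the lists are then handed over in a fixed order."""
    # Ceiling division, with a floor of 1 so an empty scene does not break range().
    chunk = max(1, -(-len(object_ids) // workers))
    chunks = [object_ids[i:i + chunk] for i in range(0, len(object_ids), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        command_lists = list(pool.map(submit_single_threaded, chunks))
    # Concatenate per-worker lists in chunk order so the result is deterministic.
    return [cmd for command_list in command_lists for cmd in command_list]

ids = list(range(100_000))
```

Both functions produce the same ordered list of commands; the only difference is how many threads share the recording work, which is the part a low-level API leaves in the developer's hands.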

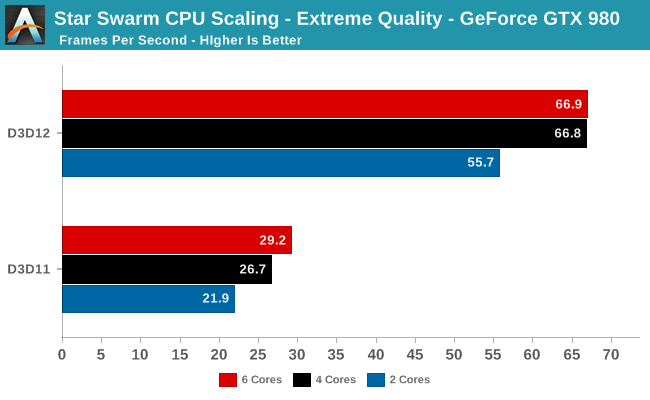

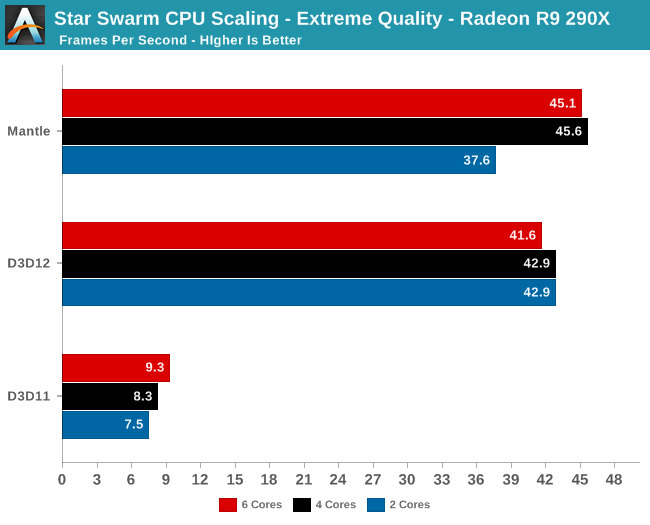

Starting with a look at CPU scaling on our fastest cards, what we find is that besides the absurd performance difference between DirectX 11 and DirectX 12, performance scales roughly as we’d expect among our CPU configurations. Star Swarm's DirectX 11 path, being bound by single-threaded performance, scales only very slightly with clockspeed and core count increases. The DirectX 12 path on the other hand scales up moderately well from 2 to 4 cores, but doesn’t scale up beyond that. This is because at these settings, even pushing over 100K draw calls, both cards are solidly GPU limited. Anything more than 4 cores goes to waste as we’re no longer CPU-bound, which means that we don’t even need a highly threaded processor to take advantage of DirectX 12’s strengths in this scenario; even a 4 core processor provides plenty of kick.
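The plateau past 4 cores can be pictured with a toy model in which the slower of the CPU and the GPU sets the frame time. This is only a sketch: the function name is hypothetical, the model assumes CPU work splits perfectly across cores, and the millisecond figures are made-up inputs chosen for illustration, not measurements from our testing.

```python
def frames_per_second(cpu_ms_per_frame, gpu_ms_per_frame, cores):
    """Toy model: CPU work divides evenly across cores, and the slower
    of the CPU and GPU stages determines the frame time."""
    cpu_ms = cpu_ms_per_frame / cores
    frame_ms = max(cpu_ms, gpu_ms_per_frame)
    return 1000.0 / frame_ms

# Made-up inputs: 40 ms of parallelizable CPU work and 12.5 ms of GPU work per frame.
for cores in (2, 4, 6):
    print(cores, round(frames_per_second(40.0, 12.5, cores), 1))
```

With these illustrative inputs the model is CPU-bound at 2 cores (50 fps) and GPU-bound at 4 cores (80 fps), and adding a further 2 cores changes nothing, which is the shape of the scaling described above.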

Meanwhile this setup also highlights the fact that under DirectX 11, there is a massive difference in performance between AMD and NVIDIA. In both cases we are completely CPU bound, with AMD’s drivers only able to deliver 1/3rd the performance of NVIDIA’s. Given that this is the original Mantle benchmark I’m not sure we should read into the DirectX 11 situation too much since AMD has little incentive to optimize for this game, but there is clearly a massive difference in CPU efficiency under DirectX 11 in this case.

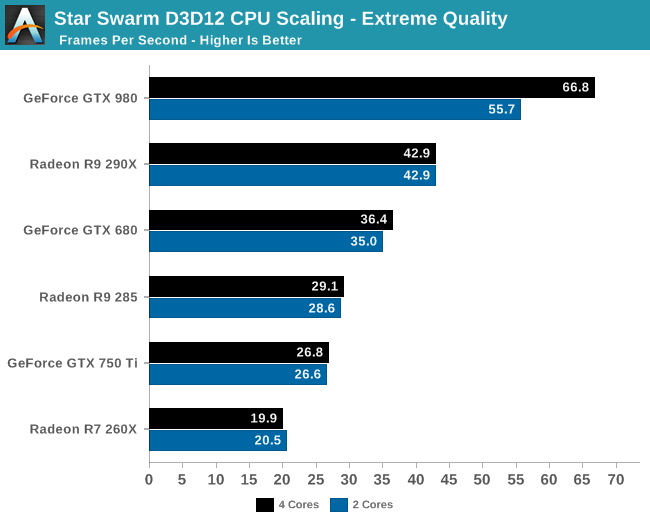

Having effectively ruled out the need for 6 core CPUs for Star Swarm, let’s take a look at a breakdown across all of our cards for performance with 2 and 4 cores. What we find is that Star Swarm and DirectX 12 are so efficient that only our most powerful card, the GTX 980, finds itself CPU-bound with just 2 cores. For the AMD cards and other NVIDIA cards we can get GPU bound with the equivalent of an Intel Core i3 processor, showcasing just how effective DirectX 12’s improved batch submission process can be. In fact it’s so efficient that Oxide is running both batch submission and a complete AI simulation over just 2 cores.

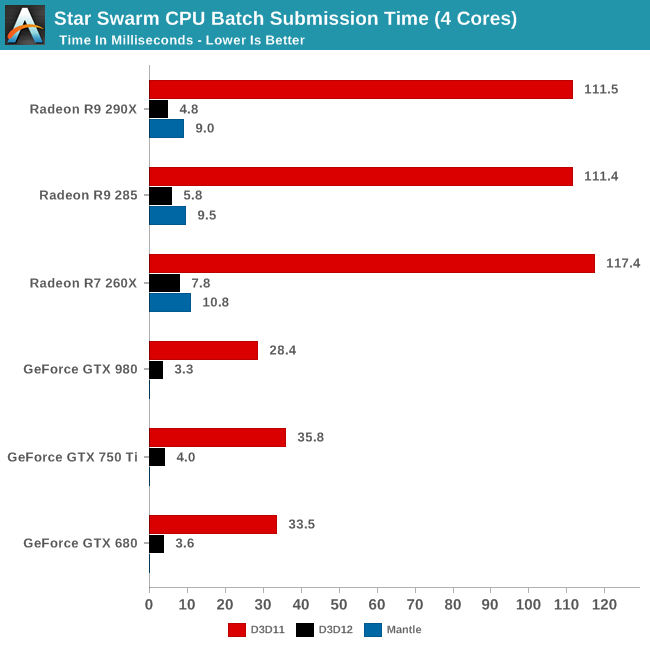

Speaking of batch submission, if we look at Star Swarm’s statistics we can find out just what’s going on with batch submission. The results are nothing short of incredible, particularly in the case of AMD. Batch submission time is down from dozens of milliseconds or more to just 3-5ms for our fastest cards, an improvement of just over a whole order of magnitude. For all practical purposes the need to spend CPU time to submit batches has been eliminated entirely, with upwards of 120K draw calls being submitted in a handful of milliseconds. It is this optimization that is at the core of Star Swarm’s DirectX 12 performance improvements, and going forward it could potentially benefit many other games as well.
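For a sense of scale, the arithmetic behind those figures can be sketched as follows. The 3-5ms and 120K draw call values come from the text above; the 40ms "before" value is an assumed placeholder for "dozens of milliseconds", not a measured result, and both function names are hypothetical.

```python
def per_call_microseconds(submission_ms, draw_calls):
    """Average CPU submission cost per draw call, in microseconds."""
    return submission_ms * 1000.0 / draw_calls

def speedup(before_ms, after_ms):
    """How many times faster the new submission time is."""
    return before_ms / after_ms

DRAW_CALLS = 120_000

# Range quoted in the text for the fastest cards under DirectX 12.
low = per_call_microseconds(3.0, DRAW_CALLS)
high = per_call_microseconds(5.0, DRAW_CALLS)

# Assumed placeholder: 40 ms standing in for "dozens of milliseconds".
assumed_gain = speedup(40.0, 4.0)
```

Under those assumptions, each draw call costs a few hundredths of a microsecond of submission time on average, and a drop from 40ms to 4ms would be a tenfold improvement.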

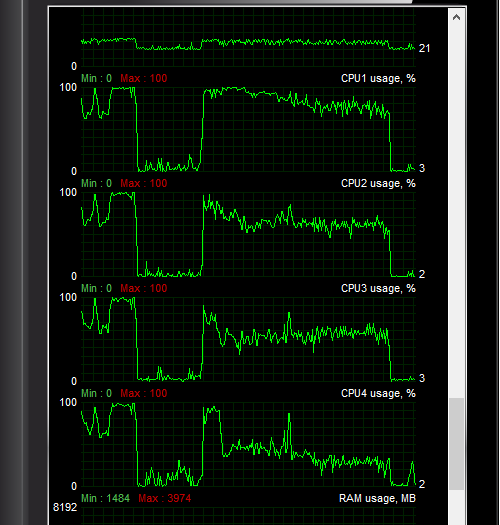

Another metric we can look at is actual CPU usage as reported by the OS, as shown above. In this case CPU usage more or less perfectly matches our earlier expectations: with DirectX 11 both the GTX 980 and R9 290X show very uneven usage with 1-2 cores doing the bulk of the work, whereas with DirectX 12 CPU usage is spread out evenly over all 4 CPU cores.

At the risk of belaboring the point, what we’re seeing here is exactly why Mantle, DirectX 12, OpenGL Next, and other low-level APIs have been created. With single-threaded performance struggling to increase while GPUs continue to improve by leaps and bounds with each generation, something must be done to allow games to better spread out their rendering & submission workloads over multiple cores. The solution to that problem is to eliminate the abstraction and let the developers do it themselves through APIs like DirectX 12.

245 Comments


lilmoe - Friday, February 6, 2015 - link

These tests should totally NOT be done on a Core i7....

tipoo - Friday, February 6, 2015 - link

He also has an i5 and i3 in there...?

Gigaplex - Sunday, February 8, 2015 - link

He has an i7 with some cores disabled to simulate i5 and i3.

ColdSnowden - Friday, February 6, 2015 - link

Why do AMD radeons have a much slower batch submission time? Does that mean that using an Nvidia card with a faster batch submission time can lessen cpu bottlenecking, even if DX11 is held constant?

junky77 - Friday, February 6, 2015 - link

Also, what about testing with AMD APUs and/or CPUs.. this could make a change in this area

WaltC - Friday, February 6, 2015 - link

Very good write-up! My own thought about Mantle is that AMD pushed it to light a fire under Microsoft and get the company stimulated again as to D3d and the Windows desktop--among other things. Prior to AMD's Mantle announcement it wasn't certain if Microsoft was ever going to do another version of D3d--the scuttlebutt was "No" from some quarters, as hard as that was to believe. At any rate, as a stimulus it seems to have worked as a couple of months after the Mantle announcement Microsoft announces D3d/DX12, the description of which sounded suspiciously like Mantle. I think that as D3d moves ahead and continues to develop that Mantle will sort of hit the back burners @ AMD and then, like an old soldier, just fade away...;) Microsoft needs to invest heavily in DX/D3d development in the direction they are moving now, and I think as the tablet fad continues to wane and desktops continue to rebound that Microsoft is unlikely to forget its core strengths again--which means robust development for D3d for many years to come. Maximizing hardware efficiencies is not just great for lower-end PC gpus & cpus, it's also great for xBone & Microsoft's continued push into mobile devices. Looks like clear sailing ahead...;)

Ryan Smith - Saturday, February 7, 2015 - link

"Prior to AMD's Mantle announcement it wasn't certain if Microsoft was ever going to do another version of D3d"

Although I don't have access to the specific timelines, I would strongly advise against conflating the public API announcements with development milestones.

Mike Mantor (AMD) and Max McMullen (MS) may not go out and get microbrews together, but all of the major players in this industry are on roughly the same page. DX12 would have been on the drawing board before the public announcement of Mantle. Though I couldn't say which API was on the drawing board first.

at80eighty - Friday, February 6, 2015 - link

Great article. Loved the "first thoughts" ending

HisDivineOrder - Friday, February 6, 2015 - link

I have to say, I expected Mantle to do a LOT better for AMD than DX12 would simply because it would be more closely tailored to the architecture. I mean, that's what AMD's been saying forever and a day now. It just doesn't look true, though.

Perhaps it's AMD sucks at optimization because Mantle looks as optimized for AMD architecture as DX11 is in comparison to their overall gains. Meanwhile, nVidia looks like they've been using all this time waiting on DX12 to show up to really hone their drivers to fighting shape.

I guess this is what happens when you fire large numbers of your employees without doing much about the people directing them whose mistakes are the most reflected by flagging sales. Then you go and hire a few big names, trumpet those names, but you don't have the "little people" to back them up and do incredible feats.

Then again, it probably has to do with the fact that nVidia's released a whole new generation of product while AMD's still using the same product from two years ago that they've stretched thin across multiple quarters via delayed releases and LOTS of rebrands and rebundling.

Jumangi - Saturday, February 7, 2015 - link

No it was because Mantle only worked on AMD cards and NVidia has about 2/3 of the discrete graphics card market so most developers never bothered. Mantle never had a chance for widespread adoption.