6 TB NAS Drives: WD Red, Seagate Enterprise Capacity and HGST Ultrastar He6 Face-Off

by Ganesh T S on July 21, 2014 11:00 AM EST

Miscellaneous Aspects and Final Words

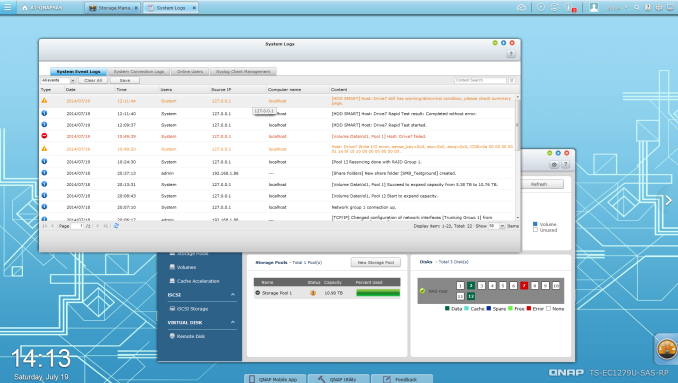

While reviewing the Western Digital Red 6 TB drives, we ran into one hiccup. Our QNAP testbed NAS finished resyncing a RAID-5 volume built from three of those drives, but then suddenly reported an I/O error on one of them.

We were a bit surprised: in all our experience with hard drive review units, we had never had one fail that quickly. To look into the issue, we ran the SMART diagnostics and a short self-test from within the NAS UI. Even though both passed cleanly, the NAS still refused to accept the disk back into the RAID volume. Fortunately, we had a spare drive that we could use to rebuild the volume. Putting the 'failed' drive in a PC didn't reveal any problems either. We are chalking this up to compatibility issues, though it is strange that the rebuilt volume with the same disks completed benchmarking without any problems. In any case, I would advise prospective buyers to confirm that their NAS is on the drive's compatibility list before going ahead with the purchase.

RAID Resync and Power Consumption

The other aspects of interest when it comes to hard drives and NAS units are the RAID rebuild / resync times and the associated power consumption. The following table presents the relevant values for resyncing a RAID-5 volume built from each of the drives.

QNAP TS-EC1279U-SAS-RP RAID-5 Volume Resync

| Disk Model | Duration | Avg. Power |
|---|---|---|
| Western Digital Red 6 TB | 14h 27m 52s | 90.48 W |
| Seagate Enterprise Capacity 3.5" HDD v4 6 TB | 10h 24m 22s | 105.42 W |
| HGST Ultrastar He6 6 TB | 12h 34m 20s | 95.36 W |
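Duration and average power can be combined into energy per resync (energy = average power × duration). The sketch below does that arithmetic on the table values. It assumes "Avg. Power" is the whole-NAS draw averaged over the resync, and its rough per-drive throughput figure further assumes that the resync sweeps the full 6 TB (6 × 10^12 bytes) of every member drive; neither assumption is stated in the table itself.

```python
"""Energy used per RAID-5 resync, derived from the resync table.

Assumptions: 'Avg. Power' is the whole-NAS draw averaged over the resync,
and the resync sweeps the full decimal 6 TB of each member drive.
"""

DRIVE_BYTES = 6e12  # assumed bytes swept per drive (decimal 6 TB)

# (duration as (h, m, s), average power in watts), copied from the table
RESYNC = {
    "WD Red 6 TB": ((14, 27, 52), 90.48),
    "Seagate Enterprise Capacity v4 6 TB": ((10, 24, 22), 105.42),
    "HGST Ultrastar He6 6 TB": ((12, 34, 20), 95.36),
}


def to_seconds(h: int, m: int, s: int) -> int:
    """Convert an hours/minutes/seconds duration to seconds."""
    return h * 3600 + m * 60 + s


def resync_summary(duration, avg_watts):
    """Return (seconds, energy in kWh, rough per-drive MB/s)."""
    secs = to_seconds(*duration)
    energy_kwh = avg_watts * secs / 3.6e6  # watt-seconds (J) -> kWh
    mb_per_s = DRIVE_BYTES / secs / 1e6    # assumed full-drive sweep rate
    return secs, energy_kwh, mb_per_s


if __name__ == "__main__":
    for name, (duration, watts) in RESYNC.items():
        secs, kwh, rate = resync_summary(duration, watts)
        print(f"{name}: {secs} s, {kwh:.2f} kWh, ~{rate:.0f} MB/s per drive")
```

On these figures, the WD Red has the lowest average draw but uses the most energy per resync (about 1.31 kWh, versus roughly 1.10 kWh for the Seagate and 1.20 kWh for the HGST), because its resync takes the longest.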

Update: We also have power consumption numbers for several different workloads. In each case, three of the drives under consideration are configured as a RAID-5 volume in the NAS, and the workload is generated by running the corresponding IOMeter trace from 25 clients simultaneously.

QNAP TS-EC1279U-SAS-RP RAID-5 Power Consumption

| Workload | WD Red 6 TB | Seagate Enterprise Capacity 3.5" HDD v4 6 TB | HGST Ultrastar He6 6 TB |
|---|---|---|---|
| Idle | 79.34 W | 87.16 W | 84.98 W |
| Max. Throughput (100% Reads) | 93.90 W | 107.22 W | 97.58 W |
| Real Life (60% Random, 65% Reads) | 84.04 W | 109.25 W | 94.03 W |
| Max. Throughput (50% Reads) | 96.74 W | 112.82 W | 99.25 W |
| Random 8 KB (70% Reads) | 85.22 W | 105.65 W | 91.47 W |

As expected, the Seagate Enterprise Capacity 3.5" HDD v4 consumes the most power, while the He6, spinning at the same rotational speed, is much better off thanks to its HelioSeal technology. The WD Red, on the other hand, wins the power efficiency battle, which is good news for home consumers who value that over pure performance.
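Because every configuration in the power table uses the same NAS with three drives, the gap between two columns can be read as a per-drive difference. The sketch below makes that reading explicit. It assumes, as an illustration, that the whole-NAS difference is entirely due to the drives and splits evenly across the three of them; the table does not break out per-drive power.

```python
"""Per-drive power difference versus the WD Red, from the RAID-5 power table.

Assumption: same NAS and three drives in every configuration, so the
whole-NAS difference is attributed to the drives and split evenly.
"""

DRIVES_IN_VOLUME = 3

# Whole-NAS power in watts: (WD Red, Seagate EC v4, HGST He6)
POWER_W = {
    "Idle": (79.34, 87.16, 84.98),
    "Max. Throughput (100% Reads)": (93.90, 107.22, 97.58),
    "Real Life (60% Random, 65% Reads)": (84.04, 109.25, 94.03),
    "Max. Throughput (50% Reads)": (96.74, 112.82, 99.25),
    "Random 8 KB (70% Reads)": (85.22, 105.65, 91.47),
}


def per_drive_delta(wd: float, other: float) -> float:
    """Extra watts per drive relative to the WD Red configuration."""
    return (other - wd) / DRIVES_IN_VOLUME


if __name__ == "__main__":
    for workload, (wd, seagate, hgst) in POWER_W.items():
        print(f"{workload}: Seagate +{per_drive_delta(wd, seagate):.2f} W/drive, "
              f"HGST +{per_drive_delta(wd, hgst):.2f} W/drive")
```

Under that assumption, the widest gap appears in the Real Life trace: roughly 8.4 W per drive for the Seagate and 3.3 W per drive for the HGST.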

Concluding Remarks

We have taken a look at three different 6 TB drives, but it is hard to recommend any one of them as the clear-cut choice without knowing the intended application. Interestingly, the three drives target distinct use-cases. For home consumers who want to stash their media collection or smartphone-captured photos and videos, and who expect only four or five clients to access the NAS simultaneously, the lower power consumption and price of the WD Red 6 TB are hard to ignore. Users looking for absolute performance, and those who need multiple iSCSI LUNs for virtual machines and similar applications, would find the Seagate Enterprise Capacity v4 6 TB a good choice. The HGST Ultrastar He6 is built on newer technology (HelioSeal), and hence carries a premium. However, the total cost of ownership (TCO) works out in its favour, particularly when multiple drives need to run 24x7. It offers the best balance of power consumption, price and performance.
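The energy part of the TCO argument is a matter of annualizing the measured power. The sketch below converts the whole-NAS Idle and Real Life figures into kWh per year of 24x7 operation. The electricity price (`PRICE_PER_KWH`) is a hypothetical placeholder, and the sketch ignores purchase price, performance and failure rates, so it covers only one input to the TCO comparison above.

```python
"""Rough annual energy use and cost of the three-drive RAID-5 NAS run 24x7.

Hypothetical assumptions: the NAS stays at one measured power level all
year, and electricity costs PRICE_PER_KWH. Only the energy part of TCO.
"""

HOURS_PER_YEAR = 24 * 365
PRICE_PER_KWH = 0.12  # hypothetical tariff; substitute your local rate

# Whole-NAS watts from the power table: (Idle, Real Life trace)
NAS_WATTS = {
    "WD Red 6 TB": (79.34, 84.04),
    "Seagate Enterprise Capacity v4 6 TB": (87.16, 109.25),
    "HGST Ultrastar He6 6 TB": (84.98, 94.03),
}


def annual_kwh(watts: float) -> float:
    """Energy in kWh for a constant draw over one non-leap year."""
    return watts * HOURS_PER_YEAR / 1000


def annual_cost(watts: float, price_per_kwh: float = PRICE_PER_KWH) -> float:
    """Annual energy cost at the given (hypothetical) tariff."""
    return annual_kwh(watts) * price_per_kwh


if __name__ == "__main__":
    for name, (idle_w, busy_w) in NAS_WATTS.items():
        print(f"{name}: idle {annual_kwh(idle_w):.0f} kWh "
              f"(~{annual_cost(idle_w):.2f}/yr), "
              f"real-life load {annual_kwh(busy_w):.0f} kWh "
              f"(~{annual_cost(busy_w):.2f}/yr)")
```

For example, the 15.22 W whole-NAS gap between the Seagate and HGST volumes in the Real Life trace works out to about 133 kWh per year for a single three-drive NAS, and the gap scales with the number of such units running around the clock.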

83 Comments


brettinator - Friday, March 18, 2016

I realize this is years old, but I did indeed use raw I/O on a fried 10TB RAID 6 volume to recover copious amounts of source code.

andychow - Monday, November 24, 2014

@extide, you've just shown that you don't understand how it works. You're NEVER going to have checksum errors if your data is being corrupted by your RAM. That's why you need ECC RAM, so errors don't happen "up there". You might have tons of corrupted files, you just don't know it. 4 GB of RAM has a 96% chance of having a bit error in three days without ECC RAM.
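The point about RAM corruption can be illustrated with a toy model. This is not how ZFS is implemented: SHA-256 from Python's `hashlib` stands in for whatever checksum a filesystem actually uses, the "disk" is a plain dict, and the helper names are hypothetical. What the model shows is that a checksum computed at write time catches a bit flip that happens on disk afterwards, but matches perfectly if the bit flipped in memory before the checksum was computed.

```python
"""Toy model: an end-to-end checksum cannot catch corruption that happens
in RAM before the checksum is computed. Illustration only."""
import hashlib


def checksum(block: bytes) -> str:
    """Stand-in checksum (SHA-256), not a real filesystem's algorithm."""
    return hashlib.sha256(block).hexdigest()


def flip_bit(block: bytes, bit: int) -> bytes:
    """Return a copy of block with a single bit inverted."""
    data = bytearray(block)
    data[bit // 8] ^= 1 << (bit % 8)
    return bytes(data)


def write_block(disk: dict, key: str, block: bytes) -> None:
    """Store the block along with a checksum of whatever is in memory now."""
    disk[key] = (block, checksum(block))


def read_ok(disk: dict, key: str) -> bool:
    """Verify the stored block against its stored checksum."""
    block, stored = disk[key]
    return checksum(block) == stored


if __name__ == "__main__":
    original = b"important source code"

    # Case 1: a bit flips on disk after the write; verification fails.
    disk = {}
    write_block(disk, "a", original)
    block, stored = disk["a"]
    disk["a"] = (flip_bit(block, 3), stored)
    print("on-disk corruption detected:", not read_ok(disk, "a"))

    # Case 2: a bit flips in RAM before the write; the checksum matches
    # the already-corrupted data, so verification passes.
    disk = {}
    write_block(disk, "b", flip_bit(original, 3))
    print("in-RAM corruption detected:", not read_ok(disk, "b"))
    print("stored data differs from original:", disk["b"][0] != original)
```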

alpha754293 - Monday, July 21, 2014

Yeah... while the official docs say you "need" ECC, the truth is you really don't. It's nice, and it'll help mitigate things like bit-flip errors, but by that point you're already passing PBs of data through the array/zpool before it's even noticeable. Part of that has to do with the fact that ZFS does block-by-block checksumming, which means that, given how people run their systems, it'll probably reduce your error rates even further, but you might be talking about a third of what's already an INCREDIBLY small percentage.

A system will NEVER complain if you have ECC RAM with ECC enabled, but it also isn't going to refuse to start up if you have ECC RAM with ECC disabled (my servers have ECC RAM, but I've always disabled ECC in the BIOS).

And so far, I haven't seen ANY discernable evidence that suggests that ECC is an absolute must when running ZFS, and you can SAY that I am wrong, but you will also need to back that statement up with evidence/data.

AlmaFather - Monday, July 28, 2014

Some information: http://forums.freenas.org/index.php?threads/ecc-vs...

Samus - Monday, July 21, 2014

The problem with power-saving "green" style drives is that the APM is too aggressive. Even Seagate, which doesn't actively manufacture a "green" drive at a hardware level, uses firmware that sets aggressive APM values in many low-end and external versions of its drives, including the Barracuda XT.

This is a completely unacceptable practice because the drives are effectively self-destructing. Most consumer drives are rated at 250,000 load/unload cycles, and I've racked up 90,000 cycles in a matter of MONTHS on drives with heavy I/O (seeding torrents, SQL databases, Exchange servers, etc.).

HDPARM is a tool that can send ATA commands to a drive; you can use it to disable APM (by setting the value to 255), overriding the firmware value. At least until the next power cycle...
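The hdparm step can be sketched as a small helper that builds the command without running it. Per the hdparm manual, `-B` accepts 1 to 255: 1 to 127 permit spin-down, 128 to 254 do not, and 255 disables APM on drives that allow it. The helper name and the device path `/dev/sdX` are hypothetical; actually applying the setting requires root, and it usually has to be reapplied after each power cycle.

```python
"""Build (but do not run) an hdparm command that sets a drive's APM level.

apm_command and /dev/sdX are hypothetical placeholders for illustration.
"""
import shlex
from typing import List


def apm_command(device: str, level: int = 255) -> List[str]:
    """Return the argv for 'hdparm -B <level> <device>'."""
    if not 1 <= level <= 255:
        raise ValueError("APM level must be between 1 and 255")
    return ["hdparm", "-B", str(level), device]


if __name__ == "__main__":
    cmd = apm_command("/dev/sdX")  # hypothetical device node
    print(shlex.join(cmd))
    # To apply it (as root): subprocess.run(cmd, check=True)
```

Returning an argument list rather than a shell string keeps the device path from being reinterpreted by a shell if the command is later passed to `subprocess.run`.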

name99 - Tuesday, July 22, 2014

I don't know if this is the ONLY problem.

My most recent (USB3 Seagate 5TB) drive consistently exhibited a strange failure mode where it frequently seemed to disconnect from my Mac. Acting on a hunch, I disabled the OSX Energy Saver "Put hard disks to sleep when possible" setting, and the problem went away. (And energy usage hasn't gone up, because the Seagate drive puts itself to sleep anyway.)

Now you're welcome to read this as "Apple sux, obviously they screwed up" if you like. I'd disagree with that interpretation given that I've connected dozens of different disks from different vendors to different macs and have never seen this before. What I think is happening is Seagate is not handling a race condition well --- something like "Seagate starts to power down, half-way through it gets a command from OSX to power down, and it mishandles this command and puts itself into some sort of comatose mode that requires power cycling".

I appreciate that disk firmware is hard to write, and that power management is tough. Even so, it's hard not to get angry at what seems like pretty obvious incompetence in the code coupled to an obviously not very demanding test regime.

jay401 - Tuesday, July 22, 2014

> Completely unsurprised here, I've had nothing but bad luck with any of those "intelligent power saving" drives that like to park their heads if you aren't constantly hammering them with I/O.

I fixed that the day I bought mine with the wdidle utility. No more excessive head parking, no excessive wear. I've had three 2TB Greens and two 3TB Greens with no issues so far (thankfully). Currently running a pair of 4TB Reds, but I have not seen any excessive head parking showing up in the SMART data with those.

chekk - Monday, July 21, 2014

Yes, I just test all new drives thoroughly for a month or so before trusting them. My anecdotal evidence across about 50 drives is that they are either DOA, fail in the first month, or last for years. But hey, YMMV.

icrf - Monday, July 21, 2014

My anecdotal experience is about the same, but I'd extend the early death window a few more months. I don't know that I've gone through 50 drives, but I've definitely seen a couple dozen, and that's the pattern. A one-year warranty is a bit short for comfort, but I don't know that I care much about 5 years over 3.

Guspaz - Tuesday, July 22, 2014

I've had a bunch of 2TB greens in a ZFS server (15 of them) for years and none of them have failed. I expected them to fail, and I designed the setup to tolerate two to four of them failing without data loss, but... nothing.