Intel SSD 335 (240GB) Review

by Kristian Vättö on October 29, 2012 11:30 AM EST

Introduction

Back in February, Intel released its first SandForce based SSD: the Intel SSD 520. Since then Intel's SSD lineup has evolved. A couple of months after the 520's release, Intel released a more mainstream focused SSD 330. Architecturally the SSD 520 and 330 were the same as both used SandForce's SF-2281 controller and IMFT's MLC NAND. The only real differences were limited to NAND quality and firmware; the 520 used higher binned NAND with more P/E cycles and its firmware was also more finely tuned to provide better performance.

While SandForce has yet to release its 3rd generation SSD controller, there's still room to upgrade one major component of these drives: the NAND itself. IMFT (Intel's and Micron's NAND joint-venture) has been fairly open about its next generation NAND products, including the transition to 20nm MLC NAND. Moving to smaller process geometries decreases die area, which increases the number of NAND die that can be produced on a single wafer (or increases the capacity that can reliably be produced on a single die). The move to 20nm is a necessary part of continuing to drive SSD costs down, although as with all process transitions we won't see those cost savings initially (die savings are offset by higher costs of a new process at the start).

IMFT's 20nm announcement happened back in April 2011. At the time, we were told not to expect to see 64Gb 20nm MLC NAND devices in SSDs until the middle of 2012. Now, a year and a half later, production is finally at a stage where volume and yields are both high enough for an actual product release. The vehicle for introduction? Intel's SSD 335.

As the name already suggests, the 335 is not a major upgrade over the 330. Intel usually reserves XX0 product names for bigger upgrades, such as the SSD 520 update where Intel moved to SandForce from a Marvell controller that was used in the SSD 510. The more minor updates (usually NAND die shrinks) only change the last number of the model. In other words, SSD 335 is essentially the SSD 330 but with 20nm MLC NAND instead of 25nm MLC NAND. Below is a full comparison of Intel's current consumer SSDs:

Comparison of Intel's Consumer SSDs

| | SSD 330 (240GB) | SSD 335 (240GB) | SSD 520 (240GB) |
|---|---|---|---|
| Capacities (GB) | 60, 120, 180, 240 | 240 | 60, 120, 180, 240, 480 |
| Controller | SandForce SF-2281 | SandForce SF-2281 | SandForce SF-2281 |
| NAND | Intel 25nm MLC | Intel 20nm MLC | Intel 25nm MLC |
| Sequential Read | 500MB/s | 500MB/s | 550MB/s |
| Sequential Write | 450MB/s | 450MB/s | 520MB/s |
| 4KB Random Read | 42K IOPS | 42K IOPS | 50K IOPS |
| 4KB Random Write | 52K IOPS | 52K IOPS | 80K IOPS |
| Warranty | 3 years | 3 years | 5 years |

At first, the SSD 335 will only be available in a 240GB capacity. I suspect that this has to do with 20nm NAND yields and volumes; it's a new process, yields are obviously lower and Intel hasn't had time to build an enormous stock yet. By only releasing a 240GB model at this point, which Intel tells us is the most popular capacity, Intel makes sure that model is available in sufficient volume for the holiday market. If Intel had released all capacities simultaneously, it's possible that some capacities would have run out of stock quickly. Intel likely still has a decent stock of 25nm NAND, so the 330 will stick around for at least a few months while the 335 ramps up additional capacities. The Intel SSD 520 will still be available as well, although I'm hearing that its successor is coming soon.

In terms of performance, the SSD 330 and SSD 335 are similar. This isn't shocking given that they are both based on the same controller and the only difference is the move from 25nm to 20nm MLC NAND. We aren't going to see any significant improvements in SandForce based SSDs until the third generation (SF-3000) controllers become available, which should be some time next year. There have of course been minor modifications to the firmware to support 20nm MLC NAND.

Similar to the SSD 330, the SSD 335 comes with a desktop installation kit including a 2.5" to 3.5" adapter, SATA cable and a Molex to SATA power adapter.

NewEgg Price Comparison (10/29/2012)

| Capacity | 60/64GB | 120/128GB | 240/256GB | 480/512GB |
|---|---|---|---|---|
| Intel SSD 335 | N/A | N/A | $184 (MSRP) | N/A |
| Intel SSD 330 | $70 | $99 | $190 | N/A |
| Intel SSD 520 | $95 | $120 | $240 | $490 |
| Crucial m4 | $75 | $110 | $200 | $390 |
| Samsung SSD 830 | $100 | $85 | $170 | $530 |
| Samsung SSD 840 | N/A | $110 | $200 | $450 |
| Plextor M5S | $65 | $120 | $200 | N/A |
| OCZ Vertex 4 | $75 | $100 | $200 | $400 |
| Corsair Neutron | N/A | $130 | $220 | N/A |

Intel's target with the SSD 330 was to bring an affordable drive to the market and the SSD 335 continues this trend. The suggested retail price of $184 for a 240GB drive is very competitive and there aren't many drives that can beat that at the moment.

Update: The 240GB SSD 335 is already available at NewEgg for $210, which is unfortunately over $20 more than the MSRP.
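To put those prices in perspective, here is a quick cost-per-gigabyte sketch for the Intel drives. It uses the 240GB prices quoted in this article; the helper name `price_per_gb` and the dictionary layout are only illustrative choices, not anything Intel or NewEgg publishes.

```python
def price_per_gb(price_usd, capacity_gb):
    """Return the cost in USD per gigabyte of advertised capacity."""
    return price_usd / capacity_gb

# Prices for the 240GB models as quoted in this article (10/29/2012).
# All three Intel drives are 240GB, per the comparison table.
intel_240gb_prices = {
    "SSD 335 (MSRP)": 184,
    "SSD 335 (NewEgg)": 210,
    "SSD 330": 190,
    "SSD 520": 240,
}

for name, price in intel_240gb_prices.items():
    print(f"{name}: ${price_per_gb(price, 240):.2f}/GB")
```

At MSRP the SSD 335 works out to roughly $0.77 per GB, while the initial NewEgg price pushes it closer to $0.88 per GB, above the SSD 330.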

The NAND

Intel's 20nm MLC NAND is mostly the same as its 25nm MLC NAND. We are still looking at 8GB per die with an 8KB page size, although Intel does have a 16GB die in development which will also increase the page size to 16KB. Since the key aspects are the same, there haven't been any dramatic changes to performance. Intel wouldn't provide us with any specific numbers, but program latency is the same and erase time is slightly longer than that of its 25nm MLC NAND.

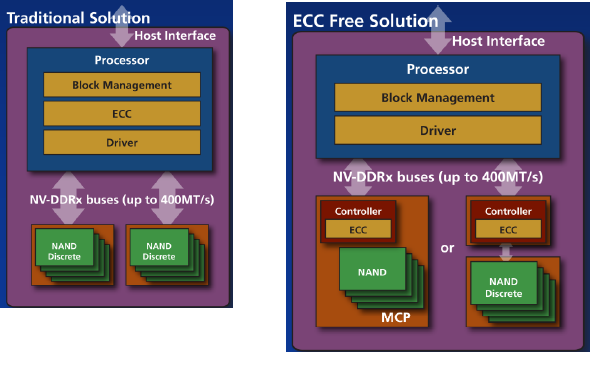

The new NAND also enables ONFI 2.3 support. ONFI 2.3 doesn't bring any bandwidth improvements as the spec still maxes out at 200MB/s. IMFT's 16GB die will feature ONFI 3.0 support, bringing the maximum bandwidth between the controller and NAND to 400MB/s per channel. The biggest new feature in ONFI 2.3 is support for the EZ-NAND protocol, although Intel has not adopted this protocol for its NAND. EZ-NAND allows ECC to be offloaded from the SSD controller to a separate controller (which can also be integrated into the NAND package):

Normal NAND on the left - EZ-NAND on the right

The advantage of offloading the ECC from the controller is that now ECC can be updated along with NAND without the need for a new controller. ECC is strictly implemented in hardware, which means a firmware update doesn't help; you will need a new physical controller to update ECC. As we move to smaller process nodes, the need for ECC increases as the error rate goes up. With more error prone NAND, it becomes even more important to decouple ECC generations from the host controller since the same controller can be used for more than one NAND generation. In the Intel SSD 335, ECC is still handled by the SF-2281 controller but in theory, manufacturers using Intel NAND could implement a third party off-chip ECC controller in their SSDs.

69 Comments

Per Hansson - Tuesday, October 30, 2012

No, it does not work like that.

A slow DMM might take a reading every second.

An example, in seconds:

1: 2w

2: 2w

3: 2w

Average=2w

A fast DMM might take readings every 100ms:

1: 2w

2: 0.5w

3: 2w

4: 0.5w

Average=1.25w

As you see, a DMM does not take a continuous reading; it takes readings at points in time and averages those...

An SSD might actually change power levels much more frequently, like every millisecond (consider their performance: how long does it take to write 4KB of data, as an example?)

hrga - Thursday, November 1, 2012

I don't think SSDs even try to write such a small amount of data as 4KB every millisecond, considering how large their buffers usually are (128GB LPDDR2). So these kinds of small writes occur in bursts when they accumulate every 15-30s (at least I hope so, as this was the case with hard drives). That of course depends on the firmware and the values in it.

Per Hansson - Thursday, November 1, 2012

That makes no difference. I sincerely hope that no drive waits 15 to 30 seconds to write data to disk, because that is just a recipe for data loss in case of power failure or BSOD.

I also hope no drive uses a 128GB write cache. (Intel's in-house controller keeps no user data in cache, as an example, but I digress.)

Even if the drive waits a minute before it writes the 4KB of data you must still have a DMM capable of catching that write, which is completed in less than a millisecond.

Otherwise the increased power consumption during the disk write will be completely missed by the DMM
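The sampling argument in this thread can be illustrated with a small simulation. This is only a sketch: the 0.5W and 2W levels are borrowed from the example readings above, while the load pattern (a 1ms write burst once every 10ms) and the function names are assumptions made up for illustration, not measurements of any real drive or meter.

```python
IDLE_W = 0.5    # assumed idle draw, borrowed from the example readings above
ACTIVE_W = 2.0  # assumed draw during a write, also from the example above

def power_at(t_ms):
    """Hypothetical load: a 1 ms write burst once every 10 ms, at offset 5 ms."""
    return ACTIVE_W if t_ms % 10 == 5 else IDLE_W

def measure(interval_ms, duration_ms=1000):
    """Return (average, peak) as seen by a meter sampling every interval_ms."""
    samples = [power_at(t) for t in range(0, duration_ms, interval_ms)]
    return sum(samples) / len(samples), max(samples)

# Compare a slow meter, a faster meter, and one that samples every millisecond.
for interval in (1000, 100, 1):
    avg, peak = measure(interval)
    print(f"{interval:>4} ms sampling: average {avg:.2f} W, peak {peak:.1f} W")
```

With this made-up load, both the 1000ms and the 100ms meters land only on idle moments and report 0.5W, while millisecond sampling sees the bursts and reports a higher average and a 2W peak.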

Mr Alpha - Monday, October 29, 2012

Wouldn't it make more sense to test the idle power consumption on a platform that supports DIPM? Idle power usage mostly matters on mobile devices, and it is on those that you get DIPM support.

sheh - Monday, October 29, 2012

The text says total writes were 1.2TB, (+3.8TB=) 5TB, and 37.8TB. The screenshots show "host writes" at 1.51TB, 2.11TB, and 3.90TB?

sheh - Monday, October 29, 2012

And why the odd power on hours counts?

Kristian Vättö - Monday, October 29, 2012

You are mixing host writes with the actual NAND writes. Host writes are the data that the host (e.g. an operating system) sends to the SSD controller to write. NAND writes show how much is actually written to the NAND.

When the SSD is pushed into a corner like I did, you will end up having more NAND writes than host writes because of read-modify-write (i.e. all user-accessible LBAs are already full, so the controller must read the block to a cache, modify the data and rewrite the block). Basically, your host may be telling the controller to write 4KB but the controller ends up writing 2048MB (that's the block size).

extide - Monday, October 29, 2012

Block size is 2048KB*

sheh - Monday, October 29, 2012

So the write amplification in the end was x9.7?

Are NAND writes also reported by SMART?

And with the messed up power on count, how can you know the rest of the SMART data is reliable?

Kristian Vättö - Tuesday, October 30, 2012

Yes, write amplification was around 9.7x in the end. That makes sense because the drive becomes more and more fragmented the more you write to it.

As you can see in the screenshots, the SMART value F9 corresponds to NAND writes. Most manufacturers don't report this data, though.

We just have to assume that the values are correct. Otherwise we could doubt every single test result we get, which would make reviewing impossible. The data makes sense so at least it's not screaming that something is off, and from what I have read, we aren't the only site that noticed weird endurance behavior.
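For readers following the numbers in this thread, write amplification is simply NAND writes divided by host writes. The sketch below uses the final figures quoted above (37.8TB of NAND writes against 3.90TB of host writes) and the 4KB write into a 2048KB block from the read-modify-write explanation. The function name is a hypothetical helper, and treating a full block rewrite per 4KB write as the worst case is a simplifying assumption.

```python
def write_amplification(nand_writes, host_writes):
    """Ratio of data written to the NAND to data sent by the host (same units)."""
    return nand_writes / host_writes

# Final figures quoted in the thread: 37.8TB NAND writes, 3.90TB host writes.
final_wa = write_amplification(37.8, 3.90)
print(f"Write amplification at the end of testing: {final_wa:.1f}x")

# Simplified worst case from the read-modify-write explanation:
# a 4KB host write forces a rewrite of a whole 2048KB block.
worst_case_wa = write_amplification(2048, 4)
print(f"Worst case for a single 4KB write: {worst_case_wa:.0f}x")
```

The measured ~9.7x sits far below the 512x single-write worst case, which is what you would expect if only some writes end up triggering a full block rewrite.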