NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

Theoreticals

As with any new architecture, we want to take a few moments to look at theoretical performance. These numbers shouldn’t be taken too seriously for cross-vendor comparison, but they often tell us more about the interesting architectural improvements that occur from one generation to the next.

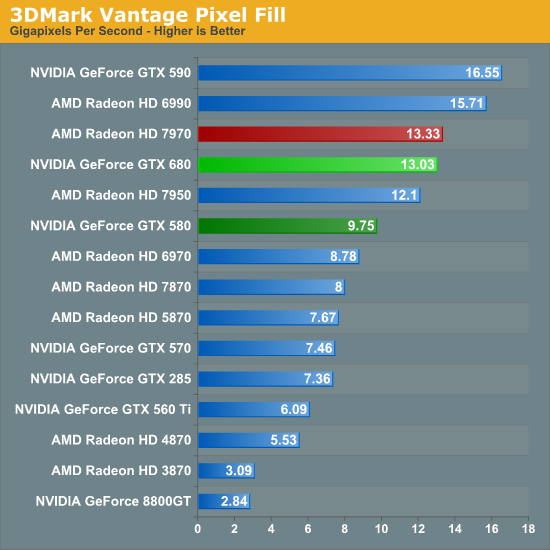

Our first theoretical test is perhaps the most perplexing: 3DMark Vantage’s pixel fill test. Typically this test is memory bandwidth bound as the nature of the test has the ROPs pushing as many pixels as possible with as little overhead as possible, which in turn shifts the bottleneck to a mix of ROP performance and the memory bandwidth needed to feed those ROPs.

Compared to the GTX 580, the GTX 680 has almost exactly the same amount of memory bandwidth (192GB/sec) and only 86% of the theoretical ROP performance (32Gpix/sec vs. the 580’s 37Gpix/sec). In short, it shouldn’t outperform the GTX 580 here, and yet it does so by 33%.

Why does it do this? That’s the hard thing to answer. As we mentioned in our look at GK104’s architecture, NVIDIA did make some minor incremental improvements to their ROPs coming from GF114, such as slightly improved compression and improved polygon merging. One of those may very well be the contributing factor, particularly the compression improvements since this is a typically memory bandwidth bottlenecked test. Alternatively, it’s interesting to note that the difference between the two video cards is almost identical to the difference in the core clock. GTX 560 Ti’s results tend to blow a hole in this theory, but it bears consideration.
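To put rough numbers on the comparison above, here is a minimal sketch of the usual back-of-the-envelope math: peak pixel fill rate is ROP count times core clock. The ROP counts and core clocks in the table are assumptions taken from the cards' public spec sheets rather than from this page, and the GTX 680 figure uses the base clock without GPU Boost.

```python
# Theoretical pixel fill rate: one pixel per ROP per clock.
# ROP counts and core clocks are ASSUMED from public spec sheets,
# not stated in the article itself. GTX 680 uses base clock (no GPU Boost).
CARDS = {
    "GTX 580": {"rops": 48, "core_mhz": 772},
    "GTX 680": {"rops": 32, "core_mhz": 1006},
}


def pixel_fill_gpix(rops: int, core_mhz: int) -> float:
    """Return the theoretical peak pixel fill rate in Gpixels/sec."""
    return rops * core_mhz / 1000.0


gtx580_fill = pixel_fill_gpix(**CARDS["GTX 580"])
gtx680_fill = pixel_fill_gpix(**CARDS["GTX 680"])

# How much theoretical ROP throughput the 680 has relative to the 580.
rop_ratio = gtx680_fill / gtx580_fill

# How much higher the 680's core clock is relative to the 580's.
clock_ratio = CARDS["GTX 680"]["core_mhz"] / CARDS["GTX 580"]["core_mhz"]

print(f"GTX 580: {gtx580_fill:.1f} Gpix/s")
print(f"GTX 680: {gtx680_fill:.1f} Gpix/s")
print(f"GTX 680 ROP throughput vs. GTX 580: {rop_ratio:.0%}")
print(f"GTX 680 core clock advantage: {clock_ratio - 1:.0%}")
```

With unrounded inputs the ROP ratio lands at roughly 87%, in line with the 86% you get from the rounded 37Gpix and 32Gpix figures, while the core clock gap works out to about 30%, close to the 33% lead seen in the test.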

In any case, it’s an interesting turn of events and hopefully one that isn’t simply an edge case. As we’ve seen in our benchmarks, the GTX 680 has strong performance – even if its lead compared to the 7970 diminishes with resolution – but compared to the GTX 580 in particular it needs strong ROP performance across all games in order to deliver good performance at high resolutions and with anti-aliasing.

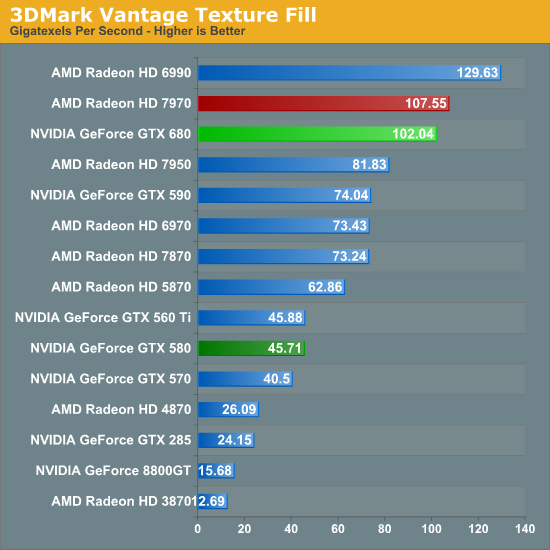

Our second theoretical test is 3DMark Vantage’s texture fill test, which to no surprise has the GTX 680 handily clobbering all prior NVIDIA cards. NVIDIA’s inclusion of 128 texture units on GK104 versus 64 on their previous generation GPUs gives the GTX 680 far better texturing performance. The 30%+ core clock difference only serves to further widen the gap.
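The two factors named above, texture unit count and core clock, multiply together, which a short sketch makes concrete. The unit counts come from the paragraph above; the core clocks (772MHz for the GTX 580, 1006MHz base for the GTX 680) are assumptions from public spec sheets, and the model of one texel per unit per clock is a theoretical peak rather than what the Vantage test actually measures.

```python
# Theoretical texel fill rate: one texel per texture unit per clock.
# Texture unit counts are from the article; core clocks are ASSUMED
# from public spec sheets (GTX 680 at base clock, no GPU Boost).
def texel_fill_gtexels(texture_units: int, core_mhz: int) -> float:
    """Return the theoretical peak texel fill rate in Gtexels/sec."""
    return texture_units * core_mhz / 1000.0


gtx580_texels = texel_fill_gtexels(texture_units=64, core_mhz=772)
gtx680_texels = texel_fill_gtexels(texture_units=128, core_mhz=1006)

unit_gain = 128 / 64      # contribution from doubling the texture units
clock_gain = 1006 / 772   # contribution from the higher core clock
total_gain = gtx680_texels / gtx580_texels

print(f"GTX 580: {gtx580_texels:.1f} Gtexels/s")
print(f"GTX 680: {gtx680_texels:.1f} Gtexels/s")
print(f"Units x{unit_gain:.2f} * clock x{clock_gain:.2f} = x{total_gain:.2f}")
```

On paper that is roughly a 2.6x advantage for the GTX 680; the measured gap in the chart depends on more than peak rate, so treat this only as an upper-bound estimate.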

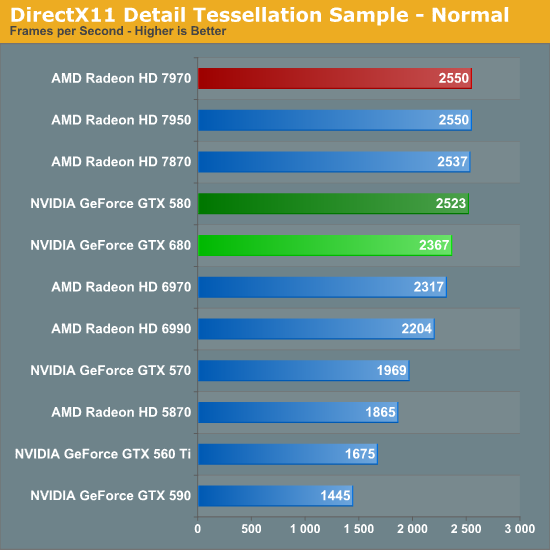

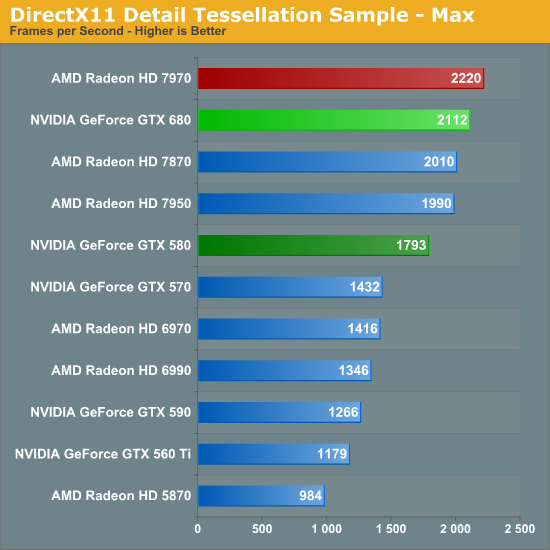

Our third theoretical test is the set of settings we use with Microsoft’s Detail Tessellation sample program out of the DX11 SDK. Overall while NVIDIA didn’t make any significant changes to their tessellation hardware (peak triangle rate is still 4/cycle), they have been working on further improving performance at absurdly high tessellation factors. You can see some of this in action at the max factor setting, but even then we’re running into a general performance wall since the Detail Tessellation program can’t go to the absolute highest tessellation factors NVIDIA’s hardware supports.

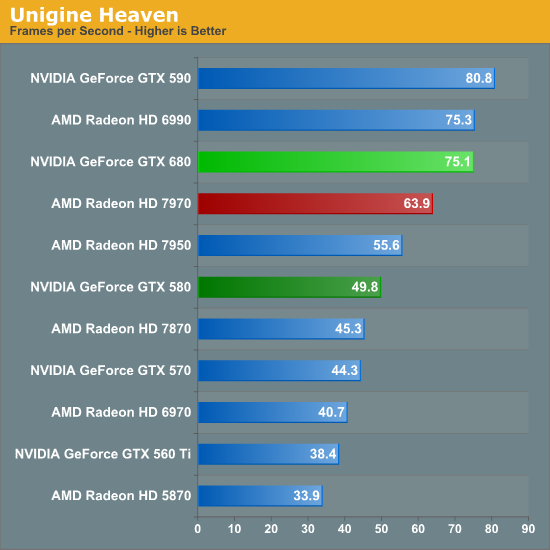

Our final theoretical test is Unigine Heaven 2.5, a benchmark that straddles the line between a synthetic benchmark and a real-world benchmark as the engine is licensed but no notable DX11 games have been produced using it yet. In any case the Heaven benchmark is notable for its heavy use of tessellation, which means it’s largely a proxy test for tessellation performance. Here we can see the GTX 680 shoot well ahead of the GTX 580 – by more than we saw in the DX11 Detail Tessellation sample – but at the same time there’s a lot more going on in Heaven than just tessellation.

Honestly at this point in time I’m not sure just how much more tessellation performance is going to matter. Until DX11 is the baseline API for games, tessellation is still an add-on feature, which means it’s being used to add fine detail to specific models rather than being used on everything in a game world. This demands good tessellation at high factors but at the same time it’s subject to diminishing returns on the improvement to image quality as triangles reach single pixel sizes and smaller. To that end I’m still waiting to see the day where we see tessellation scale similarly to textures – that is by using full MIP chaining of displacement maps – at which point we can evaluate tessellation performance similar to texture performance when it comes to both measuring the performance hit and evaluating the difference in image quality.

404 Comments

Sabresiberian - Thursday, March 22, 2012 - link

Do you work for AMD's marketing department, or are you just a fanboy with tunnel vision?

silverblue - Thursday, March 22, 2012 - link

Could be beenthere under a different name... ;)

CeriseCogburn - Thursday, March 22, 2012 - link

Youtube has settled that lie - all the "bumpgate" models have defectively designed heatsinks - end users are inserting a penny (old for copper content) above the gpu to solve the large gap while removing the laughable quarter inch thick spongepad.

It was all another lie that misplaced blame. Much like the ati chip that failed in xbox360 - never blamed on ati strangely.... (same thing bad HS design).

Arbie - Thursday, March 22, 2012 - link

IMHO the only game worth basing a purchase decision on is Crysis / Warhead. There, even the 7950 beats the GTX680, especially in the crucial area of minimum frame rate. The AMD cards also take significantly less power long-term (which is most important) and at load. They are noisier under load but not enough to matter while I'm playing.

So for me it's still AMD.

kallogan - Thursday, March 22, 2012 - link

Don't know if you can say that. Crysis is old now. No directx 11. But it's true the GTX 680 does not particularly shine in heavy games like Metro 2033 or Crysis warhead compared to other games that may be more Nvidia optimised like BF3.

CeriseCogburn - Tuesday, March 27, 2012 - link

Except in the most punishing benchmark Shogun 2 Total War, the GTX680 by Nvidia spanks the 7970 and wins at all 3 resolutions !*

*

Can we get a big fanboy applause for the 7970 not doing well at all in very punishing high quality games comparing to the GTX680 ?

Sabresiberian - Thursday, March 22, 2012 - link

The key phrase you use here is "where it matters to me". I wouldn't argue with that at all - your decision is clearly the right one for your gaming tastes.

That being said, you change your wording a bit, and it seems to me to imply (softening it "IMHO") that everyone should choose by your standards; that is also clearly wrong. The games I play are World of Warcraft, and Skyrim. WoW test results can be best compared to BF3, of those benches that were used in this article. I've never played Crysis past a demo - so choosing based on that benchmark would be shooting myself in the proverbial foot.

Clearly, the GTX 680 is the better choice for me.

I've always said, choose your hardware by application, not by overall results (unless, of course, overall results matches your application cross-section :) ), and the benches in this article are more data to back up that recommendation.

;)

3DoubleD - Thursday, March 22, 2012 - link

Please don't buy a GTX 680 for WoW...

It's even overkill for Skyrim, since you don't really need much more than 30 fps. You'd be fine using more economical variants.

CeriseCogburn - Thursday, March 22, 2012 - link

Wrong, but enjoy your XFX amd D double D.

The cards, all of them, are not good enough yet.

Always turning down settings and begging the vsync.

They all fail our current games and single monitor resolutions.

Iketh - Thursday, March 22, 2012 - link

for pvp you most certainly do need more than 30 FPS, try 60 at the least and 75 as ideal with a 120hz monitor... the more FPS I can get, the better I perform... your statement is true for raiding only