AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

PCI Express 3.0: More Bandwidth For Compute

It may seem like it’s still fairly new, but PCI Express 2.0 is actually a relatively old addition to motherboards and video cards. AMD first added support for it with the Radeon HD 3870 back in 2008, so it’s been nearly 4 years since video cards made the jump. At the same time PCI Express 3.0 has been in the works for some time now, and although it hasn’t been 4 years, it feels like it has been much longer. PCIe 3.0 motherboards only became available last month with the launch of the Sandy Bridge-E platform, and now the first PCIe 3.0 video cards are becoming available with Tahiti.

But at first glance it may not seem like PCIe 3.0 is all that important. Additional PCIe bandwidth has proven to be generally unnecessary when it comes to gaming, as single-GPU cards typically only benefit by a couple of percent (if at all) when moving from PCIe 2.1 x8 to x16. There will of course come a time when games need more PCIe bandwidth, but right now PCIe 2.1 x16 (8GB/sec) handles the task with room to spare.

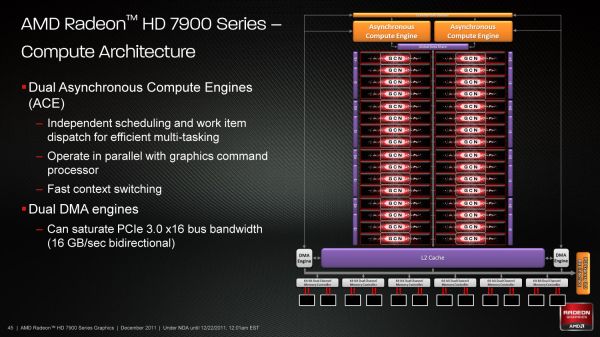

So why is PCIe 3.0 important then? It’s not the games, it’s the computing. GPUs have a great deal of internal memory bandwidth (264GB/sec; more with cache), but shuffling data between the GPU and the CPU is a high-latency, heavily bottlenecked process that tops out at 8GB/sec under PCIe 2.1. And since GPUs are still specialized devices that excel at parallel code execution, many workloads need to constantly move data between the GPU and the CPU to make the most of both parallel and serial code execution. As it stands today GPUs are really only well suited for workloads that involve sending work to the GPU and keeping it there; heterogeneous computing is a luxury there isn’t bandwidth for.
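To put that gap in perspective, here is a rough sketch of how long the same block of data takes to move at each of the two bandwidth figures above. The 256 MiB payload is an arbitrary assumption chosen for illustration, and the calculation ignores latency and protocol overhead entirely, so treat the results as best-case numbers rather than measurements.

```python
# Bandwidth figures quoted in the article, in GB/sec (decimal gigabytes).
GPU_MEMORY_GB_S = 264.0   # Tahiti's on-board memory bandwidth
PCIE_2_X16_GB_S = 8.0     # PCIe 2.1 x16, one direction


def transfer_ms(num_bytes, bandwidth_gb_s):
    """Idealized time in milliseconds to move num_bytes at the given bandwidth."""
    return num_bytes / (bandwidth_gb_s * 1e9) * 1000.0


# Hypothetical working set: 256 MiB (an assumption, not a figure from the review).
payload_bytes = 256 * 1024**2

on_card_ms = transfer_ms(payload_bytes, GPU_MEMORY_GB_S)
over_pcie_ms = transfer_ms(payload_bytes, PCIE_2_X16_GB_S)

print(f"GPU memory:   {on_card_ms:.2f} ms")
print(f"PCIe 2.1 x16: {over_pcie_ms:.2f} ms")
print(f"PCIe is {over_pcie_ms / on_card_ms:.0f}x slower for the same data")
```

Whatever payload size is picked, the ratio stays the same: every byte that has to cross the bus costs 33 times what it costs to touch on the card.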

The long-term solution of course is to bring the CPU and the GPU together, which is what Fusion does. CPU/GPU bandwidth just in Llano is over 20GB/sec, and latency is greatly reduced due to the CPU and GPU being on the same die. But AMD also wants to bring some of these same benefits to discrete GPUs, which is where PCIe 3.0 comes in.

With PCIe 3.0 transport bandwidth is again being doubled, from 500MB/sec per lane in each direction to roughly 1GB/sec per lane in each direction, which for an x16 device means doubling the available bandwidth from 8GB/sec to nearly 16GB/sec. This is accomplished by increasing the transfer rate of the underlying bus from 5 GT/sec to 8 GT/sec, while decreasing encoding overhead from 20% (8b/10b encoding) to about 1.5% through the use of the far more efficient 128b/130b encoding scheme. Meanwhile latency doesn’t change, as it’s largely a product of physics and physical distances, but merely doubling the bandwidth can greatly improve performance for bandwidth-hungry compute applications.
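The arithmetic behind those figures is simple enough to sketch. The helper below is purely illustrative (the function name and structure are our own, not anything from the PCIe specification), and it only accounts for line encoding, not packet or protocol overhead.

```python
def lane_bandwidth_mb_s(transfer_rate_gt_s, payload_bits, encoded_bits):
    """Usable bandwidth of one lane in one direction, in MB/sec.

    Each transfer carries one bit on the wire, and only payload_bits out of
    every encoded_bits are actual data.
    """
    efficiency = payload_bits / encoded_bits
    usable_gbit_s = transfer_rate_gt_s * efficiency
    return usable_gbit_s * 1000.0 / 8.0  # Gbit/sec -> MB/sec


# PCIe 2.x: 5 GT/sec with 8b/10b encoding.
pcie2_lane = lane_bandwidth_mb_s(5.0, 8, 10)
# PCIe 3.0: 8 GT/sec with 128b/130b encoding.
pcie3_lane = lane_bandwidth_mb_s(8.0, 128, 130)

for name, per_lane in (("PCIe 2.x", pcie2_lane), ("PCIe 3.0", pcie3_lane)):
    x16_gb_s = per_lane * 16 / 1000.0
    print(f"{name}: {per_lane:.1f} MB/sec per lane, {x16_gb_s:.2f} GB/sec at x16")
```

The result lands slightly under a true doubling (about 985MB/sec per lane rather than an even 1GB/sec), because the 60% increase in transfer rate and the cheaper encoding together fall just short of 2x.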

As with any other specialized change like this, the benefit is going to depend heavily on the application being used. However, AMD is confident that there are applications that will completely saturate PCIe 3.0 (and then some), and it’s easy to imagine why.

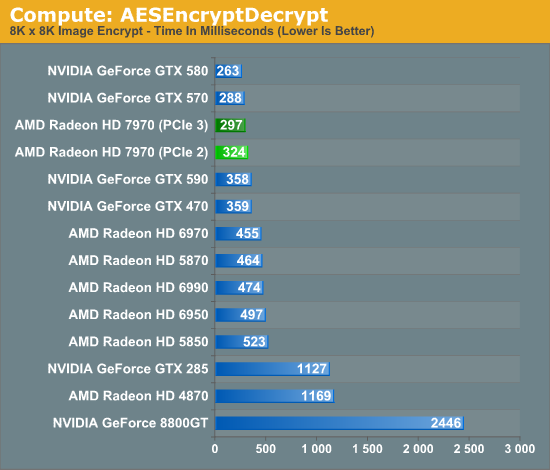

Even among our limited selection of compute benchmarks we found something that directly benefitted from PCIe 3.0. AESEncryptDecrypt, a sample application from AMD’s APP SDK, demonstrates AES encryption performance by running it on square image files. Throwing it a large 8K x 8K image not only creates a lot of work for the GPU, but a lot of PCIe traffic too. In our case simply enabling PCIe 3.0 improved performance by 9%, from 324ms down to 297ms.
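For anyone checking the math, the 9% figure is the throughput gain rather than the reduction in run time; the two are close but not identical. A quick sketch using only the two timings quoted above:

```python
# Run times for AESEncryptDecrypt as reported in the review.
pcie2_ms = 324.0
pcie3_ms = 297.0

# Reduction in wall-clock time, relative to the PCIe 2.1 run.
time_saved_pct = (pcie2_ms - pcie3_ms) / pcie2_ms * 100.0

# Gain in throughput (work per unit time), which is what "9% faster" refers to.
throughput_gain_pct = (pcie2_ms / pcie3_ms - 1.0) * 100.0

print(f"Run time reduced by {time_saved_pct:.1f}%")
print(f"Throughput improved by {throughput_gain_pct:.1f}%")
```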

Ultimately having more bandwidth is not only going to improve compute performance for AMD, but will give the company a critical edge over NVIDIA for the time being. Kepler will no doubt ship with PCIe 3.0, but that’s months down the line. In the meantime users and organizations with high bandwidth compute workloads have Tahiti.

292 Comments


CeriseCogburn - Thursday, March 8, 2012

Interesting, amd finally copied nvidia...

"This problem forms the basis of this benchmark, and the NQueen test proves once more that AMD's Radeon HD 7970 tremendously benefits from leaving behind the VLIW architecture in complex workloads. Both the HD 7970 and the GTX 580 are nearly twice as fast as the older Radeons."

When we show diversity we should also show that amd radeon has been massively crippled for a long time except when "simpleton" was the key to speed. "Superior architecture" actually means "simple and stupid" - hence "fast" at repeating simpleton nothings, but unable to handle "complex tasks".

LOL - the dumb gpu by amd has finally "evolved".

chizow - Thursday, December 22, 2011

....unfortunately it's going to be pitted against Kepler for the long haul.

There's a lot to like about Southern Islands but I think it's going to end up a very similar situation as Evergreen vs. Fermi, where Evergreen released sooner and took the early lead, but Fermi ultimately won the generation. I expect similar with Tahiti holding the lead for the next 3-6 months until Kepler arrives, but Kepler and its refresh parts winning this 28nm generation once they hit the streets.

Overall the performance and changes AMD made with Tahiti look great compared to Northern Islands, but compared to Fermi parts, it's just far less impressive. If you already owned an AMD NI or Evergreen part, there'd be a lot of reason to upgrade, but if you own a Fermi generation Nvidia card there's just far less reason to, especially at the asking price.

I do like how AMD opened up the graphics pipeline with Tahiti though, 384-bit bus, 3GB framebuffer, although I wonder if holding steady with ROPs hurts them compared to Kepler. It would've also been interesting to see how the 3GB GTX 580 compared at 2560 since the 1.5GB model tended to struggle even against 2GB NI parts at that resolution.

ravisurdhar - Thursday, December 22, 2011

My thoughts exactly. Can't wait to see what Kepler can do.

Also...4+B transistors? mind=blown. I remember when we were ogling over 1B. Moore's law is crazy.... :D

johnpombrio - Wednesday, December 28, 2011

Exactly. If you look at all the changes that AMD did on the card, I would have expected better results: the power consumption decrease with the Radeon 7970 is mainly due to the die shrink to 28nm. NVidia is planning on a die shrink of their existing Fermi architecture before Kepler is released: http://news.softpedia.com/news/Nvidia-Kepler-Is-On...

Another effect of the die shrink is that clock speed usually increases as there is less heat created at the lower voltage needed with a smaller transistor.

The third change that is not revolutionary is the bump of AMD's 7970's memory bus to 384 bits (matching the 580) from the 6970's 256 bits, along with 3GB of GDDR5 memory vs the GTX580's 1.5GB and the 6970's 2GB.

The final non revolutionary change is bumping the number of stream processors by 33% from 1,536 to 2,048.

Again, breaking out my calculator, the 33% bump in the number of stream processors ALONE accounts for the benchmark differences between the 7970 and the 6970.

The higher benchmark, however, does not show ANY OTHER large speed bumps that SHOULD HAVE OCCURRED due to the increase in the memory bus size, the higher amount of memory, compute performance, texture fill rate, or finally the NEW ARCHITECTURE.

If I add up all the increases in the technology, I would have expected benchmarks in excess of 50-60% over the previous generation. Perhaps I am naive in how much to expect but, hell, a doubling of transistor count should have produced a lot more than a 35% increase. Add the new architecture, smaller die size, and more memory and I am underwhelmed.

CeriseCogburn - Thursday, March 8, 2012

Well, we can wait for their 50%+ driver increase package + hotfixes - because after reading that it appears they are missing the boat in drivers by a wide margin.

Hopefully a few months after Kepler blows them away, and the amd fans finally allow themselves to complain to the proper authorities and not blame it on Nvidia, they will finally come through with a "fix" like they did when the amd (lead site review mastas) fans FINALLY complained about crossfire scaling....

KaarlisK - Thursday, December 22, 2011

What is the power consumption with multiple monitors? Previously, you could not downclock GDDR5, so the resulting consumption was horrible.

Ryan Smith - Thursday, December 22, 2011

"On that note, for anyone who is curious about idle clockspeeds and power consumption with multiple monitors, it has not changed relative to the 6970. When using a TMDS-type monitor along with any other monitor, AMD has to raise their idle clockspeeds from 350MHz core and 600MHz memory to 350MHz core and the full 5.5GHz speed for memory, with the power penalty for that being around 30W. Matched timing monitors used exclusively over DisplayPort will continue to be the only way to be able to use multiple monitors without incurring an idle penalty."

KaarlisK - Thursday, December 22, 2011

Thank you for actually replying :)

I am so sorry for having missed this.

ltcommanderdata - Thursday, December 22, 2011

Great review.

Here's hoping that AMD will implement 64-bit FP support across the whole GCN family and not just the top-end model. Seeing AMD's mobile GPUs don't use the highest-end chip, settling for the 2nd highest and lower, there hasn't been 64-bit FP support in AMD mobile GPUs since the Mobility HD4800 series. I'm interested in this because I can then dabble in some 64-bit GPGPU programming on the go. It also has implications for Apple since their iMacs stick to mobile GPUs, so would otherwise be stuck without 64-bit FP support which presumably could be useful for some of their professional apps.

In regards to hardware accelerated Megatexture, is it directly applicable to id Tech 5's OpenGL 3.2 solution? i.e. Will id Tech 5 games see an immediate speed-up with no recoding needed? Or does Partially Resident Texture support require a custom AMD specific OpenGL extension? If it's the latter, I can't see it going anywhere unless nVidia agrees to make it a multivendor EXT extension.

Ryan Smith - Thursday, December 22, 2011

Games will need to be specifically coded for PRT; it won't benefit any current games. And you are correct in that it will require an AMD OpenGL extension to use (it won't be accessible from D3D at this time).