AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST - Posted in GPUs, AMD, Radeon, ATI, Radeon HD 7000

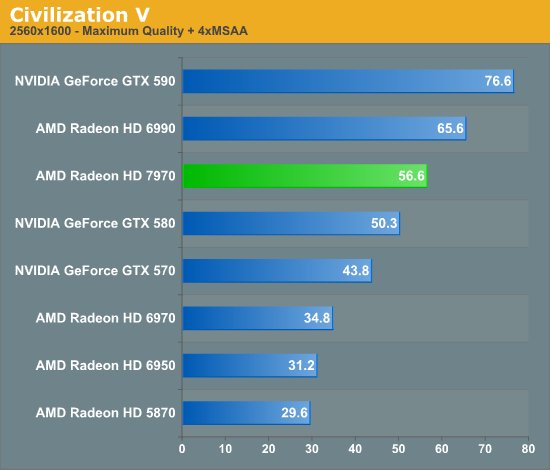

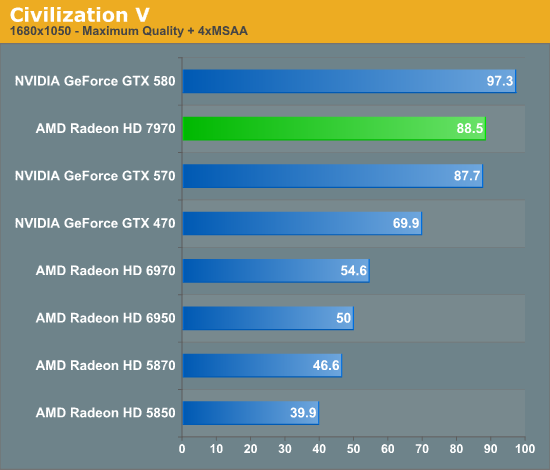

Civilization V

Our final game, Civilization V, gives us an interesting look at things that other strategy games cannot match, with a much weaker focus on shading in the game world and a much greater focus on creating the geometry needed to bring such a world to life. In doing so it uses a slew of DirectX 11 technologies, including tessellation for said geometry, driver command lists for reducing CPU overhead, and compute shaders for on-the-fly texture decompression.

Because Civilization V uses driver command lists (DCLs), we were originally not going to include it in this benchmark suite as a gaming benchmark. If it were solely a DCL test it would do a good job of highlighting the fact that AMD doesn’t currently support the feature, but a poor job of actually explaining any hardware or architectural differences. It was only after we saw AMD’s reviewer’s guide that we decided to go ahead and include it, because quite frankly we didn’t believe the numbers AMD had published.

In past testing, the 6970 routinely lost to the GTX 580 by large margins. We had long assumed this was solely due to NVIDIA’s inclusion of DCLs, as we’ve seen a moderate single-GPU performance deficit and equally moderate multi-GPU lead for AMD melt away when NVIDIA added DCL support. The 7970 required that we rethink this.

If Civilization V were solely a DCL test, then our 2560 results would be impossible: the 7970 is winning by 12% in a game NVIDIA previously won by a massive margin. NVIDIA only regains its lead at 1680, a resolution at which we’re not nearly as likely to be GPU-bound.

So what changed? AMD has yet to spill the beans, but short of a secret DCL implementation for just CivV we have to look elsewhere. Next to DCLs, CivV’s other killer feature is its use of compute shaders, and GCN is a compute architecture. To that end, we believe at this point that while AMD is still facing some kind of DCL bottleneck, they have completely opened the floodgates on whatever compute shader bottleneck was standing in their way before. This is particularly evident when comparing the 7970 to the 6970, where the 7970 enjoys a consistent 62% performance advantage. It’s simply an incredible turnabout to see the 7970 do so well when the 6970 did so poorly.

Of course, if this performance boost really was all about compute shaders, it raises a particularly exciting question: just how much higher could AMD go if they had DCLs? Hopefully one day that’s a question we get to answer.

292 Comments


Ananke - Thursday, December 22, 2011

"The 7970 leads the 5870 by 50-60% here and in a number of other games"...and, as I see it, also carries a 500-600% price premium over the 5870. Meh, this is so-so pricing for a FireGL card, but very badly placed for the consumer market. Regardless, CUDA is getting more open meanwhile. AMD is still several generations/years behind in the HPC market, and marketing a product above the NVidia price targets will not help AMD make it popular.

Having said so, I have been using ATI cards for gaming for several years already, and I am very pleased with their IQ and performance. I have always pre-purchased my ATI cards... What I am missing though is the promised and never materialized consumer level software that can utilize its calculation ability, aka CyberLink and other video transcoders. If it was not for the naughty Nvidia power draw in the 5th series, I would've gone green to have CUDA. Hence, considering SO MUCH MONEY, I am waiting at least 6 months from now to see what the prices will be for both new contenders in next GPU architectures :).

Dangerous_Dave - Thursday, December 22, 2011

Seems like AMD can't do anything right these days. Bulldozer was designed for a world that doesn't exist, and now we have this new GPU stinking up the place. I'm sorry but @28nm you have double the transistors per area compared with @40nm, yet the performance is only 30% better for a chip that is virtually the same size! It should be at least twice as far ahead of the 6970 as that, even on immature drivers. As it stands, AMD @ 28nm is only just ahead of Nvidia @ 40nm as far as minimums go (the only thing that matters). I shudder to think how badly AMD is going to get destroyed when Nvidia releases their 28nm GPU.

Finally - Friday, December 23, 2011

I shudder to think how badly one Nvidia fanboy's ego is going to get scratched if team red released a better GPU and his favourite team has nothing to offer.

Oh... they did?

CeriseCogburn - Thursday, March 8, 2012

We have to let amd "go first" now since they have been so on the brink of bankruptcy collapse for so long that they've had to sell off most of their assets... and refinance by AbuDhabi oil money... I think it's nice our laws and global economy puts pressure on the big winners to not utterly crush the underdogs...

Really, if amd makes another fail it might be the last one before collapse and "restructuring" and frankly not many of us want to see that...

They already made the "last move" a dying company does and slashed with the ax at their people...

If the amd fans didn't constantly demand they be given a few dollars off all the time, amd might not be failing - I mean think about it - a near constant loss, because the excessive demand for price vs perf vs the enemy is all the radeon fans claim to care about.

It would be better for us all if the radeon fans dropped the constant $ complaints and just manned up and supported AMD as real fans, with their pocketbooks... instead of driving their favorite toward bankruptcy and cooked books filled with red ink...

Dangerous_Dave - Thursday, December 22, 2011

On reflection this card is even worse than my initial analysis. For 3.4 billion transistors AMD could have done no research at all and simply integrated two 6870s onto a single die (a la 5870 vs 4870) and ramped up the clock speed to somewhere over 1GHz (since 28nm would have easily allowed that). This would have produced performance similar to a 6990, and far in excess of the 7970. Instead we've done a lot of research and spent 4.1 billion transistors creating a card that is far worse than a 6990!

That's the value of AMD's creative thinking.

cknobman - Thursday, December 22, 2011

The sad part is you're likely too stupid to realize just how idiotic your post sounds. They introduced a new architecture that facilitates much better compute performance as well as giving more gaming performance.

Did you read the article and look at the compute benchmarks or did you just flip through the game benchmark pages and look at numbers without reading?

Zingam - Thursday, December 22, 2011

Or maybe you just don't realize that they've added another 2 billion transistors for minimal graphics performance increase over the previous generation. That's almost as if you buy a new generation BMW that has 600 hp instead of 300 hp but is not able to tow a bigger trailer.

The only benefit for you would be that you can brag that you've just got the most expensive and useless car available.

Finally - Friday, December 23, 2011

Rule 1A:

The frequency of a car pseudoanalogy to explain a technical concept increases with thread length. This will make many people chuckle, as computer people are rarely knowledgeable about vehicular mechanics.

cknobman - Friday, December 23, 2011

Holy sh!t are you not reading and understanding the article and posts here?????????? The extra transistors and new architecture were to increase COMPUTE PERFORMANCE as well as graphics.

Think bigger picture here dude not just games. Think of fusion and how general computing and graphics computing will merge into one.

This architecture is much bigger than just being a graphics card for games.

This is AMD's fermi except they did it about 100x better than Nvidia keeping power in check and still having amazing performance.

Plus you're looking at probably beta drivers (heck maybe alpha) so there could very well be another 10+% increase in performance once this thing hits retail shelves and gets some driver improvements.

CeriseCogburn - Thursday, March 8, 2012

I see. So when nvidia did it, it was abandoning gamers for 6 months of ripping away and gnawing plus... but now, since it's amd, amd has done it 100X better... and no abandonment...

Wow.

I love hypocrisy in its full, raw and massive form - it's an absolute wonder to behold.