AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST. Posted in GPUs, AMD, Radeon, ATI, Radeon HD 7000

A Quick Refresher, Cont.

Having established what’s bad about VLIW as a compute architecture, let’s discuss what makes a good compute architecture. The most fundamental aspect of compute is that developers want stable and predictable performance, something that VLIW didn’t lend itself to because it was dependency limited. Architectures that can’t work around dependencies will see their performance vary due to those dependencies. Consequently, if you want an architecture with stable performance that’s going to be good for compute workloads then you want an architecture that isn’t impacted by dependencies.

Ultimately dependencies and ILP go hand-in-hand. If you can extract ILP from a workload, then your architecture is by definition bursty. An architecture that can’t extract ILP may not be able to achieve the same level of peak performance, but it will not burst and hence it will be more consistent. This is the guiding principle behind NVIDIA’s Fermi architecture; GF100/GF110 have no ability to extract ILP, and developers love it for that reason.

So with those design goals in mind, let’s talk GCN.

VLIW is a traditional and well proven design for parallel processing. But it is not the only traditional and well proven design for parallel processing. For GCN AMD will be replacing VLIW with what’s fundamentally a Single Instruction Multiple Data (SIMD) vector architecture (note: technically VLIW is a subset of SIMD, but for the purposes of this refresher we’re considering them to be different).

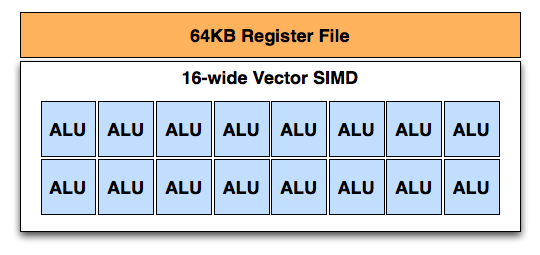

A Single GCN SIMD

At the most fundamental level AMD is still using simple ALUs, just like Cayman before it. In GCN these ALUs are organized into a single SIMD unit, the smallest unit of work for GCN. A SIMD is composed of 16 of these ALUs, along with a 64KB register file for the SIMD to keep data in.
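
The 64KB figure can be turned into a rough register budget. The sketch below is a back-of-the-envelope calculation, assuming (neither point is stated above) 32-bit registers and that each vector register holds one value per work-item of a 64-wide wavefront. The function names are hypothetical, and any hardware cap on how many wavefronts a SIMD can keep resident is ignored.

```python
# Back-of-the-envelope register budget for one GCN SIMD.
# Assumptions (not from the article): 32-bit registers, and one vector
# register holds a value for each of the 64 work-items in a wavefront.
REGISTER_FILE_BYTES = 64 * 1024  # 64KB register file per SIMD (from the article)
BYTES_PER_REGISTER = 4           # assumed 32-bit registers
WAVEFRONT_WIDTH = 64             # work-items per wavefront


def vector_registers_per_simd() -> int:
    """Wavefront-wide vector registers that fit in one SIMD's register file."""
    scalar_slots = REGISTER_FILE_BYTES // BYTES_PER_REGISTER  # 16384 32-bit slots
    return scalar_slots // WAVEFRONT_WIDTH                     # 256 registers


def wavefronts_limited_by_registers(registers_per_work_item: int) -> int:
    """How many wavefronts the register file alone could hold resident.

    Hypothetical helper: real hardware imposes further limits not modeled here.
    """
    if registers_per_work_item <= 0:
        raise ValueError("registers_per_work_item must be positive")
    return vector_registers_per_simd() // registers_per_work_item


if __name__ == "__main__":
    print(vector_registers_per_simd())          # 256
    print(wavefronts_limited_by_registers(64))  # 4
```

A kernel that needs more registers per work-item leaves room for fewer resident wavefronts for the SIMD to choose from.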

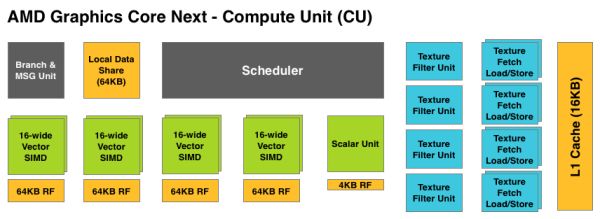

Above the individual SIMD we have a Compute Unit, the smallest fully independent functional unit. A CU is composed of 4 SIMD units, a hardware scheduler, a branch unit, L1 cache, a local data share, 4 texture units (each with 4 texture fetch load/store units), and a special scalar unit. The scalar unit is responsible for all of the arithmetic operations the simple ALUs can’t do or won’t do efficiently, such as conditional statements (if/then) and transcendental operations.
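
Tallying the per-CU resources listed above gives the totals a single CU brings to the chip. This is a minimal, illustrative model only: the class and field names are hypothetical, and the defaults simply encode the counts given in the paragraph above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeUnit:
    """Per-CU resources as listed in the article (illustrative model only)."""
    simds: int = 4
    alus_per_simd: int = 16
    register_file_kb_per_simd: int = 64
    texture_units: int = 4
    fetch_units_per_texture_unit: int = 4
    scalar_units: int = 1

    @property
    def vector_alus(self) -> int:
        # 4 SIMDs x 16 ALUs each
        return self.simds * self.alus_per_simd

    @property
    def texture_fetch_units(self) -> int:
        # 4 texture units x 4 fetch load/store units each
        return self.texture_units * self.fetch_units_per_texture_unit

    @property
    def vector_register_kb(self) -> int:
        # One 64KB register file per SIMD
        return self.simds * self.register_file_kb_per_simd


if __name__ == "__main__":
    cu = ComputeUnit()
    print(cu.vector_alus, cu.texture_fetch_units, cu.vector_register_kb)  # 64 16 256
```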

Because the smallest unit of work is the SIMD and a CU has 4 SIMDs, a CU works on 4 different wavefronts at once. As wavefronts are still 64 operations wide, each cycle a SIMD will complete ¼ of the operations on its respective wavefront, and after 4 cycles the current instruction for the active wavefront is completed.
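
The cadence can be sketched as a tiny simulation. It follows the simplified description above (one wavefront per SIMD, 16 work-items per SIMD per cycle) rather than modeling real GCN issue logic, and the function name and tuple layout are assumptions made for illustration.

```python
# Simplified model of the GCN cadence described in the article: each of the
# 4 SIMDs in a CU owns one wavefront and processes 16 of its 64 work-items per
# cycle. Real hardware issue details are deliberately ignored.
SIMDS_PER_CU = 4
LANES_PER_SIMD = 16
WAVEFRONT_WIDTH = 64


def instruction_cadence(wavefront_ids):
    """Return (cycle, simd, wavefront, first_item, last_item) tuples for one instruction."""
    if len(wavefront_ids) != SIMDS_PER_CU:
        raise ValueError("expected one wavefront per SIMD")
    cycles = WAVEFRONT_WIDTH // LANES_PER_SIMD  # 64 / 16 = 4 cycles per instruction
    events = []
    for cycle in range(cycles):
        first = cycle * LANES_PER_SIMD
        for simd, wavefront in enumerate(wavefront_ids):
            events.append((cycle, simd, wavefront, first, first + LANES_PER_SIMD - 1))
    return events


if __name__ == "__main__":
    for event in instruction_cadence(["wf0", "wf1", "wf2", "wf3"]):
        print(event)
```

After cycle 3, every SIMD has covered work-items 0 through 63 of its own wavefront, so all four wavefronts finish the current instruction together.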

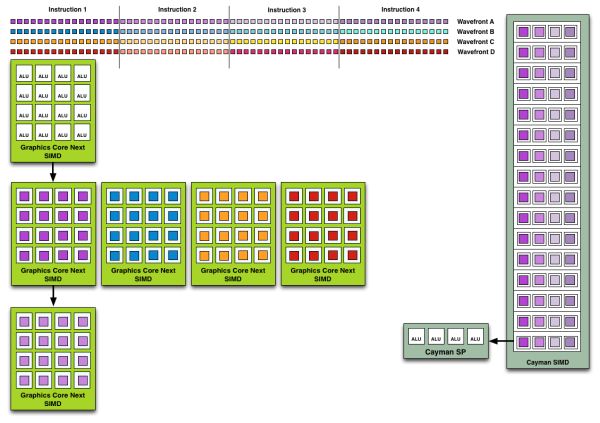

Cayman by comparison would attempt to execute multiple instructions from the same wavefront in parallel, rather than executing a single instruction from multiple wavefronts. This is where Cayman got bursty: if the instructions were in any way dependent, Cayman would have to let some of its ALUs go idle. GCN, on the other hand, does not face this issue. Because each SIMD handles single instructions from a different wavefront, the SIMDs are in no way attempting to take advantage of ILP, and their performance will be very consistent.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16
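
The contrast in the figure can be reproduced with a toy model. This is a sketch under stated assumptions, not Cayman's or GCN's actual scheduling: a greedy packer stands in for the VLIW shader compiler, memory latency is ignored, and all names are hypothetical. With 8 independent instructions the VLIW4 packer fills every slot, but a chain of 8 dependent instructions fills only one slot in four, while the SIMD model keeps its lanes busy in both cases.

```python
# Toy comparison of lane utilization: VLIW4 (Cayman-style) vs. GCN-style SIMD.
# Assumptions: ideal conditions, no memory latency, and a greedy packer that is
# only a stand-in for what a real VLIW shader compiler does.


def pack_vliw(deps, width=4):
    """Greedily pack one wavefront's instructions into VLIW bundles.

    deps maps each instruction (in program order) to the set of instructions it
    depends on. An instruction may only join a bundle once everything it depends
    on was issued in an earlier bundle.
    """
    issued = set()
    remaining = list(deps)
    bundles = []
    while remaining:
        bundle = [i for i in remaining if deps[i] <= issued][:width]
        if not bundle:
            raise ValueError("cyclic or unknown dependencies")
        issued.update(bundle)
        remaining = [i for i in remaining if i not in bundle]
        bundles.append(bundle)
    return bundles


def vliw_utilization(deps, width=4):
    bundles = pack_vliw(deps, width)
    return len(deps) / (len(bundles) * width)


def simd_utilization(num_instructions, wavefront_width=64, lanes=16):
    # Each instruction occupies all 16 lanes for 64 / 16 = 4 cycles,
    # so dependencies inside the wavefront never leave lanes empty here.
    cycles = num_instructions * (wavefront_width // lanes)
    return (num_instructions * wavefront_width) / (cycles * lanes)


independent = {f"i{n}": set() for n in range(8)}
chain = {f"i{n}": ({f"i{n - 1}"} if n else set()) for n in range(8)}

if __name__ == "__main__":
    print(vliw_utilization(independent), vliw_utilization(chain))  # 1.0 0.25
    print(simd_utilization(8))                                     # 1.0
```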

There are other aspects of GCN that influence its performance – the scalar unit plays a huge part – but in comparison to Cayman, this is the single biggest difference. By not taking advantage of ILP, but instead taking advantage of Thread Level Parallelism (TLP) in the form of executing more wavefronts at once, GCN will be able to deliver high compute performance and to do so consistently.

Bringing this all together, to make a complete GPU a number of these GCN CUs will be combined with the rest of the parts we’re accustomed to seeing on a GPU. A frontend is responsible for feeding the GPU, as it contains both the command processors (ACEs) responsible for feeding the CUs and the geometry engines responsible for geometry setup. Meanwhile coming after the CUs will be the ROPs that handle the actual render operations, the L2 cache, the memory controllers, and the various fixed function controllers such as the display controllers, PCIe bus controllers, Universal Video Decoder, and Video Codec Engine.
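
Because a GPU is assembled from identical CUs, its theoretical peak arithmetic rate is simply the per-CU rate multiplied out. The sketch below assumes one fused multiply-add (counted as 2 FLOPs) per ALU per clock; the function name is hypothetical, and plugging in 32 CUs at 925 MHz (the HD 7970's shipping configuration) is only an example. A peak figure like this says nothing about how consistently a workload reaches it, which is the property GCN is designed around.

```python
# Peak single-precision throughput scales linearly with the number of CUs.
# Assumption (not from the article): each ALU can retire one fused
# multiply-add, counted as 2 FLOPs, per clock.
SIMDS_PER_CU = 4
ALUS_PER_SIMD = 16
FLOPS_PER_ALU_PER_CLOCK = 2


def peak_gflops(compute_units: int, clock_mhz: float) -> float:
    alus = compute_units * SIMDS_PER_CU * ALUS_PER_SIMD
    return alus * FLOPS_PER_ALU_PER_CLOCK * clock_mhz / 1000  # MFLOPS -> GFLOPS


if __name__ == "__main__":
    # 32 CUs at 925 MHz, the Radeon HD 7970's shipping configuration
    print(peak_gflops(32, 925))  # 3788.8
```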

At the end of the day, if AMD has done their homework, GCN should significantly improve compute performance relative to VLIW4 while gaming performance should be just as good. Gaming shader operations will execute across the CUs in a much different manner than they did across VLIW, but they should do so at a similar speed. Games that use compute shaders, meanwhile, should benefit directly from the compute improvements. It’s by building out a GPU in this manner that AMD can make an architecture that’s significantly better at compute without sacrificing gaming performance, and this is why the resulting GCN architecture is balanced for both compute and graphics.

292 Comments


haukionkannel - Thursday, December 22, 2011

Well, the 7970 and other new GCN-based cards are not as driver dependent as the older Radeons. So the improvements are not going to be as great, but surely there will be some! The gap between the 580 or 6970 and the 7970 is going to get wider, but do not expect steps as big as the ones the 6970 got from new driver releases.

Ryan Smith - Thursday, December 22, 2011

This is actually an excellent point. Drivers will still play a big part in performance, but with GCN the shader compiler in particular is no longer the be-all and end-all of shader performance, as the CUs can do their own scheduling.

CeriseCogburn - Thursday, March 8, 2012

I hate to say it, but once you implement a 10% IQ cheat, it's tough to do it again and get away with it again in stock drivers.

I see the 797x has finally got something to control the excessive shimmering... that's about 5 years of fail finally contained... that I've more or less been told to ignore... until the 100+ gig zip download here... to prove AMD has at least finally dealt with one IQ epic fail... (of course all the reviewers claim there are no differences all the time, after pointing out the 10% cheat, then forgetting about it, having the shimmer, then "not noticing it in game", etc.).

I'm just GLAD AMD finally did something about that particular one of their problems.

Hallelujah!

Now some PhysX (fine, Bullet or OpenCL, but for Pete's sake NVIDIA is also ahead on both of those!) and AA that works even when cranking it to 4X plus would be great... hopefully their new arch CAN DO it.

My question is: if I get a couple of 7970s, am I going to regret it? How much still doesn't work and/or is inferior to NVIDIA... I guess I'll learn to ignore it all.

IceDread - Thursday, December 22, 2011

It's a good card, but for me it's not worth it to upgrade from a 5970 to a 7970. Looks like that would be about the same performance.

Scali - Thursday, December 22, 2011

This is exactly the reason why I made Endless City available for Radeons: http://scalibq.wordpress.com/2010/11/25/running-nv...

Could you run it and give some framerate numbers with FRAPS or such?

Boissez - Thursday, December 22, 2011

What many seem to be missing is that it is actually CHEAPER than the current street prices of the 3GB-equipped GTX 580. IOW, it offers superior performance, features, thermals, etc. at a lower price than the current generation.

What AMD should do is get a 1.5 GB model out at $450 ASAP.

SlyNine - Thursday, December 22, 2011

Looks like I'll be sticking with my 5870. I upgraded to it from two 8800GTs (which never functioned quite right in SLI because they were hitting over 100C even with aftermarket HSFs) and enjoyed over 2x the performance. When I upgraded from a 1900XT to the 8800GTs it was the same thing, and likewise 800XT to 1900XT, 9700 Pro to 800XT, and 4200 (NVIDIA) to 9700 Pro. The list goes all the way back to my first GeForce 256 card.

What's the point? My 5870 is two(!) generations behind the 7970, yet this would be the worst performance increase per dollar yet. Bummer, I really want something to drive a new 120Hz monitor, if I ever get one. But then that's kind of dependent on whether or not a single GPU can push it.

Finally - Thursday, December 22, 2011

Since when do top-of-the-line cards give you the best FPS/$?

For the last few months the HD 6870 and HD 6850 have been leading all those comparisons by quite some margin. The HD 7970 will not change that.

SlyNine - Thursday, December 22, 2011

If you read my post, you will notice that I'm comparing it to the improvements I have paid for in the past.

40-60% better than a two-year-old 5870 is much worse than anything I have seen so far, considering that it's not just one generation but two generations beyond, and for $500+ to boot. This is the worst upgrade for the cost I have seen...

SlyNine - Thursday, December 22, 2011

The 6870 would not lead in performance gained per upgrade dollar at all; it would be in the negatives for me.