AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

A Quick Refresher: Graphics Core Next

One of the things we’ve seen as a result of the shift from pure graphics GPUs to mixed graphics and compute GPUs is a change in how NVIDIA and AMD go about making their announcements and courting developers. With graphics GPUs there was no great need to discuss products or architectures ahead of time; a few choice developers would get engineering sample hardware a few months early, and everyone else would wait for the actual product launch. With the inclusion of compute capabilities, however, comes the need to approach launches in a different, more CPU-like manner.

As a result, both NVIDIA and AMD have begun revealing their architectures to developers roughly six months before the first products launch. This is very similar to how CPU launches are handled, where the basic principles of an architecture are publicly disclosed months in advance. All of this is necessary because the compute (and specifically, HPC) development pipeline is far more focused on optimizing code around a specific architecture in order to maximize performance. Whereas graphics development is still fairly abstracted by APIs, compute developers want to get down and dirty, and to do that they need to know as much about new architectures as possible, as soon as possible.

It’s for these reasons that AMD announced Graphics Core Next, the fundamental architecture behind AMD’s new GPUs, back in June of this year at the AMD Fusion Developer Summit. There are some implementation and product-specific details that we haven’t known until now, and of course very little was revealed about GCN’s graphics capabilities, but otherwise on the compute side AMD is delivering on exactly what they promised six months ago.

Since we’ve already covered the fundamentals of GCN in our GCN preview, and the Radeon HD 7970 is primarily a gaming product, we’re not going to go over GCN in depth here; I’d encourage you to read our preview to fully understand the intricacies of GCN. If you’re not interested in that, here’s a quick refresher on GCN with details pertinent to the 7970.

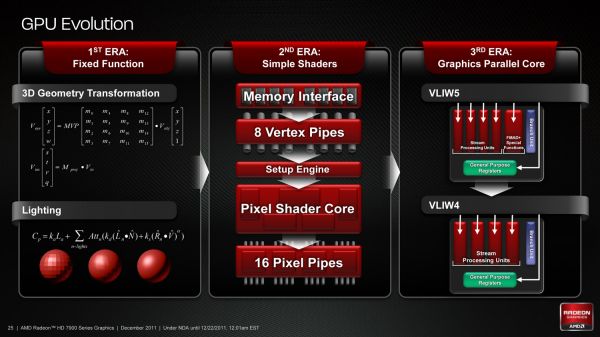

As we’ve already seen in some depth with the Radeon HD 6970, VLIW architectures are very good for graphics work, but they’re poor for compute work. VLIW designs excel in high instruction level parallelism (ILP) use cases, which graphics falls under quite nicely because in most operations pixels, and the color component channels of those pixels, are independently addressable data. In fact at the time of the Cayman launch AMD found that the average slot utilization factor for shader programs on their VLIW5 architecture was 3.4 out of 5, reflecting the fact that most shader operations were operating on pixels or other data types that could be scheduled together.
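To put that figure in perspective, here is a quick back-of-the-envelope sketch in Python. The function name `slot_utilization` is our own and purely illustrative; the only inputs taken from AMD's number are the 3.4 average and the 5-slot width of a VLIW5 bundle.

```python
def slot_utilization(avg_filled_slots: float, slots_per_bundle: int) -> float:
    """Fraction of VLIW issue slots doing useful work per instruction bundle."""
    if slots_per_bundle <= 0:
        raise ValueError("slots_per_bundle must be positive")
    return avg_filled_slots / slots_per_bundle


# AMD's quoted average for shader programs on VLIW5: 3.4 of 5 slots filled.
vliw5_utilization = slot_utilization(3.4, 5)
idle_slots = 5 - 3.4  # slots left empty in an average bundle

print(f"VLIW5 utilization: {vliw5_utilization:.0%}")      # 68%
print(f"Idle slots per bundle (average): {idle_slots:.1f}")  # 1.6
```

In other words, roughly a third of the ALU slots sat idle on an average shader, even for the graphics workloads that VLIW suits best.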

Meanwhile, at a hardware level VLIW is a unique design in that it’s the epitome of the “more is better” philosophy. AMD’s high stream processor counts with VLIW4 and VLIW5 are a result of VLIW being a very thin type of architecture that purposely uses many simple ALUs, as opposed to fewer complex units (e.g. Fermi). Furthermore, all of the scheduling for VLIW is done in advance by the compiler, so VLIW designs are in effect very dense collections of simple ALUs and cache.

The hardware traits of VLIW mean that for a VLIW architecture to work, the workloads need to map well to the architecture. Complex operations that the simple ALUs can’t handle are bad for VLIW, as are instructions that aren’t trivial to schedule together due to dependencies or other conflicts. As we’ve seen, graphics operations do map well to VLIW, which is why VLIW has been in use since the earliest pixel shader equipped GPUs. Even then graphics operations don’t achieve perfect utilization under VLIW, but that’s okay because VLIW designs are so dense that it’s not a big problem if they’re operating at under full efficiency.
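The effect of dependencies on slot utilization can be sketched with a toy model. The Python below is a simplified greedy bundle packer of our own invention, not AMD's shader compiler; the names `pack_bundles` and `utilization` and the two example workloads are hypothetical, and real compilers also have to deal with register pressure, slot restrictions, and instruction latencies that are ignored here.

```python
from typing import Dict, List, Set


def pack_bundles(deps: Dict[str, Set[str]], width: int) -> List[List[str]]:
    """Greedy static scheduling: each bundle takes up to `width` operations
    whose dependencies were all issued in earlier bundles."""
    done: Set[str] = set()
    pending = list(deps)  # dict order stands in for program order
    bundles: List[List[str]] = []
    while pending:
        ready = [op for op in pending if deps[op] <= done][:width]
        if not ready:
            raise ValueError("dependency cycle or unknown dependency")
        bundles.append(ready)
        done.update(ready)
        pending = [op for op in pending if op not in done]
    return bundles


def utilization(bundles: List[List[str]], width: int) -> float:
    """Filled slots divided by total slots across all bundles."""
    return sum(len(b) for b in bundles) / (len(bundles) * width)


# Graphics-like case: four independent color channels of one pixel.
pixel = {"r": set(), "g": set(), "b": set(), "a": set()}
# Compute-like case: a serial chain where each op needs the previous result.
chain = {"s0": set(), "s1": {"s0"}, "s2": {"s1"}, "s3": {"s2"}}

pixel_bundles = pack_bundles(pixel, width=4)
chain_bundles = pack_bundles(chain, width=4)

print(pixel_bundles, utilization(pixel_bundles, 4))  # one full bundle, 1.0
print(chain_bundles, utilization(chain_bundles, 4))  # four bundles, 0.25
```

With a VLIW4-style width of 4, the independent channel operations fill a single bundle, while the dependent chain needs four bundles with three of every four slots empty.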

When it comes to compute workloads however, the idiosyncrasies of VLIW start to become a problem. “Compute” covers a wide range of workloads and algorithms; graphics algorithms may be rigidly defined, but compute workloads can be virtually anything. On the one hand there are compute workloads such as password hashing that are every bit as embarrassingly parallel as graphics workloads are, meaning these map well to existing VLIW architectures. On the other hand there are tasks like texture decompression which are parallel but not embarrassingly so, which means they map poorly to VLIW architectures. At one extreme you have a highly parallel workload, and at the other you have an almost serial workload.

Cayman, A VLIW4 Design

So long as you only want to handle the highly parallel workloads, VLIW is fine. But using VLIW as the basis of a compute architecture is going to limit what tasks your processor is sufficiently good at. If you want to handle a wider spectrum of compute workloads you need a more general purpose architecture, and this is the situation AMD faced.

But why does AMD want to chase compute in the first place when they already have a successful graphics GPU business? In the long term GCN plays a big part in AMD’s Fusion plans, but in the short term there’s a much simpler answer: because they have to.

In Q3’2011 NVIDIA’s Professional Solutions Business (Quadro + Tesla) had an operating income of $95M on $230M in revenue. Their (consumer) GPU business had an operating income of $146M, but on a much larger $644M in revenue. Professional products have much higher profit margins and it’s a growing business, particularly the GPU computing side. As it stands NVIDIA and AMD may have relatively equal shares of the discrete GPU market, but it’s NVIDIA that makes all the money. For AMD’s GPU business it’s no longer enough to focus only on graphics; they need a larger piece of the professional product market to survive and thrive in the future. And thus we have GCN.
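The margin gap is easy to check from the figures quoted above. This is a minimal sketch; the helper name `operating_margin` is ours, and the inputs are simply the operating income and revenue numbers from the paragraph, in millions.

```python
def operating_margin(operating_income_m: float, revenue_m: float) -> float:
    """Operating income as a fraction of revenue (both in millions)."""
    if revenue_m <= 0:
        raise ValueError("revenue must be positive")
    return operating_income_m / revenue_m


# NVIDIA Q3'2011 figures as quoted in the text above.
professional_margin = operating_margin(95, 230)   # Quadro + Tesla
consumer_margin = operating_margin(146, 644)      # consumer GPUs

print(f"Professional: {professional_margin:.1%}")  # 41.3%
print(f"Consumer GPU: {consumer_margin:.1%}")      # 22.7%
```

On these numbers the professional business earns close to twice the operating margin of the consumer business, on about a third of the revenue.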

292 Comments


chiddy - Thursday, December 22, 2011

Ryan,

Thanks for the great review. My only gripe - and I've been noticing this for a while - is the complete non-mention of drivers or driver releases for Linux/Unix and/or their problems.

For example, Catalyst drivers exhibit graphical corruption when using the latest version (Version 3) of Gnome Desktop Environment since its release before April. This is a major bug which required most users of AMD/ATI GPUs to either switch desktop environments, switch to Nvidia or Intel GPUs, or use the open source drivers which lack many features. A partial fix appeared in Catalyst 11.9 making Gnome3 usable but there are still elements of screen corruption on occasion. (Details in the "non-official" AMD run bugzilla http://ati.cchtml.com/show_bug.cgi?id=99 ).

AMD have numerous other issues with Linux Catalyst drivers including a buggy OpenGL implementation, etc.

Essentially, as a hardware review, a quick once-over with non-Microsoft OSs would help a lot, especially for products which are marketed as supporting such platforms.

Regards,

kyuu - Thursday, December 22, 2011

Why in the heck would they mention Linux drivers and their issues in an article covering the (paper) release and preliminary benchmarking of AMD's new graphics cards? It has nada to do with the subject at hand.

Besides, hardly anyone cares, and those that do care already know.

chiddy - Thursday, December 22, 2011

And I guess that AMD GPUs are sold as "Windows Only"?

Thanks for your informative insight.

MrSpadge - Thursday, December 22, 2011

There are no games for *nix and everything always depends on your distribution. The problems are so diverse and numerous.. it would take an entire article to briefly touch this field.

Exaggerating, but I really wouldn't be interested in endless *nix troubleshooting. Hell, I can't even get nVidia 2D acceleration in CentOS..

chiddy - Thursday, December 22, 2011

You have a valid point on that front and I agree, nor would I expect such an article any time soon.

However, on the other hand, one would at the very least expect a GPU using manufacturer released drivers to load a usable desktop. This is an issue that was distro agnostic and instantly noticeable, and only affected AMD hardware, as do most *nix GPU driver issues!

If all that was done during a new GPU review was fire it up in any *nix distribution of choice for just a few minutes (even Ubuntu as I think it's the most popular at the moment) to ensure that the basics work it would still be a great help.

I will have to accept though that there is precious little interest!

Ryan Smith - Thursday, December 22, 2011

Hi Chiddy;

It's a fair request, so I'll give you a fair answer.

The fact of the matter is that Linux drivers are not a top priority for either NVIDIA or AMD. Neither party makes Linux drivers available for our launch reviews, so I wouldn't be able to test new cards at launch. Not to speak for either company, but how many users are shelling out $550 to run Linux? Cards like the 7970 have a very specifically defined role: Windows gaming video card, and their actions reflect this.

At best we'd be able to look at these issues at some point after the launch when AMD or NVIDIA have added support for the new product to their respective Linux drivers. But that far after the product's launch and for such a small category of users (there just aren't many desktop Linux users these days), I'm not sure it would be worth the effort on our part.

chiddy - Friday, December 23, 2011

Hi Ryan,

Thanks very much for taking the time to respond. I fully appreciate your position, particularly as the posts above very much corroborate the lack of interest!

Thanks again for the response, I very much appreciate the hard work yourself and the rest of the AT team are doing, and its quality speaks for itself in the steady increase in readers over the years.

If you do however ever find the time to do a brief piece on *nix GPU support after launch of the next generation nVidia and AMD GPUs that would be wonderful - and even though one would definitely not buy a top level GPU for *nix, it would very much help those of us who are dual booting (in my case Windows for gaming / Scientific Linux for work), and somewhat remove the guessing game during purchase time. If not though I fully understand :-).

Regards,

Ali

CeriseCogburn - Thursday, March 8, 2012

Nvidia consistently wins over and over again in this area, so it's "of no interest", like PhysX...

AmdInside - Thursday, December 22, 2011

I won't be getting much sleep tonight since that article took a long time to read (can't imagine how long it must have taken to write up). Great article as usual. While it has some very nice features, all in all, it doesn't make me regret my purchase of a Geforce GTX 580 a couple of months ago. Especially since I mainly picked it up for Battlefield 3.

ET - Thursday, December 22, 2011

The Cayman GPUs got quite a performance boost from drivers over time, gaining on NVIDIA's GPUs since their launch. The difference in architecture between the 79x0 and 69x0 is greater than that between the 69x0 and 58x0, so I'm sure there's quite a bit of room for performance improvement in games.

Have to say though that I really hope AMD stops increasing the card size each generation.