AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

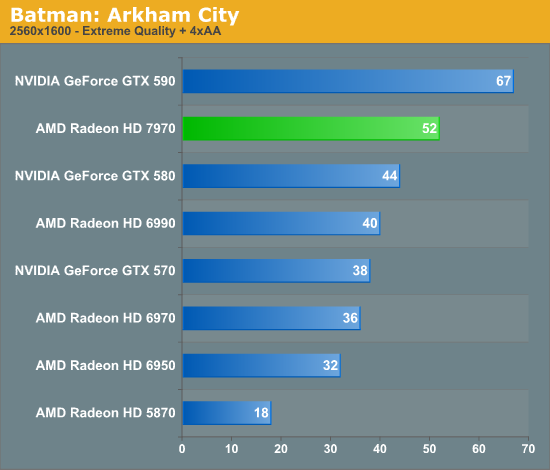

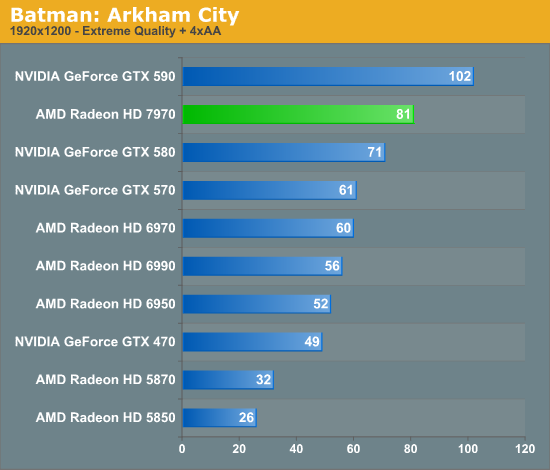

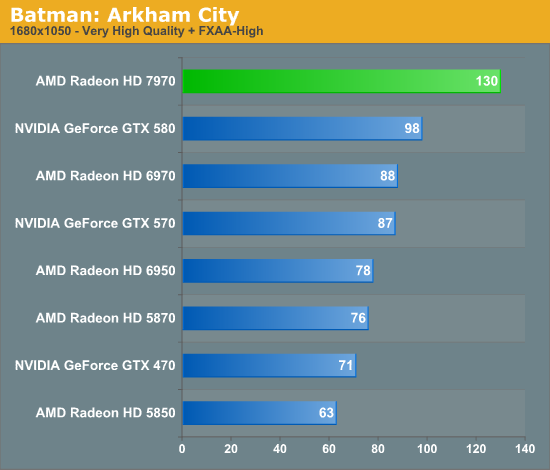

Batman: Arkham City

After a rocky launch last month, Rocksteady finally got their DirectX 11 problems sorted out for Batman: Arkham City earlier this month. Batman: Arkham City is loosely based on Unreal Engine 3, while the DirectX 11 functionality was apparently developed in-house. With the addition of these features Batman is a far more GPU-demanding game than its predecessor was, particularly with tessellation cranked up to high.

At Extreme settings Batman is quite daunting for our entire GPU lineup at 2560. Nothing except the GTX 590 can crack 60fps, though the 7970 begins to come close at 52fps. Relative to NVIDIA’s lineup Batman ends up being one of the weaker games for the 7970, with the 7970 only taking an 18% lead over the GTX 580 at 2560. As for the 6970, the 7970 has another very strong showing opposite AMD’s previous generation, beating the 6970 by 44%.

At 1920 we’re still using Extreme settings and the story is much the same, though the 7970’s lead drops a bit more. Against the GTX 580 it’s now only 14% faster, and against the 6970 it’s 35% faster. Things do eventually pick up at 1680 when we back off to Very High settings and stop using MSAA, at which point the 7970 takes a surprising 32% lead over the GTX 580 while the lead over the 6970 jumps back up to 47%.
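As a rough illustration of how the percentage leads quoted above relate to raw frame rates, here is a minimal sketch. The only measured figure it uses is the 7970's 52fps at 2560; the GTX 580 and 6970 frame rates it prints are back-calculated from the quoted 18% and 44% leads, so treat them as approximations rather than chart values. The function names are made up for this example.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how much faster card A is than card B, in percent."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_fps(fps_a: float, lead_percent: float) -> float:
    """Back out card B's frame rate from card A's rate and A's lead over B."""
    return fps_a / (1.0 + lead_percent / 100.0)


# 52 fps is the 7970's quoted result at 2560. The two values below are
# back-calculated from the quoted leads; they are not measured results.
gtx580_fps = implied_fps(52.0, 18.0)  # roughly 44 fps
hd6970_fps = implied_fps(52.0, 44.0)  # roughly 36 fps

print(f"Implied GTX 580: {gtx580_fps:.1f} fps")
print(f"Implied 6970:    {hd6970_fps:.1f} fps")
print(f"Round trip lead over GTX 580: {percent_lead(52.0, gtx580_fps):.1f}%")
```

Because the leads are rounded to whole percentages in the text, the implied frame rates can be off by a few tenths of a frame per second.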

Looking at all of our cards it’s really the 5870 that tells the whole story. Tessellation is a large factor in Batman’s performance, and as a result the partially tessellation-constrained 5870 absolutely struggles even at 1920. Consequently this is further proof that AMD was able to get a great deal of additional performance out of their geometry engines even with the 2 triangle/clock limit.

292 Comments


Ryan Smith - Thursday, December 22, 2011 - link

Since 1920x1200 has already been commented on elsewhere I'm just going to jump right to your comment on minimum FPS.

I completely agree, and we're trying to add it where it makes sense. A lot of benchmarks are wildly inconsistent about their minimum FPS, largely thanks to the fact that minimum FPS is an instantaneous data point. When your values vary by 20%+ per run (as minimums often do), even averaging repeated trials isn't nearly accurate enough to present meaningful results.
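To make the point about run-to-run variance concrete, here is a small sketch that compares the spread of average and minimum frame rates across repeated runs. The run data is invented purely for illustration and is not taken from any benchmark in this review; `relative_spread` is a hypothetical helper.

```python
from statistics import mean

# Hypothetical results (fps) for one benchmark repeated five times.
# These numbers are made up for illustration; they are not review data.
runs = [
    {"avg": 52.1, "min": 31.0},
    {"avg": 51.8, "min": 38.0},
    {"avg": 52.4, "min": 27.0},
    {"avg": 51.9, "min": 35.0},
    {"avg": 52.3, "min": 33.0},
]


def relative_spread(values):
    """Range of the values (max - min) as a percentage of their mean."""
    return (max(values) - min(values)) / mean(values) * 100.0


avg_spread = relative_spread([r["avg"] for r in runs])
min_spread = relative_spread([r["min"] for r in runs])

print(f"Spread of average fps across runs: {avg_spread:.1f}%")
print(f"Spread of minimum fps across runs: {min_spread:.1f}%")
```

With data shaped like this, the averages agree to within about a percent while the minimums swing by well over 20%, which is the kind of inconsistency described above.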

CeriseCogburn - Thursday, March 8, 2012 - link

HardOCP shows long in game fps per second charts that show dips and bottom outs are more than one momentary lapse and often are extended time periods of lowest frame rate runs, so I have to respectfully disagree.

Perhaps the fault is fraps can show you a single instance of lowest frame rate number, and hence it's the analysis that utterly fails - given the time constraints that were made obvious, it is also clear that the extra work it would take for an easily reasoned and reasonable result that is actually of worthy accuracy is not in the cards here.

thunderising - Thursday, December 22, 2011 - link

Okay. This card has left me thrilled, but wanting for more. Why?

Well, for example, every reviewer has hit the CCC Core and Memory Max Limits, which turns into a healthy 10-12% performance boost, all for 10W.

What, Legit Reviews got it to 1165MHz core and 6550MHz memory for a 21-24% increase in performance. Now that's HUGE!

I think AMD could have gone for something like this with the final clocks, to squeeze out every last bit of performance from this amazing card:

Core - 1050 MHz

Memory - 1500 MHz (6000MHz QDR)

This was not only easily achievable, but would have placed this card at an 8-10% increase in performance all for a mere <10W rise in Load Power.

Hoping for AIBs like Sapphire to show their magic! HD7970 Toxic, MmmmmmM...

Otherwise, fantastic card I say.
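For readers who want to check the clock arithmetic in the comment above, here is a hedged sketch. The 4x multiplier reflects the "QDR" figure the commenter gives for the memory; the reference clocks of 925 MHz core and 1375 MHz memory are not stated in the comment and are supplied here as an assumption. Note that a clock increase is not the same thing as a performance increase, so this says nothing about the 8-10% estimate itself.

```python
TRANSFERS_PER_CLOCK = 4  # the "QDR" effective-rate multiplier used in the comment

# Assumption: reference HD 7970 clocks, not stated in the comment itself.
STOCK_CORE_MHZ = 925
STOCK_MEMORY_MHZ = 1375


def effective_rate_mhz(memory_clock_mhz: float) -> float:
    """Effective memory data rate for a given memory clock."""
    return memory_clock_mhz * TRANSFERS_PER_CLOCK


def percent_increase(new: float, old: float) -> float:
    """Relative increase of new over old, in percent."""
    return (new / old - 1.0) * 100.0


proposed_core, proposed_memory = 1050, 1500  # clocks proposed in the comment

print(f"Effective memory rate: {effective_rate_mhz(proposed_memory):.0f} MHz")
print(f"Core clock increase:   {percent_increase(proposed_core, STOCK_CORE_MHZ):.1f}%")
print(f"Memory clock increase: {percent_increase(proposed_memory, STOCK_MEMORY_MHZ):.1f}%")
```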

Death666Angel - Friday, December 23, 2011 - link

Maybe they'll do a 4870/4890 thing again? Launch the HD7970 and HD7970X2 and then launch a HD7990 with higher clocks later to counter nVidia.... Who knows. :-)

Mishera - Sunday, December 25, 2011 - link

They've been doing it for quite some time now. Their plan has been to release a chip balancing die size, performance, and cost. Then later to compete on high end release a dual-chip card. Anand wrote on this a while ago with the rv770 story (http://www.anandtech.com/show/2679).

Even looking at the picture of chip sizes, the 7970 is still a reasonable size. And this really was a brilliant move as though Nvidia has half the marketshare and does make a lot of money from their cards, their design philosophy has been hurting them a lot from a business standpoint.

On a side note, Amd really made a great choice by choosing to wait until now to push for general computing. Though that probably means more people to support development and drivers, which means more hiring which is the opposite way Amd has been going. It will be interesting to see how this dichotomy will develop in the future. But right now kudos to Amd.

CeriseCogburn - Thursday, March 8, 2012 - link

Does that mean amd is abandoning gamers as we heard the scream whilst Nvidia was doing thus?

I don't quite get it - now what nvidia did that hurt them, is praise worthy since amd did it, finally.

Forgive me as I scoff at the immense dichotomy...

"Perfect ripeness at the perfect time" - sorry not buying it....

privatosan - Thursday, December 22, 2011 - link

PRT is a nice feature, but there is a failure in the article:

'For AMD’s technology each tile will be 64KB, which for an uncompressed 32bit texture would be enough room for a 4K x 4K chunk.'

The tile would be 128 x 128 texels; 4K x 4K would be quite big for a tile.
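The tile-size arithmetic in this comment is easy to verify. The sketch below assumes a 64KB tile of 65,536 bytes and an uncompressed 32-bit format at 4 bytes per texel; the function names are made up for this example.

```python
import math

TILE_BYTES = 64 * 1024  # 64KB tile size quoted from the article


def square_tile_side(tile_bytes: int, bytes_per_texel: int) -> int:
    """Side length, in texels, of the largest square that fits in one tile."""
    return math.isqrt(tile_bytes // bytes_per_texel)


def texture_bytes(width: int, height: int, bytes_per_texel: int) -> int:
    """Uncompressed size in bytes of a width x height texture."""
    return width * height * bytes_per_texel


side = square_tile_side(TILE_BYTES, 4)   # 32-bit texels are 4 bytes each
chunk = texture_bytes(4096, 4096, 4)     # a 4K x 4K chunk at 32 bits per texel

print(f"64KB tile at 32bpp: {side} x {side} texels")
print(f"4K x 4K at 32bpp:   {chunk} bytes ({chunk / TILE_BYTES:.0f} tiles)")
```

At 4 bytes per texel a 64KB tile holds 16,384 texels, which is 128 x 128, while a 4K x 4K chunk needs 67,108,864 bytes, or 1,024 such tiles.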

futrtrubl - Thursday, December 22, 2011 - link

I was going to comment on that too. A 4k x 4k x 32bit (4byte) texture chunk would be around 67MB uncompressed. For a 32bit texture you could only fit a 128x128 array in a 64KB chunk. An 8bit/pixel texture could be 4k*4k

Stonedofmoo - Thursday, December 22, 2011 - link

Thanks for the review. A request though...

To the hardware sites doing these reviews, many of us in this day and age run dual monitor or more. It always frustrates me in these reviews that we get a long write up on the power saving techniques the new cards use, and never any mention of whether it helps those of us running more than one display.

For those not in the know, if you run more than one display on all the current generations the cards do NOT downclock the GPU and memory nearly as much as they do on single monitor configurations. This burns quite a lot more power and obviously kicks out more heat. No site ever mentions this which is odd considering so many of us have more than one display these days.

I would happily buy the card that finally overcomes this and actually finds a way of knocking back the clocks with multi-monitor setups. Is the new Radeon 7xxx series that card?

Galcobar - Thursday, December 22, 2011 - link

It's in the article, on the page entitled "Meet the Radeon 7970."

Ryan also replied to a similar comment by quoting the paragraph addressing multi-monitor setups and power consumption at the top of the page of comments.

That's two mentions, and the answer to your question.