AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

A Quick Refresher, Cont.

Having established what’s bad about VLIW as a compute architecture, let’s discuss what makes a good compute architecture. The most fundamental aspect of compute is that developers want stable and predictable performance, something that VLIW didn’t lend itself to because it was dependency limited. Architectures that can’t work around dependencies will see their performance vary due to those dependencies. Consequently, if you want an architecture with stable performance that’s going to be good for compute workloads then you want an architecture that isn’t impacted by dependencies.

Ultimately dependencies and ILP go hand-in-hand. If you can extract ILP from a workload, then your architecture is by definition bursty. An architecture that can’t extract ILP may not be able to achieve the same level of peak performance, but it will not burst and hence it will be more consistent. This is the guiding principle behind NVIDIA’s Fermi architecture; GF100/GF110 have no ability to extract ILP, and developers love it for that reason.

So with those design goals in mind, let’s talk GCN.

VLIW is a traditional and well proven design for parallel processing. But it is not the only traditional and well proven design for parallel processing. For GCN AMD will be replacing VLIW with what’s fundamentally a Single Instruction Multiple Data (SIMD) vector architecture (note: technically VLIW is a subset of SIMD, but for the purposes of this refresher we’re considering them to be different).

A Single GCN SIMD

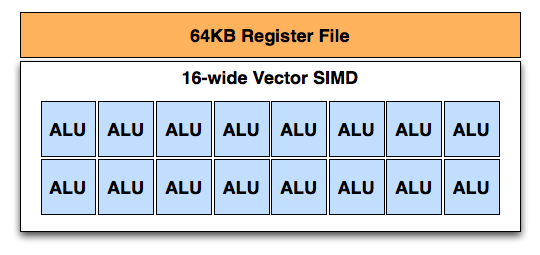

At the most fundamental level AMD is still using simple ALUs, just like Cayman before it. In GCN these ALUs are organized into a single SIMD unit, the smallest unit of work for GCN. A SIMD is composed of 16 of these ALUs, along with a 64KB register file for the SIMD to keep data in.
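To put the 64KB figure in perspective, here is a rough back-of-the-envelope sketch of how many wavefront-wide registers one SIMD's register file could hold. The 32-bit register width and the function names are assumptions made for illustration; the 64KB size and the 64-wide wavefront are the article's figures.

```python
# Back-of-the-envelope sizing for one GCN SIMD's register file.
# Assumption (not stated in the article): each register holds one 32-bit
# value per work-item of a wavefront.

REGISTER_FILE_BYTES = 64 * 1024  # 64KB per SIMD, from the article
WAVEFRONT_WIDTH = 64             # operations per wavefront, from the article
BYTES_PER_VALUE = 4              # assumed 32-bit registers


def vector_registers_per_simd() -> int:
    """Number of wavefront-wide registers that fit in one register file."""
    bytes_per_vector_register = WAVEFRONT_WIDTH * BYTES_PER_VALUE
    return REGISTER_FILE_BYTES // bytes_per_vector_register


def max_resident_wavefronts(registers_per_wavefront: int) -> int:
    """Wavefronts whose registers fit at once, ignoring other hardware limits."""
    return vector_registers_per_simd() // registers_per_wavefront
```

Under these assumptions, a shader that needs more registers per wavefront leaves room for fewer wavefronts to be resident on the SIMD at the same time.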

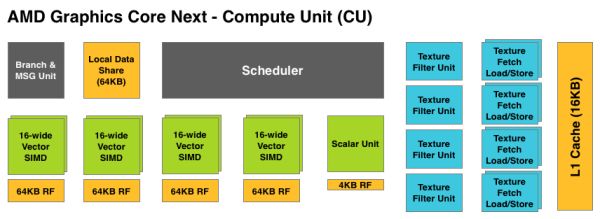

Above the individual SIMD we have a Compute Unit, the smallest fully independent functional unit. A CU is composed of 4 SIMD units, a hardware scheduler, a branch unit, L1 cache, a local data share, 4 texture units (each with 4 texture fetch load/store units), and a special scalar unit. The scalar unit is responsible for all of the arithmetic operations the simple ALUs can’t do or won’t do efficiently, such as conditional statements (if/then) and transcendental operations.

Because the smallest unit of work is the SIMD and a CU has 4 SIMDs, a CU works on 4 different wavefronts at once. As wavefronts are still 64 operations wide, each cycle a SIMD will complete ¼ of the operations on its respective wavefront, and after 4 cycles the current instruction for the active wavefront is completed.
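The arithmetic behind that 4-cycle cadence can be written out as a small sketch. The constants are the article's figures; the function names are hypothetical, and the model ignores everything except ALU width.

```python
# Toy timing model for one GCN Compute Unit, using the article's figures.
ALUS_PER_SIMD = 16    # ALUs in one SIMD
SIMDS_PER_CU = 4      # SIMDs in one Compute Unit
WAVEFRONT_WIDTH = 64  # operations per wavefront


def cycles_per_instruction() -> int:
    """Cycles one SIMD needs to run one instruction over a full wavefront."""
    return WAVEFRONT_WIDTH // ALUS_PER_SIMD


def operations_done_after(cycles: int) -> int:
    """Operations of the current instruction completed after `cycles` cycles."""
    return min(cycles * ALUS_PER_SIMD, WAVEFRONT_WIDTH)


def cu_operations_per_cycle() -> int:
    """Operations a whole CU completes per cycle when all 4 SIMDs are busy."""
    return ALUS_PER_SIMD * SIMDS_PER_CU
```

Each SIMD finishes 16 operations per cycle, so a 64-wide wavefront takes 4 cycles per instruction, while the CU as a whole completes 64 operations per cycle spread across 4 different wavefronts.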

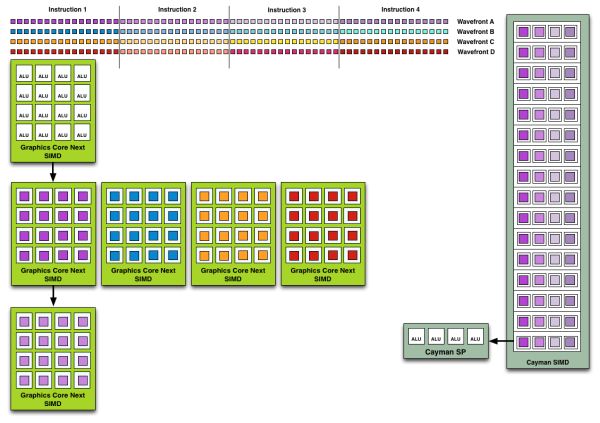

Cayman by comparison would attempt to execute multiple instructions from the same wavefront in parallel, rather than executing a single instruction from multiple wavefronts. This is where Cayman got bursty – if the instructions were in any way dependent, Cayman would have to let some of its ALUs go idle. GCN on the other hand does not face this issue: because each SIMD handles a single instruction at a time from its own wavefront, the SIMDs are in no way attempting to take advantage of ILP, and their performance will be very consistent.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16

There are other aspects of GCN that influence its performance – the scalar unit plays a huge part – but in comparison to Cayman, this is the single biggest difference. By not taking advantage of ILP, but instead taking advantage of Thread Level Parallelism (TLP) in the form of executing more wavefronts at once, GCN will be able to deliver high compute performance and to do so consistently.
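The ILP versus TLP contrast can be illustrated with a deliberately simplified model. This is a sketch, not AMD's compiler or scheduler: the greedy packer, the dependency-list format, and all function names are assumptions, and the model ignores memory latency, branching, and the scalar unit.

```python
from typing import List, Sequence


def pack_vliw(deps: Sequence[Sequence[int]], width: int = 4) -> List[int]:
    """Greedily place each instruction in the earliest bundle that respects
    its dependencies. deps[i] lists earlier instructions that i depends on."""
    bundle_of: List[int] = []  # bundle index chosen for each instruction
    fill: List[int] = []       # slots already used in each bundle
    for instr_deps in deps:
        # An instruction must issue after every instruction it depends on.
        bundle = max((bundle_of[d] + 1 for d in instr_deps), default=0)
        while bundle < len(fill) and fill[bundle] >= width:
            bundle += 1        # bundle is full, try the next one
        while len(fill) <= bundle:
            fill.append(0)
        fill[bundle] += 1
        bundle_of.append(bundle)
    return bundle_of


def vliw_utilization(deps: Sequence[Sequence[int]], width: int = 4) -> float:
    """Fraction of VLIW slots doing useful work for one instruction stream."""
    if not deps:
        return 0.0
    bundles = max(pack_vliw(deps, width)) + 1
    return len(deps) / (bundles * width)


def simd_utilization(deps: Sequence[Sequence[int]]) -> float:
    """In this model a SIMD runs one instruction at a time across all lanes,
    so dependencies between instructions never leave a lane idle."""
    return 1.0 if deps else 0.0


independent = [[], [], [], []]  # four unrelated instructions
chain = [[], [0], [1], [2]]     # each instruction needs the previous result
```

In this toy model `vliw_utilization(independent)` is 1.0 while `vliw_utilization(chain)` drops to 0.25, whereas `simd_utilization` stays at 1.0 for both. That gap between the best and worst case is the burstiness the article describes.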

Bringing this all together, to make a complete GPU a number of these GCN CUs will be combined with the rest of the parts we’re accustomed to seeing on a GPU. A frontend is responsible for feeding the GPU, as it contains both the command processors (ACEs) responsible for feeding the CUs and the geometry engines responsible for geometry setup. Meanwhile coming after the CUs will be the ROPs that handle the actual render operations, the L2 cache, the memory controllers, and the various fixed function controllers such as the display controllers, PCIe bus controllers, Universal Video Decoder, and Video Codec Engine.

At the end of the day if AMD has done their homework GCN should significantly improve compute performance relative to VLIW4 while gaming performance should be just as good. Gaming shader operations will execute across the CUs in a much different manner than they did across VLIW, but they should do so at a similar speed. And for games that use compute shaders, they should directly benefit from the compute improvements. It’s by building out a GPU in this manner that AMD can make an architecture that’s significantly better at compute without sacrificing gaming performance, and this is why the resulting GCN architecture is balanced for both compute and graphics.

292 Comments

Esbornia - Thursday, December 22, 2011 - link

Fan boy much?

CeriseCogburn - Thursday, March 8, 2012 - link

Finally, piroroadkill, Esbornia - the gentleman ericore merely stated what all the articles here have done as analysis while the radeonite fans repeated it ad infinitum screaming nvidia's giant core count doesn't give the percentage increase it should considering transistor increase.

Now, when it's amd's turn, we get ericore under 3 attacks in a row...---

So you three all take it back concerning fermi ?

maverickuw - Thursday, December 22, 2011 - link

I want to know when the 7950 will come out and hopefully it'll come out at $400

duploxxx - Thursday, December 22, 2011 - link

Only the fact that ATI is able to bring a new architecture on a new process and result in such a performance increase for that power consumption is a clear winner.

looking at the past with Fermy 1st launch and even Cayman VLIW4 they had much more issues to start with.

nice job, while probably nv680 will be more performing it will take them at least a while to release that product and it will need to be also huge in size.

ecuador - Thursday, December 22, 2011 - link

Nice review, although I really think testing 1680x1050 for a $550 is a big waste of time, which could have to perhaps multi-monitor testing etc.

Esbornia - Thursday, December 22, 2011 - link

Its Anand you should expect this kind of shiet.

Ryan Smith - Thursday, December 22, 2011 - link

In this case the purpose of 1680 is to allow us to draw comparisons to low-end cards and older cards, which is something we consider to be important. The 8800GT and 3870 in particular do not offer meaningful performance at 1920.

poohbear - Thursday, December 22, 2011 - link

Why do you bencmark @ 1920x1200 resolution? according to the Steam December survey only 8% of gamers have that resolution, whereas 24% have 1920x1080 and 18% use 1680x1050 (the 2 most popular). Also, minimum FPS would be nice to know in your benchmarks, that is really useful for us! just a heads up for next time u benchmark a video card! Otherwise nice review! lotsa good info at the beginning!:)

Galcobar - Thursday, December 22, 2011 - link

Page 4, comments section.

Esbornia - Thursday, December 22, 2011 - link

They dont want to show the improvements on min FPS cause they hate AMD, you should know that already.