AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

A Quick Refresher, Cont

Having established what’s bad about VLIW as a compute architecture, let’s discuss what makes a good compute architecture. The most fundamental aspect of compute is that developers want stable and predictable performance, something that VLIW didn’t lend itself to because it was dependency limited. Architectures that can’t work around dependencies will see their performance vary due to those dependencies. Consequently, if you want an architecture with stable performance that’s going to be good for compute workloads then you want an architecture that isn’t impacted by dependencies.

Ultimately dependencies and ILP go hand-in-hand. If you can extract ILP from a workload, then your architecture is by definition bursty. An architecture that can’t extract ILP may not be able to achieve the same level of peak performance, but it will not burst and hence it will be more consistent. This is the guiding principle behind NVIDIA’s Fermi architecture; GF100/GF110 have no ability to extract ILP, and developers love it for that reason.

So with those design goals in mind, let’s talk GCN.

VLIW is a traditional and well proven design for parallel processing. But it is not the only traditional and well proven design for parallel processing. For GCN AMD will be replacing VLIW with what’s fundamentally a Single Instruction Multiple Data (SIMD) vector architecture (note: technically VLIW is a subset of SIMD, but for the purposes of this refresher we’re considering them to be different).

A Single GCN SIMD

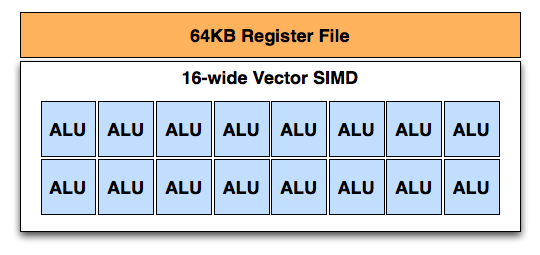

At the most fundamental level AMD is still using simple ALUs, just like Cayman before it. In GCN these ALUs are organized into a single SIMD unit, the smallest unit of work for GCN. A SIMD is composed of 16 of these ALUs, along with a 64KB register file for the SIMD to keep data in.

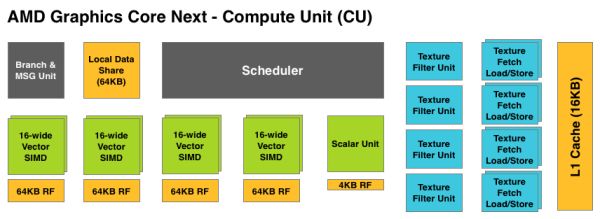

Above the individual SIMD we have a Compute Unit, the smallest fully independent functional unit. A CU is composed of 4 SIMD units, a hardware scheduler, a branch unit, L1 cache, a local data share, 4 texture units (each with 4 texture fetch load/store units), and a special scalar unit. The scalar unit is responsible for all of the arithmetic operations the simple ALUs can’t do or won’t do efficiently, such as conditional statements (if/then) and transcendental operations.

Because the smallest unit of work is the SIMD and a CU has 4 SIMDs, a CU works on 4 different wavefronts at once. As wavefronts are still 64 operations wide, each cycle a SIMD will complete ¼ of the operations on its respective wavefront, and after 4 cycles the current instruction for the active wavefront is completed.
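The cycle arithmetic above can be sketched in a few lines of Python. This is only a toy model built from the figures quoted in the text (64-wide wavefronts, 16 ALUs per SIMD, 4 SIMDs per CU); the function names are hypothetical, and real hardware latency, stalls, and scheduling are ignored.

```python
# Figures taken from the article's description of GCN.
WAVEFRONT_WIDTH = 64  # operations in one wavefront
ALUS_PER_SIMD = 16    # simple ALUs in one SIMD
SIMDS_PER_CU = 4      # SIMDs in one Compute Unit


def cycles_per_instruction(wavefront_width=WAVEFRONT_WIDTH, alus=ALUS_PER_SIMD):
    """Cycles one SIMD needs to push a single instruction through a whole wavefront."""
    return -(-wavefront_width // alus)  # ceiling division


def cu_schedule(num_instructions, simds=SIMDS_PER_CU):
    """Hypothetical helper: list (cycle, simd, instruction, quarter) steps for a toy CU.

    Each SIMD owns one wavefront and works through its instructions in order,
    one quarter of the wavefront per cycle. Latency and stalls are ignored.
    """
    per_instr = cycles_per_instruction()
    steps = []
    for cycle in range(num_instructions * per_instr):
        for simd in range(simds):
            steps.append((cycle, simd, cycle // per_instr, cycle % per_instr))
    return steps


if __name__ == "__main__":
    print(cycles_per_instruction())        # cycles per wavefront instruction
    print(SIMDS_PER_CU * ALUS_PER_SIMD)    # ALU operations a CU completes per cycle
    for step in cu_schedule(num_instructions=1):
        print(step)
```

With the article's numbers this gives 4 cycles per wavefront instruction and 64 ALU operations per CU per cycle, spread across 4 different wavefronts rather than across 4 instructions of one wavefront.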

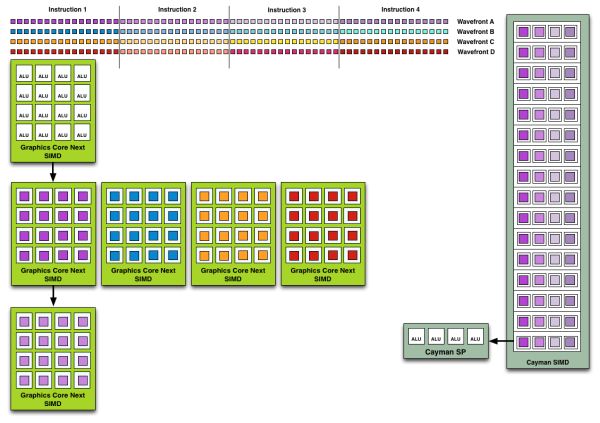

Cayman by comparison would attempt to execute multiple instructions from the same wavefront in parallel, rather than executing a single instruction from multiple wavefronts. This is where Cayman got bursty – if the instructions were in any way dependent, Cayman would have to let some of its ALUs go idle. GCN on the other hand does not face this issue. Because each SIMD handles single instructions from different wavefronts, the SIMDs are in no way attempting to take advantage of ILP, and their performance will be very consistent.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16

There are other aspects of GCN that influence its performance – the scalar unit plays a huge part – but in comparison to Cayman, this is the single biggest difference. By not taking advantage of ILP, but instead taking advantage of Thread Level Parallelism (TLP) in the form of executing more wavefronts at once, GCN will be able to deliver high compute performance and to do so consistently.
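To make the ILP versus TLP contrast concrete, here is a rough Python sketch of ALU utilization under both schemes. It is a toy model, not AMD's actual compiler or scheduler: `pack_vliw` is a hypothetical greedy in-order packer standing in for a VLIW4 compiler, and `simd_utilization` assumes no branch divergence and that a wavefront is always ready to issue.

```python
def pack_vliw(deps, width=4):
    """Greedily pack one thread's instructions into VLIW bundles, in program order.

    deps[i] is the set of earlier instruction indices that instruction i reads.
    An instruction cannot share a bundle with anything it depends on.
    """
    bundles = []
    current = []
    for i, needed in enumerate(deps):
        # Start a new bundle when the current one is full or holds a dependency.
        if len(current) == width or any(j in current for j in needed):
            bundles.append(current)
            current = []
        current.append(i)
    if current:
        bundles.append(current)
    return bundles


def vliw_utilization(deps, width=4):
    """Fraction of VLIW slots filled; varies with the dependency pattern."""
    if not deps:
        return 0.0
    return len(deps) / (width * len(pack_vliw(deps, width)))


def simd_utilization(deps):
    """Toy SIMD model: each instruction fills every lane with a different work item,
    so dependencies inside one thread do not leave lanes idle (assumes no divergence)."""
    return 1.0 if deps else 0.0


if __name__ == "__main__":
    independent = [set(), set(), set(), set()]  # four unrelated instructions
    chain = [set(), {0}, {1}, {2}]              # each instruction needs the previous one
    for name, stream in (("independent", independent), ("chain", chain)):
        print(name, vliw_utilization(stream), simd_utilization(stream))
```

In this model the VLIW4 figure swings between 1.0 for fully independent code and 0.25 for a strict dependency chain, while the SIMD figure stays flat, which is the consistency argument the text makes.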

Bringing this all together, to make a complete GPU a number of these GCN CUs will be combined with the rest of the parts we’re accustomed to seeing on a GPU. A frontend is responsible for feeding the GPU, as it contains both the command processors (ACEs) responsible for feeding the CUs and the geometry engines responsible for geometry setup. Meanwhile coming after the CUs will be the ROPs that handle the actual render operations, the L2 cache, the memory controllers, and the various fixed function controllers such as the display controllers, PCIe bus controllers, Universal Video Decoder, and Video Codec Engine.

At the end of the day if AMD has done their homework GCN should significantly improve compute performance relative to VLIW4 while gaming performance should be just as good. Gaming shader operations will execute across the CUs in a much different manner than they did across VLIW, but they should do so at a similar speed. And for games that use compute shaders, they should directly benefit from the compute improvements. It’s by building out a GPU in this manner that AMD can make an architecture that’s significantly better at compute without sacrificing gaming performance, and this is why the resulting GCN architecture is balanced for both compute and graphics.

292 Comments


Zingam - Thursday, December 22, 2011 - link

And at the time when it is available in D3D. AMD's implementation won't be compatible... :D That's sounds familiar. So will have to wait for another generation to get the things right.

Ryan Smith - Thursday, December 22, 2011 - link

As for your question about FP64, it's worth noting that of the FP64 rates AMD listed for GCN, "0" was not explicitly an option. It's quite possible that anything using GCN will have at a minimum 1/16th FP64.

Sind - Thursday, December 22, 2011 - link

Excellent review thanks Ryan. Looking forward to see what the 7950 performance and pricing will end up. Also to see what nv has up their sleeves. Although I can't shake the feeling amd is holding back.

chizow - Thursday, December 22, 2011 - link

Another great article, I really enjoyed all the state-of-the-industry commentary more than the actual benchmarks and performance numbers.

One thing I may have missed was any coverage at all of GCN. Usually you guys have all those block diagrams and arrows explaining the changes in architecture. I know you or Anand did a write-up on GCN awhile ago, but I may have missed the link to it in this article. Or maybe put a quick recap in there with a link to the full write-up.

But with GCN, I guess we can close the book on AMD's past Vec5/VLIW4 archs as compute failures? For years ATI/AMD and their supporters have insisted it was the better compute architecture, and now we're on the 3rd major arch change since unified shaders, while Nvidia has remained remarkably consistent with their simple SP approach. I think the most striking aspect of this consistency is that you can run any CUDA or GPU accelerated apps on GPUs as old as G80, while you even noted you can't even run some of the most popular compute apps on 7970 because of arch-specific customizations.

I also really enjoyed the ISV and driver/support commentary. It sounds like AMD is finally serious about "getting in the game" or whatever they're branding it nowadays, but I have seen them ramp up their efforts with their logo program. I think one important thing for them to focus on is to get into more *quality* games rather than just focusing on getting their logo program into more games. Still, as long as both Nvidia and AMD are working to further the compatibility of their cards without pushing too many vendor-specific features, I think that's a win overall for gamers.

A few other minor things:

1) I believe Nvidia will soon be countering MLAA with a driver-enabled version of their FXAA. While FXAA is available to both AMD and Nvidia if implemented in-game, providing it driver-side will be a pretty big win for Nvidia given how much better performance and quality it offers over AMD's MLAA.

2) When referring to active DP adapter, shouldn't it be DL-DVI? In your blurb it said SL-DVI. Its interesting they went this route with the outputs, but providing the active adapter was definitely a smart move. Also, is there any reason GPU mfgs don't just add additional TMDS transmitters to overcome the 4x limitation? Or is it just a cost issue?

3) The HDMI discussion is a bit fuzzy. HDMI 1.4b specs were just finalized, but haven't been released. Any idea whether or not SI or Kepler will support 1.4b? Biggest concern here is for 120Hz 1080p 3D support.

Again, thoroughly enjoyed reading the article, great job as usual!

Ryan Smith - Thursday, December 22, 2011 - link

Thanks for the kind words.

Quick answers:

2) No, it's an active SL-DVI adapter. DL-DVI adapters exist, but are much more expensive and more cumbersome to use because they require an additional power source (usually USB).

As for why you don't see video cards that support more than 2 TMDS-type displays, it's both an engineering and a cost issue. On the engineering side each TMDS source (and thus each supported TMDS display) requires its own clock generator, whereas DisplayPort only requires 1 common clock generator. On the cost side those clock generators cost money to implement, but using TMDS also requires paying royalties to Silicon Image. The royalty is on the order of cents, but AMD and NVIDIA would still rather not pay it.

3) SI will support 1080P 120Hz frame packed S3D.

ericore - Thursday, December 22, 2011 - link

Core Next: It appears AMD is playing catchup to Nvidia's Cuda, but to an extent that halves the potential performance metrics; I see no other reason why they could not have achieved at varying 25-50% improvement in FPS. That is going to cost them, not just for marginally better performance 5-25%, but they are price matching GTX 580 which means less sales though I suppose people who buy 500$ + GPUs buy them no matter what. Though in this case, they may wait to see what Nvidia has to offer.

Other New AMD GPUs: Will be releasing in February and April are based on the current architecture, but with two critical differences; smaller node + low power based silicon VS the norm performance based silicon. We will see very similar performance metrics, but the table completely flips around: we will see them, cheaper, much more power efficient and therefore very quiet GPUs; I am excited though I would hate to buy this and see Nvidia deliver where AMD failed.

Thanks Anand, always a pleasure reading your articles.

Angrybird - Thursday, December 22, 2011 - link

any hint on 7950? this card should go head to head with gtx580 when it release. good job for AMD, great review for Ryan!

ericore - Thursday, December 22, 2011 - link

I should add with over 4 billion transistors, they've added more than 35% more transistors but only squeeze 5-25% improvement; unacceptable. That is a complete fail in that context relative to advancement in gaming. Too much catchup with Nvidia.

Finally - Thursday, December 22, 2011 - link

...that saying? It goes like this:

If you don't show up for a race, you lose by default.

Your favourite company lost, so their fanboys may become green of envydia :)

Besides that - I'd never shell out more than 150€ for a petty GPU, so neither company's product would have appealed to me...

piroroadkill - Thursday, December 22, 2011 - link

Wait, catchup? In my eyes, they were already winning. 6950 with dual BIOS, unlock it to 6970.. unbelievable value.. profit??

Already has a larger framebuffer than the GTX580, so...