The Bulldozer Review: AMD FX-8150 Tested

by Anand Lal Shimpi on October 12, 2011 1:27 AM EST

Gaming Performance

AMD clearly states in its reviewer's guide that CPU bound gaming performance isn't going to be a strong point of the FX architecture, likely due to its poor single threaded performance. However, it is useful to look at both CPU and GPU bound scenarios to paint an accurate picture of how well a CPU handles game workloads, as well as what sort of performance you can expect in present day titles.

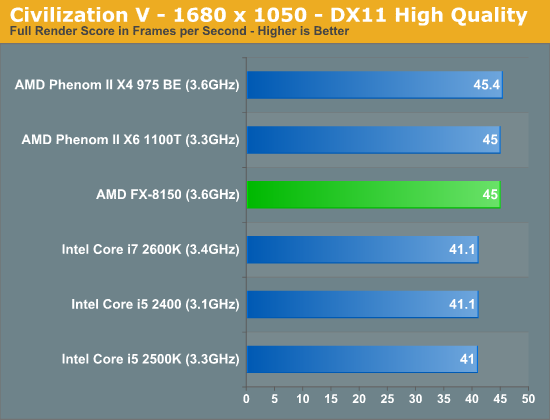

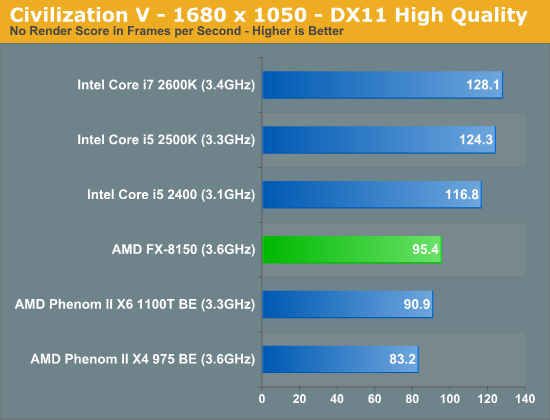

Civilization V

Civ V's lateGameView benchmark presents us with two separate scores: average frame rate for the entire test as well as a no-render score that only looks at CPU performance.

While we're GPU bound in the full render score, AMD's platform appears to have a bit of an advantage here. We've seen this in the past where one platform will hold an advantage over another in a GPU bound scenario and it's always tough to explain. Within each family, however, there is no advantage to a faster CPU; everything is just GPU bound.

Looking at the no render score, the CPU standings are pretty much as we'd expect. The FX-8150 is thankfully a bit faster than its predecessors, but it still falls behind Sandy Bridge.

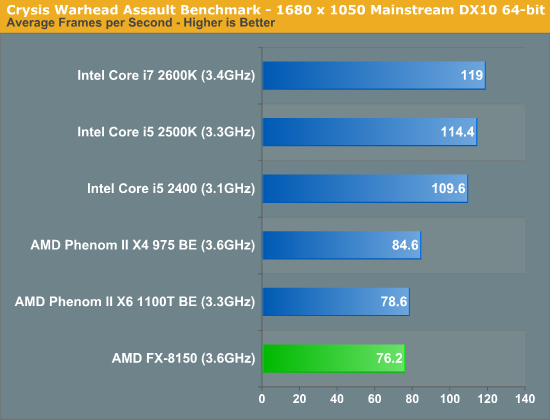

Crysis: Warhead

In CPU bound environments in Crysis Warhead, the FX-8150 is actually slower than the old Phenom II. Sandy Bridge continues to be far ahead.

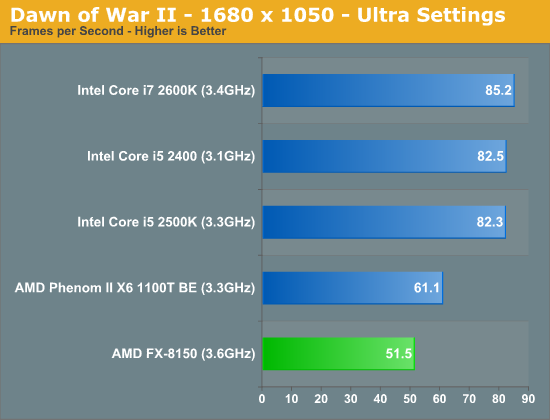

Dawn of War II

We see similar results under Dawn of War II. Lightly threaded performance is simply not a strength of AMD's FX series, and as a result even the old Phenom II X6 pulls ahead.

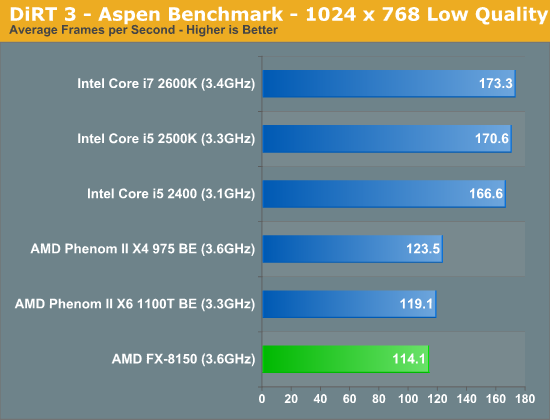

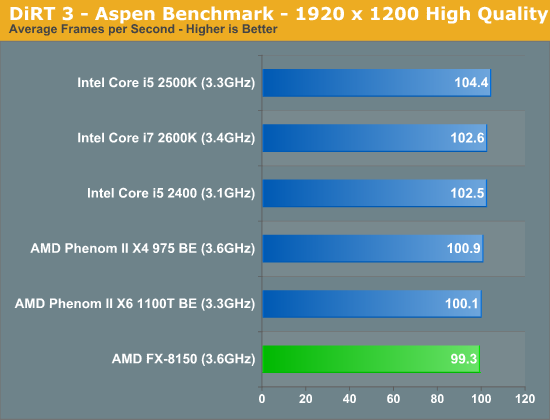

DiRT 3

We ran two DiRT 3 benchmarks to get an idea for CPU bound and GPU bound performance. First the CPU bound settings:

The FX-8150 doesn't do so well here, again falling behind the Phenom IIs. Under more real world GPU bound settings however, Bulldozer looks just fine:

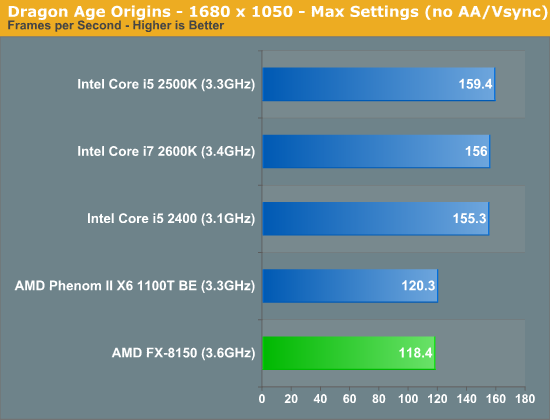

Dragon Age

Dragon Age is another CPU bound title, and here the FX-8150 falls behind once again.

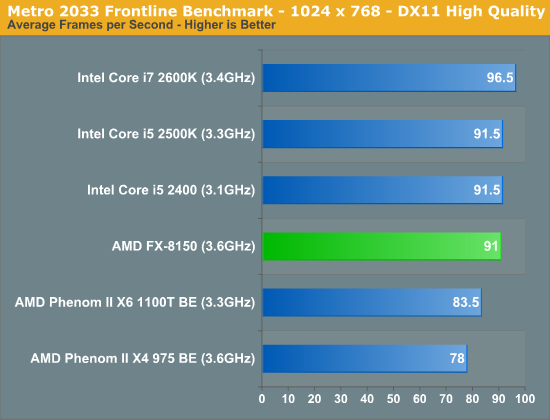

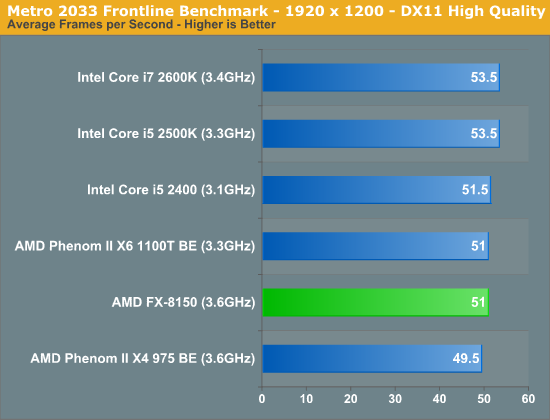

Metro 2033

Metro 2033 is pretty rough even at lower resolutions, but with more of a GPU bottleneck the FX-8150 equals the performance of the 2500K:

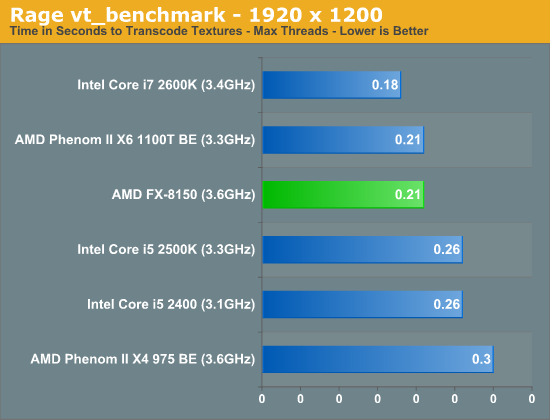

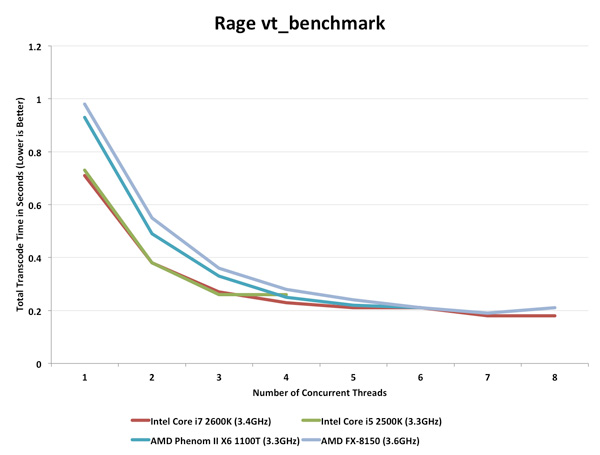

Rage vt_benchmark

While id's long awaited Rage title doesn't exactly have the best benchmarking abilities, there is one unique aspect of the game that we can test: Megatexture. Megatexture works by dynamically taking texture data from disk and constructing texture tiles for the engine to use, a major component for allowing id's developers to uniquely texture the game world. However because of the heavy use of unique textures (id says the original game assets are over 1TB), id needed to get creative on compressing the game's textures to make them fit within the roughly 20GB the game was allotted.

The result is that Rage doesn't store textures in a GPU-usable format such as DXTC/S3TC, instead storing them in an even more compressed format (JPEG XR) as S3TC maxes out at a 6:1 compression ratio. As a consequence whenever you load a texture, Rage needs to transcode the texture from its storage codec to S3TC on the fly. This is a constant process throughout the entire game and this transcoding is a significant burden on the CPU.
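To put rough numbers on that, here is a back-of-the-envelope check. It is only a sketch, not anything from id: it assumes decimal units, treats the 1TB figure as uncompressed texture data, and takes DXT1's fixed 8 bytes per 4x4 block of 24-bit texels as the S3TC best case. The variable names are made up for illustration.

```python
# Back-of-the-envelope check using the figures quoted above.
# Assumptions: decimal units (1 TB = 1000 GB) and that the ~1TB of
# original assets is uncompressed data; the article gives only rough sizes.
source_assets_gb = 1000   # "over 1TB" of original assets, per id
shipped_budget_gb = 20    # "roughly 20GB" allotted to the game

required_ratio = source_assets_gb / shipped_budget_gb

# DXT1 (the densest S3TC mode) packs a 4x4 block of 24-bit RGB texels,
# 48 bytes uncompressed, into 8 bytes.
s3tc_ratio = (4 * 4 * 3) / 8

print(f"ratio needed to fit the budget: {required_ratio:.0f}:1")
print(f"best case for S3TC (DXT1):      {s3tc_ratio:.0f}:1")
print(f"shortfall factor:               {required_ratio / s3tc_ratio:.1f}x")
```

Under those assumptions the game needs something in the neighborhood of 50:1, roughly 8x beyond what S3TC alone can deliver, which is why a denser storage codec and on-the-fly transcoding come into play.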

The Benchmark: vt_benchmark flushes the transcoded texture cache and then times how long it takes to transcode all the textures needed for the current scene, from 1 thread to X threads. Thus when you run vt_benchmark 8, for example, it will benchmark from 1 to 8 threads (the default appears to depend on the CPU you have). Since transcoding is done by the CPU this is a pure CPU benchmark. I present the best case transcode time at the maximum number of concurrent threads each CPU can handle:

The FX-8150 does very well here, but so does the Phenom II X6 1100T. Both are faster than Intel's 2500K, but not quite as good as the 2600K. If you want to see how performance scales with thread count, check out the chart below:

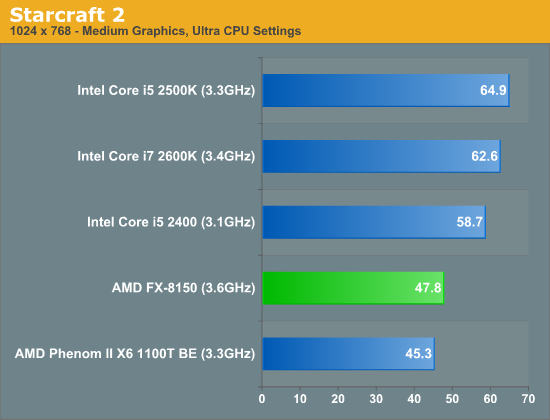
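For readers who want to try a similar thread sweep on their own machine, the shape of the test (time a fixed batch of work at 1 through X threads, then pick the best case) can be sketched in a few lines of Python. This is not Rage's code. The `make_tiles`, `transcode`, and `sweep` helpers are hypothetical, and zlib decompression merely stands in for the real texture transcode, so the absolute numbers mean nothing outside this toy.

```python
import time
import zlib
from concurrent.futures import ThreadPoolExecutor


def make_tiles(count=64, size=256 * 1024):
    """Build compressed stand-in 'tiles' (hypothetical; not Rage's texture data)."""
    raw = bytes(range(256)) * (size // 256)
    return [zlib.compress(raw) for _ in range(count)]


def transcode(tile):
    """Stand-in for the real transcode step: just decompress the tile."""
    return len(zlib.decompress(tile))


def sweep(tiles, max_threads):
    """Time the full tile set at 1..max_threads worker threads."""
    timings = {}
    for n in range(1, max_threads + 1):
        with ThreadPoolExecutor(max_workers=n) as pool:
            start = time.perf_counter()
            list(pool.map(transcode, tiles))  # force every tile to finish
            timings[n] = time.perf_counter() - start
    return timings


if __name__ == "__main__":
    tiles = make_tiles()
    timings = sweep(tiles, max_threads=8)
    for n, seconds in timings.items():
        print(f"{n} thread(s): {seconds * 1000:.1f} ms")
    best = min(timings, key=timings.get)
    print(f"best case: {best} thread(s)")
```

Nothing is cached between passes here, so every thread count redoes all of the work, loosely mirroring the cache flush described above. How well the timings scale will depend on the Python runtime and the machine, so treat the output as a curiosity rather than a CPU benchmark.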

Starcraft 2

Starcraft 2 has traditionally done very well on Intel architectures and Bulldozer is no exception to that rule.

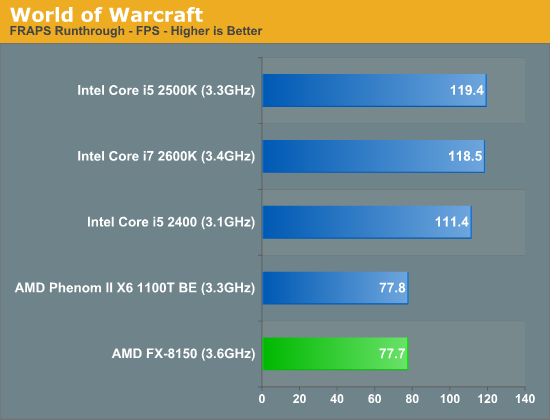

World of Warcraft

430 Comments


THizzle7XU - Wednesday, October 12, 2011 - link

Well, why would you target the variable PC segment when you can program for a well established, large user-base platform with a single configuration and make a ton more money with probably far less QA work since there's only one set (two for multi-platform PS3 games) of hardware to test?

And it's not like 360/PS3 games suddenly look like crap 5-6 years into their cycles. Think about how good PS2 games looked 7 years into that system's life cycle (God of War 2). Devs are just now getting the most out of the hardware. It's a great time to be playing games on 360/PS3 (and PC!).

GatorLord - Wednesday, October 12, 2011 - link

Consider what AMD is and what AMD isn't and where computing is headed and this chip is really beginning to make sense. While these benches seem frustrating to those of us on a desktop today I think a slightly deeper dive shows that there is a whole world of hope here...with these chips, not something later.

I dug into the deal with Cray and Oak Ridge, and Cray is selling ORNL massively powerful computers (think petaflops) using Bulldozer CPUs controlling Nvidia Tesla GPUs which perform the bulk of the processing. The GPUs do vastly more and faster FPU calculations and the CPU is vastly better at dishing out the grunt work and processing the results for use by humans or software or other hardware. This is the future of High Performance Computing, today, but on a government scale. OK, so what? I'm a client user.

Here's what: AMD is actually best at making GPUs...no question. They have been in the GPGPU space as long as Nvidia...except the AMD engineers can collaborate on both CPU and GPU projects simultaneously without a bunch of awkward NDAs and antitrust BS getting in the way. That means that while they obviously can turn humble server chips into supercomputers by harnessing the many cores on a graphics card, how much more than we've seen is possible on our lowly desktops when this rebranded server chip enslaves the Ferraris on the PCI bus next door...the GPUs.

I get it...it makes perfect sense now. Don't waste real estate on FPU dies when the ones next door are hundreds or thousands of times better and faster too. This is not the beginning of the end of AMD, but the end of the beginning (to shamelessly quote Churchill). Now all that cryptic talk about a supercomputer in your tablet makes sense...think Llano with a so-so CPU and a big GPU on the same die with some code tweaks to schedule the GPU as a massive FPU and the picture starts taking shape.

Now imagine a full blown server chip (BD) harnessing full blown GPUs...Radeon 6XXX or 7XXX and we are talking about performance improvements in the orders of magnitude, not percentage points. Is AMD crazy? I'm thinking crazy like a fox.

Oh..as a disclaimer, while I'm long AMD...I'm just an enthusiast like the rest of you and not a shill...I want both companies to make fast chips that I can use to do Monte Carlos and linear regressions...it just looks like AMD seems to have figured out how to play the hand they're holding for a change...here's to the future for us all.

Menoetios - Wednesday, October 12, 2011 - link

I think you bring up a very good point here. This chip looks like it's designed to be very closely paired with a highly programmable GPU, which is where the GPU roadmaps are leading over the next year and a half. While the apples-to-apples nature of this review draws a disappointing picture, I'm very curious how AMD's "Fusion" products next year will look, as the various compute elements of the CPU and GPU become more tightly integrated. Bulldozer appears to fit perfectly in an ecosystem that we don't quite have yet.

GatorLord - Wednesday, October 12, 2011 - link

Exactly. Ecosystem...I like it. This is what it must feel like to pick up a flashlight at the entrance to the tunnel when all you're used to is clubs and torches. Until you find the switch, it just seems worse at either...then voilà!

actionjksn - Wednesday, October 12, 2011 - link

Wow I hope that made you feel better about the crappy chip also known as "Man With A Shovel"

I was just hoping AMD would quit forcing Intel to have to keep on crippling their chips, just to keep them from putting AMD out of business. AMD better fix this abortion quick, this is getting old.

GatorLord - Thursday, October 13, 2011 - link

Feeling fine. Not as good in the short run, but feeling better about the long run. Unfortunately, due to constraints, it takes AMD too long to get stuff dialed in and by the time they do, Intel has already made an end run and beat them to the punch.

Intel can do that, they're 40x as big as AMD. Actually, and this may sound crazy until you digest it, the smartest thing Intel could do is spin off a couple of really good dev labs as competitors. Relying on AMD to drive your competition is risky in that AMD may not be able to innovate fast enough to push Intel where it could be if they had more and better sharks in the water nipping at their tails.

You really need eight or more highly capable, highly aggressive competitors to create a fully functioning market free of monopolistic and oligopolistic sluggishness and BS hand signalling between them. This space is too capital intensive for that at the time being with the current chip making technology what it is.

yankeeDDL - Wednesday, October 12, 2011 - link

Just to be the devil's advocate ...

The launch event in London sported 2 PCs, side by side, running Cinebench.

One had the core i5-2500k, the other the FX8150.

Of course, these systems are prepared by AMD, so the results from Anand are clearly more reliable (at least all the conditions are documented).

Nevertheless, it is clear that in the demo from AMD, the FX runs faster. Not by a lot, but it is clearly faster than the i5.

Video: http://www.viddler.com/explore/engadget/videos/335...

Even so, assuming that this was a valid datapoint, things won't change too much: the i5-2500k is cheaper and (would be) slightly slower than the FX8150 in the most heavily threaded benchmark. But it would be slightly better than Anand's results show.

KamikaZeeFu - Wednesday, October 12, 2011 - link

"Nevertheless, it is clear that in the demo from AMD, the FX runs faster. Not by a lot, but it is clearly faster than the i5."

Check the review, cinebench r11.5 multithreaded chart.

Anand's numbers mirror the ones by AMD. Multithreaded workloads are the only case where the 8150 will outperform an i5 2500k because it can process twice the amount of threads.

Really disappointed in AMD here, but I expected subpar performance because it was eerily quiet about the FX line as far as performance went.

Desktop BD is a full failure, they were aiming for high clock speeds and made sacrifices, but still failed their objective. By the time their process is mature and 4 GHz dozers hit the channel, Ivy bridge will be out.

As far as server performance goes, not even sure they will succeed there.

As seen in the review, clock for clock performance isn't up compared to the previous generation, and in some cases it's actually slower. Considering that servers run at lower clocks in the first place, I don't see BD being any threat to Intel's server lineup.

4 years to develop this chip, and their motto seemed to be "we'll do netburst but in not-fail"

medi01 - Wednesday, October 12, 2011 - link

So CPU is a bottleneck in your games eh?

TekDemon - Wednesday, October 12, 2011 - link

It's not but people don't buy CPUs for today's games, generally you want your system to be future proof so the more extra headroom there is in these CPU benchmarks the better it holds up over the long term. Look back at CPU benchmarks from 3-4 years ago and you'll see that the CPUs that barely passed muster back then easily bottleneck you whereas CPUs that had extra headroom are still usable for gaming. For example the Core 2 Duo E8400 or E8500 is still a very capable gaming CPU, especially when given a mild overclock and frankly in games that only use a few threads (like Starcraft 2) it gives Bulldozer a run for the money.

I'm not a fanboy either way since I own that E8400 as well as a Phenom II (unlocked to X4, OC'ed to 3.9GHz) and an i5 2500K but if I was building a new system I sure as heck would want extra headroom for future-proofing.

That said? Of course these chips will be more than enough power for general use. They're just not going to be good for high end systems. But in a general use situation the problem is that the power consumption is just crappy compared to the Intel solutions. Even if you can argue that it's more than enough power for most people, why would you want to use more electricity?