The Bulldozer Review: AMD FX-8150 Tested

by Anand Lal Shimpi on October 12, 2011 1:27 AM EST

The Pursuit of Clock Speed

Thus far I have pointed out that Bulldozer has fewer of a number of resources than AMD's Phenom II architecture. Many of these tradeoffs were made in order to keep die size in check while adding new features (e.g. wider front end, larger queues/data structures, new instruction support). Everywhere from the Bulldozer front-end through the execution clusters, AMD's opportunity to increase performance depends on both efficiency and clock speed. Bulldozer has to make better use of its resources than Phenom II as well as run at higher frequencies to outperform its predecessor. As a result, a major target for Bulldozer was to be able to scale to higher clock speeds.

AMD's architects called this pursuit a low gate count per pipeline stage design. By reducing the number of gates per pipeline stage, you reduce the time spent in each stage and can increase the overall frequency of the processor. If this sounds familiar, it's because Intel used similar logic in the creation of the Pentium 4.

Where Bulldozer differs, AMD insists, is that the design didn't aggressively pursue frequency like the P4, but rather aggressively pursued gate count reduction per stage. According to AMD, the former results in power problems while the latter is more manageable.
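To make the gate count argument concrete, here's a minimal sketch, assuming a simple model in which the cycle time of a stage is its gate delays plus a fixed latch overhead. The function name and every number in it are hypothetical and chosen only for illustration; AMD has not disclosed figures like these.

```python
def max_frequency_ghz(gates_per_stage, gate_delay_ps, latch_overhead_ps):
    """Estimate peak clock from a toy model of one pipeline stage.

    Assumption: cycle time = gates per stage * delay per gate + latch overhead.
    All inputs are illustrative, not AMD data.
    """
    cycle_time_ps = gates_per_stage * gate_delay_ps + latch_overhead_ps
    # A 1000 ps cycle corresponds to 1 GHz.
    return 1000.0 / cycle_time_ps


# Hypothetical "before" and "after" designs with the same process parameters.
deeper_logic = max_frequency_ghz(gates_per_stage=20, gate_delay_ps=12, latch_overhead_ps=40)
fewer_gates = max_frequency_ghz(gates_per_stage=15, gate_delay_ps=12, latch_overhead_ps=40)

print(f"20 gates/stage: {deeper_logic:.2f} GHz")  # 3.57 GHz
print(f"15 gates/stage: {fewer_gates:.2f} GHz")   # 4.55 GHz
```

In this model the fixed latch overhead doesn't shrink with the gate count, so each further reduction buys a bit less frequency than the last.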

AMD's target for Bulldozer was a 30% higher frequency than the previous generation architecture. Unfortunately that's a fairly vague statement and I couldn't get AMD to commit to anything more specific, but if we look at the top-end Phenom II X6 at 3.3GHz, a 30% increase in frequency would put Bulldozer at 4.3GHz.

Unfortunately 4.3GHz isn't what the top-end AMD FX CPU ships at. The best we'll get at launch is 3.6GHz, a meager 9% increase over the outgoing architecture. Turbo Core does get AMD close to those initial frequency targets; however, the turbo frequencies are typically only seen for very short periods of time.
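The arithmetic behind those two figures is simple enough to check. This is a minimal sketch using only the clock speeds quoted above; the variable names are my own.

```python
# Clock speeds quoted in the text.
PHENOM_II_X6_GHZ = 3.3   # top-end Phenom II X6
FX_8150_BASE_GHZ = 3.6   # top-end AMD FX at launch (base clock)
TARGET_UPLIFT = 0.30     # AMD's stated frequency target

# Where a 30% uplift over Phenom II would land.
target_ghz = PHENOM_II_X6_GHZ * (1 + TARGET_UPLIFT)

# The uplift actually delivered at the base clock.
actual_uplift = FX_8150_BASE_GHZ / PHENOM_II_X6_GHZ - 1

print(f"Target clock: {target_ghz:.1f} GHz")   # 4.3 GHz
print(f"Actual uplift: {actual_uplift:.0%}")    # 9%
```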

As you may remember from the Pentium 4 days, a significantly deeper pipeline can bring with it significant penalties. We have two prior examples of architectures that increased pipeline length over their predecessors: Willamette and Prescott.

Willamette doubled the pipeline length of the P6 and was supposed to make up for it with a corresponding increase in clock frequency. If you do less per clock cycle, you need to throw more clock cycles at the problem to have a neutral impact on performance. Although Willamette ran at higher clock speeds than the outgoing P6 architecture, the increase in frequency was gated by process technology. It wasn't until Northwood arrived that Intel could hit the clock speeds required to truly put distance between its newest and older architectures.

Prescott lengthened the pipeline once more, this time quite significantly. Much to our surprise, however, thanks to a lot of clever work on the architecture side, Intel was able to keep average instructions executed per clock constant while increasing the length of the pipe. This enabled Prescott to hit higher frequencies and deliver more performance at the same time, without starting at an inherent disadvantage. Where Prescott did fall short, however, was in the power consumption department. Running at extremely high frequencies required very high voltages and as a result, power consumption skyrocketed.

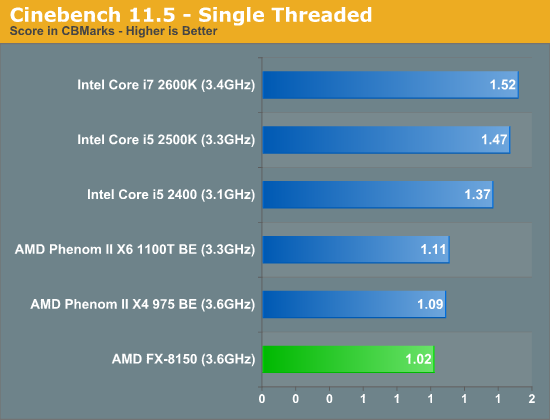

AMD's goal with Bulldozer was to have IPC remain constant compared to its predecessor, while increasing frequency, similar to Prescott. If IPC can remain constant, any frequency increases will translate into performance advantages. AMD attempted to do this through a wider front end, larger data structures within the chip and a wider execution path through each core. In many senses it succeeded, however single threaded performance still took a hit compared to Phenom II:

At the same clock speed, Phenom II is almost 7% faster per core than Bulldozer according to our Cinebench results. This takes into account all of the aforementioned IPC improvements. Despite AMD's efforts, IPC went down.

A slight reduction in IPC however is easily made up for by an increase in operating frequency. Unfortunately, it doesn't appear that AMD was able to hit the clock targets it needed for Bulldozer this time around.
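To put rough numbers on that trade-off, here's a minimal sketch, assuming single threaded performance scales as IPC times frequency and that the roughly 7% per-clock Cinebench gap holds across workloads. Both are simplifying assumptions, and the function name is hypothetical; the outputs are back-of-the-envelope estimates, not measurements.

```python
PHENOM_II_GHZ = 3.3           # top-end Phenom II X6 clock from the text
PHENOM_II_IPC_ADVANTAGE = 1.07  # Phenom II is ~7% faster per clock (Cinebench)


def relative_performance(bulldozer_ghz):
    """Bulldozer single-thread performance relative to a 3.3GHz Phenom II.

    Assumption: performance = IPC * frequency, with Bulldozer's IPC
    equal to Phenom II's divided by 1.07.
    """
    return (bulldozer_ghz / PHENOM_II_GHZ) / PHENOM_II_IPC_ADVANTAGE


# Clock Bulldozer needs just to match a 3.3GHz Phenom II per core.
break_even_ghz = PHENOM_II_GHZ * PHENOM_II_IPC_ADVANTAGE
print(f"Break-even clock: {break_even_ghz:.2f} GHz")              # 3.53 GHz

# Shipping base clock versus the clock implied by the 30% target.
print(f"At 3.6 GHz: {relative_performance(3.6) - 1:+.1%}")        # +2.0%
print(f"At 4.3 GHz: {relative_performance(4.3) - 1:+.1%}")        # +21.8%
```

Under these assumptions the 3.6GHz base clock barely clears break-even, while the original frequency target would have left a comfortable margin.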

We've recently reported on GlobalFoundries' issues with 32nm yields. I can't help but wonder if the same type of issues that are impacting Llano today are also holding Bulldozer back.

430 Comments

medi01 - Thursday, October 13, 2011 - link

Slightest "problem" imaginable with AMD GPUs would make it into titles. nVidia article would go with comparing cherry picked overclocked board vs standard from AMD, with laughable "explanations" of "oh nVidia marketing asked us to do it, we kinda refused but then we thought that since we've already kinda refused, we might still do what they've asked".

"Objectively", are you kidding me?

JKflipflop98 - Thursday, October 13, 2011 - link

Anand runs the test, then writes down the number. Then he runs the test on the other PC, and writes down the number. If your number is lower, then it's physics "badmouthing" your precious, and not the site.

actionjksn - Wednesday, October 12, 2011 - link

@medi01 Considering the results I think Anand was more than kind enough to AMD.

medi01 - Thursday, October 13, 2011 - link

I recall low power AMD CPUs being tested on 1000Watt PSUs on this very site. How normal was that, cough? iPhones "forgotten in pocket" (author's comment) on comparison photos (where they would look unfavourably).

Thing with tests is, you have games that favour one manufacturer, then other games that favour another. Choose "right" set of games, and voila...

The move with 1000Watt PSU on 35W TDP CPU is TOO DAMN LOW and should never happen.

On top of it, absolute majority of games is more GPU sensitive than CPU sensitive. Now one could reduce resolution to ridiculously low levels so that CPU becomes a bottleneck, but then, who on earth would care whether you get 150 or 194 frames per second at a resolution which you'll never use?

Stas - Thursday, October 13, 2011 - link

Not sure what the deal is with PSUs or what article you're referring to. I'm assuming it made AMD power consumption look worse than it was because 1kW PSU was running at 10% load, thus way out of efficiency range. But w/e. My comment is mostly on CPU performance in games. Just because you don't run a game on the top-end CPU with $800 in multi-gpu tandem at lowest settings, doesn't mean it shouldn't be used to determine CPU performance. By making the CPU the bottleneck, you make it do as much as it can side-by-side with the GPU spitting out frames while whistling tunes and picking its fingernails. There is more load on CPU than GPU. Whichever CPU is faster - that CPU will provide more FPS. Simple as that.

Sure, no one will see 20%-30% performance difference using more appropriate resolution and quality settings. But we're enthusiasts, we want to see peak performance difference and extreme loads. Most synthetic tests are irrelevant in everyday use, but performance has been measured that way for decades.

jleach1 - Friday, October 14, 2011 - link

I haven't seen one single sentence that was questionable in a and graphics review. In fact I'm glad to say that I'm a big fan of Intel CPU and and hour combos, and have never had even as much as a hint of bias.

As a over exaggeration, in an age where were all stuffing multiple cards in our systems, and cards are efficient, reliable, powerful, and they run cool. yes the drivers have sucked in the past, but they don't really.

(emphasis on the word seem)

NvIdia cards have just seemed clunky and hot as hell since the 400 series. I don't feel like gaming next to a space heater. And I definitely don't want to pay 40 percent more for ten percent performance just to have a space heater and bragging rights.

its like amd graphics are similar to intels CPU lineup, they're great performance per dollar parts, and they're efficient. But NvIdia and Intel graphics are like amd CPUs, they're either inefficient, or they're good at only a few things.

The moral? what the *$&* amd....you might as well write off the whole desktop business if the competition IS fifty percent faster and gaining ground....that 15 percent you're promising next year better be closer to 50 or I'm going to forget about your processors altogether.

jleach1 - Friday, October 14, 2011 - link

Intel CPU and amd combos*....sorry for the bat grammar. Writing on a tablet with Swype.

CeriseCogburn - Wednesday, March 21, 2012 - link

40% more cost and 10% more performance? You said that's across the board.

I'm certainly glad you aren't the reviewer here on anything. I mean really that was over the top.

CeriseCogburn - Friday, June 8, 2012 - link

They went fullblown favor the bullsnoozer by using the GPU limited amd hd5870 to make the stupid amd cpu look good. Thank your lucky stars they did that much for you.

MJEvans - Thursday, October 13, 2011 - link

I think your later point is exactly why the FPU support isn't as strong. (most) tasks that use FPU appear to be operating on large matrices of data, while sequential processing seems to have a good design idea (even if the implementation is a little immature and a little early), but slower latency l1/l2 cache access. I hope that's an area that will be addressed by the next iteration.