A Look At Triple-GPU Performance And Multi-GPU Scaling, Part 1

by Ryan Smith on April 3, 2011 7:00 AM EST

Crysis, BattleForge, Metro 2033, and HAWX

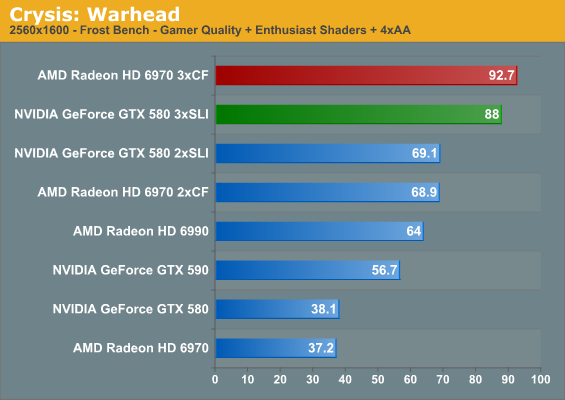

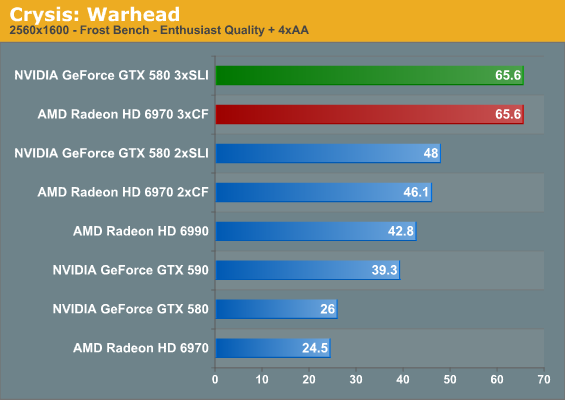

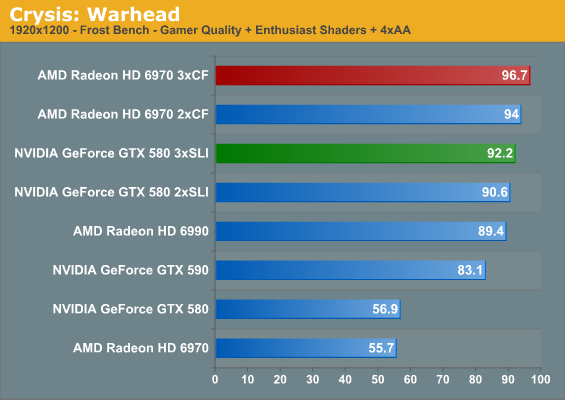

For the sake of completeness we have included both 2560x1600 and 1920x1200 results in our charts. However, with current GPU performance a triple-GPU setup only makes sense at 2560x1600, so that’s the resolution we’re going to be focusing on for commentary and scaling purposes.

As we normally turn to Crysis as our first benchmark it ends up being quite amusing when we have a rather exact tie on our hands. The triple GTX 580 setup ends up exactly tying the triple 6970 setup at 2560x1600 with full enthusiast settings at 65.6fps. This is quite an appropriate allegory for AMD and NVIDIA’s relative performance as of late, as the two are normally very close when it comes to cards at the same price. It’s also probably not the best start for the triple GTX 580 though, as it means NVIDIA’s lead at one and two cards has melted away by the 3rd.

We have however finally established what it takes to play Crysis at full resolution on a single monitor with every setting turned up – it takes no fewer than three GPUs to do the job. Given traditional GPU performance growth curves, it should be possible to do this on a single GPU by early 2014 or so, only some 7 years after the release of Crysis: Warhead. If you want SSAA though, you may as well throw in another few years.

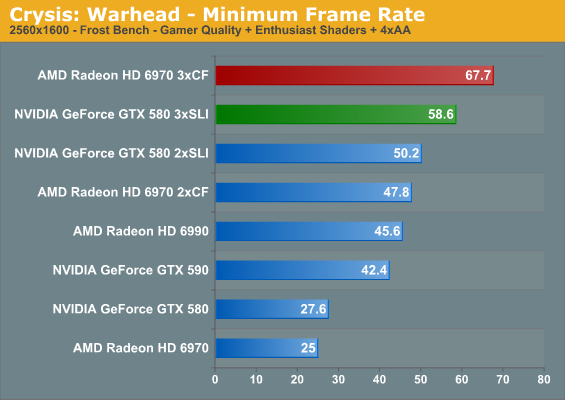

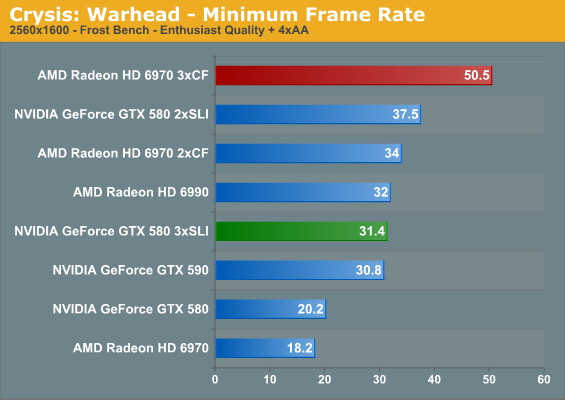

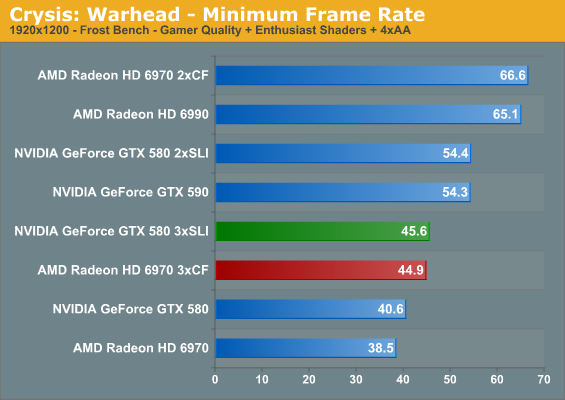

Moving on, it’s interesting to note that while we had a tie at 2560 with Enthusiast settings for the average framerate, the same cannot be said of the minimums. At 2560, no matter the quality, AMD has a distinct edge in the minimum framerate. This is particularly pronounced at 2560E, where moving from two to three GPUs causes a drop in the framerate on the GTX 580. This is probably a result of the differences in the cards’ memory capacity – additional GPUs require additional memory, and it seems the GTX 580 and its 1.5GB has reached its limit. We never seriously imagined we’d find a notable difference between 1.5GB and 2GB at this point in time, but here we are.

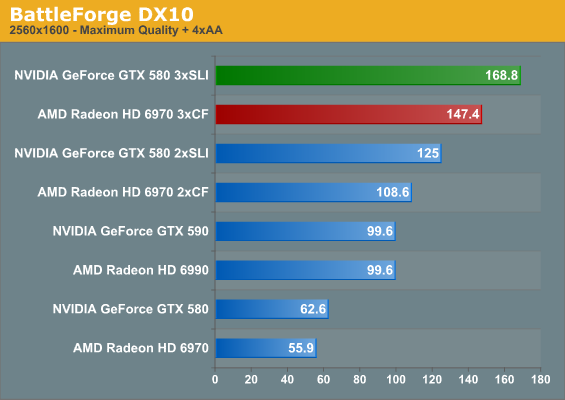

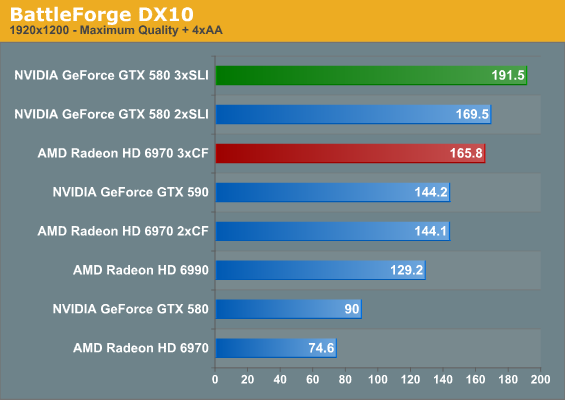

BattleForge is a shader-bound game that normally favors NVIDIA, and this doesn’t change with three GPUs. However even though it’s one of our more intensive games, three GPUs is simply overkill for one monitor.

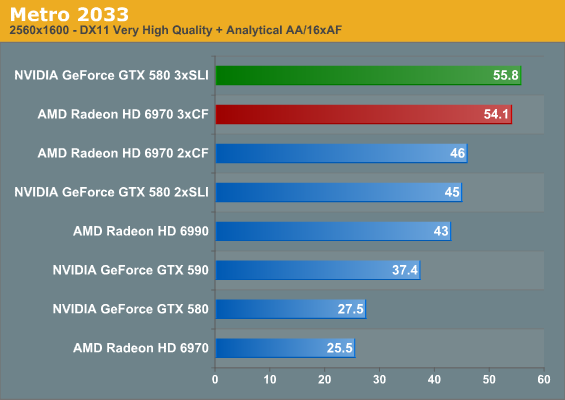

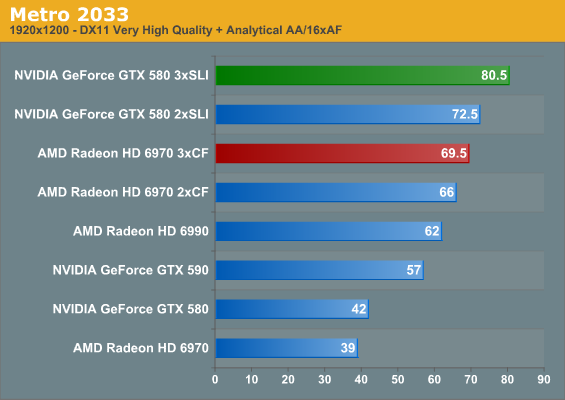

Metro 2033 is the only other title in our current lineup that can challenge Crysis for the title of the most demanding game, and here that’s a bout it would win. Even with three GPUs we can’t crack 60fps, and we still haven’t enabled a few extra features such as Depth of Field. The 6970 and GTX 580 are normally close with one and two GPUs, and we see that relationship extend to three GPUs. The triple GTX 580 setup has the lead by under 2fps, but it’s not the lead one normally expects from the GTX 580.

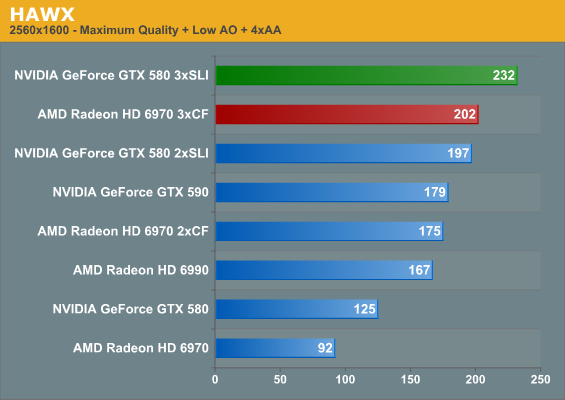

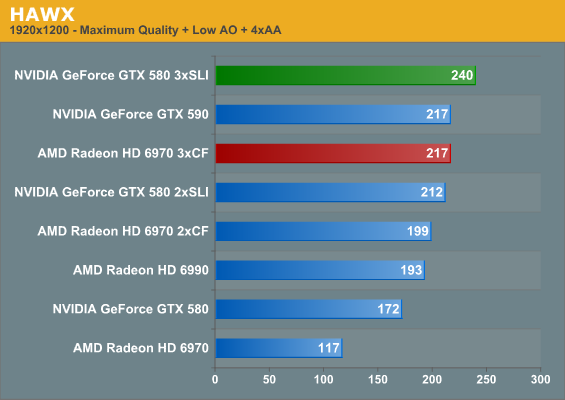

Our next game is HAWX, a title that shifts us towards games that are CPU bound. Even with that it’s actually one of the most electrically demanding games in our test suite, which is why we use it as a backup for our power/temperature/noise testing. Here we see both the triple GTX 580 and triple 6970 crack 200fps at 2560, with the GTX 580 taking top honors.

| GPUs | HD 6970 1->2 | HD 6970 2->3 | HD 6970 1->3 | GTX 580 1->2 | GTX 580 2->3 | GTX 580 1->3 |
|---|---|---|---|---|---|---|
| Crysis G+E Avg | 185% | 134% | 249% | 181% | 127% | 230% |
| Crysis E | 188% | 142% | 268% | 184% | 136% | 252% |
| Crysis G+E Min | 191% | 141% | 270% | 181% | 116% | 212% |
| Crysis E Min | 186% | 148% | 277% | 185% | 83% | 155% |
| BattleForge | 194% | 135% | 263% | 199% | 135% | 269% |
| Metro 2033 | 180% | 117% | 212% | 163% | 124% | 202% |
| HAWX | 190% | 115% | 219% | 157% | 117% | 185% |

Having taken a look at raw performance, what does the scaling situation look like? Altogether it’s very good. For a dual-GPU configuration the weakest game for both AMD and NVIDIA is Metro 2033, where AMD gets 180% of a single video card’s performance while NVIDIA manages 163%. At the other end, NVIDIA manages almost perfect scaling for BattleForge at 199%, while AMD’s best showing is in the same game at 194%.

Adding in a 3rd GPU significantly shakes things up, however. The best case scenario for going from two GPUs to three GPUs is 150%, which appears to be a harder target to reach. At 142% under Crysis with Enthusiast settings AMD does quite well, which is why they close the overall performance gap there. NVIDIA doesn’t do quite as well, however, managing 136%. The weakest for both meanwhile is HAWX, which is what we’d expect for a game passing 200fps and almost assuredly running straight into a CPU bottleneck.
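The 150% ceiling follows directly from the GPU counts: with perfect scaling, three GPUs deliver 3/2 the performance of two. As a rough sketch (the function names are ours for illustration and not part of any benchmark tool), the ideal figure and the share of the ideal gain actually captured can be worked out like so:

```python
def ideal_scaling(from_gpus: int, to_gpus: int) -> float:
    """Best-case performance as a percentage of the starting configuration."""
    return 100.0 * to_gpus / from_gpus


def gain_captured(measured_pct: float, from_gpus: int, to_gpus: int) -> float:
    """Fraction of the ideal gain (the part above 100%) that was achieved."""
    ideal = ideal_scaling(from_gpus, to_gpus)
    return (measured_pct - 100.0) / (ideal - 100.0)


if __name__ == "__main__":
    print(ideal_scaling(1, 2))  # 200.0
    print(ideal_scaling(2, 3))  # 150.0
    # Crysis, Enthusiast settings, going from two GPUs to three
    print(round(gain_captured(142, 2, 3), 2))  # 0.84 for the Radeon HD 6970
    print(round(gain_captured(136, 2, 3), 2))  # 0.72 for the GeForce GTX 580
```

Measuring against the gain rather than the raw percentage keeps the one-to-two and two-to-three steps comparable, since a third GPU can add at most 50% where a second can add 100%.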

The Crysis minimum framerate gives us a moment’s pause though. AMD gets almost perfect scaling moving from two to three GPUs when it comes to minimum framerates in Crysis, while NVIDIA ends up losing performance here with Enthusiast settings. This is likely not a story of GPU scaling and more a story about GPU memory, but regardless the outcome is a definite hit in performance. Thus while minimum framerate scaling from one to two GPUs is rather close between NVIDIA and AMD with full Enthusiast settings and slightly in AMD’s favor with Gamer + Enthusiast, AMD has a definite advantage in minimum framerate scaling going from two to three GPUs at both settings.

Sticking with average framerates and throwing out a clearly CPU-limited HAWX, neither side seems to have a strong advantage moving from two GPUs to three GPUs; the average gain is 131%, or some 62% of the theoretical maximum gain. AMD does have a slight edge here, but keep in mind we’re looking at percentages, so AMD’s edge is often a couple of frames per second at best.
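The 131% figure can be reproduced from the average-framerate rows of the scaling table, leaving out HAWX. The sketch below is only a sanity check on the published percentages, which are already rounded, so it can differ from the underlying framerate data by a point; the variable names are ours.

```python
# Two-to-three GPU scaling (%), average framerates only, HAWX excluded.
# Values are copied from the scaling table in this article.
two_to_three = {
    "Radeon HD 6970": {
        "Crysis G+E": 134, "Crysis E": 142, "BattleForge": 135, "Metro 2033": 117,
    },
    "GeForce GTX 580": {
        "Crysis G+E": 127, "Crysis E": 136, "BattleForge": 135, "Metro 2033": 124,
    },
}

IDEAL = 150.0  # perfect scaling when going from two GPUs to three


def average(values):
    """Plain arithmetic mean."""
    values = list(values)
    return sum(values) / len(values)


per_vendor = {card: average(games.values()) for card, games in two_to_three.items()}
overall = average(per_vendor.values())
share_of_ideal_gain = (overall - 100.0) / (IDEAL - 100.0)

if __name__ == "__main__":
    for card, pct in per_vendor.items():
        print(f"{card}: {pct:.1f}%")  # 132.0% and 130.5%
    print(f"Overall: {overall:.2f}%")  # 131.25%
    print(f"Share of ideal gain: {share_of_ideal_gain:.3f}")  # 0.625
```

Using the rounded 131% instead of 131.25% gives 31/50 = 62%, which matches the figure quoted in the text.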

Going from one GPU to two GPUs also gives AMD a minor advantage, with the average performance being 186% for AMD versus 182% for NVIDIA. Much like we’ve seen in our individual GPU reviews though, this almost constantly flip-flops based on the game being tested, which is why in the end the average gains are so close.

97 Comments

Ryan Smith - Monday, April 4, 2011 - link

There are 2 reasons for that:

1) We can't immediately get another 6990. I know it seems odd that we'd have trouble getting anything, but vendors are generally uninterested in sampling cards that are reference, which is why we're so grateful to Zotac and PowerColor for the reference 580/6970.

2) We actually can't run a second 6990 with our existing testbed. The Rampage II Extreme only has x16 slots at positions 2 and 4; position 6 is x8. The spacing needs for a 6990CF setup require 2 empty slots, meaning we'd have to install it in position 6. Worse yet is that position 6 is abutted by our Antec 1200W PSU - this isn't a problem with single-GPU cards as the blowers are well clear of the PSU, but a center-mounted fan like the 6990 would get choked just as if there was another card immediately next to it.

We will be rebuilding our testbed for SNB and using a mobo with better spacing, but that's not going to happen right away. The point being that we're not ignoring the 590/6990 multiple card configurations, it's just not something we're in a position to test right now.

piroroadkill - Monday, April 4, 2011 - link

As long as it's in the works, that's alright. Seems like you have your reasons for it being the way it is.

Rukur - Monday, April 4, 2011 - link

This whole technology is stupid with monitors.

Why don't you stitch together 3 projectors for a seamless canvas to play a game ?

SlyNine - Monday, April 4, 2011 - link

"This whole technology is stupid with monitors." Do you suppose neural interfaces will be here soon. kick ass.

Rukur - Monday, April 4, 2011 - link

Can you read more than one sentence ?

monkeyshambler - Monday, April 4, 2011 - link

Interesting stuff, but for a 3 card SLI / crossfire what I'd really want to see is what the framerates are when every setting on the card is maxed.

e.g. 24x AA 16x AF, high quality settings selected in the driver control panels etc.

Supplement this with what the performance is on triple SLI with 3 1920*1080 monitors @ 4x AA.

As let's face it, if you're going to spend this sort of money (and likely a watercooling rig too, as there's no way three cards are tolerable otherwise) you want to have a genuine show of why you should invest.

The current resolutions just will never stretch the cards or enable them to differentiate significantly from a standard SLI setup.

Hope we can see some of the above in a future article....

Rukur - Monday, April 4, 2011 - link

I tend to agree. How is maxing everything any worse than half inch monitor bezels all over your play area.

The whole idea of eye infinity is stupid unless we all look through windows with 1 inch gaps while racing extreme cars.

How about some projectors stitched together for real people to actually try.

erple2 - Tuesday, April 5, 2011 - link

Wasn't there an analysis a while back comparing 1x, 2x, 4x, 8x and 16x AA? I thought that the conclusion to that was that there's no discernible difference between 8x and 16x AA, and the differences between 4x and 8x were only visible in careful examination of static images. Under normal play, you couldn't actually tell any difference between them.

Maybe I'm just remembering wrong.

Also, I think that Ryan mentioned why they haven't yet done the triple monitor tests yet (lack of hardware).

DanNeely - Tuesday, April 5, 2011 - link

That's generally correct. Tom's Hardware has run PCIe restriction tests roughly once per GPU generation. The only game that ever really suffered at x4 bandwidth was MS Flight Simulator.

PCIe bandwidth can impact some compute tasks. Einstein@Home runs about 30% faster on a 460 in a 16x slot vs an 8x.

fepple - Monday, April 4, 2011 - link

With my two 5870s I have a weird problem in crossfire. I have two screens, a 24'' LCD and a 37'' LED TV. When in crossfire, if I play video on the second screen it gets some odd artifacts of black(ish) horizontal lines across the bottom of the screen. Only solution I've found is to not have the cards in crossfire and plug the TV/Screen into different cards for watching stuff.

Annoying, any thoughts?