A Look At Triple-GPU Performance And Multi-GPU Scaling, Part 1

by Ryan Smith on April 3, 2011 7:00 AM EST

Crysis, BattleForge, Metro 2033, and HAWX

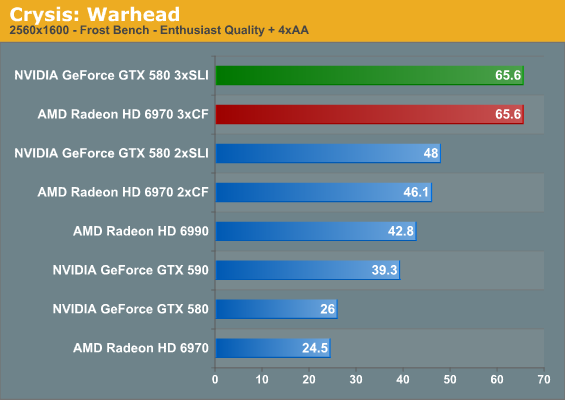

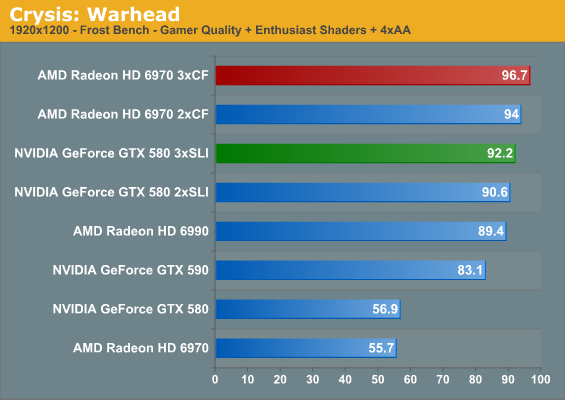

For the sake of completeness we have included both 2560x1600 and 1920x1200 results in our charts. However, with current GPU performance, a triple-GPU setup only makes sense at 2560, so that's the resolution we're going to focus on for commentary and scaling purposes.

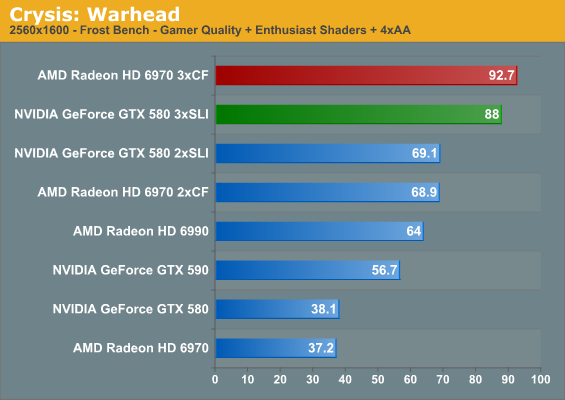

Crysis is normally our first benchmark, so it's quite amusing that it hands us an exact tie. The triple GTX 580 setup ties the triple 6970 setup at 2560x1600 with full Enthusiast settings at exactly 65.6fps. This is quite an appropriate allegory for AMD and NVIDIA's relative performance as of late, as the two are normally very close when it comes to cards at the same price. It's probably not the best start for the triple GTX 580, though, as it means NVIDIA's lead with one and two cards has melted away by the third.

We have, however, finally established what it takes to play Crysis at full resolution on a single monitor with every setting turned up: it takes no fewer than three GPUs to do the job. Given traditional GPU performance growth curves, it should be possible to do this on a single GPU by early 2014 or so, only some 7 years after the release of Crysis: Warhead. If you want SSAA, though, you may as well throw in another few years.
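The early-2014 estimate is a compound-growth extrapolation. Matching the 65.6fps triple-GPU result on one card requires a speedup equal to the 1->3 scaling factor from the Crysis Enthusiast (average framerate) row of the scaling table further down. The sketch below assumes steady compound growth and takes roughly 2.75 years from April 2011 to early 2014; both are illustrative assumptions rather than figures from our testing, and the function names are hypothetical.

```python
import math


def implied_annual_growth(speedup_needed: float, years: float) -> float:
    """Yearly performance multiplier needed to gain `speedup_needed` in `years`,
    assuming steady compound growth (an illustrative assumption)."""
    return speedup_needed ** (1.0 / years)


def years_to_reach(speedup_needed: float, annual_growth: float) -> float:
    """Inverse of the above: years until one GPU is `speedup_needed` times faster."""
    return math.log(speedup_needed) / math.log(annual_growth)


# 1->3 scaling at 2560 with Enthusiast settings (average fps), from the table.
# One GPU must become this much faster to match the triple-GPU result.
triple_vs_single = {"Radeon HD 6970": 2.68, "GeForce GTX 580": 2.52}

YEARS_TO_EARLY_2014 = 2.75  # assumption: April 2011 -> roughly early 2014

for card, speedup in triple_vs_single.items():
    growth = implied_annual_growth(speedup, YEARS_TO_EARLY_2014)
    print(f"{card}: ~{(growth - 1) * 100:.0f}% faster per year")
```

Under these assumptions the estimate implies single-GPU performance improving by roughly 40-43% per year, which is the kind of growth curve the early-2014 figure rests on.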

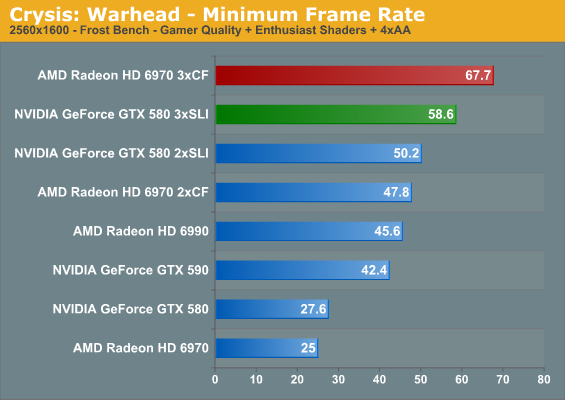

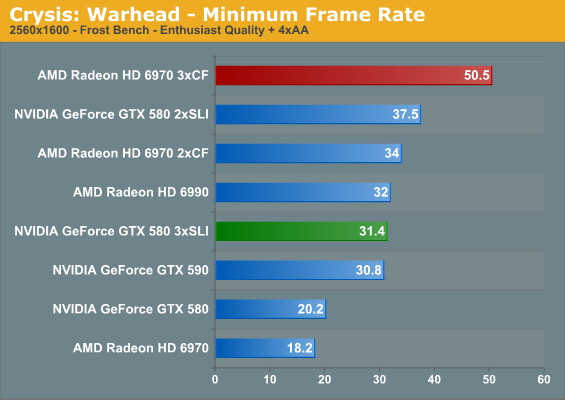

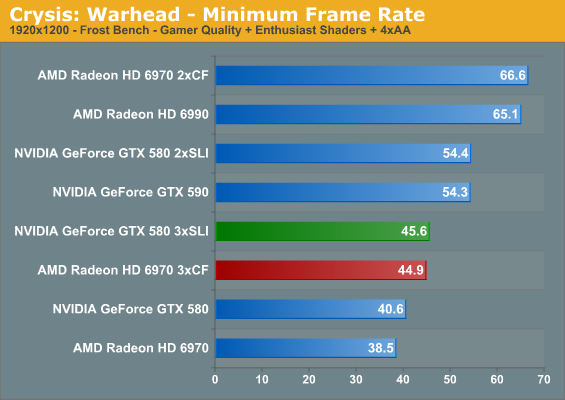

Moving on, it's interesting to note that while we had a tie at 2560 with Enthusiast settings for the average framerate, the same cannot be said of the minimums. At 2560, no matter the quality, AMD has a distinct edge in the minimum framerate. This is particularly pronounced at 2560E, where moving from two to three GPUs causes a drop in the framerate on the GTX 580. This is probably a result of the difference in the cards' memory capacity: additional GPUs require additional memory, and it seems the GTX 580 and its 1.5GB have reached their limit. We never seriously imagined we'd find a notable difference between 1.5GB and 2GB at this point in time, but here we are.

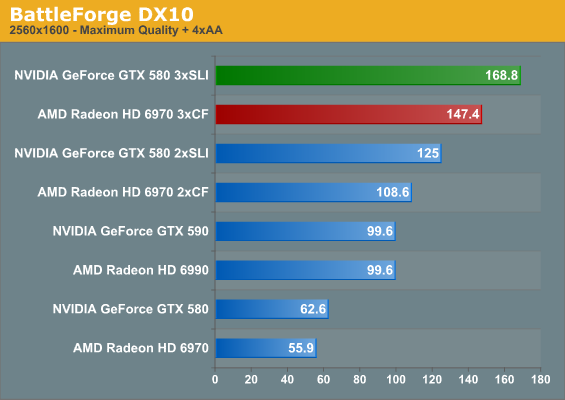

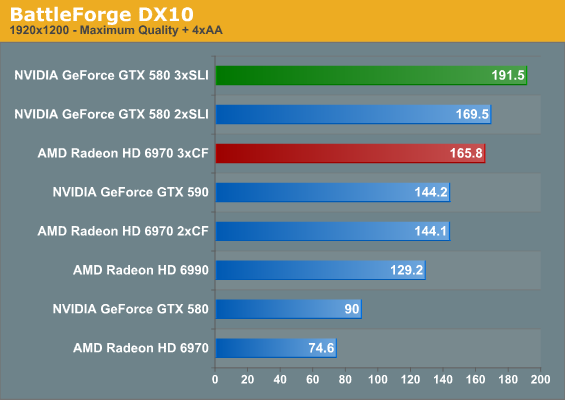

BattleForge is a shader-bound game that normally favors NVIDIA, and this doesn't change with three GPUs. However, even though it's one of our more intensive games, three GPUs are simply overkill for one monitor.

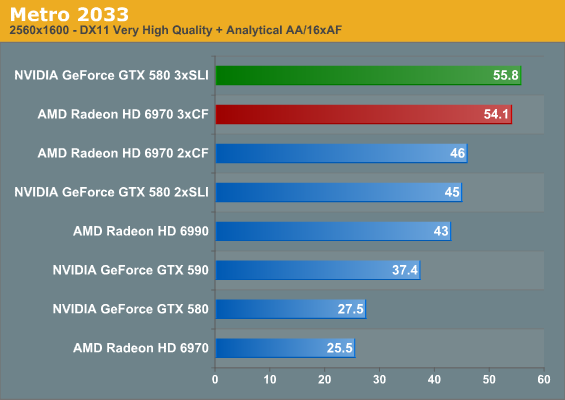

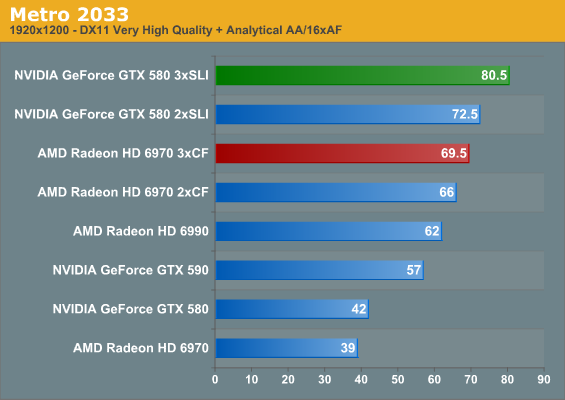

Metro 2033 is the only other title in our current lineup that can challenge Crysis for the crown of most demanding game, and here that's a bout it would win. Even with three GPUs we can't crack 60fps, and we still haven't enabled a few extra features such as Depth of Field. The 6970 and GTX 580 are normally close with one and two GPUs, and we see that relationship extend to three GPUs. The triple GTX 580 setup leads by under 2fps, which is not the lead one normally expects from the GTX 580.

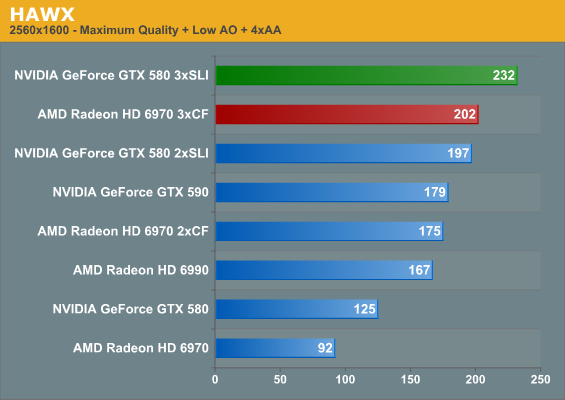

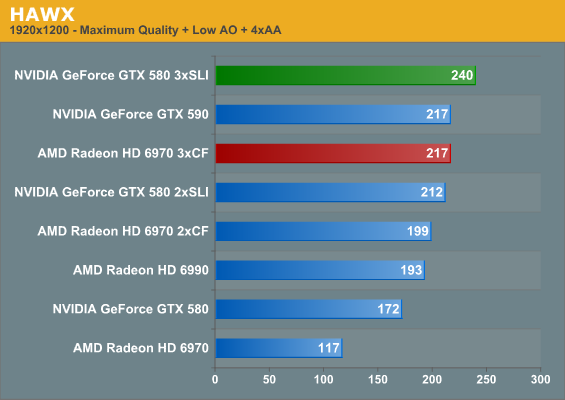

Our next game is HAWX, a title that shifts us towards games that are CPU bound. Even so, it's actually one of the most electrically demanding games in our test suite, which is why we use it as a backup for our power/temperature/noise testing. Here we see both the triple GTX 580 and triple 6970 crack 200fps at 2560, with the GTX 580 taking top honors.
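Why does a CPU-bound game stop scaling? A toy model, which is our own simplification and not how the drivers actually schedule frames, treats the delivered framerate as the lesser of what the GPUs can render and what the CPU can feed them. The single-GPU framerate, per-GPU efficiency, and CPU limit below are hypothetical values chosen only to show the shape of the curve, not measurements from this article.

```python
def delivered_fps(single_gpu_fps: float, gpus: int, efficiency: float,
                  cpu_limit_fps: float) -> float:
    """Toy model (assumption): each extra GPU adds `efficiency` of one GPU's
    throughput, but the CPU caps how many frames per second can be prepared."""
    gpu_fps = single_gpu_fps * (1 + efficiency * (gpus - 1))
    return min(gpu_fps, cpu_limit_fps)


# Hypothetical inputs, not measured values from this article.
SINGLE_GPU_FPS = 110.0
EFFICIENCY = 0.9
CPU_LIMIT_FPS = 230.0

previous = None
for n in (1, 2, 3):
    fps = delivered_fps(SINGLE_GPU_FPS, n, EFFICIENCY, CPU_LIMIT_FPS)
    step = "" if previous is None else f" ({fps / previous:.0%} of the {n - 1}-GPU result)"
    print(f"{n} GPU(s): {fps:.0f} fps{step}")
    previous = fps
```

With these made-up inputs the second GPU still adds 90%, but the third adds only about 10% because the GPUs outrun the CPU cap; that is the general pattern we expect from a game already running at 200fps or more.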

| Benchmark | Radeon HD 6970 1->2 | Radeon HD 6970 2->3 | Radeon HD 6970 1->3 | GeForce GTX 580 1->2 | GeForce GTX 580 2->3 | GeForce GTX 580 1->3 |
|---|---|---|---|---|---|---|
| Crysis G+E Avg | 185% | 134% | 249% | 181% | 127% | 230% |
| Crysis E | 188% | 142% | 268% | 184% | 136% | 252% |
| Crysis G+E Min | 191% | 141% | 270% | 181% | 116% | 212% |
| Crysis E Min | 186% | 148% | 277% | 185% | 83% | 155% |
| BattleForge | 194% | 135% | 263% | 199% | 135% | 269% |
| Metro 2033 | 180% | 117% | 212% | 163% | 124% | 202% |
| HAWX | 190% | 115% | 219% | 157% | 117% | 185% |
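Each column in the scaling table is a ratio of framerates: 1->2 is the two-GPU framerate divided by the one-GPU framerate, 2->3 is the three-GPU framerate divided by the two-GPU framerate, and so on. The 1->3 column should therefore equal the product of the other two, up to rounding. The quick consistency check below uses values transcribed from the table; the helper name is our own.

```python
# Scaling factors transcribed from the table, as (1->2, 2->3, 1->3).
SCALING = {
    "Radeon HD 6970": {
        "Crysis G+E Avg": (1.85, 1.34, 2.49),
        "Crysis E": (1.88, 1.42, 2.68),
        "Crysis G+E Min": (1.91, 1.41, 2.70),
        "Crysis E Min": (1.86, 1.48, 2.77),
        "BattleForge": (1.94, 1.35, 2.63),
        "Metro 2033": (1.80, 1.17, 2.12),
        "HAWX": (1.90, 1.15, 2.19),
    },
    "GeForce GTX 580": {
        "Crysis G+E Avg": (1.81, 1.27, 2.30),
        "Crysis E": (1.84, 1.36, 2.52),
        "Crysis G+E Min": (1.81, 1.16, 2.12),
        "Crysis E Min": (1.85, 0.83, 1.55),
        "BattleForge": (1.99, 1.35, 2.69),
        "Metro 2033": (1.63, 1.24, 2.02),
        "HAWX": (1.57, 1.17, 1.85),
    },
}


def composition_error(one_to_two: float, two_to_three: float, one_to_three: float) -> float:
    """Gap between the reported 1->3 factor and the product (1->2) * (2->3)."""
    return abs(one_to_two * two_to_three - one_to_three)


for card, rows in SCALING.items():
    for test, (s12, s23, s13) in rows.items():
        print(f"{card:16} {test:15} predicted {s12 * s23:.2f}  table {s13:.2f}  "
              f"gap {composition_error(s12, s23, s13):.3f}")
```

Every gap comes out at roughly 0.02 or less, small enough to be explained by each factor being rounded to a whole percentage.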

Having taken a look at raw performance, what does the scaling situation look like? All told, it's very good. For a dual-GPU configuration the weakest game for both AMD and NVIDIA is Metro 2033, where AMD gets 180% of a single video card's performance while NVIDIA manages 163%. At the other end, NVIDIA manages almost perfect scaling for BattleForge at 199%, while AMD's best showing is in the same game at 194%.

Adding a third GPU shakes things up significantly, however. The best-case scenario for going from two GPUs to three is 150% (three GPUs' worth of work divided by two), which appears to be a harder target to reach. At 142% under Crysis with Enthusiast settings, AMD does quite well, which is why it closes the overall performance gap there. NVIDIA doesn't do quite as well, managing 136%. The weakest result for both, meanwhile, is HAWX, which is what we'd expect for a game passing 200fps and almost assuredly running straight into a CPU bottleneck.
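The 150% ceiling follows from simple ratios: with perfect scaling, going from n to m GPUs multiplies performance by m/n. A useful way to read the 2->3 results is then the share of that ideal extra performance actually realized. The sketch below applies this to the Crysis Enthusiast figures; the function names are our own.

```python
from fractions import Fraction


def ideal_scaling(from_gpus: int, to_gpus: int) -> Fraction:
    """Best-case speedup when every GPU contributes a full GPU's worth of frames."""
    return Fraction(to_gpus, from_gpus)


def share_of_possible_gain(measured: float, from_gpus: int, to_gpus: int) -> float:
    """Fraction of the ideal extra performance that was actually realized."""
    ideal = float(ideal_scaling(from_gpus, to_gpus))
    return (measured - 1.0) / (ideal - 1.0)


print(ideal_scaling(1, 2), ideal_scaling(2, 3))  # 2 3/2

# 2->3 scaling under Crysis with Enthusiast settings (average fps), from the table.
for card, measured in (("Radeon HD 6970", 1.42), ("GeForce GTX 580", 1.36)):
    print(f"{card}: {share_of_possible_gain(measured, 2, 3):.0%} of the possible gain")
```

By this measure AMD's 142% captures about 84% of the extra 50% a third GPU could ideally add, versus about 72% for NVIDIA's 136%.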

The Crysis minimum framerates give us a moment's pause, though. AMD gets almost perfect scaling moving from two to three GPUs when it comes to minimum framerates in Crysis, while NVIDIA ends up losing performance here with Enthusiast settings. This is likely less a story about GPU scaling and more a story about GPU memory, but regardless, the outcome is a definite hit in performance. Thus, while minimum framerate scaling from one to two GPUs is rather close between NVIDIA and AMD with full Enthusiast settings and slightly in AMD's favor with Gamer + Enthusiast, AMD has a definite advantage in every case when going from two to three GPUs.
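A scaling factor below 100% means adding a GPU made things slower. The sketch below takes the 2->3 minimum-framerate factors from the table, reports AMD's lead over NVIDIA in percentage points, and flags any configuration that regressed; the dictionary layout and helper name are our own.

```python
# 2->3 scaling of minimum framerates in Crysis, from the table.
MIN_FPS_2_TO_3 = {
    "Crysis G+E Min": {"Radeon HD 6970": 1.41, "GeForce GTX 580": 1.16},
    "Crysis E Min": {"Radeon HD 6970": 1.48, "GeForce GTX 580": 0.83},
}


def regressions(table, threshold=1.0):
    """Return (test, card) pairs where adding the third GPU lowered the framerate."""
    return [(test, card) for test, cards in table.items()
            for card, factor in cards.items() if factor < threshold]


for test, cards in MIN_FPS_2_TO_3.items():
    lead = cards["Radeon HD 6970"] - cards["GeForce GTX 580"]
    print(f"{test}: AMD ahead by {lead * 100:.0f} percentage points")

print(regressions(MIN_FPS_2_TO_3))
```

Only one pair is flagged, the GTX 580 under Enthusiast settings, which lines up with the memory-capacity explanation for the minimum framerate drop.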

Sticking with average framerates and throwing out the clearly CPU-limited HAWX, neither side seems to have a strong advantage moving from two GPUs to three; the average scaling is 131%, a 31% gain, or some 62% of the 50% gain that is theoretically possible. AMD does have a slight edge here, but keep in mind that we're looking at percentages, so AMD's edge is often a couple of frames per second at best.
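The 131% and 62% figures can be reproduced from the table: average the eight 2->3 factors for average framerates (HAWX excluded), then express the gain above 100% as a share of the 50% gain a third GPU could ideally add.

```python
from statistics import mean

# 2->3 scaling of average framerates, from the table (HAWX left out as CPU limited).
# Order: Crysis G+E Avg, Crysis E, BattleForge, Metro 2033.
TWO_TO_THREE = {
    "Radeon HD 6970": [1.34, 1.42, 1.35, 1.17],
    "GeForce GTX 580": [1.27, 1.36, 1.35, 1.24],
}

IDEAL_2_TO_3 = 3 / 2  # three GPUs over two

for card, factors in TWO_TO_THREE.items():
    print(f"{card}: {mean(factors):.1%}")

overall = mean(f for factors in TWO_TO_THREE.values() for f in factors)
share = (overall - 1) / (IDEAL_2_TO_3 - 1)
print(f"overall {overall:.2%}, {share:.1%} of the theoretical maximum gain")
```

The overall mean is 131.25%, so the realized gain of about 31% is 62.5% of the possible 50%. Per vendor, AMD averages 132% and NVIDIA 130.5%, which is the slight AMD edge described above.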

Going from one GPU to two also gives AMD a minor advantage, with average scaling of 186% for AMD versus 182% for NVIDIA. Much like we've seen in our individual GPU reviews, though, this almost constantly flip-flops based on the game being tested, which is why in the end the average gains are so close.

97 Comments


taltamir - Sunday, April 3, 2011

Wouldn't it make more sense to use a Radeon 6970 + 6990 together to get triple GPU?

nVidia triple GPU seems to lower min FPS; that is just fail.

Finally: where are the Eyefinity tests? None of the results were relevant, since all are over 60fps with dual SLI.

Triple monitor+ would actually be interesting to see.

semo - Sunday, April 3, 2011

Ryan mentions in the conclusion that a triple monitor setup article is coming.

ATI seems to be the clear winner here, but the conclusion seems to downplay this fact. Also, the X58 platform isn't the only one that has more than 16 PCIe lanes...

gentlearc - Sunday, April 3, 2011

If you're considering going triple-gpu, I don't see how scaling matters other than as an FYI. There isn't a performance comparison, just more performance. You're not going to realistically sell both your 580s and go and get three 6970s. I'd really like it if you looked at lower end cards capable of triple-gpu and their merit. The 5770 crossfired was a great way of extending the life of one 5770. Two 260s was another sound choice by enthusiasts looking for a low price tag upgrade.

So, the question I would like answered is whether triple gpu is a viable option for extending the life of your currently compatible mobo. Can going triple gpu extend the life of your i7 920 as a competent gaming machine until a complete upgrade makes more sense?

SNB-E will be the cpu upgrade path, but will be available around the time the next generation of gpus are out. Is picking up a 2nd and/or 3rd gpu going to be a worthy upgrade or is the loss of selling three gpus to buy the next gen cards too much?

medi01 - Sunday, April 3, 2011

Besides, a $350 GPU is being compared to a $500 GPU. Or so it was the last time I checked on Froogle (and that was today, 3rd of April 2011).

A5 - Sunday, April 3, 2011

AT's editorial stance has always been that SLI/XFire is not an upgrade path, just an extra option at the high end, and doubly so for Tri-fire and 3x SLI.

I'd think buying a 3rd 5770 would not be a particularly wise purchase unless you absolutely didn't have the budget to get 1 or 2 higher-end cards.

Mr Alpha - Sunday, April 3, 2011

I use RadeonPro to set up per-application CrossFire settings. While it is a bummer that it doesn't ship with AMD's drivers, per-application settings are not an insurmountable obstacle for AMD users.

BrightCandle - Sunday, April 3, 2011

I found this program recently and it has been a huge help. While Crysis 2 has flickering lights (don't get me started on this game's bugs!), using Radeon Pro I could fix the CF profile and play happily, without shouting at ATI to fix their CF profiles, again.

Pirks - Sunday, April 3, 2011

I noticed that you guys never employ useful distributed computing/GPU computing tests in your GPU reviews. You tend to employ some useless GPU computing benchmarks like some weird raytracers or something, I mean stuff people would not normally use. But you never employ really useful tests like, say, distributed.net's GPU computation clients, AKA dnetc. Those dnetc clients exist in AMD Stream and nVidia CUDA versions (check out http://www.distributed.net/Download_clients - see, they have CUDA 2.2, CUDA 3.1 and Stream versions too) and I thought you'd be using them in your benchmarks, but you don't. Why?

Also check out their GPU speed database at http://n1cgi.distributed.net/speed/query.php?cputy...

So why don't you guys use this kind of benchmark in your future GPU computing speed tests instead of a useless raytracer? OK, if you think AT readers really bother with raytracers, why don't you just add these dnetc GPU clients to your GPU computing benchmark suite?

What do you think Ryan? Or is it someone else doing GPU computing tests in your labs? Is it Jarred maybe?

I can help with setting up those tests, but I don't know who to talk to among AT editors.

Thanks for reading my rant :)

P.S. dnetc GPU client scales 100% _always_, like when you get three GPUs in your machine your keyrate in RC5-72 is _exactly_ 300% of your single GPU, I tested this setup myself once at work, so just FYI...

Arnulf - Sunday, April 3, 2011

"P.S. dnetc GPU client scales 100% _always_, like when you get three GPUs in your machine your keyrate in RC5-72 is _exactly_ 300% of your single GPU, I tested this setup myself once at work, so just FYI..."

So you are essentially arguing that running dnetc tests makes no sense, since they scale perfectly proportionally with the number of GPUs?

Pirks - Sunday, April 3, 2011

No, I mean the general GPU reviews here, not this particular one about scaling