The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Intel’s Quick Sync Technology

In recent years video transcoding has become one of the most widespread consumers of CPU power. The popularity of YouTube alone has turned nearly everyone with a webcam into a producer, and every PC into a video editing station. The mobile revolution hasn’t slowed things down either. No smartphone can play full bitrate/resolution 1080p content from a Blu-ray disc, so if you want to carry your best quality movies and TV shows with you, you’ll have to transcode to a more compressed format. The same goes for the new wave of tablets.

At a high level, video transcoding involves taking a compressed video stream and further compressing it to better match the storage and decoding abilities of a target device. This is transcoding rather than encoding because the source is almost always already stored in a compressed format, the most common these days being H.264/AVC.

Transcoding is a particularly CPU intensive task because of the three dimensional nature of the compression. Each individual frame within a video can be compressed; however, since sequential frames of video typically have many of the same elements, video compression algorithms look at data that’s repeated temporally as well as spatially.
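To make the temporal redundancy concrete, here is a minimal sketch that compares a frame against the difference between it and the previous frame. The two 4x4 grayscale frames are made-up illustration data, and plain frame differencing is a simplification: real codecs such as H.264 use motion-compensated prediction rather than a straight subtraction.

```python
def frame_residual(prev_frame, curr_frame):
    """Per-pixel difference between two equally sized grayscale frames."""
    return [
        [curr - prev for prev, curr in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev_frame, curr_frame)
    ]


def count_nonzero(frame):
    """Number of values that would actually need to be stored."""
    return sum(1 for row in frame for value in row if value != 0)


# Hypothetical frames: a bright 2x2 square on a dark background.
frame_a = [
    [10, 10, 10, 10],
    [10, 200, 200, 10],
    [10, 200, 200, 10],
    [10, 10, 10, 10],
]

# The next frame is identical except for a single pixel.
frame_b = [row[:] for row in frame_a]
frame_b[0][0] = 12

residual = frame_residual(frame_a, frame_b)

print("nonzero values in full frame:", count_nonzero(frame_b))
print("nonzero values in residual:  ", count_nonzero(residual))
```

When consecutive frames are nearly identical, the residual is almost entirely zeros, which is why looking across time as well as within a frame pays off.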

I remember sitting in a hotel room in Times Square while Godfrey Cheng and Matthew Witheiler of ATI explained to me the challenges of decoding HD-DVD and Blu-ray content. ATI was about to unveil hardware acceleration for some of the stages of the H.264 decoding pipeline. Full hardware decode acceleration wouldn’t come for another year at that point.

The advent of fixed function video decode in modern GPUs is important because it helped enable GPU accelerated transcoding. The first step of the video transcode process is to decode the source video. Since transcoding involves taking a video already in a compressed format and encoding it in a new format, hardware accelerated video decode is key. The speed of the decode engine has a tremendous impact on how fast a hardware accelerated video encode can run. This is true for two reasons.

First, unlike in a playback scenario where you only need to decode faster than the frame rate of the video, when transcoding the video decode engine can run as fast as possible. The faster frames can be decoded, the faster they can be fed to the transcode engine. The second and less obvious point is that some of the hardware you need to accelerate video encoding is already present in a video decode engine (e.g. iDCT/DCT hardware).

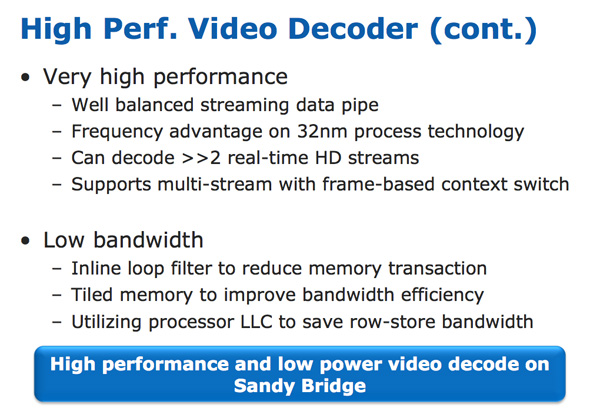

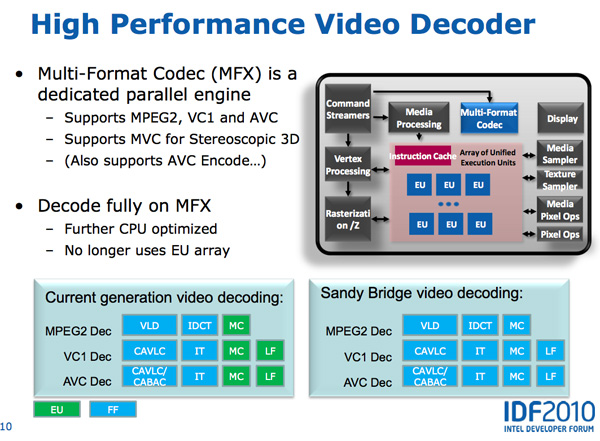

With video transcoding as a feature of Sandy Bridge’s GPU, Intel beefed up the video decode engine from what it had in Clarkdale. In the first generation Core series processors, video decode acceleration was split between fixed function decode hardware and the GPU’s EU array. With Sandy Bridge and the second generation Core CPUs, video decoding is done entirely in fixed function hardware. This is not ideal from a flexibility standpoint (e.g. newer video codecs can’t be fully hardware accelerated on existing hardware), but it is the most efficient method to build a video decoder from a power and performance standpoint. Both AMD and NVIDIA have fixed function video decode hardware in their GPUs now; neither relies on the shader cores to accelerate video decode.

The resulting hardware is both performance and power efficient. To test the performance of the decode engine I launched multiple instances of a 15Mbps 1080p high profile H.264 video running at 23.976 fps. I kept launching instances of the video until the system could no longer maintain full frame rate in all of the simultaneous streams. The table below shows the maximum number of streams I could run in parallel:

| | Intel Core i5-2500K | NVIDIA GeForce GTX 460 | AMD Radeon HD 6870 |
|---|---|---|---|
| Number of Parallel 1080p HP Streams | 5 streams | 3 streams | 1 stream |

AMD’s Radeon HD 6000 series GPUs can only manage a single high profile, 1080p H.264 stream, which is perfectly sufficient for video playback. NVIDIA’s GeForce GTX 460 does much better; it could handle three simultaneous streams. Sandy Bridge however takes the cake as a single Core i5-2500K can decode five streams in parallel.
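As a rough worked example, the stream counts can be turned into aggregate decode rates using the test clip's stated 23.976 fps and 15Mbps. The helper name `aggregate_decode_load` is purely illustrative, and the results are lower bounds only: the test shows what each part sustained at full frame rate, not its peak throughput.

```python
CLIP_FPS = 23.976       # frame rate of the test clip
CLIP_BITRATE_MBPS = 15  # bitrate of the test clip

# Maximum simultaneous streams observed in the test.
max_streams = {
    "Intel Core i5-2500K": 5,
    "NVIDIA GeForce GTX 460": 3,
    "AMD Radeon HD 6870": 1,
}


def aggregate_decode_load(stream_count, fps=CLIP_FPS, mbps=CLIP_BITRATE_MBPS):
    """Return (frames per second, Mbps) sustained across all streams."""
    return stream_count * fps, stream_count * mbps


for name, count in max_streams.items():
    total_fps, total_mbps = aggregate_decode_load(count)
    print(f"{name}: at least {total_fps:.1f} fps, {total_mbps} Mbps of 1080p H.264")
```

By this estimate, five streams work out to roughly 120 decoded 1080p frames per second, about five times what real-time playback of a single clip requires.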

The Sandy Bridge decoder is likely helped by the very large (and high bandwidth) L3 cache connected to it. This is the first advantage Intel has in what it calls its Quick Sync technology: a very fast decode engine.

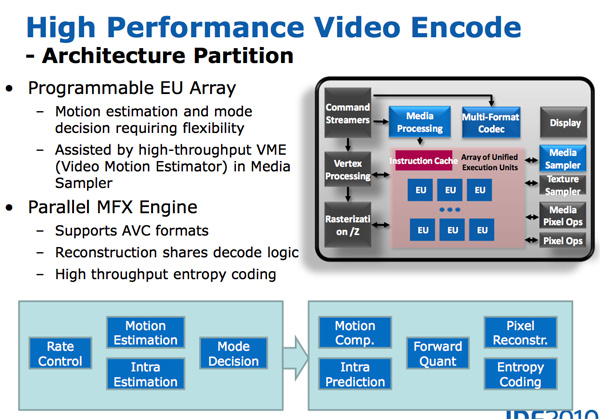

The decode engine is also reused during the actual encode phase. Once frames of the source video are decoded, they are fed to the programmable EU array to be split apart and prepared for transcoding. The data in each frame is transformed from the spatial domain (the value of each pixel at each location) to the frequency domain (how rapidly pixel values change across a block of the image); this is done with a discrete cosine transform. You may remember that inverse discrete cosine transform hardware is necessary to decode video; well, that same hardware is useful in the domain transform needed when transcoding.
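The domain transform can be sketched in a few lines. The following is a textbook orthonormal 2D DCT-II on an 8x8 block in plain Python, not a description of Intel's hardware, and it is not the integer transform that H.264 actually specifies. The function names and the test block are assumptions made for illustration.

```python
import math


def dct_1d(values):
    """Orthonormal DCT-II of a 1D sequence."""
    n = len(values)
    result = []
    for k in range(n):
        scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
        total = sum(
            value * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
            for i, value in enumerate(values)
        )
        result.append(scale * total)
    return result


def dct_2d(block):
    """Separable 2D DCT: transform every row, then every column."""
    row_pass = [dct_1d(row) for row in block]
    col_pass = [dct_1d(col) for col in zip(*row_pass)]
    # col_pass is indexed [column][frequency]; transpose back to [u][v].
    return [list(row) for row in zip(*col_pass)]


# A hypothetical flat 8x8 block: every pixel has the same value.
flat_block = [[100] * 8 for _ in range(8)]
coeffs = dct_2d(flat_block)

# Largest magnitude among all coefficients other than the DC term.
largest_ac = max(
    abs(coeffs[u][v]) for u in range(8) for v in range(8) if (u, v) != (0, 0)
)
print("DC coefficient:", round(coeffs[0][0], 6))
print("largest AC coefficient:", round(largest_ac, 6))
```

A block with no variation puts all of its energy into the single DC coefficient and leaves the other 63 at effectively zero. Smooth image regions behave similarly, which is what makes the frequency domain representation easier to compress.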

Motion search, the most compute intensive part of the transcode process, is done in the EU array. It's the combination of the fast decoder, the EU array, and fixed function hardware that makes up Intel's Quick Sync engine.
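To give a sense of why motion search is so expensive, here is a minimal sketch of exhaustive block matching using the sum of absolute differences (SAD). This is a textbook technique chosen for illustration; the article does not say which search algorithm Quick Sync runs on the EU array. The frames, block size and search radius are all hypothetical.

```python
def sad(block_a, block_b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(
        abs(a - b)
        for row_a, row_b in zip(block_a, block_b)
        for a, b in zip(row_a, row_b)
    )


def get_block(frame, top, left, size):
    """Extract a size x size block whose top-left corner is (top, left)."""
    return [row[left:left + size] for row in frame[top:top + size]]


def motion_search(ref, cur, top, left, size, radius):
    """Find the offset (dy, dx) into ref that best matches a block of cur.

    Returns (dy, dx, cost) for the lowest-SAD candidate within the radius.
    """
    target = get_block(cur, top, left, size)
    height, width = len(ref), len(ref[0])
    best = None
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            y, x = top + dy, left + dx
            # Skip candidates that fall outside the reference frame.
            if y < 0 or x < 0 or y + size > height or x + size > width:
                continue
            cost = sad(target, get_block(ref, y, x, size))
            if best is None or cost < best[2]:
                best = (dy, dx, cost)
    return best


def make_frame(size, patch_top, patch_left):
    """Dark frame with a bright 2x2 patch at the given position."""
    frame = [[10] * size for _ in range(size)]
    for y in range(patch_top, patch_top + 2):
        for x in range(patch_left, patch_left + 2):
            frame[y][x] = 200
    return frame


ref_frame = make_frame(8, 2, 2)  # patch at row 2, column 2
cur_frame = make_frame(8, 3, 4)  # patch moved down 1 and right 2

best = motion_search(ref_frame, cur_frame, top=3, left=4, size=2, radius=2)
print("best (dy, dx, cost):", best)
```

Even this toy search evaluates up to 25 candidate positions for a single 2x2 block. Scaling that to every block of every 1080p frame, with larger blocks and search windows, is what makes this stage a good fit for a parallel array of execution units.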

283 Comments

CreativeStandard - Monday, January 3, 2011 - link

PC mag reports these new i7's only support up to 1333 DDR3 but you are running faster, is PC mag wrong, what is the maximum supported memory speeds?

Akv - Monday, January 3, 2011 - link

Is it true that it has embedded DRM ?

DanNeely - Monday, January 3, 2011 - link

Only to the extent that like all intel Core2 and later systems it supports a TPM module to allow locking down servers in the enterprise market and that the system *could* be used to implement consumer DRM at some hypothetical point in the future; but since consumer systems aren't sold with TPM modules it would have no impact on systems bought without.

shabby - Monday, January 3, 2011 - link

Drm is only on the h67 chipset, and its basically just for watching movies on demand and nothing more.

Akv - Monday, January 3, 2011 - link

Mmmhh... ok...

Nevertheless the intel HD + H67 was already modest, if it has DRM in addition then it becomes not particularly seducing.

marraco - Monday, January 3, 2011 - link

Thanks for adding Visual Studio compilation benchmark. (Although you omitted the 920).

It seems that neither an SSD nor a better processor can do much for that annoying time waster. It does not matter how much money you throw at it.

I wish to see also SLI/3-way SLI/crossfire performance, since the better cards frequently are CPU bottlenecked. How much better it does relative to i7 920? And with good cooler at 5Ghz?

Note: you mention 3 video cards on test setup, but what one is on the benchmarks?

Anand Lal Shimpi - Monday, January 3, 2011 - link

You're welcome on the VS compile benchmark. I'm going to keep playing with the test to see if I can use it in our SSD reviews going forward :)

I want to do more GPU investigations but they'll have to wait until after CES.

I've also updated the gaming performance page indicating what GPU was used in each game, as well as the settings for each game. Sorry, I just ran out of time last night and had to catch a flight early this morning for CES.

Take care,

Anand

c0d1f1ed - Monday, January 3, 2011 - link

I wonder how this CPU scores with SwiftShader. The CPU part actually has more computing power than the GPU part. All that's lacking to really make it efficient at graphics is support for gather/scatter instructions. We could then have CPUs with more generic cores instead.

aapocketz - Monday, January 3, 2011 - link

I have read that CPU overclock is only available on P67 motherboards, and H67 motherboards cannot overclock the CPU, so you can either use the onboard graphics OR get overclocking? Is this true?

"K-series SKUs get Intel’s HD Graphics 3000, while the non-K series SKUs are left with the lower HD Graphics 2000 GPU."

whats the point of improving the graphics on K series, if pretty much everyone who gets one will have a P67 motherboard which cannot even access the GPU?

Let me know if I am totally not reading this right...

MrCromulent - Monday, January 3, 2011 - link

Great review as always, but on the HTPC page I would have wished for a comparison of the deinterlacing quality of SD (480i/576i) and HD (1080i) material. Ati's onboard chips don't offer vector adaptive deinterlacing for 1080i material - can Intel do better?

My HD5770 does a pretty fine job, but I want to lose the dedicated video card in my next HTPC.