ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia, the company that wanted to sell you another card to put in your system to accelerate game physics: the PPU. That idea didn't go over too well. For starters, no one wanted another *PU in their machine. Second, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia's true strength wasn't its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX in their games for free. There are no strings attached, no licensing fees, nothing. If a developer wants support, there are fees of course, but it's a great way of cutting down development costs. The physics engine in a game is responsible for modeling all Newtonian forces within the game: it determines how objects collide, how gravity works, and so on.
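To make "how objects collide, how gravity works" concrete, here is a minimal sketch of one simulation step of the kind a physics engine runs every frame: gravity accelerates a body, and hitting a flat ground plane makes it bounce. This is not PhysX code and does not reflect its API; the `Body` type, the `step` function, and the 0.5 restitution value are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2, acting along the vertical (y) axis


@dataclass
class Body:
    # Hypothetical single-axis body, not a PhysX type.
    y: float                  # height above the ground plane (m)
    vy: float                 # vertical velocity (m/s)
    restitution: float = 0.5  # assumed fraction of speed kept after a bounce


def step(body: Body, dt: float) -> None:
    """Advance one body by dt seconds using semi-implicit Euler integration."""
    body.vy += GRAVITY * dt   # apply gravity to the velocity first
    body.y += body.vy * dt    # then move using the updated velocity
    if body.y < 0.0:          # the body passed through the ground plane at y = 0
        body.y = 0.0          # push it back onto the surface
        body.vy = -body.vy * body.restitution  # bounce, losing some energy


# Drop a ball from 10 m and simulate 2 seconds at 100 steps per second.
ball = Body(y=10.0, vy=0.0)
for _ in range(200):
    step(ball, 0.01)
print(f"height={ball.y:.2f} m, velocity={ball.vy:.2f} m/s")
```

A real engine repeats this kind of update for thousands of bodies, particles, or cloth vertices per frame, with rotation and arbitrary collision shapes; that per-object repetition is the workload GPU-accelerated PhysX moves off the CPU.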

If developers wanted to, they could enable PPU-accelerated physics in their games and do some cool effects. Very few developers wanted to, because there was no real installed base of Ageia cards and Ageia wasn't large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same Catch-22 from before still exists: developers don't have to include GPU-accelerated physics, and most don't because they don't want to alienate their non-NVIDIA users. It's all about reaching the largest audience, and not everyone can run GPU-accelerated PhysX, so most developers don't use that aspect of the engine.

Then we have NVIDIA publishing slides like this:

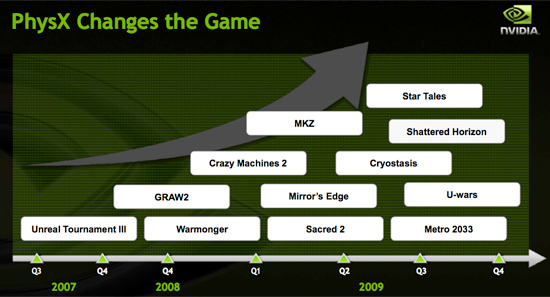

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU-accelerated PhysX support today. That's 12 titles, three of which are big ones; as for most of the rest, well, I won't go there.

A free physics API is great, and all indications are that developers like PhysX.

The next several slides in NVIDIA’s presentation go into detail about how GPU accelerated PhysX is used in these titles and how poorly ATI performs when GPU accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable for their performance claims; it was about time we did the same for these other claims. Shall we?

Our goal was simple: we wanted to know whether the GPU-accelerated PhysX effects in these titles were useful. And if they were, would they be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider: someone who hadn't been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I've been far too busy even for Ben's quiet gaming in the office. First there were SSDs, then GDC, and then this article. But when I needed someone to play a bunch of games and tell me whether he noticed GPU-accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally I prefer this machine; it's larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice. I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn't going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments

Hauk - Thursday, April 2, 2009 - link

Congrats ATI, the 4890 is a strong performer! So much chatter about what constitutes rebadging; at the end of the day it's performance that matters. The 4890 does a great job for the money. The GTX 275 performs well but lacks excitement IMO. Nothing surprising or exciting; we've already seen a 240 shader enabled GPU on a 260 style interface (x2). If anything, the 285 receives strong competition from both the 4890 and the 275. It makes little sense for it to remain at its price point. Its price should be $300.

Times are tight. Cheers to competition...

slickr - Thursday, April 2, 2009 - link

I can't believe how biased anandtech has become. I've checked all other review sites and in all of them the GTX275 was winning by a pretty big margin; here it actually loses to the HD4890.

Now I'm not a fanboy for either, I've had 2 nvidia graphics cards and 2 ATI cards, the current one is ATI, but this bias thing can't go unnoticed.

Some investigators must be summoned to deal with anandtech, this has been going on for quite a while now.

z3R0C00L - Friday, April 3, 2009 - link

I see the difference... those "other reviews" used the Catalyst 9.3 drivers. Anandtech, HardOCP and Firingsquad used the new 9.4 Beta drivers.

No bias on Anandtech's part. Rather a bias from those other sites who used the new nVIDIA BETA driver but not the ATi one that has proper support for the 4890.

B3an - Thursday, April 2, 2009 - link

I don't normally take notice of comments like this on here, but it does seem a little like it. It's as if NV have pissed off Anandtech with their dirty tactics (understandable), and Anandtech are being a little biased because of this.

JarredWalton - Thursday, April 2, 2009 - link

I've looked at three reviews (FiringSquad, THG, and HardOCP; also Xbitlabs, but they didn't have the GTX 275 in their results). I'm not quite sure what horribly biased and inaccurate results we're supposed to have, as most of the tests are quite similar to ours. Two sites, HardOCP and FiringSquad, essentially end up with a tie. THG favors the 275, at least at lower resolutions and without 4xAA, but then several of the games they test we didn't use, and vice versa. (The 4890 also beats the 275 there if you run 4xAA at 2560x1600.)

Obviously, we had a lengthy rant on CUDA and PhysX and discussed the usefulness of those features (conclusion: meh), but with all the marketing in that area it was something that was begging to be done. Pricing, availability, and drivers are still areas you need to look at, but it's really a very close race.

If you have reviews that show very different results than what I'm seeing, post the name of the site rather than making vague claims like, "I've checked all other review sites and in all the GTX275 was winning by a pretty big margin, here it actually looses to the HD4890."

SkullOne - Thursday, April 2, 2009 - link

That's different than what I've seen. I dunno what sites you visit, but all of the ones I've been to show them just about neck and neck, or the 4890 just edging out the 275. Personally I give the edge to the 4890 due to its high overclockability.

Spoelie - Thursday, April 2, 2009 - link

p=2: "As we can clearly see, in the cards we *r*ested"
p=11: "particles are one of the most difficult things to do on the CPU" *thanks*

Drivers? The test table says 8.12 hotfix, but we're at 9.3/9.4 now...

Yojimbo - Thursday, April 2, 2009 - link

First you say you aren't concerned about the 4890 being a rebadge because at the end of the day it's performance that matters, and then you say the GTX 275 lacks excitement because "we've already seen a 240 shader enabled gpu on a 260 style interface (x2)," whatever the significance of already seeing that is. Aren't these contradictory statements?

SiliconDoc - Thursday, April 23, 2009 - link

Of course it's contradictory, it's a red rooster statement. Then the 3m they use to just crank a few more mhz is the rework... LOL. Pay homage to the red and hate green and spew accordingly with as many lies as possible or you won't fit in here - been like that for quite some time. Be smug and arrogant about it, too, and never admit your massive errors - that's how to do it.

Make sure you whine about nvidia and say you hate them in as many ways as possible, as well - be absolutely insane mostly, that's what works - like screaming they can take down nvidia when the red rooster shop has been losing a billion a year on a billion in sales.

Be an opposite man.

Of course it's contradictory. Duhh.. they're insane man - they are GONERS.