Analyzing Falkor’s Microarchitecture: A Deep Dive into Qualcomm’s Centriq 2400 for Windows Server and Linux

by Ian Cutress on August 20, 2017 11:00 AM EST

Posted in: CPUs, Qualcomm, Enterprise, SoCs, Enterprise CPUs, ARMv8, Centriq, Centriq 2400

Closing Thoughts: Qualcomm’s Competition

For the most part, five or six major names in this space are competing for the bulk of data center business: Intel, AMD, IBM, Cavium, and now Qualcomm. The first two are based on the omnipresent x86 architecture and use different microarchitecture designs; between them they account for most of the market (and Intel is most of that).

Intel’s main product is the Xeon Scalable Processor Family, launched in July. It builds on a new version of their 6th Generation core design by increasing the L2 cache, adding support for AVX-512, moving to an internal mesh topology, and offering up to 28 cores with 768 GB of DRAM per socket (up to 1.5 TB with special models). Omni-Path versions are also available, and the chipset ecosystem can add support for 10 gigabit Ethernet natively, at the expense of PCIe lanes. Xeon systems can be designed with up to 8 sockets natively, depending on the processor used (and cost). Interested customers can buy these parts today from OEMs.

Intel also has the latest generation of Atom cores, found in the new Denverton products. While Intel doesn’t necessarily promote these cores for the data center, some OEMs such as HP have developed ‘Moonshot’-style deployments that place up to 60 SoCs with up to 8 cores each in a single server (which can move up to 16 cores per SoC with Denverton).

- Intel Unveils the Xeon Scalable Processor Family: Skylake-SP in Bronze, Silver, Gold and Platinum

- Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade

AMD, meanwhile, launched their return to the high-end server market earlier this year with EPYC. This product uses their new high-performance Zen microarchitecture, and implements a multi-silicon die design to support up to 32 cores and 2 TB of DRAM per socket. By implementing their new Infinity Fabric technology, AMD is promoting a wide bandwidth product that, despite the multi-silicon design, is engineered with strong FP units and plenty of memory and IO bandwidth. Each EPYC processor offers 128 PCIe lanes for add-in cards or storage, and can use 64 of those lanes to connect to a second socket, offering 64 cores/128 threads with 4 TB of DRAM and 128 PCIe lanes in a 2P system. AMD is slowly rolling out EPYC to premium customers first, with wider availability during the second half of 2017.
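The 2P totals quoted above follow from simple per-socket arithmetic, which the short Python sketch below reproduces. The per-socket figures are the ones given in the text; the two-threads-per-core value is inferred from the 64 cores/128 threads figure, and the function and constant names are purely illustrative.

```python
# Per-socket figures quoted in the article for a top-end EPYC part.
CORES_PER_SOCKET = 32
THREADS_PER_CORE = 2             # inferred from the 64 cores/128 threads figure
DRAM_TB_PER_SOCKET = 2
PCIE_LANES_PER_SOCKET = 128
LANES_USED_FOR_SOCKET_LINK = 64  # lanes each socket gives up to reach the other


def two_socket_totals(sockets: int = 2) -> dict:
    """Return aggregate resources for the dual-socket (2P) case."""
    if sockets != 2:
        raise ValueError("this sketch only models the 2P case described in the text")
    cores = CORES_PER_SOCKET * sockets
    # Each socket keeps only the lanes not spent on the socket-to-socket link.
    free_lanes = (PCIE_LANES_PER_SOCKET - LANES_USED_FOR_SOCKET_LINK) * sockets
    return {
        "cores": cores,
        "threads": cores * THREADS_PER_CORE,
        "dram_tb": DRAM_TB_PER_SOCKET * sockets,
        "pcie_lanes": free_lanes,
    }


if __name__ == "__main__":
    print(two_socket_totals())
    # {'cores': 64, 'threads': 128, 'dram_tb': 4, 'pcie_lanes': 128}
```

The point of interest is the lane count: a second socket doubles cores and memory, but because each processor spends 64 lanes on the link, the lanes left for add-in cards and storage stay at 128.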

IBM is perhaps the odd one out here, but due to its size it is hard to ignore. IBM’s POWER architecture, and the subsequent POWER8 and upcoming POWER9 designs, lean heavily on the ‘more of everything’ approach: more cores, wider cores, more threads per core, more frequency, and more memory, which translates to more cost and more energy. IBM’s partners can have custom designs of the microarchitecture implementation depending on their needs, as IBM tends to focus on the more mission critical mainframe infrastructure, but it is slowly attempting to move into the traditional data center market. Large numbers such as ‘5.2 GHz’ can be enough to cause potential customers to do a double take and analyze what IBM has to offer. We’ve tested IBM’s base POWER8 in the lab, and POWER9 is just around the corner.

- Assessing IBM's POWER8, Part 1: A Low Level Look at Little Endian

- Assessing IBM's POWER8, Part 2: Server Applications on OpenPOWER

Cavium is the most notable public player using ARM designs in commercial systems so far (there are a number of non-public players focusing on niche scenarios, or who have little exposure outside of China). The original design, the Cavium ThunderX, uses a custom ARMv8 core, and is designed to provide large numbers of small CPU cores with as much memory bandwidth and IO as possible. For a design that uses relatively simple 2 instruction-per-clock CPU cores, the ThunderX chips are quite large, and Cavium is positioning that product in the high performance networking market as well as environments where core counts matter more than peak performance, as seen in our review, which pegged per-core performance at the level of Intel’s Atom chips. The newer ThunderX2 is aiming at HPC workloads, so it will focus more on higher per-core performance. With ARM having recently announced the A75 and A55 cores under the DynamIQ banner, we’re expecting Cavium’s future designs to use a number of new design choices.

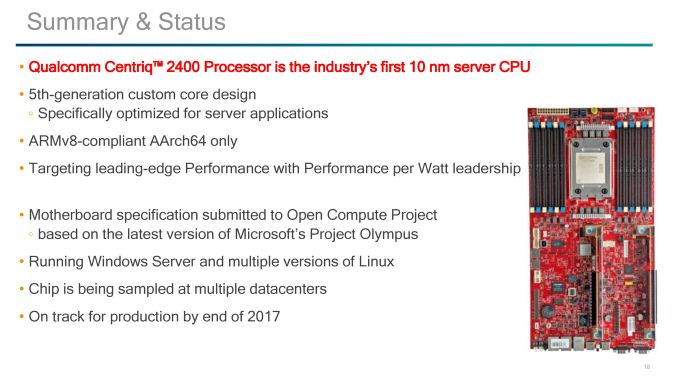

So now Qualcomm enters the fray with the Centriq 2400 family, using Falkor cores, aiming to go above Cavium and push into the traditional x86 and data center arena, where others have tried and become stuck in a bit of a quagmire. Qualcomm is hoping that its expertise within the ARM ecosystem, as well as the clout of the new product, will be something that the Big Seven Plus One cannot ignore. One big hurdle is that this space is traditionally x86, so moving to ARM potentially requires code changes and recompiling, which can lose software efficiency developed over a decade. Another is the Windows Server market, which Qualcomm is addressing with Microsoft through a form of x86 emulation. Much like we have been hearing about Windows 10 on Qualcomm’s Snapdragon 835 mobile chipsets, Qualcomm is going to be supporting Windows Server on Centriq 2400-series SoCs.

Wrapping things up, while Qualcomm has given us more information than we expected, we’d still love to hear exact numbers for L2 and L3 cache sizes, die sizes, TDPs, frequencies (we’ve been told >2.0 GHz with no turbo modes), the different SKUs coming to market, and confirmation about which foundry partner they are using. Qualcomm will also have to be wary about ensuring sufficient support on all operating systems for customers that are interested, especially if this hardware migrates out of the specific customer set that is amenable to testing new platforms.

The Centriq 2400 family is currently being sampled in data centers, and is moving into production by the end of 2017. The media sample timeframe is unknown; however, we're hoping we can get one in for testing before too long.

41 Comments


DanNeely - Sunday, August 20, 2017 - link

Mobile CSS needs fixed. Bulleted lists need to wrap, instead of overflowing into horizontal scroll as they currently do on Android.

twotwotwo - Sunday, August 20, 2017 - link

The things I most wonder about the chip itself are, how much worse will single-thread speed be, and how much better will throughput/$ be?

For serial speed, there's sort of a sliding scale of "good enough"; you can certainly find uses for chips that are slower than Intel's large cores, but as single-thread perf gets worse and worse, more types of app become tricky to run on it because of latency. So you want latency stats for typical enterprisey Java or C# (or, heck, Go) Web apps or widely used databases or infrastructure tools. That's also a test of how well compilers and runtimes are tuned for ARMv8, the chip, and life with many cores, but since early customers will have to deal with the ecosystem that exists today, that's reasonable.

For cost-effective throughput I guess we need to have an idea of at least price and power consumption, and parallel benchmarks that will hit bottlenecks a single-threaded one might not, like memory bandwidth. And the toughest comparison is probably against Intel's parts in the same segment, Xeon D and their server Atom chips. Something that makes it harder to win big on throughput/$ is that the CPU's cost and power consumption are only a piece of the total: DRAM, storage, network, and so on account for a lot of it. Also, the big cloud customers Qualcomm wants to win probably aren't paying the same premiums as you and I are to Intel.

Then, aside from questions about the chip itself, there are questions about the ecosystem and customers. There are the questions above of how well toolchains and software are tuned. Maybe the biggest question is whether some big customer will make the leap and do some deployments on lots of slower cores. It might be a strategic long-term bet for some big cloud company that wants more competition in the server chip space, but I bet they have to be willing to lose real money on the effort for a generation or two first.

name99 - Monday, August 21, 2017 - link

Intel sells 28 core CPUs that run at 2.1 GHz (and turbo up to 3.8GHz but see below).

Hell, they sell 16 core systems that run at 2.0 GHz and only turbo up to 2.8 GHz.

Remember QC is not TRYING to sell these to amateurs, or even as office servers. They are targeted at data warehouse tasks where the job they're doing will be pretty well defined, and it's expected for the most part that ALL the cores will be running (ie when the work load lightens, you shut down entire dies and then racks, you don't futz around with just shutting down single cores).

For environments like that, turbo'ing is of much less value. QC doesn't have to (and isn't) targeting the entire space of HPC+server+data warehouse, just the part that's a good match to what they're offering.

Threska - Tuesday, August 22, 2017 - link

Well there is the small developer virtualization market like Ansible.

prisonerX - Monday, August 21, 2017 - link

A preoccupation with single thread performance is the domain of video game playing teenagers and not terribly important, neither is the "latency" you refer to. This sort of high-core, efficient processor is going to be used where throughput and price/power/performance ratio (ie, all three, not just any one of those), are the key metric.

Latency is mostly irrelevant since processing will be stream oriented and bandwidth limited rather than hamstrung by latency (thus features such as memory bandwidth compression). Gimmicks like "turbo" (which should be called by its proper name: "throttling") and favoring single thread performance are counterproductive in this mode. Being able to deploy many CPUs in dense compute nodes is what is required and memory, storage and networking are minor parts here.

I don't know why you think compilers or runtimes are a concern; 99.9% of code is common across archs, so if you've supported a lot of x86 cores your code is going to function well for a lot of ARM cores with a small amount of arch specific configuration. The compilers themselves, namely GCC and LLVM, are mature, as is their support for ARM.

Finally the new ARM CPUs don't have to beat Intel, just stay roughly competitive, because the one thing the tech industry hates more than a monopoly is a monopoly that has abused its monopoly powers, and Intel is it. Industry is itching for an alternative, and near enough is good enough.

deltaFx2 - Thursday, August 24, 2017 - link

"Industry is itching for an alternative": While this is true, is the industry truly interested in an alternative ISA, or an alternative supplier? Because there is one now in the x86 space, and it is very competitive, and in some metrics better than Intel. Also, your argument about single threaded performance being irrelevant in servers is false. A famous example of this is a paper in ISCA by Google folks arguing in favor of high IPC machines (among other things). They also note that memory bandwidth is not as critical as latency. Now this is specific to Google, but in plenty of other cases too, unless you have a very lopsided configuration, bandwidth doesn't get anywhere near saturation. There are also plenty of server users who provide extra cooling capacity to run at higher than base frequencies because it's cheaper than scaling out to more nodes. Obviously, your workloads should scale with freq.

"Finally the new ARM CPUs don't have to beat Intel," -> change Intel to AMD. AMD is hugely motivated to compete on price and has the performance to match Intel in many workloads. And AMD's killer app is the 1P system, exactly where Qualcomm intends to go. You also have to add the cost of porting from x86->ARM (recompile, validation, etc). Time is money and employees need to be paid. So the question is, why ARM? More threads/socket? Nope. More memory/socket? Nope. More perf/thread? Probably not based on the architecture described but we'll see. More connectivity then? Nope. Lower absolute power? Maybe. Lower cost? I suspect AMD's MCM design is great for yields. And there's the porting cost if you're not already on ARM.

There's a lot more work to be done and money to be spent before ARM becomes competitive in the mainstream server space. QC has the deep pockets to stick it out, but I am not sure about Cavium.

Gc - Sunday, August 20, 2017 - link

Confusing terminology: prefetch vs. fetch

Prefetch heuristics predict *future* memory addresses based on past memory access patterns, such as sequential or striding patterns, and try to prefetch the relevant cache lines *before* a miss occurs, attempting to avoid the cache miss or at least reduce the delay. A memory fetch to satisfy a cache miss is not a prefetch.

Slide: "Hardware Prefetch on L1 miss."

Text: "An L1 miss will initiate a hardware prefetch."

The initial fetch is after the miss, so the initial fetch is not a 'prefetch'. I assume this means that it is not only fetching the missed cache line but also triggering the prefetchers to fetch additional cache lines.

Slide: "Hardware data prefetch engine Prefetches for L1, L2, and L3 caches"

Text: "If a miss occurs on the L1-data cache, hardware data prefetchers are used to probe the L2 and L3 caches."

The slide is saying that data is prefetched at all three levels of cache. I'm not sure what the text is saying. Probing refers to querying the caches around the fabric to see which if any holds the requested cache line. This is part of any fetch, not specific to prefetching. Maybe the text is trying to say that the prefetchers not only remember past addresses and predict future addresses, but also remember which cache held past addresses and predicts which cache holds fetched and prefetched addresses?
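As an illustration of the fetch versus prefetch distinction drawn in this comment, here is a minimal Python sketch of a single-stream stride prefetcher. It is a toy model under stated assumptions, not a description of Falkor's hardware: the 64-byte line size, the unbounded cache, the "same stride twice" trigger, and every name in the code are hypothetical.

```python
LINE_SIZE = 64  # bytes per cache line (assumed for this sketch)


class StridePrefetcher:
    """Toy model: an unbounded cache plus a single-stream stride detector."""

    def __init__(self, degree: int = 1, enabled: bool = True):
        self.cache = set()        # line numbers currently held
        self.last_line = None     # line touched by the previous access
        self.last_stride = None   # stride between the previous two accesses
        self.degree = degree      # how many lines ahead to prefetch
        self.enabled = enabled
        self.demand_fetches = 0   # fetches issued *after* a miss
        self.prefetches = 0       # fetches issued *before* any miss

    def access(self, address: int) -> bool:
        """Touch an address; return True on a cache hit."""
        line = address // LINE_SIZE
        hit = line in self.cache
        if not hit:
            # Demand fetch: it reacts to a miss, so it is not a prefetch.
            self.cache.add(line)
            self.demand_fetches += 1
        if self.enabled and self.last_line is not None:
            stride = line - self.last_line
            # Predict only once the same non-zero stride has repeated.
            if stride != 0 and stride == self.last_stride:
                for i in range(1, self.degree + 1):
                    target = line + i * stride
                    if target not in self.cache:
                        self.cache.add(target)
                        self.prefetches += 1
            self.last_stride = stride
        self.last_line = line
        return hit


if __name__ == "__main__":
    with_pf = StridePrefetcher()
    without_pf = StridePrefetcher(enabled=False)
    for i in range(16):               # sequential walk over 16 cache lines
        with_pf.access(i * LINE_SIZE)
        without_pf.access(i * LINE_SIZE)
    print(with_pf.demand_fetches, with_pf.prefetches)        # 3 14
    print(without_pf.demand_fetches, without_pf.prefetches)  # 16 0
```

In the sequential walk, the first three accesses miss and are satisfied by demand fetches; from then on the detector has seen the stride repeat, so each line is already present when it is touched. Only those later, predicted fetches count as prefetches in the sense the comment describes.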

YoloPascual - Monday, August 21, 2017 - link

Inb4 fanless data centers near the equator.

KongClaude - Tuesday, August 22, 2017 - link

'however Samsung does not have much experience with large silicon dies'

I don't remember the actual die size for the DEC Alphas that Samsung fabbed back in the day, but the Alpha was a fairly large CPU even by today's standards. Would they have let go of that knowledge, or is Alpha being relegated to low-volume/not much experience?

psychobriggsy - Tuesday, August 22, 2017 - link

We should also consider that GlobalFoundries licensed Samsung's 14nm after digging their own 14nm hole and failing to get out of it, and right now AMD are making 486mm^2 Vega dies on that process. The process doesn't have a massive maximum reticle size however, IIRC it's around 700mm^2, whereas TSMC can do just over 800mm^2 on their 16nm.