Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up but hard to master.

Benchmarking Civilization has always been something of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood even something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics cards and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more pertinent benchmark would be the late game, when in older versions of Civilization it could take 20 minutes to cycle through the AI players before the human player regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio due to technical issues we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

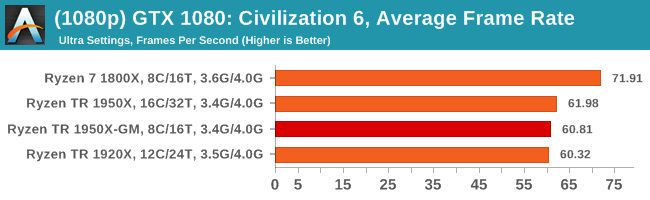

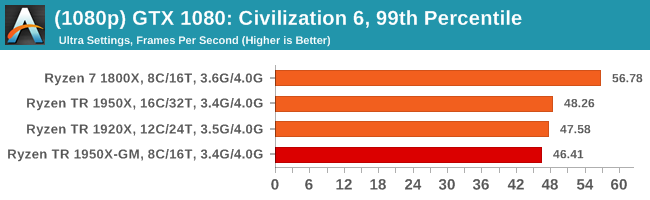

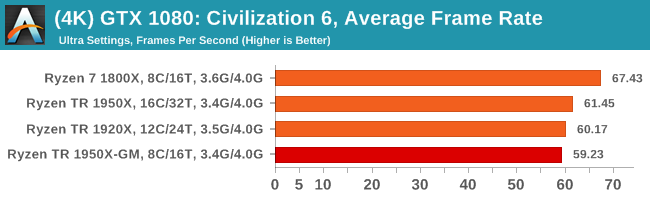

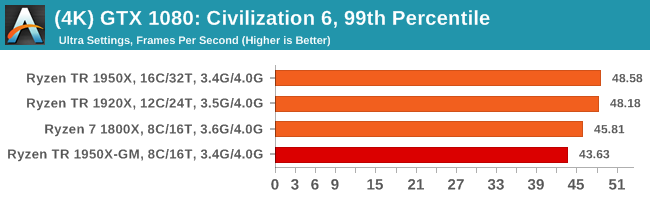

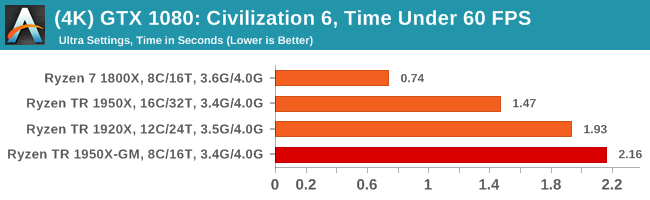

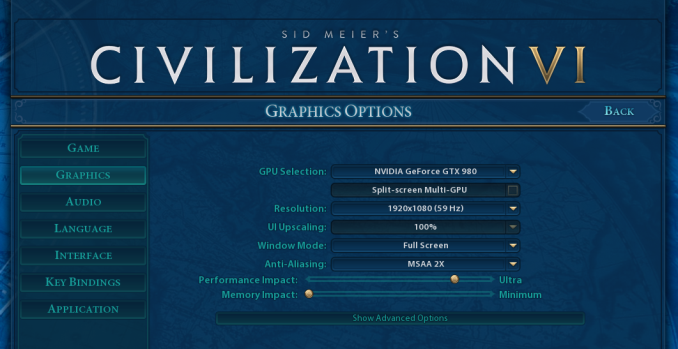

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark at position four for Performance (ultra) and 0 for Memory, with MSAA set to 2x.

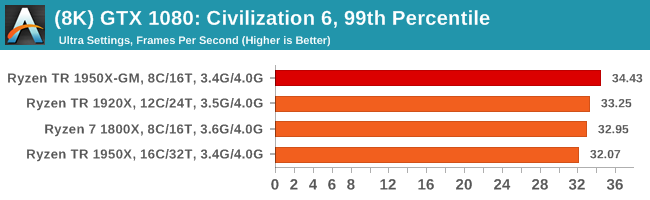

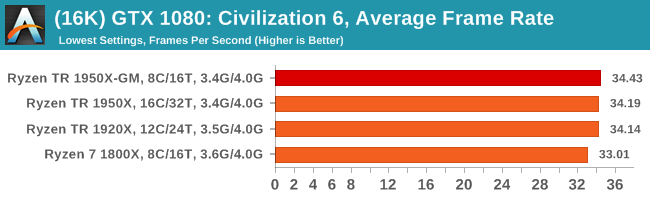

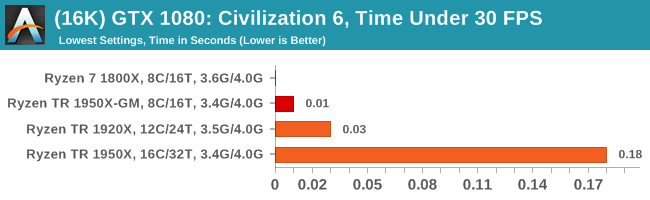

For reviews where we include 8K and 16K benchmarks on our GTX 1080 (Civ6 allows us to benchmark extreme resolutions on any monitor), we run the 8K tests with the same settings as the 4K tests, but the 16K tests use the lowest option for Performance.
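As a sketch of how the resolution-dependent settings above fit together, the test matrix can be written as a small lookup. The key names (`msaa`, `performance_impact`, `memory_impact`) and the function `civ6_settings` are hypothetical labels for this illustration, not part of Civ6 or our test harness. We also assume the MSAA and Memory sliders stay unchanged at 16K, since only the Performance slider is described as changing.

```python
# Hypothetical encoding of the Civ6 test settings described above.
# Sliders run from 0 (lowest) to 5 (extreme); this layout is illustrative,
# not a Civ6 configuration file format.
BASE_SETTINGS = {
    "msaa": "2x",
    "performance_impact": 4,  # position four, "ultra"
    "memory_impact": 0,
}


def civ6_settings(resolution: str) -> dict:
    """Return the slider settings used at a given test resolution."""
    settings = dict(BASE_SETTINGS)  # copy so the base entry is never mutated
    if resolution == "16K":
        # Only Performance drops to its lowest option; the other sliders
        # are assumed to stay as they are for 1080p, 4K and 8K.
        settings["performance_impact"] = 0
    elif resolution not in ("1080p", "4K", "8K"):
        raise ValueError(f"untested resolution: {resolution}")
    return settings


for res in ("1080p", "4K", "8K", "16K"):
    print(res, civ6_settings(res))
```

Writing it this way makes the one exception (16K) explicit, so 1080p, 4K and 8K share a single source of truth.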

All of our benchmark results can also be found in our benchmark engine, Bench.

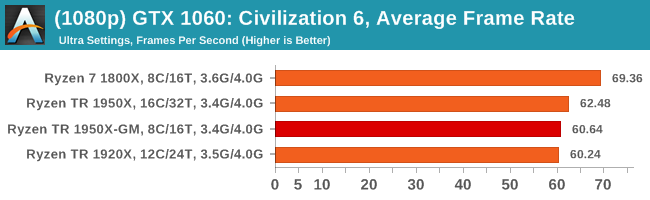

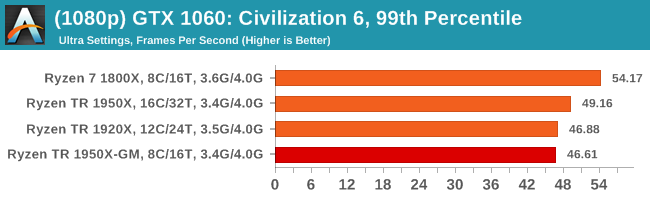

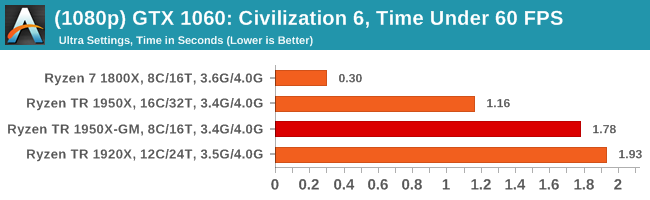

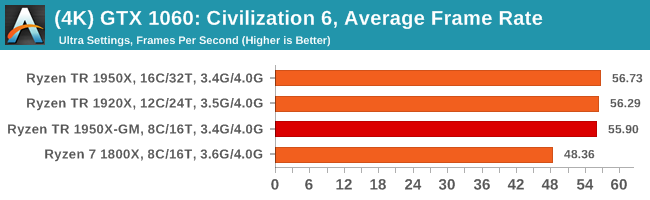

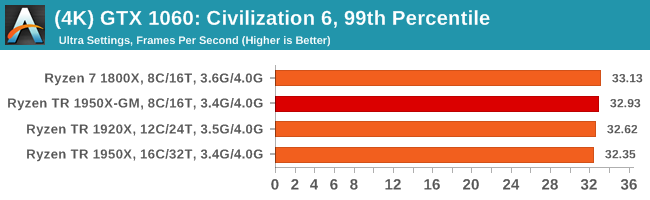

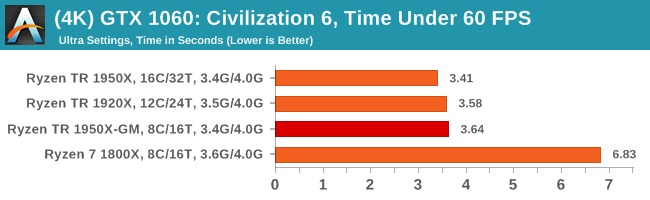

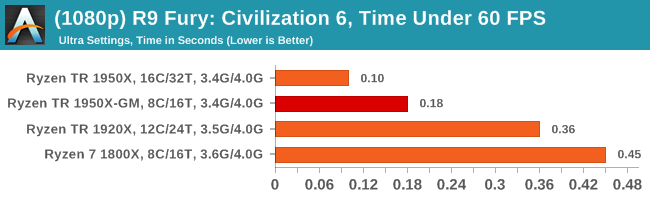

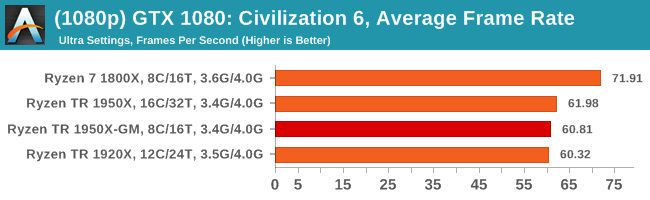

We tested both the 1950X and the 1920X at their default settings, as well as the 1950X in Game Mode, labeled 1950X-G.

MSI GTX 1080 Gaming 8G Performance (1080p, 4K, 8K, 16K)

ASUS GTX 1060 Strix 6G Performance (1080p, 4K)

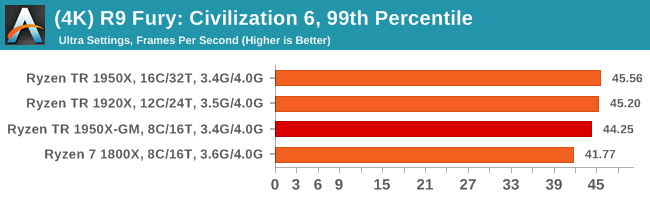

Sapphire Nitro R9 Fury 4G Performance (1080p, 4K)

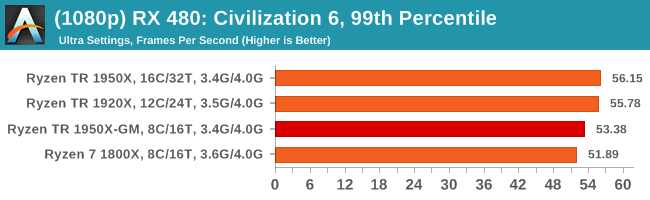

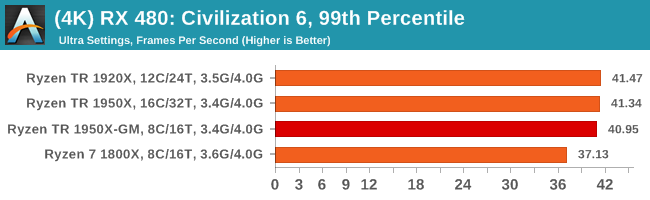

Sapphire Nitro RX 480 8G Performance (1080p, 4K)

104 Comments

peevee - Friday, August 18, 2017

Of course. Work CPUs must be tested at work. Kiddies are fine with i3s.

IGTrading - Thursday, August 17, 2017

It would be nice and very useful to post some power consumption results at the platform level, if we're doing "extra" additional testing. It is very important since we're paying for the motherboard just as much as we pay for a Ryzen 5 or even Ryzen 7 processor.

And it will correctly compare the TCO of the X399 platform with the TCO of X299.
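A minimal sketch of the TCO arithmetic being asked for, assuming TCO is simply the platform purchase price plus the electricity used over the ownership period (ignoring resale value, cooling and every other cost). The function name `platform_tco` and its parameters are hypothetical, and any values passed to it should be measured X399 or X299 figures; none are supplied here.

```python
def platform_tco(hardware_cost: float, avg_power_watts: float,
                 hours_per_day: float, years: float,
                 price_per_kwh: float) -> float:
    """Hypothetical TCO: purchase price plus energy cost over the period.

    hardware_cost   -- CPU + motherboard (+ anything else counted), in currency
    avg_power_watts -- average wall power of the whole platform under the workload
    """
    energy_kwh = avg_power_watts / 1000.0 * hours_per_day * 365 * years
    return hardware_cost + energy_kwh * price_per_kwh
```

Comparing two platforms then comes down to calling it once per platform with that platform's price and measured average wall power, and keeping the usage pattern (hours per day, years, electricity price) the same for both.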

jordanclock - Thursday, August 17, 2017

So it looks like AMD should have gone with just disabling SMT for Game Mode. There are way more benefits and it is easier to understand the implications. I haven't seen similar comparisons for Intel in a while; perhaps that could be an exploration for Skylake-X as well?

HStewart - Thursday, August 17, 2017

I would think disabling SMT would be better, but the reason may lie in the design of the link between the two 8-core dies on the chip.

GruenSein - Thursday, August 17, 2017

I'd really love to see a frame time probability distribution (frame time on the x-axis, rate of occurrence on the y-axis). Especially in cases with very unlikely frames below a 60Hz rate, the difference between TR and TR-GM/1800X seems most apparent. Without the distribution, we will never know whether we are seeing the same distribution slightly shifted towards lower frame rates, as the slopes of the distribution might be steep. Frames that miss the 60Hz threshold might be real stutters down to a 30Hz rate, but they might just as well be frames at a 59.7Hz rate. I realize why this threshold was selected, but every threshold is quite arbitrary.

MrSpadge - Thursday, August 17, 2017

Does AMD comment on the update? What's their reason for choosing 8C/16T over 16C/16T?

> One could postulate that Windows could do something similar with the equivalent of hyperthreads.

They're actually already doing that. Loading 50% of all threads on an SMT machine will result in ~50% average load on every logical core, i.e. all physical cores are only working on 1 thread at a time.

I know other schedules are mathematically possible, leading to the same result, but by now I think it's common knowledge that the default Windows scheduler works like that. Hence most lightly threaded software is indifferent to SMT. Except games.
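A toy model of the "one thread per physical core first" placement described above. This is a hypothetical sketch (`spread_first` and `max_threads_per_core` are made-up names), not the actual Windows scheduler, which migrates threads over time and weighs many more factors. It only shows why, under that policy, a workload with no more runnable threads than physical cores never has two threads sharing one core's SMT siblings.

```python
from collections import Counter


def spread_first(num_threads: int, physical_cores: int,
                 smt_ways: int = 2) -> list[int]:
    """Toy static placement: return the physical core index for each thread.

    Thread i goes to core i % physical_cores, so a core only receives a
    second thread once every core already has one.
    """
    if num_threads > physical_cores * smt_ways:
        raise ValueError("more runnable threads than logical CPUs")
    return [i % physical_cores for i in range(num_threads)]


def max_threads_per_core(placement: list[int]) -> int:
    """Largest number of threads sharing one physical core."""
    return max(Counter(placement).values(), default=0)


# 1950X in Creator Mode: 16 physical cores, 2-way SMT (32 logical CPUs).
print(max_threads_per_core(spread_first(16, 16)))  # half the logical CPUs busy
print(max_threads_per_core(spread_first(24, 16)))  # some cores now doubled up
```

With 16 threads on 16 cores each core runs one thread, so SMT costs nothing; only past that point do threads start competing for a core's resources.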

NetMage - Sunday, August 20, 2017

Then why did SMT mode show differences from Creator mode in the original review?

Dribble - Thursday, August 17, 2017

No one is ever going to run Game Mode. Why buy a really expensive chip and then disable half of it, especially as you have to reboot to do it? Its only use is to make Threadripper look slightly better in reviews. IMO it would be more honest as a reviewer to just run it in Creator Mode all the time.

jordanclock - Thursday, August 17, 2017

The point is compatibility, as mentioned in the article multiple times. AMD is offering this as an option for applications (mainly games) that do not run correctly, if at all, on >16 core CPUs.