Investigating Cavium's ThunderX: The First ARM Server SoC With Ambition

by Johan De Gelas on June 15, 2016 8:00 AM EST - Posted in SoCs, IT Computing, Enterprise, Enterprise CPUs, Microserver, Cavium

Benchmarks Versus Reality

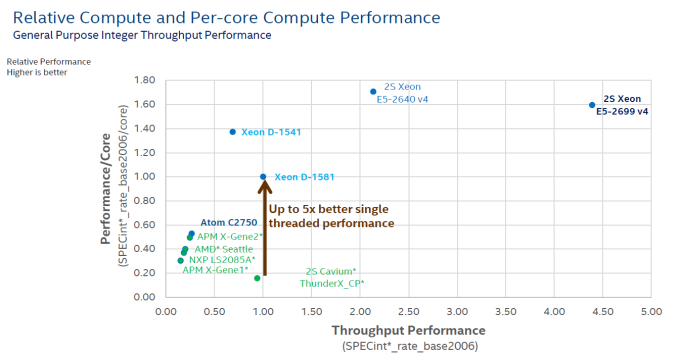

Ever run into the problem that your manager wants a clear, short answer while the real story has lots of nuances? (ed: and hence AnandTech) The short but inaccurate answer almost always wins. It is human nature to ignore complex stories and to prefer easy-to-grasp answers. The graph below is a perfect illustration of that. Although this one was produced by Intel, almost everybody in the industry, including the ARM SoC companies, loves the simplicity it affords in describing the competitive situation.

The graph compares the published, ICC-compiled SPECint_rate_base2006 results with some of the claimed (gcc-compiled?) results of the ARM server SoC vendors.

The graph shows two important performance vectors: throughput and single-core performance. The former (X-axis) is self-explanatory; the latter (Y-axis) should give an indication of response times (latency). Combined, the (x, y) coordinates should give you an idea of how the SoC/CPU performs in most applications that are not perfectly parallel. It is a very elegant way to give a short and crystal-clear answer to anyone with a technical or scientific background.

But there are many drawbacks. The main problem is "single-core performance". Since this is simply the score divided by the number of cores, it favors CPUs with some form of hardware multithreading. In many cases, however, the extra threads only help throughput, not latency. For example, if a few heavy SQL requests are keeping you waiting, adding threads to a core does not help at all; on the contrary. So the graph above gives Intel's SMT-capable cores a 20% advantage on the y-axis, even though Hyper-Threading is, most of the time, a feature that boosts throughput.
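To make the y-axis problem concrete, here is a minimal Python sketch with made-up numbers: the 8-core chip and the per-thread score of 10.0 are hypothetical, and only the roughly 20% SMT uplift comes from the text. It shows the per-core metric rising with SMT while the single-thread score, which is what governs the latency of one heavy request, stays the same.

```python
# Hypothetical sketch: how dividing SPECint_rate by core count rewards SMT.
# The 8-core chip and the 10.0 per-thread score are invented; only the
# ~20% SMT throughput uplift is taken from the article.

def per_core_score(rate_score: float, cores: int) -> float:
    """The graph's y-axis: total throughput divided by physical cores."""
    return rate_score / cores

CORES = 8
SCORE_PER_THREAD = 10.0  # hypothetical throughput of one thread on one core
SMT_UPLIFT = 0.20        # extra throughput when a second thread shares the core

rate_without_smt = CORES * SCORE_PER_THREAD
rate_with_smt = rate_without_smt * (1 + SMT_UPLIFT)

inflation = per_core_score(rate_with_smt, CORES) / per_core_score(rate_without_smt, CORES)
print(f"Per-core score inflated by {inflation - 1:.0%} with SMT enabled")

# A single heavy request still runs on one thread, so its latency is set by
# SCORE_PER_THREAD, which SMT does not raise.
```

The sketch deliberately leaves SCORE_PER_THREAD untouched: the extra 20% shows up only in aggregate throughput, yet the per-core division reports it as if each core had become faster.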

Secondly, dividing throughput by the number of cores also means that you favor architectures that scale well when running many instances of SPECint in parallel. In other words, it is all about memory bandwidth and cache size: if a CPU does not scale well, the graph will show a lower per-core performance. So basically this kind of graph creates the illusion of showing two performance parameters (throughput and latency), but it is in fact showing throughput and something that is more related to throughput (throughput normalized per core?) than to latency. And of course, SPECint_rate is only a very inaccurate proxy for server compute performance: its IPC is higher than in most server applications, and it places too much emphasis on cache size and memory bandwidth. Running 32 parallel instances of an application is totally different from running one application with 32 threads.
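The scaling argument can be sketched the same way. In this hypothetical Python example (core count, scores, and scaling efficiencies are all invented), two chips have identical single-copy performance, but one loses more throughput to memory-bandwidth and cache contention when 32 copies run at once.

```python
# Hypothetical sketch: identical single-copy speed, different multi-copy scaling.
# Core count, scores and scaling efficiencies are invented for illustration.

def rate_score(single_copy: float, copies: int, efficiency: float) -> float:
    """Throughput of `copies` parallel instances; `efficiency` < 1 models
    contention for memory bandwidth and shared cache."""
    return single_copy * copies * efficiency

def per_core_score(rate: float, cores: int) -> float:
    """The graph's y-axis: throughput divided by core count."""
    return rate / cores

CORES = 32
SINGLE_COPY = 10.0  # same single-instance (latency-relevant) score on both chips

chip_a = rate_score(SINGLE_COPY, CORES, efficiency=0.95)  # ample bandwidth
chip_b = rate_score(SINGLE_COPY, CORES, efficiency=0.60)  # bandwidth-starved

for name, rate in (("chip A", chip_a), ("chip B", chip_b)):
    print(f"{name}: per-core score {per_core_score(rate, CORES):.1f}, "
          f"single-copy score {SINGLE_COPY:.1f}")
```

Both chips would answer a single request equally fast, yet the per-core axis ranks chip A well above chip B; the gap measures scaling under load, not latency.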

This is definitely not written to defend or attack any vendor: many vendors publish and abuse these kinds of graphs to make their point. Our point is that this kind of graph very likely gives you an inaccurate and incomplete view of the competition.

But as the saying goes, the proof is in the pudding, so let's put together a framework for comparing these high-level overviews with real-world testing. First step, let's pretend the graph above is accurate. In that case, the Cavium ThunderX has absolutely terrible single-threaded performance: one-fifth that of the best Xeon D, and not even close to any of the other ARM SoCs. A ThunderX core cannot even deliver half the performance of an ARM Cortex-A57 core (roughly 10 points per core), which would put it below even the humble Cortex-A53. It does not get any better: the throughput of a single ThunderX SoC is less than half that of the Xeon D-1581. The single-threaded performance of the Xeon D-1581, in turn, is only 57% of the Xeon E5-2640's, and its throughput cannot compete with even a single Xeon E5-2640 (a 2S system delivers 2.2 times the throughput of the Xeon D-1581).
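Taking the graph at face value, the quoted ratios can be chained together. The short Python sketch below does only that arithmetic, normalized to the Xeon D-1581. It treats "less than half" as a bound of 0.5 and assumes a single E5-2640 delivers half of the 2S result; both are our simplifications, not figures from the graph.

```python
# Sketch: the graph's claims restated relative to the Xeon D-1581 (= 1.0).
# Only ratios quoted in the text are used. "Less than half" is treated as an
# upper bound of 0.5, and linear 1S-to-2S scaling for the E5-2640 is an assumption.

XEON_D_1581 = 1.0
THUNDERX_MAX = 0.5 * XEON_D_1581          # ThunderX throughput: less than half
XEON_E5_2640_2S = 2.2 * XEON_D_1581       # 2S E5-2640: 2.2x the Xeon D-1581
XEON_E5_2640_1S = XEON_E5_2640_2S / 2     # assumed: half of the 2S figure

# Because THUNDERX_MAX is an upper bound, this ratio is a lower bound.
min_gap_2s_vs_thunderx = XEON_E5_2640_2S / THUNDERX_MAX

# Single-thread: the Xeon D-1581 reaches only 57% of an E5-2640 core.
e5_core_vs_d_core = 1 / 0.57

print(f"2S E5-2640 vs one ThunderX: more than {min_gap_2s_vs_thunderx:.1f}x throughput")
print(f"1S E5-2640 vs Xeon D-1581: {XEON_E5_2640_1S:.1f}x throughput")
print(f"E5-2640 core vs Xeon D-1581 core: {e5_core_vs_d_core:.2f}x single-thread")
```

If the graph were right, a dual E5-2640 server would deliver well over four times the throughput of a ThunderX SoC; that is the expectation the tests have to confirm or refute.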

Second step, do some testing instead of believing vendor claims or published results from SPEC CPU2006. Third step, compare the graph above with our test results...

82 Comments


Daniel Egger - Wednesday, June 15, 2016

I could hardly disagree more about the remote management of SuperMicro vs. HP. Remote management of HP is *the horror*; I've never seen worse, and I've seen a lot. It's clunky, it requires a license to be useful (others do too, but SuperMicro does not have such nonsense), the BMC tends to crash a lot (which is very annoying for a remote management solution), boot is even slower than on all other systems I know due to the way they integrate the BIOS and remote management on the system, and it also uses Java unless you have Windows machines around to use the .NET version.

For the remote management alone I would choose SuperMicro over most other vendors any day.

JohanAnandtech - Thursday, June 16, 2016

I found the .Net client of HP much less sluggish, and I have seen no crashing at all. I guess there is no optimal remote management client, but I really like the "boot into firmware" option that Intel implemented.

rahvin - Thursday, June 16, 2016

Not only that, but Supermicro actually releases updates for their BMCs. I had the same shocked reaction to the HP claim. Started to wonder if I was the only one who thought Supermicro was light years ahead in usability.

I should note that Supermicro's awful Java tool works on Linux as well as Windows, though it refuses to run if your Java isn't the newest version available.

pencea - Wednesday, June 15, 2016

All these articles and yet still no review for the GTX 1080, while other major sites have already posted their reviews of both the 1070 and 1080. Guru3D already has two custom 1080 reviews and a custom 1070 review up.

Ryan Smith - Wednesday, June 15, 2016

It'll be done when it's done.

pencea - Wednesday, June 15, 2016

Unacceptably late for something that should've been posted weeks ago.

Meteor2 - Thursday, June 16, 2016

Will anyone read it though? Your ad impressions are going to suffer.

Ryan Smith - Thursday, June 16, 2016

Maybe. Maybe not. But it's my own fault regardless. All I can do is get it done as soon as I reasonably can, and hope it's something you guys find useful.

name99 - Thursday, June 16, 2016

Give it a freaking rest. No-one is impressed by your constant whining about this.

pencea - Thursday, June 16, 2016

Not looking to impress anyone. As a long-time viewer of this site, I'm simply disappointed that a reputable site like this is constantly late with GPU reviews.